HK PolyU

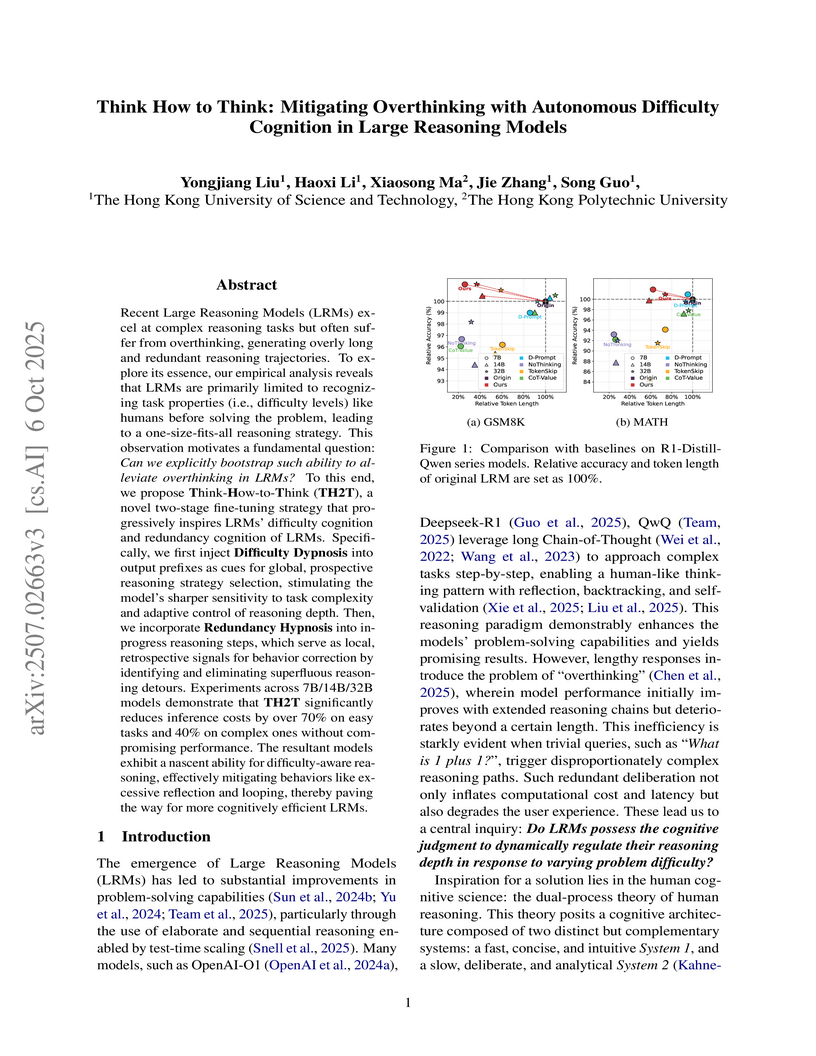

Researchers from the Hong Kong University of Science and Technology and the Hong Kong Polytechnic University introduced "Think-How-to-Think" (TH2T), a two-stage fine-tuning strategy that teaches Large Reasoning Models to recognize task difficulty and redundant reasoning on their own. The approach reduces inference cost by cutting token length by over 70% on easy tasks and 40% on complex tasks, yielding 2x-5x latency speedups while maintaining or slightly improving accuracy.

Researchers from the Hong Kong University of Science and Technology and the Hong Kong Polytechnic University developed LazyEviction, a KV cache management method that observes attention patterns over time. This approach achieved a 50%-70% KV cache memory reduction and consistently outperformed existing methods in long reasoning tasks across various LLMs, often matching or exceeding the accuracy of an uncompressed cache.
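To put the 50%-70% figure in perspective, the sketch below works through standard KV cache sizing arithmetic. It is not LazyEviction's implementation and says nothing about how the method selects tokens to evict. The model configuration (32 layers, 8 KV heads, head dimension 128, 32,768-token context, 16-bit values) is a hypothetical example chosen for round numbers, and the function names are illustrative.

```python
def kv_cache_bytes(num_layers, num_kv_heads, head_dim, seq_len,
                   bytes_per_elem=2, batch_size=1):
    """Size of an uncompressed KV cache in bytes.

    The leading factor of 2 accounts for storing both keys and values.
    """
    return (2 * num_layers * num_kv_heads * head_dim
            * seq_len * bytes_per_elem * batch_size)


def remaining_bytes(full_bytes, reduction):
    """Bytes left after removing a fraction `reduction` of the cache."""
    return full_bytes * (1.0 - reduction)


if __name__ == "__main__":
    # Hypothetical configuration, not taken from the summary above.
    full = kv_cache_bytes(num_layers=32, num_kv_heads=8,
                          head_dim=128, seq_len=32768)
    gib = 2 ** 30
    print(f"uncompressed: {full / gib:.2f} GiB")
    # The reported range of memory reduction is 50%-70%.
    for reduction in (0.5, 0.7):
        kept = remaining_bytes(full, reduction)
        print(f"{int(reduction * 100)}% reduction: {kept / gib:.2f} GiB")
```

Under these assumed dimensions the uncompressed cache for a single sequence is 4 GiB, so a 50%-70% reduction would leave roughly 2 GiB to 1.2 GiB. Because the cache grows linearly with sequence length, the absolute savings grow with longer reasoning traces.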

Vision Language Models (VLMs) often struggle with culture-specific knowledge, particularly in languages other than English and in underrepresented cultural contexts. To evaluate their understanding of such knowledge, we introduce WorldCuisines, a massive-scale benchmark for multilingual and multicultural, visually grounded language understanding. This benchmark includes a visual question answering (VQA) dataset with text-image pairs across 30 languages and dialects, spanning 9 language families and featuring over 1 million data points, making it the largest multicultural VQA benchmark to date. It includes tasks for identifying dish names and their origins. We provide evaluation datasets in two sizes (12k and 60k instances) alongside a training dataset (1 million instances). Our findings show that while VLMs perform better with correct location context, they struggle with adversarial contexts and predicting specific regional cuisines and languages. To support future research, we release a knowledge base with annotated food entries and images along with the VQA data.
