evolutionary-algorithms

Researchers from South China University of Technology introduced Evolutionary Reasoning Optimization (ERO), a neuroevolution framework that applies evolutionary strategies to Large Language Models' parameters, enabling a Qwen-7B model to develop robust System 2 reasoning capabilities. This method empirically demonstrated that reasoning can emerge through evolution, allowing the evolved model to outperform GPT-5 on the Abstraction and Reasoning Corpus (ARC) benchmark, challenging the notion that only model scaling improves such abilities.

Automatically synthesizing verifiable code from natural language requirements ensures software correctness and reliability while significantly lowering the barrier to adopting the techniques of formal methods. With the rise of large language models (LLMs), long-standing efforts at autoformalization have gained new momentum. However, existing approaches suffer from severe syntactic and semantic errors due to the scarcity of domain-specific pre-training corpora and often fail to formalize implicit knowledge effectively. In this paper, we propose AutoICE, an LLM-driven evolutionary search for synthesizing verifiable C code. It introduces the diverse individual initialization and the collaborative crossover to enable diverse iterative updates, thereby mitigating error propagation inherent in single-agent iterations. Besides, it employs the self-reflective mutation to facilitate the discovery of implicit knowledge. Evaluation results demonstrate the effectiveness of AutoICE: it successfully verifies 90.36\% of code, outperforming the state-of-the-art (SOTA) approach. Besides, on a developer-friendly dataset variant, AutoICE achieves a 88.33\% verification success rate, significantly surpassing the 65\% success rate of the SOTA approach.

The growing prevalence of artificial intelligence (AI) in various applications underscores the need for agents that can successfully navigate and adapt to an ever-changing, open-ended world. A key challenge is ensuring these AI agents are robust, excelling not only in familiar settings observed during training but also effectively generalising to previously unseen and varied scenarios. In this thesis, we harness methodologies from open-endedness and multi-agent learning to train and evaluate robust AI agents capable of generalising to novel environments, out-of-distribution inputs, and interactions with other co-player agents. We begin by introducing MiniHack, a sandbox framework for creating diverse environments through procedural content generation. Based on the game of NetHack, MiniHack enables the construction of new tasks for reinforcement learning (RL) agents with a focus on generalisation. We then present Maestro, a novel approach for generating adversarial curricula that progressively enhance the robustness and generality of RL agents in two-player zero-sum games. We further probe robustness in multi-agent domains, utilising quality-diversity methods to systematically identify vulnerabilities in state-of-the-art, pre-trained RL policies within the complex video game football domain, characterised by intertwined cooperative and competitive dynamics. Finally, we extend our exploration of robustness to the domain of LLMs. Here, our focus is on diagnosing and enhancing the robustness of LLMs against adversarial prompts, employing evolutionary search to generate a diverse range of effective inputs that aim to elicit undesirable outputs from an LLM. This work collectively paves the way for future advancements in AI robustness, enabling the development of agents that not only adapt to an ever-evolving world but also thrive in the face of unforeseen challenges and interactions.

Agentic AI systems built on large language models (LLMs) offer significant potential for automating complex workflows, from software development to customer support. However, LLM agents often underperform due to suboptimal configurations; poorly tuned prompts, tool descriptions, and parameters that typically require weeks of manual refinement. Existing optimization methods either are too complex for general use or treat components in isolation, missing critical interdependencies.

We present ARTEMIS, a no-code evolutionary optimization platform that jointly optimizes agent configurations through semantically-aware genetic operators. Given only a benchmark script and natural language goals, ARTEMIS automatically discovers configurable components, extracts performance signals from execution logs, and evolves configurations without requiring architectural modifications.

We evaluate ARTEMIS on four representative agent systems: the \emph{ALE Agent} for competitive programming on AtCoder Heuristic Contest, achieving a \textbf{13.6% improvement} in acceptance rate; the \emph{Mini-SWE Agent} for code optimization on SWE-Perf, with a statistically significant \textbf{10.1\% performance gain}; and the \emph{CrewAI Agent} for cost and mathematical reasoning on Math Odyssey, achieving a statistically significant \textbf{36.9% reduction} in the number of tokens required for evaluation. We also evaluate the \emph{MathTales-Teacher Agent} powered by a smaller open-source model (Qwen2.5-7B) on GSM8K primary-level mathematics problems, achieving a \textbf{22\% accuracy improvement} and demonstrating that ARTEMIS can optimize agents based on both commercial and local models.

Researchers from the University of Oxford, MILA, and NVIDIA introduce EGGROLL, an Evolution Strategies algorithm that scales black-box optimization to billion-parameter neural networks by employing low-rank parameter perturbations. The method achieves a hundredfold increase in training throughput and enables stable pre-training of pure-integer recurrent language models, demonstrating competitive or superior performance on reinforcement learning and large language model fine-tuning tasks.

Large Language Models' (LLMs) programming capabilities enable their participation in open-source games: a game-theoretic setting in which players submit computer programs in lieu of actions. These programs offer numerous advantages, including interpretability, inter-agent transparency, and formal verifiability; additionally, they enable program equilibria, solutions that leverage the transparency of code and are inaccessible within normal-form settings. We evaluate the capabilities of leading open- and closed-weight LLMs to predict and classify program strategies and evaluate features of the approximate program equilibria reached by LLM agents in dyadic and evolutionary settings. We identify the emergence of payoff-maximizing, cooperative, and deceptive strategies, characterize the adaptation of mechanisms within these programs over repeated open-source games, and analyze their comparative evolutionary fitness. We find that open-source games serve as a viable environment to study and steer the emergence of cooperative strategy in multi-agent dilemmas.

Large Language Models (LLMs) are increasingly being deployed in real-world applications, but their flexibility exposes them to prompt injection attacks. These attacks leverage the model's instruction-following ability to make it perform malicious tasks. Recent work has proposed JATMO, a task-specific fine-tuning approach that trains non-instruction-tuned base models to perform a single function, thereby reducing susceptibility to adversarial instructions. In this study, we evaluate the robustness of JATMO against HOUYI, a genetic attack framework that systematically mutates and optimizes adversarial prompts. We adapt HOUYI by introducing custom fitness scoring, modified mutation logic, and a new harness for local model testing, enabling a more accurate assessment of defense effectiveness. We fine-tuned LLaMA 2-7B, Qwen1.5-4B, and Qwen1.5-0.5B models under the JATMO methodology and compared them with a fine-tuned GPT-3.5-Turbo baseline. Results show that while JATMO reduces attack success rates relative to instruction-tuned models, it does not fully prevent injections; adversaries exploiting multilingual cues or code-related disruptors still bypass defenses. We also observe a trade-off between generation quality and injection vulnerability, suggesting that better task performance often correlates with increased susceptibility. Our results highlight both the promise and limitations of fine-tuning-based defenses and point toward the need for layered, adversarially informed mitigation strategies.

Quality-Diversity (QD) algorithms constitute a branch of optimization that is concerned with discovering a diverse and high-quality set of solutions to an optimization problem. Current QD methods commonly maintain diversity by dividing the behavior space into discrete regions, ensuring that solutions are distributed across different parts of the space. The QD problem is then solved by searching for the best solution in each region. This approach to QD optimization poses challenges in large solution spaces, where storing many solutions is impractical, and in high-dimensional behavior spaces, where discretization becomes ineffective due to the curse of dimensionality. We present an alternative framing of the QD problem, called \emph{Soft QD}, that sidesteps the need for discretizations. We validate this formulation by demonstrating its desirable properties, such as monotonicity, and by relating its limiting behavior to the widely used QD Score metric. Furthermore, we leverage it to derive a novel differentiable QD algorithm, \emph{Soft QD Using Approximated Diversity (SQUAD)}, and demonstrate empirically that it is competitive with current state of the art methods on standard benchmarks while offering better scalability to higher dimensional problems.

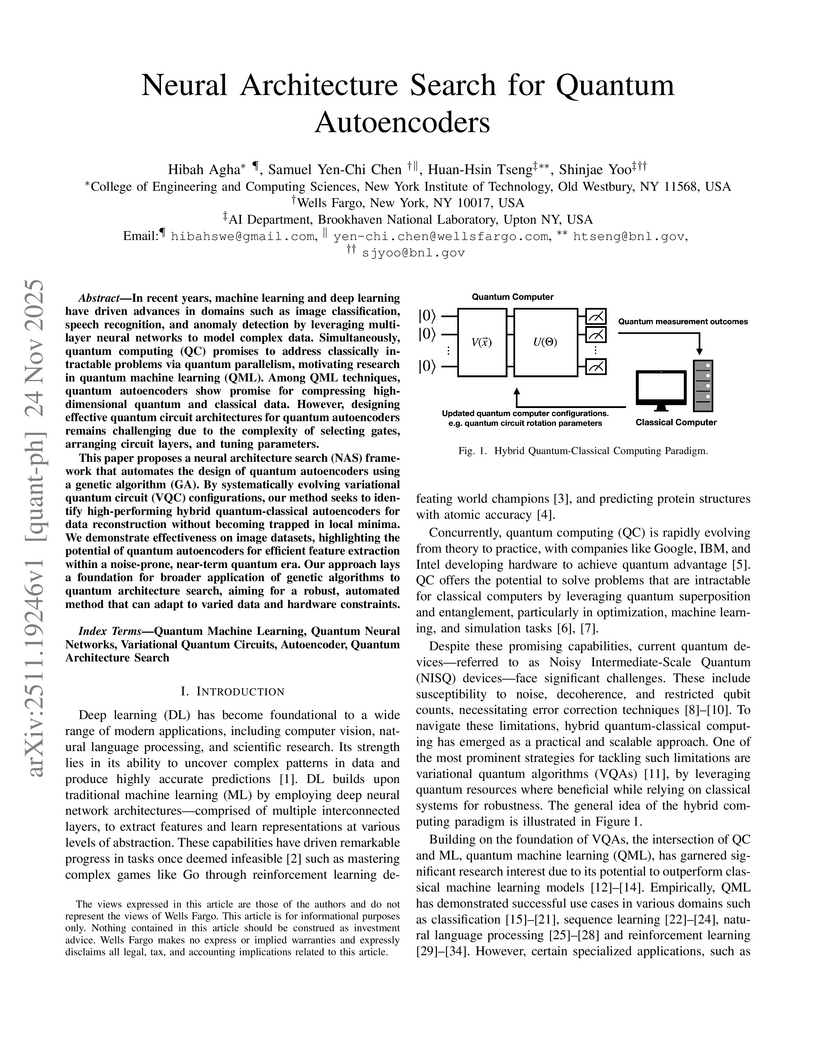

In recent years, machine learning and deep learning have driven advances in domains such as image classification, speech recognition, and anomaly detection by leveraging multi-layer neural networks to model complex data. Simultaneously, quantum computing (QC) promises to address classically intractable problems via quantum parallelism, motivating research in quantum machine learning (QML). Among QML techniques, quantum autoencoders show promise for compressing high-dimensional quantum and classical data. However, designing effective quantum circuit architectures for quantum autoencoders remains challenging due to the complexity of selecting gates, arranging circuit layers, and tuning parameters.

This paper proposes a neural architecture search (NAS) framework that automates the design of quantum autoencoders using a genetic algorithm (GA). By systematically evolving variational quantum circuit (VQC) configurations, our method seeks to identify high-performing hybrid quantum-classical autoencoders for data reconstruction without becoming trapped in local minima. We demonstrate effectiveness on image datasets, highlighting the potential of quantum autoencoders for efficient feature extraction within a noise-prone, near-term quantum era. Our approach lays a foundation for broader application of genetic algorithms to quantum architecture search, aiming for a robust, automated method that can adapt to varied data and hardware constraints.

EGGROLL, a novel evolution strategy algorithm, scales backprop-free optimization to billion-parameter neural networks by employing low-rank perturbations. It achieves a hundredfold increase in training throughput, enables the pre-training of pure integer recurrent language models, and demonstrates competitive performance in LLM reasoning fine-tuning and reinforcement learning.

EvoSynth, developed by researchers from Fudan University and Shanghai AI Lab, introduces a multi-agent framework that autonomously synthesizes novel, executable code-based jailbreak algorithms for large language models. This system consistently achieves an average attack success rate of 95.9% against state-of-the-art LLMs, generates significantly more diverse attacks, and demonstrates high evasiveness against current defenses.

FORGEDAN, an evolutionary framework developed by researchers including those from iFLYTEK Security Laboratory and Peking University, automatically generates fluent and stealthy adversarial prompts to bypass Large Language Model (LLM) safety alignments. It achieves a 98.27% attack success rate (ASR) on Gemma-2-9B and 87.50% on Qwen2.5-7B, significantly outperforming existing black-box jailbreaking methods by integrating multi-strategic mutation, semantic fitness measurement, and dual-dimensional jailbreak judgment.

AgenticSciML introduces a multi-agent AI framework designed to autonomously discover and refine scientific machine learning (SciML) methodologies. The system consistently discovered solutions that outperformed single-agent baselines by 10x to over 11,000x in error reduction, generating novel SciML strategies across six benchmark problems.

Researchers from City University of Hong Kong, Zhejiang University, and Hangzhou Dianzi University developed a novel black-box attack, Guardrail Reverse-engineering Attack (GRA), to extract the decision-making policies of commercial LLM guardrails. This method achieved high-fidelity replication of guardrail behavior on systems like ChatGPT and DeepSeek with minimal API costs, demonstrating a critical vulnerability in current LLM safety mechanisms.

Google DeepMind, in collaboration with mathematicians from Brown University and UCLA, developed AlphaEvolve, an AI system that autonomously discovers and improves mathematical constructions across various domains. The system achieved new state-of-the-art results in problems like finite field Kakeya sets, autocorrelation inequalities, and kissing numbers, inspiring new theoretical work.

The FM Agent from Baidu AI Cloud is a multi-agent framework that integrates LLM-based reasoning with large-scale evolutionary search to autonomously discover state-of-the-art solutions across diverse, complex problem domains. It achieves new top performances, including up to 20.77x speedups on GPU kernel optimization and outperforming human submissions on over 51% of Kaggle ML tasks.

Researchers from Virginia Tech and Sandia National Laboratories introduced LLEMA, a framework integrating large language models with chemistry-informed evolutionary search and memory-based refinement for multi-objective, synthesizability-aware materials discovery. The system consistently achieved higher hit rates and thermodynamic stability compared to baselines across 14 diverse materials tasks, while also significantly reducing LLM memorization.

FELA is a multi-agent evolutionary system that automates feature engineering for complex industrial event log data by leveraging large language models and a hierarchical knowledge structure. It consistently outperforms existing LLM-based baselines, generating explainable features that improve predictive model performance across diverse datasets, and has been deployed internally at Tencent for labor savings.

In this work, we introduce CodeEvolve, an open-source evolutionary coding agent that unites Large Language Models (LLMs) with genetic algorithms to solve complex computational problems. Our framework adapts powerful evolutionary concepts to the LLM domain, building upon recent methods for generalized scientific discovery. CodeEvolve employs an island-based genetic algorithm to maintain population diversity and increase throughput, introduces a novel inspiration-based crossover mechanism that leverages the LLMs context window to combine features from successful solutions, and implements meta-prompting strategies for dynamic exploration of the solution space. We conduct a rigorous evaluation of CodeEvolve on a subset of the mathematical benchmarks used to evaluate Google DeepMind's closed-source AlphaEvolve. Our findings show that our method surpasses AlphaEvolve's performance on several challenging problems. To foster collaboration and accelerate progress, we release our complete framework as an open-source repository.

10 Oct 2025

Researchers at UC Berkeley demonstrated that an AI-Driven Research for Systems (ADRS) framework can autonomously discover and refine algorithms for complex systems problems, frequently surpassing human-designed state-of-the-art solutions within hours and at low computational cost. The approach utilizes large language models (LLMs) to iteratively generate and evaluate solutions within high-fidelity simulators across diverse systems domains.

There are no more papers matching your filters at the moment.