Institute of Artificial Intelligence (TeleAI)

UniModel introduces a visual-only framework that unifies multimodal understanding and generation by representing both text and images as pixel-level data within a single diffusion transformer. This approach enables coherent text-to-image generation and image captioning, demonstrating strong cycle consistency and emergent controllability by operating entirely in a shared visual latent space.

Generative models have made significant progress in synthesizing visual content, including images, videos, and 3D/4D structures. However, they are typically trained with surrogate objectives such as likelihood or reconstruction loss, which often misalign with perceptual quality, semantic accuracy, or physical realism. Reinforcement learning (RL) offers a principled framework for optimizing non-differentiable, preference-driven, and temporally structured objectives. Recent advances demonstrate its effectiveness in enhancing controllability, consistency, and human alignment across generative tasks. This survey provides a systematic overview of RL-based methods for visual content generation. We review the evolution of RL from classical control to its role as a general-purpose optimization tool, and examine its integration into image, video, and 3D/4D generation. Across these domains, RL serves not only as a fine-tuning mechanism but also as a structural component for aligning generation with complex, high-level goals. We conclude with open challenges and future research directions at the intersection of RL and generative modeling.

05 Dec 2025

Egocentric AI assistants in real-world settings must process multi-modal inputs (video, audio, text), respond in real time, and retain evolving long-term memory. However, existing benchmarks typically evaluate these abilities in isolation, lack realistic streaming scenarios, or support only short-term tasks. We introduce TeleEgo, a long-duration, streaming, omni-modal benchmark for evaluating egocentric AI assistants in realistic daily contexts. The dataset features over 14 hours per participant of synchronized egocentric video, audio, and text across four domains: work & study, lifestyle & routines, social activities, and outings & culture. All data is aligned on a unified global timeline and includes high-quality visual narrations and speech transcripts, curated through human refinement. TeleEgo defines 12 diagnostic subtasks across three core capabilities: Memory (recalling past events), Understanding (interpreting the current moment), and Cross-Memory Reasoning (linking distant events). It contains 3,291 human-verified QA items spanning multiple question formats (single-choice, binary, multi-choice, and open-ended), evaluated strictly in a streaming setting. We propose Real-Time Accuracy (RTA) to jointly capture correctness and responsiveness under tight decision windows, and Memory Persistence Time (MPT) as a forward-looking metric for long-term retention in continuous streams. In this work, we report RTA results for current models and release TeleEgo, together with an MPT evaluation framework, as a realistic and extensible benchmark for future egocentric assistants with stronger streaming memory, enabling systematic study of both real-time behavior and long-horizon memory.
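
The abstract does not give a formula for Real-Time Accuracy, so the following is only a minimal sketch of one plausible reading: an item counts as a hit when the answer is both correct and delivered within a fixed decision window after the question is posed. The `Response` record, the `real_time_accuracy` function, and the `window_s` parameter are hypothetical names introduced for illustration, not part of the TeleEgo release.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Response:
    """One QA item's outcome (hypothetical record, not the TeleEgo schema)."""
    correct: bool                 # did the answer match the ground truth?
    asked_at: float               # question time on the global timeline, in seconds
    answered_at: Optional[float]  # answer time in seconds, or None if never answered


def real_time_accuracy(responses: List[Response], window_s: float) -> float:
    """Fraction of items answered correctly within `window_s` seconds.

    Assumption: late or missing answers count as misses even if correct.
    """
    if not responses:
        return 0.0
    hits = 0
    for r in responses:
        in_time = (
            r.answered_at is not None
            and 0.0 <= r.answered_at - r.asked_at <= window_s
        )
        if r.correct and in_time:
            hits += 1
    return hits / len(responses)


demo = [
    Response(correct=True, asked_at=0.0, answered_at=2.0),     # correct and on time
    Response(correct=True, asked_at=10.0, answered_at=20.0),   # correct but too late
    Response(correct=False, asked_at=30.0, answered_at=31.0),  # on time but wrong
    Response(correct=True, asked_at=40.0, answered_at=None),   # never answered
]
rta = real_time_accuracy(demo, window_s=5.0)
```

Under this reading, tightening `window_s` can only lower the score, which is what makes the metric sensitive to responsiveness as well as correctness.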

Open-Vocabulary Remote Sensing Image Segmentation (OVRSIS), an emerging task that adapts Open-Vocabulary Segmentation (OVS) to the remote sensing (RS) domain, remains underexplored due to the absence of a unified evaluation benchmark and the domain gap between natural and RS images. To bridge these gaps, we first establish a standardized OVRSIS benchmark (OVRSISBench) based on widely-used RS segmentation datasets, enabling consistent evaluation across methods. Using this benchmark, we comprehensively evaluate several representative OVS/OVRSIS models and reveal their limitations when directly applied to remote sensing scenarios. Building on these insights, we propose RSKT-Seg, a novel open-vocabulary segmentation framework tailored for remote sensing. RSKT-Seg integrates three key components: (1) a Multi-Directional Cost Map Aggregation (RS-CMA) module that captures rotation-invariant visual cues by computing vision-language cosine similarities across multiple directions; (2) an Efficient Cost Map Fusion (RS-Fusion) transformer, which jointly models spatial and semantic dependencies with a lightweight dimensionality reduction strategy; and (3) a Remote Sensing Knowledge Transfer (RS-Transfer) module that injects pre-trained knowledge and facilitates domain adaptation via enhanced upsampling. Extensive experiments on the benchmark show that RSKT-Seg consistently outperforms strong OVS baselines by +3.8 mIoU and +5.9 mACC, while achieving 2x faster inference through efficient aggregation. Our code is available at this https URL.

Perceptual studies demonstrate that conditional diffusion models excel at reconstructing video content aligned with human visual perception. Building on this insight, we propose a video compression framework that leverages conditional diffusion models for perceptually optimized reconstruction. Specifically, we reframe video compression as a conditional generation task, where a generative model synthesizes video from sparse, yet informative signals. Our approach introduces three key modules: (1) Multi-granular conditioning that captures both static scene structure and dynamic spatio-temporal cues; (2) Compact representations designed for efficient transmission without sacrificing semantic richness; (3) Multi-condition training with modality dropout and role-aware embeddings, which prevent over-reliance on any single modality and enhance robustness. Extensive experiments show that our method significantly outperforms both traditional and neural codecs on perceptual quality metrics such as Fréchet Video Distance (FVD) and LPIPS, especially under high compression ratios.
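
Modality dropout, as named in item (3), is commonly implemented by randomly withholding conditioning signals during training so the model cannot lean on any single one. The sketch below illustrates that general idea only; the function name `drop_modalities`, the drop probability, the condition names, and the "always keep at least one" rule are assumptions, not details taken from the paper.

```python
import random
from typing import Any, Dict, Optional


def drop_modalities(
    conditions: Dict[str, Any],
    p_drop: float = 0.3,
    rng: Optional[random.Random] = None,
) -> Dict[str, Any]:
    """Return a random subset of the conditioning signals for one training step.

    Each condition is dropped independently with probability `p_drop`.
    Assumption: at least one condition is always kept, so the model is
    never asked to reconstruct from nothing.
    """
    if not conditions:
        return {}
    rng = rng or random.Random()
    kept = {
        name: value
        for name, value in conditions.items()
        if rng.random() >= p_drop
    }
    if not kept:
        # Everything was dropped: restore one condition chosen at random.
        name = rng.choice(sorted(conditions))
        kept[name] = conditions[name]
    return kept


# Hypothetical condition names standing in for static and dynamic cues.
step_conditions = {"keyframe": "...", "motion": "...", "caption": "..."}
subset = drop_modalities(step_conditions, p_drop=0.3, rng=random.Random(0))
```

Passing a seeded `random.Random` keeps the dropout pattern reproducible across runs, which helps when comparing ablations.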

Researchers from TeleAI, China Telecom Corp Ltd, developed an effective solution for Emotional Support Conversation (ESC) by fine-tuning Qwen2.5 Large Language Models with advanced prompt engineering. Their approach, which includes both LoRA and full-parameter fine-tuning, achieved a second-place ranking in the NLPCC 2025 Task 8 evaluation, with their best model yielding a total score of 39.62 and a G-score of 87.20.

Recent advances in end-to-end spoken language models (SLMs) have significantly improved the ability of AI systems to engage in natural spoken interactions. However, most existing models treat speech merely as a vehicle for linguistic content, often overlooking the rich paralinguistic and speaker characteristic cues embedded in human speech, such as dialect, age, emotion, and non-speech vocalizations. In this work, we introduce GOAT-SLM, a novel spoken language model with paralinguistic and speaker characteristic awareness, designed to extend spoken language modeling beyond text semantics. GOAT-SLM adopts a dual-modality head architecture that decouples linguistic modeling from acoustic realization, enabling robust language understanding while supporting expressive and adaptive speech generation. To enhance model efficiency and versatility, we propose a modular, staged training strategy that progressively aligns linguistic, paralinguistic, and speaker characteristic information using large-scale speech-text corpora. Experimental results on TELEVAL, a multi-dimensional evaluation benchmark, demonstrate that GOAT-SLM achieves well-balanced performance across both semantic and non-semantic tasks, and outperforms existing open-source models in handling emotion, dialectal variation, and age-sensitive interactions. This work highlights the importance of modeling beyond linguistic content and advances the development of more natural, adaptive, and socially aware spoken language systems.

StitchFusion is a "stitch-based" framework for multimodal semantic segmentation that integrates diverse visual inputs by enabling direct information sharing within pre-trained encoder blocks. This approach achieves state-of-the-art performance with considerably fewer additional parameters across multiple datasets, including DeLiVER and Mcubes.

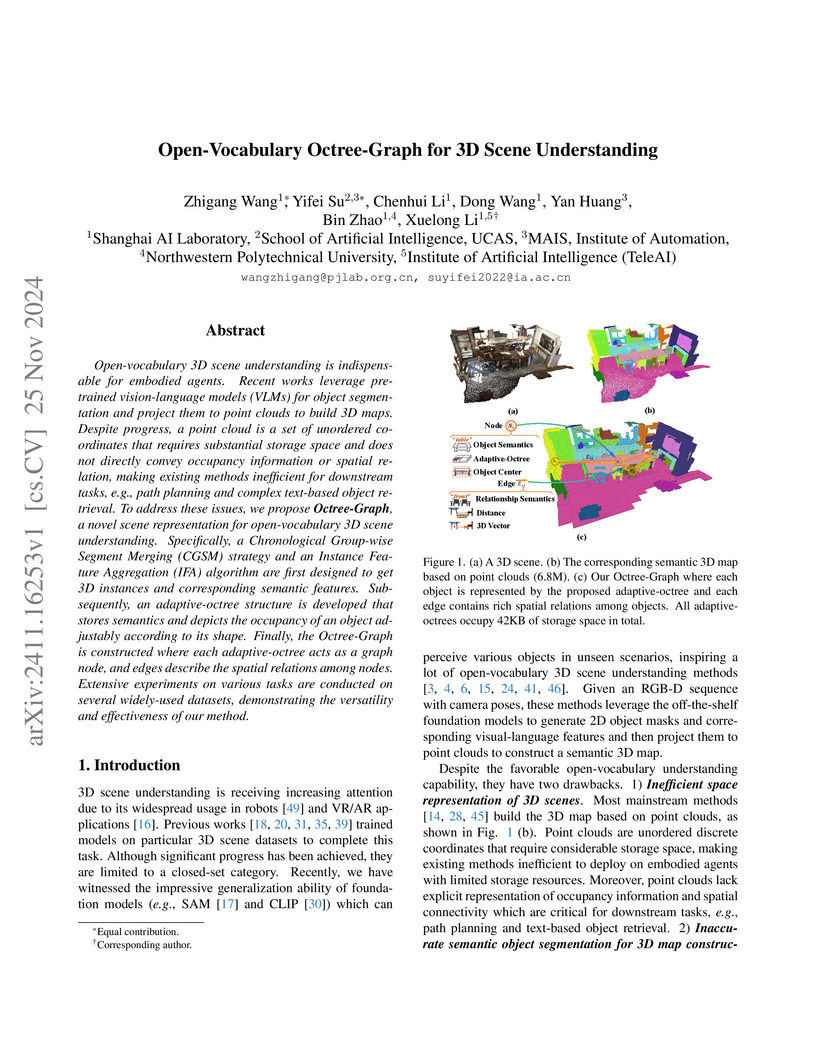

The Octree-Graph introduces a training-free system for open-vocabulary 3D scene understanding that combines efficient adaptive-octree spatial representation with a graph framework. The system achieves a +17.1% mIoU improvement on ScanNet for 3D semantic segmentation and reduces storage by two orders of magnitude compared to point clouds, enabling complex object retrieval and multi-resolution path planning.

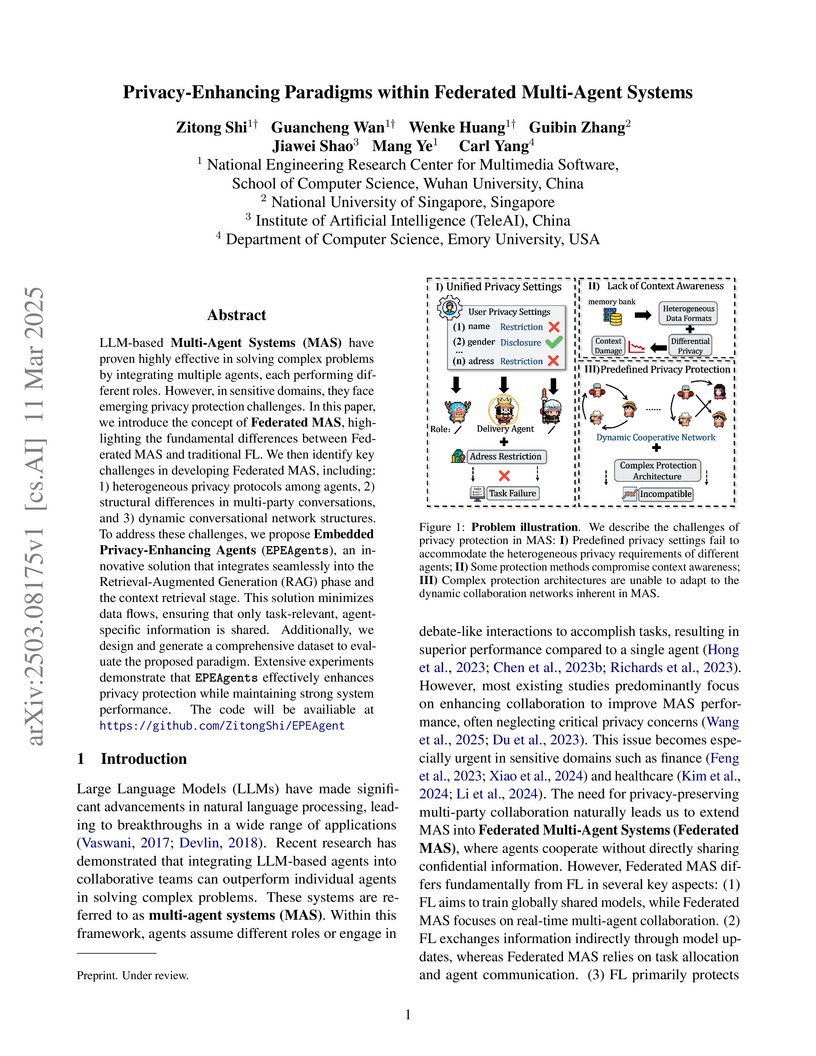

Researchers introduce Embedded Privacy-Enhancing Agents (EPEAgents) within a new Federated Multi-Agent Systems paradigm, significantly improving privacy in LLM-based agent collaborations up to 97.62% while maintaining or enhancing task performance. This framework addresses the unique privacy challenges of dynamic multi-agent interactions, enabling safer deployment in sensitive domains.

Researchers at the Institute of Artificial Intelligence (TeleAI), China Telecom, China, introduce the "Beyond-Semantic Speech" (BoSS) framework, which formalizes the implicit cues and contextual dynamics essential for human-like spoken interaction. Their evaluation demonstrates that current spoken language models largely fall short in interpreting and generating these beyond-semantic dimensions, such as dialect, emotion, age, and non-verbal information.

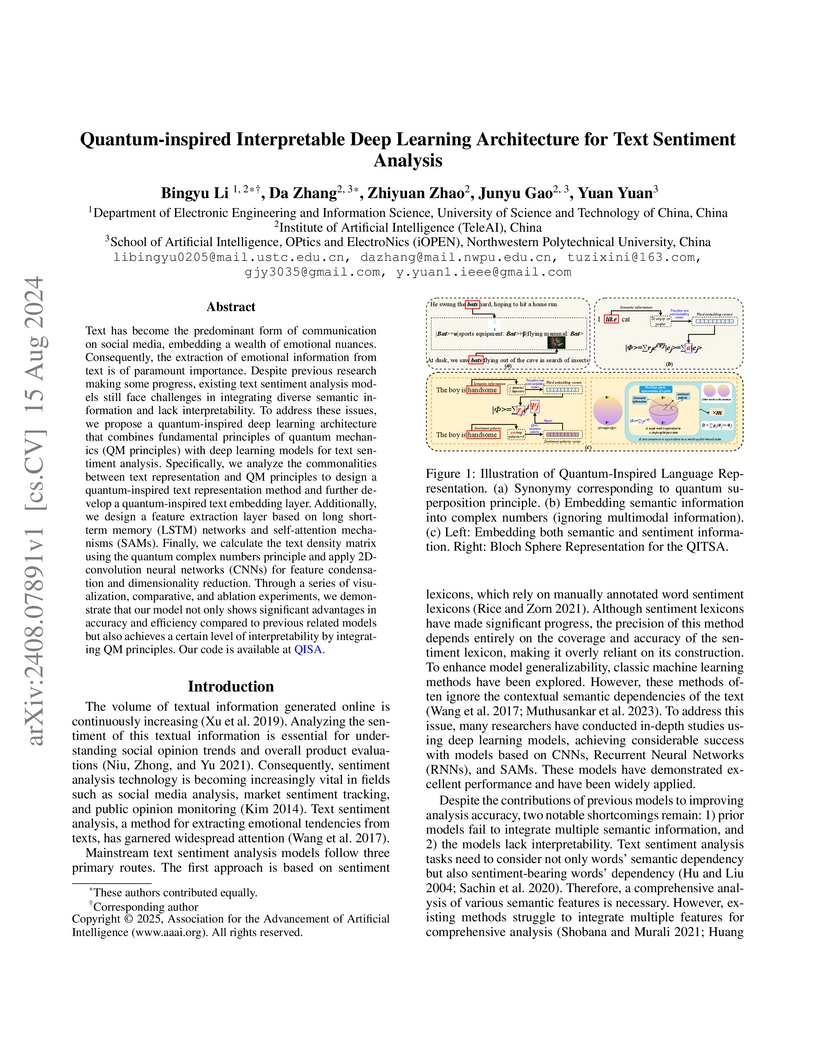

Text has become the predominant form of communication on social media, embedding a wealth of emotional nuances. Consequently, the extraction of emotional information from text is of paramount importance. Despite previous research making some progress, existing text sentiment analysis models still face challenges in integrating diverse semantic information and lack interpretability. To address these issues, we propose a quantum-inspired deep learning architecture that combines fundamental principles of quantum mechanics (QM principles) with deep learning models for text sentiment analysis. Specifically, we analyze the commonalities between text representation and QM principles to design a quantum-inspired text representation method and further develop a quantum-inspired text embedding layer. Additionally, we design a feature extraction layer based on long short-term memory (LSTM) networks and self-attention mechanisms (SAMs). Finally, we calculate the text density matrix using the quantum complex numbers principle and apply 2D-convolution neural networks (CNNs) for feature condensation and dimensionality reduction. Through a series of visualization, comparative, and ablation experiments, we demonstrate that our model not only shows significant advantages in accuracy and efficiency compared to previous related models but also achieves a certain level of interpretability by integrating QM principles. Our code is available at QISA.
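
For readers unfamiliar with the term, a density matrix can be built from complex-valued word states as a weighted sum of outer products, rho = sum_i p_i |w_i><w_i|. The sketch below shows that standard construction in plain Python; how the paper derives amplitudes, phases, and weights is not stated in the abstract, so the toy values and the helper names `normalize` and `density_matrix` are assumptions for illustration.

```python
import cmath
from typing import List, Sequence

Vector = List[complex]
Matrix = List[List[complex]]


def normalize(vec: Sequence[complex]) -> Vector:
    """Scale a non-zero complex vector to unit length."""
    norm = sum(abs(z) ** 2 for z in vec) ** 0.5
    return [z / norm for z in vec]


def density_matrix(states: Sequence[Sequence[complex]],
                   weights: Sequence[float]) -> Matrix:
    """Mixture of pure states: rho = sum_i p_i |w_i><w_i|.

    Weights are renormalized to sum to 1, so the trace of rho is 1.
    """
    total = sum(weights)
    dim = len(states[0])
    rho = [[0j] * dim for _ in range(dim)]
    for state, weight in zip(states, weights):
        psi = normalize(state)
        for i in range(dim):
            for j in range(dim):
                # Outer product entry: psi_i times the conjugate of psi_j.
                rho[i][j] += (weight / total) * psi[i] * psi[j].conjugate()
    return rho


# Toy two-dimensional word states written as amplitude * exp(i * phase).
words = [
    [cmath.rect(1.0, 0.0), cmath.rect(0.5, cmath.pi / 2)],
    [cmath.rect(0.2, cmath.pi), cmath.rect(1.0, 0.3)],
]
rho = density_matrix(words, weights=[0.7, 0.3])
trace = sum(rho[i][i] for i in range(len(rho)))
```

The resulting matrix is Hermitian with unit trace; treating its real and imaginary parts as image-like channels is one way such a matrix could be passed to a 2D convolution, though the abstract does not specify the layout used.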

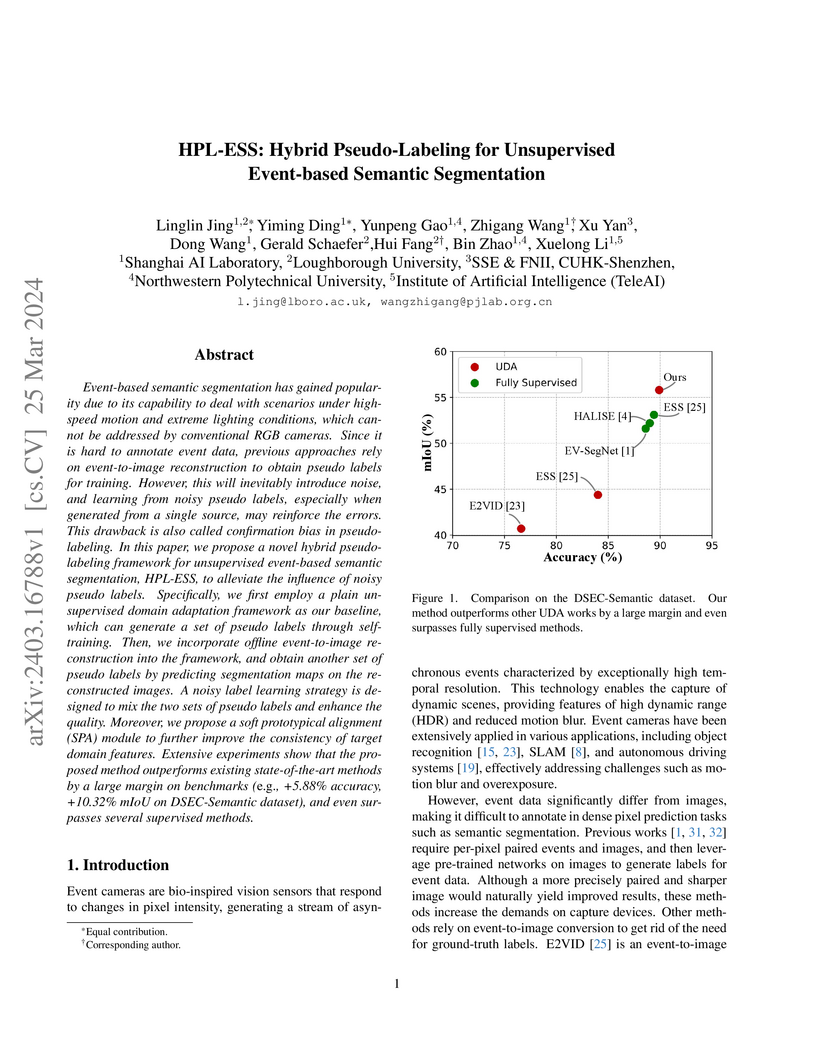

Event-based semantic segmentation has gained popularity due to its capability to deal with scenarios under high-speed motion and extreme lighting conditions, which cannot be addressed by conventional RGB cameras. Since it is hard to annotate event data, previous approaches rely on event-to-image reconstruction to obtain pseudo labels for training. However, this will inevitably introduce noise, and learning from noisy pseudo labels, especially when generated from a single source, may reinforce the errors. This drawback is also called confirmation bias in pseudo-labeling. In this paper, we propose a novel hybrid pseudo-labeling framework for unsupervised event-based semantic segmentation, HPL-ESS, to alleviate the influence of noisy pseudo labels. In particular, we first employ a plain unsupervised domain adaptation framework as our baseline, which can generate a set of pseudo labels through self-training. Then, we incorporate offline event-to-image reconstruction into the framework, and obtain another set of pseudo labels by predicting segmentation maps on the reconstructed images. A noisy label learning strategy is designed to mix the two sets of pseudo labels and enhance the quality. Moreover, we propose a soft prototypical alignment module to further improve the consistency of target domain features. Extensive experiments show that our proposed method outperforms existing state-of-the-art methods by a large margin on the DSEC-Semantic dataset (+5.88% accuracy, +10.32% mIoU), which even surpasses several supervised methods.
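
The abstract does not describe how the two sets of pseudo labels are mixed. One simple baseline for reducing single-source confirmation bias is to trust a pixel only where both sources agree and to mark the rest as ignored in the loss; the sketch below shows that baseline, which is not claimed to be the HPL-ESS strategy. The function name `mix_pseudo_labels` and the ignore value 255 are assumptions.

```python
from typing import List, Sequence

IGNORE_INDEX = 255  # assumed "skip this pixel in the loss" marker


def mix_pseudo_labels(
    labels_a: Sequence[int],
    labels_b: Sequence[int],
    ignore_index: int = IGNORE_INDEX,
) -> List[int]:
    """Keep a pseudo label only where both sources agree.

    `labels_a` and `labels_b` are flattened per-pixel class ids, for
    example from self-training and from reconstructed images.
    """
    if len(labels_a) != len(labels_b):
        raise ValueError("label maps must have the same number of pixels")
    return [a if a == b else ignore_index for a, b in zip(labels_a, labels_b)]


self_training = [0, 1, 2, 2]
reconstruction = [0, 2, 2, 1]
mixed = mix_pseudo_labels(self_training, reconstruction)
```

Agreement filtering trades label coverage for label precision, so a softer weighting scheme may be preferable when the two sources disagree on many pixels.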
