University of Modena and Reggio Emilia

This research develops a method for robots to generate high-level semantic region maps of indoor environments (e.g., "kitchen," "bedroom") without relying on explicit object recognition. The approach adapts Vision-Language Models for embodied perception, outperforming object-based and traditional scene classification baselines in online mapping.

Researchers from the University of Modena and Reggio Emilia developed WF-Net, a framework for multi-class unlearning in image classification that enables simultaneous forgetting of all classes from a single model instance. This approach achieved 0.0% accuracy on forget sets for MNIST and CIFAR-10, substantially reduced accuracy for ImageNet classes, and intrinsically discovered class-specific network components.

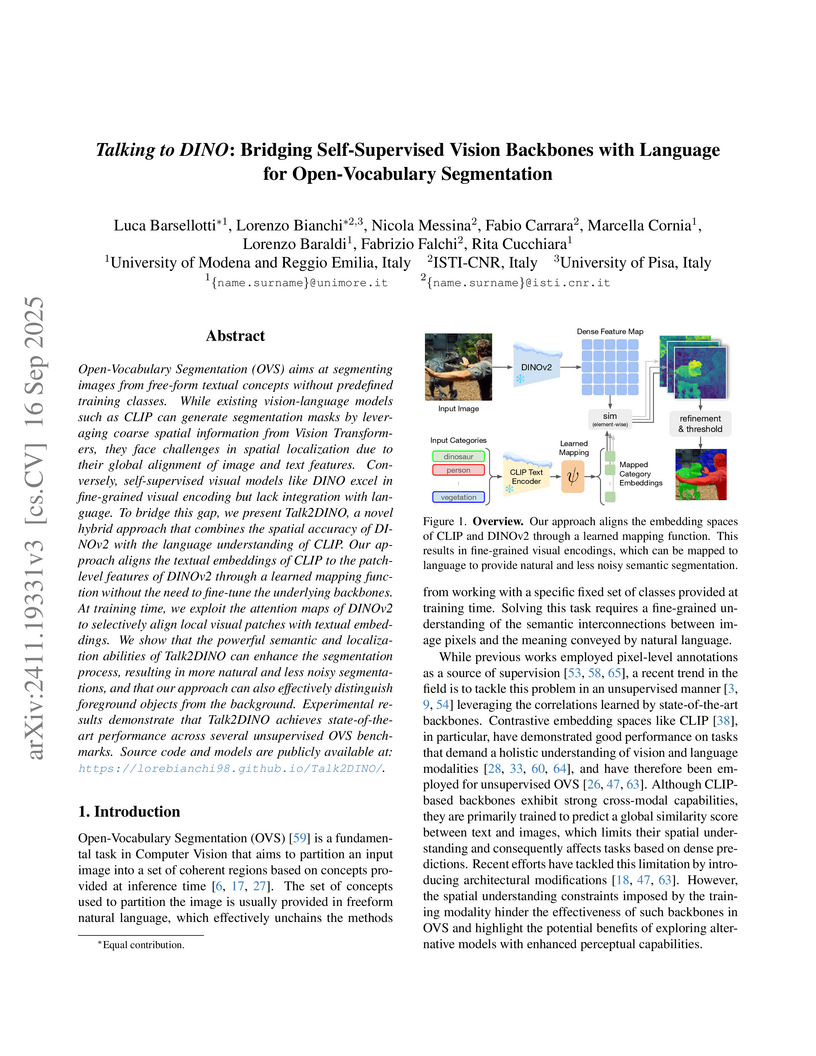

Talk2DINO, developed by researchers at UNIMORE and ISTI-CNR, combines the fine-grained visual features of DINOv2 with the rich semantic understanding of CLIP for open-vocabulary segmentation. The approach uses a lightweight, learned projection to bridge their embedding spaces, reaching an average mIoU of 46.3 on eight unsupervised OVS benchmarks with significantly fewer trained parameters than existing methods.

The Core Space Merging framework is introduced for efficiently combining low-rank adaptations (LoRAs) of large neural networks. This method achieves up to 600x faster merging by performing operations in a compact, lossless subspace, while reaching state-of-the-art accuracy, including 94.16% normalized accuracy on Llama 3 8B for NLI tasks and 76.3% on ViT-B/32 for vision tasks.

This review paper from Huawei RAMS Lab, the University of Pisa, and other institutions systematically analyzes the safety challenges of World Models for embodied AI agents in autonomous driving and robotics. It identifies and quantifies recurring safety-critical failures, termed "pathologies," across state-of-the-art models, revealing consistent shortcomings in areas like physical conformity, temporal consistency, and traffic adherence.

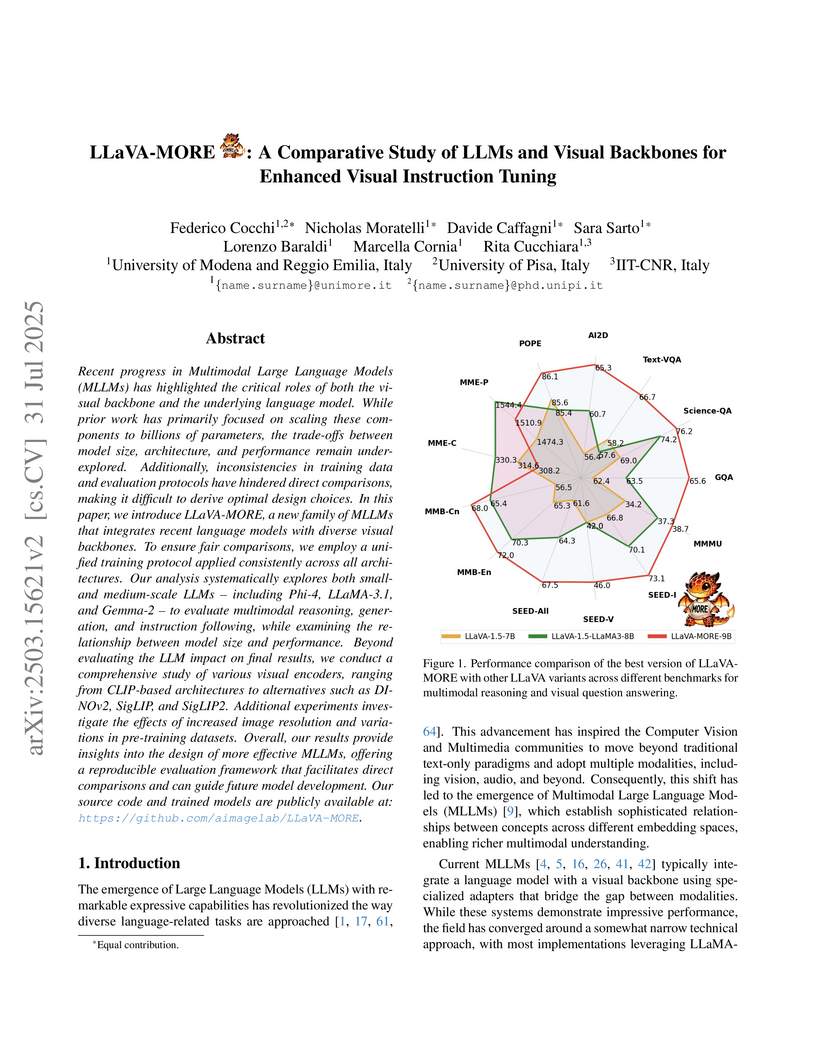

LLaVA-MORE introduces a systematic comparative study of Multimodal Large Language Models (MLLMs), investigating the impact of various Large Language Models and visual backbones under a unified training and evaluation protocol. This empirical analysis provides insights into component contributions and architectural trade-offs, establishing new performance baselines for MLLMs.

Open-Vocabulary object detectors can generalize to an unrestricted set of categories through simple textual prompting. However, adapting these models to rare classes or reinforcing their abilities on multiple specialized domains remains essential. While recent methods rely on monolithic adaptation strategies with a single set of weights, we embrace modular deep learning. We introduce DitHub, a framework designed to build and maintain a library of efficient adaptation modules. Inspired by Version Control Systems, DitHub manages expert modules as branches that can be fetched and merged as needed. This modular approach allows us to conduct an in-depth exploration of the compositional properties of adaptation modules, marking the first such study in Object Detection. Our method achieves state-of-the-art performance on the ODinW-13 benchmark and ODinW-O, a newly introduced benchmark designed to assess class reappearance. For more details, visit our project page: this https URL

Researchers from the University of Modena and Reggio Emilia and affiliated institutions provided a comprehensive survey of visual-based Multimodal Large Language Models (MLLMs), structuring the rapidly evolving field by analyzing architectures, training methodologies, and diverse task capabilities. The work identifies prevailing trends in model design and training, details MLLM advancements in tasks like visual grounding and image generation, and highlights persistent challenges such as hallucinations and high computational demands.

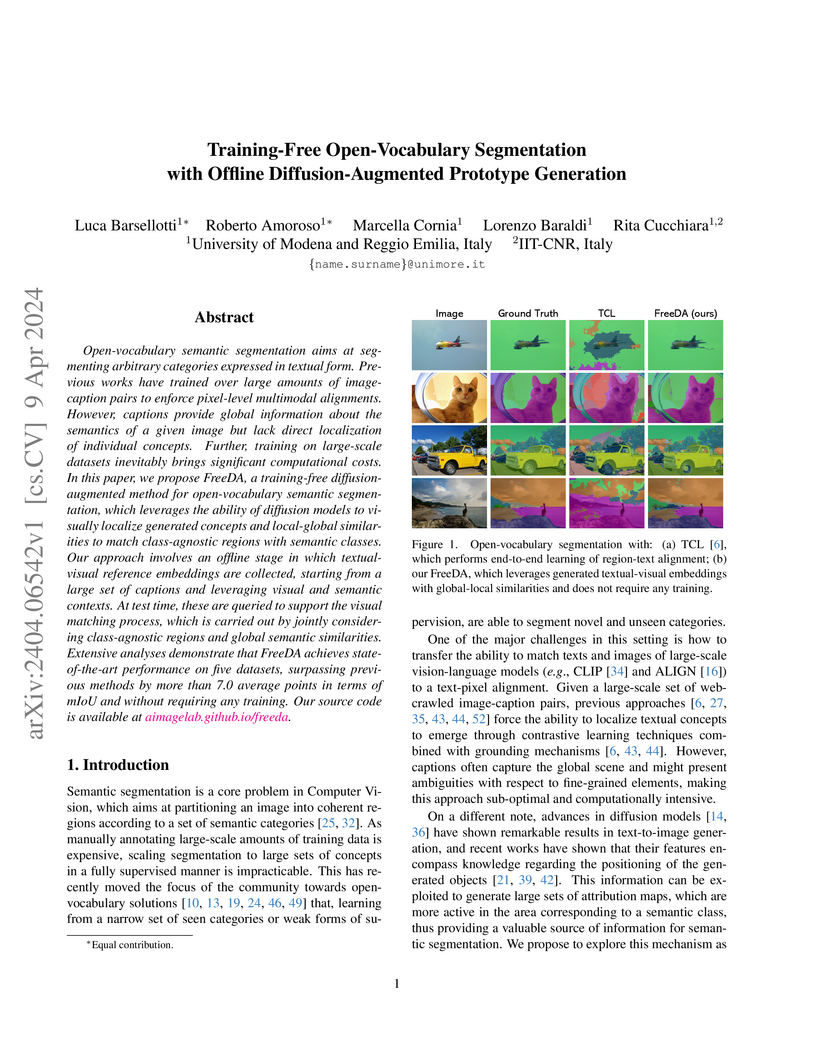

Open-vocabulary semantic segmentation aims at segmenting arbitrary categories expressed in textual form. Previous works have trained over large amounts of image-caption pairs to enforce pixel-level multimodal alignments. However, captions provide global information about the semantics of a given image but lack direct localization of individual concepts. Further, training on large-scale datasets inevitably brings significant computational costs. In this paper, we propose FreeDA, a training-free diffusion-augmented method for open-vocabulary semantic segmentation, which leverages the ability of diffusion models to visually localize generated concepts and local-global similarities to match class-agnostic regions with semantic classes. Our approach involves an offline stage in which textual-visual reference embeddings are collected, starting from a large set of captions and leveraging visual and semantic contexts. At test time, these are queried to support the visual matching process, which is carried out by jointly considering class-agnostic regions and global semantic similarities. Extensive analyses demonstrate that FreeDA achieves state-of-the-art performance on five datasets, surpassing previous methods by more than 7.0 average points in terms of mIoU and without requiring any training.

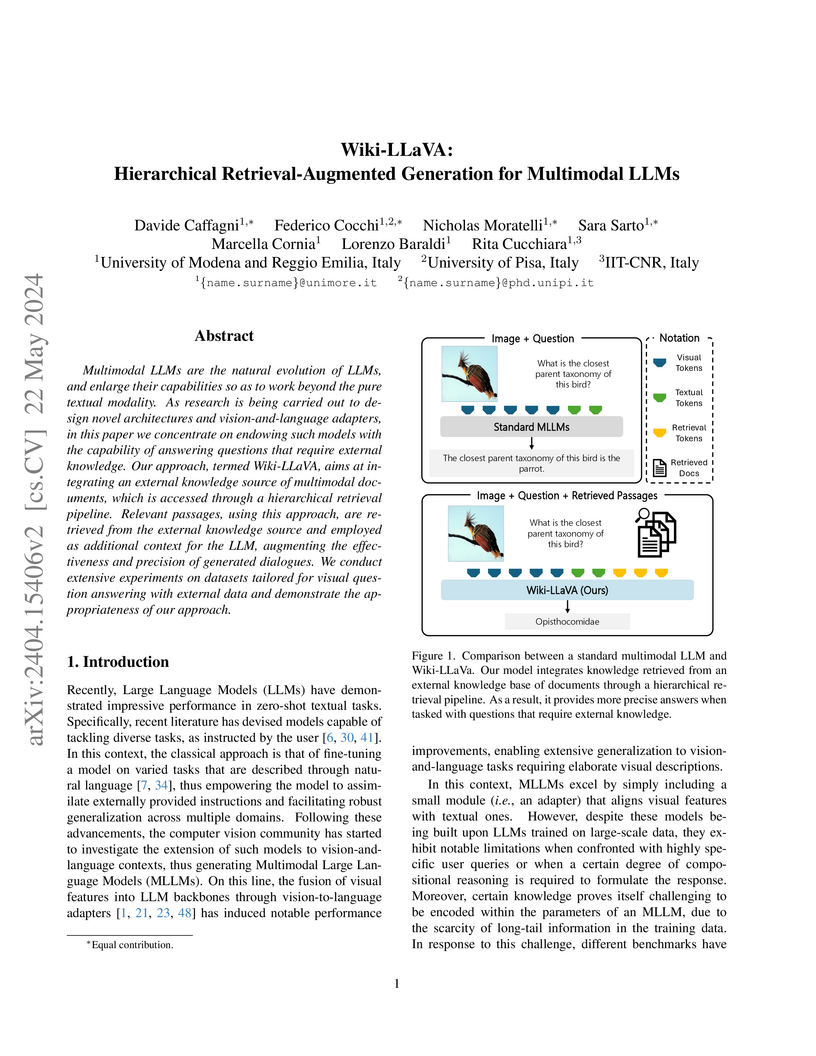

Multimodal LLMs are the natural evolution of LLMs, and enlarge their capabilities so as to work beyond the pure textual modality. As research is being carried out to design novel architectures and vision-and-language adapters, in this paper we concentrate on endowing such models with the capability of answering questions that require external knowledge. Our approach, termed Wiki-LLaVA, aims at integrating an external knowledge source of multimodal documents, which is accessed through a hierarchical retrieval pipeline. Relevant passages, using this approach, are retrieved from the external knowledge source and employed as additional context for the LLM, augmenting the effectiveness and precision of generated dialogues. We conduct extensive experiments on datasets tailored for visual question answering with external data and demonstrate the appropriateness of our approach.

Augmenting Multimodal LLMs with Self-Reflective Tokens for Knowledge-based Visual Question Answering

Augmenting Multimodal LLMs with Self-Reflective Tokens for Knowledge-based Visual Question Answering

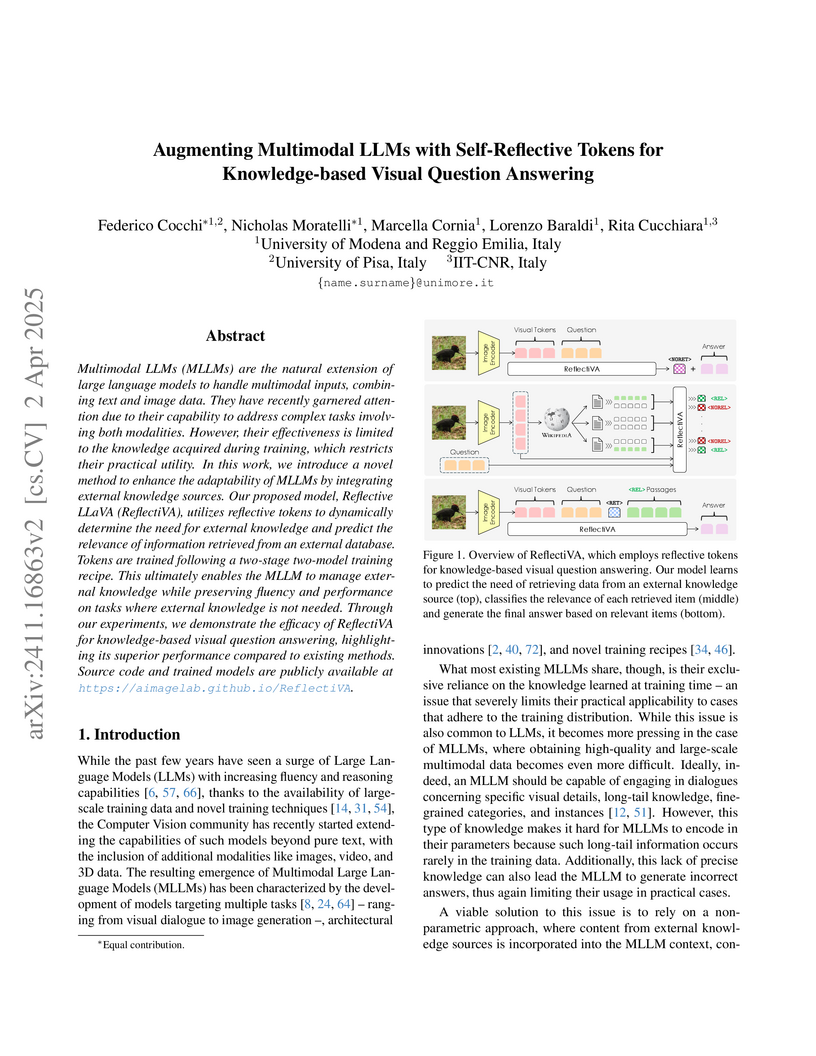

Multimodal LLMs (MLLMs) are the natural extension of large language models to

handle multimodal inputs, combining text and image data. They have recently

garnered attention due to their capability to address complex tasks involving

both modalities. However, their effectiveness is limited to the knowledge

acquired during training, which restricts their practical utility. In this

work, we introduce a novel method to enhance the adaptability of MLLMs by

integrating external knowledge sources. Our proposed model, Reflective LLaVA

(ReflectiVA), utilizes reflective tokens to dynamically determine the need for

external knowledge and predict the relevance of information retrieved from an

external database. Tokens are trained following a two-stage two-model training

recipe. This ultimately enables the MLLM to manage external knowledge while

preserving fluency and performance on tasks where external knowledge is not

needed. Through our experiments, we demonstrate the efficacy of ReflectiVA for

knowledge-based visual question answering, highlighting its superior

performance compared to existing methods. Source code and trained models are

publicly available at this https URL

The Chain of Merges (CoM) framework addresses Merging Covariate Shift (MCS) in activation-based model merging by recursively updating activation statistics layer-wise. This approach consistently outperforms existing methods, achieving 92.62% average normalized accuracy on ViT-B/32 vision tasks and 99.69% on LLaMA 3-8B language tasks.

10 Oct 2025

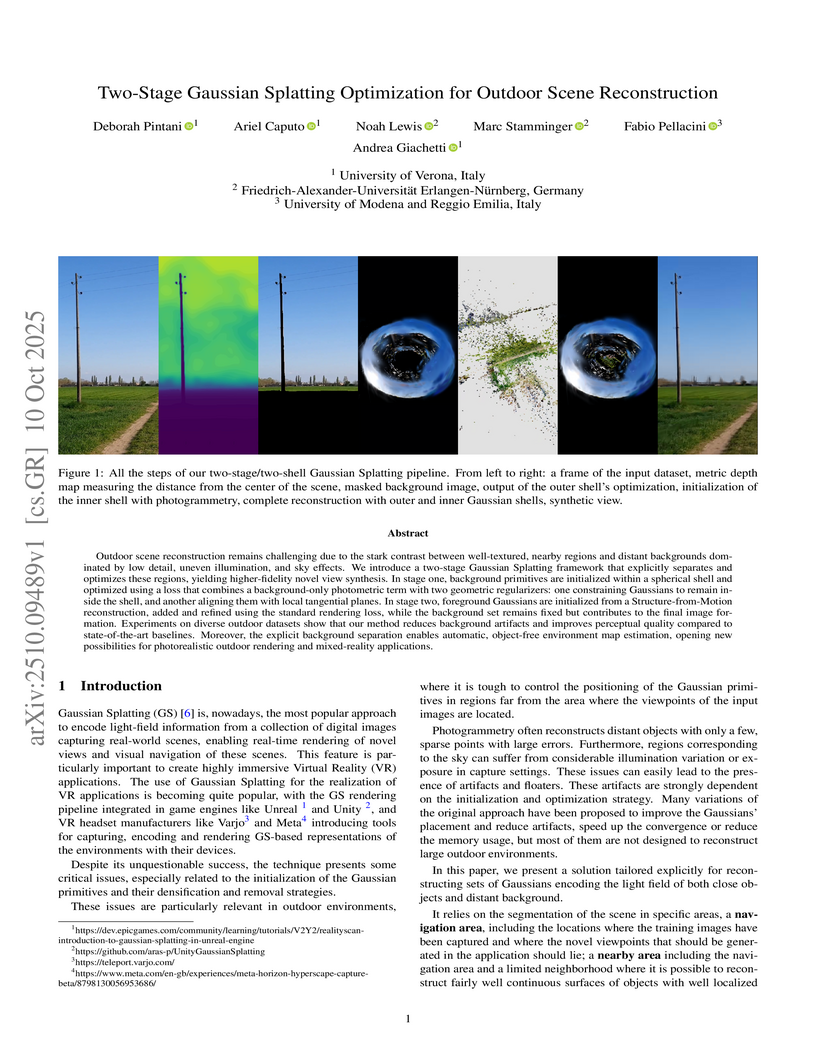

Outdoor scene reconstruction remains challenging due to the stark contrast between well-textured, nearby regions and distant backgrounds dominated by low detail, uneven illumination, and sky effects. We introduce a two-stage Gaussian Splatting framework that explicitly separates and optimizes these regions, yielding higher-fidelity novel view synthesis. In stage one, background primitives are initialized within a spherical shell and optimized using a loss that combines a background-only photometric term with two geometric regularizers: one constraining Gaussians to remain inside the shell, and another aligning them with local tangential planes. In stage two, foreground Gaussians are initialized from a Structure-from-Motion reconstruction, added and refined using the standard rendering loss, while the background set remains fixed but contributes to the final image formation. Experiments on diverse outdoor datasets show that our method reduces background artifacts and improves perceptual quality compared to state-of-the-art baselines. Moreover, the explicit background separation enables automatic, object-free environment map estimation, opening new possibilities for photorealistic outdoor rendering and mixed-reality applications.

Generated Scalable Vector Graphics (SVG) images demand evaluation criteria tuned to their symbolic and vectorial nature: criteria that existing metrics such as FID, LPIPS, or CLIPScore fail to satisfy. In this paper, we introduce SVGauge, the first human-aligned, reference based metric for text-to-SVG generation. SVGauge jointly measures (i) visual fidelity, obtained by extracting SigLIP image embeddings and refining them with PCA and whitening for domain alignment, and (ii) semantic consistency, captured by comparing BLIP-2-generated captions of the SVGs against the original prompts in the combined space of SBERT and TF-IDF. Evaluation on the proposed SHE benchmark shows that SVGauge attains the highest correlation with human judgments and reproduces system-level rankings of eight zero-shot LLM-based generators more faithfully than existing metrics. Our results highlight the necessity of vector-specific evaluation and provide a practical tool for benchmarking future text-to-SVG generation models.

A new framework for Vision-Language Model safety, Hyperbolic Safety-Aware CLIP (HySAC), leverages hyperbolic geometry to explicitly differentiate safe and unsafe content, shifting from unlearning to an awareness paradigm. This approach enables dynamic redirection of unsafe queries to relevant safe content, outperforming prior methods with a 30.5% R@1 for unsafe text to safe image retrieval, while also allowing controlled access to unsafe content with an 81.1% R@1 for unsafe text to unsafe image retrieval.

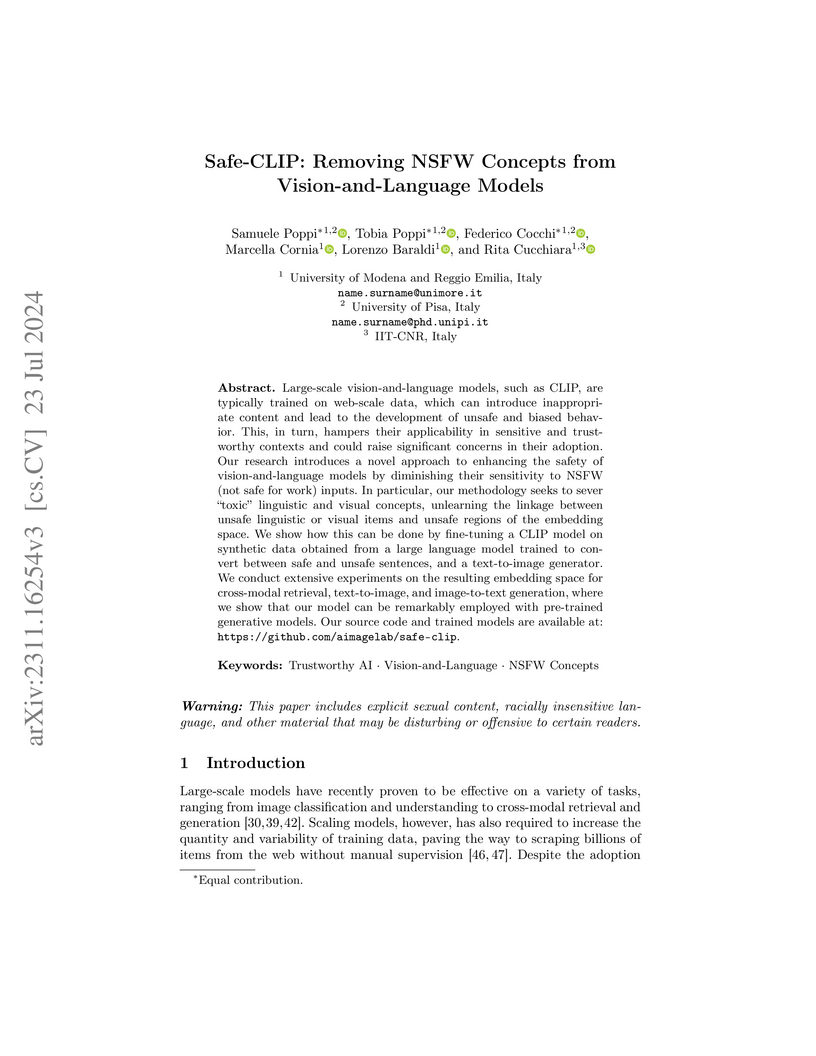

Safe-CLIP introduces a method to fine-tune vision-and-language models like CLIP, creating an embedding space that reduces sensitivity to Not Safe For Work (NSFW) content while preserving performance on safe inputs. This approach demonstrably lowers the probability of generating or retrieving inappropriate content in downstream applications by over 20 percentage points in some cases.

Machine Learning (ML) algorithms have become increasingly popular for supporting Network Intrusion Detection Systems (NIDS). Nevertheless, extensive research has shown their vulnerability to adversarial attacks, which involve subtle perturbations to the inputs of the models aimed at compromising their performance. Recent proposals have effectively leveraged Graph Neural Networks (GNN) to produce predictions based also on the structural patterns exhibited by intrusions to enhance the detection robustness. However, the adoption of GNN-based NIDS introduces new types of risks. In this paper, we propose the first formalization of adversarial attacks specifically tailored for GNN in network intrusion detection. Moreover, we outline and model the problem space constraints that attackers need to consider to carry out feasible structural attacks in real-world scenarios. As a final contribution, we conduct an extensive experimental campaign in which we launch the proposed attacks against state-of-the-art GNN-based NIDS. Our findings demonstrate the increased robustness of the models against classical feature-based adversarial attacks, while highlighting their susceptibility to structure-based attacks.

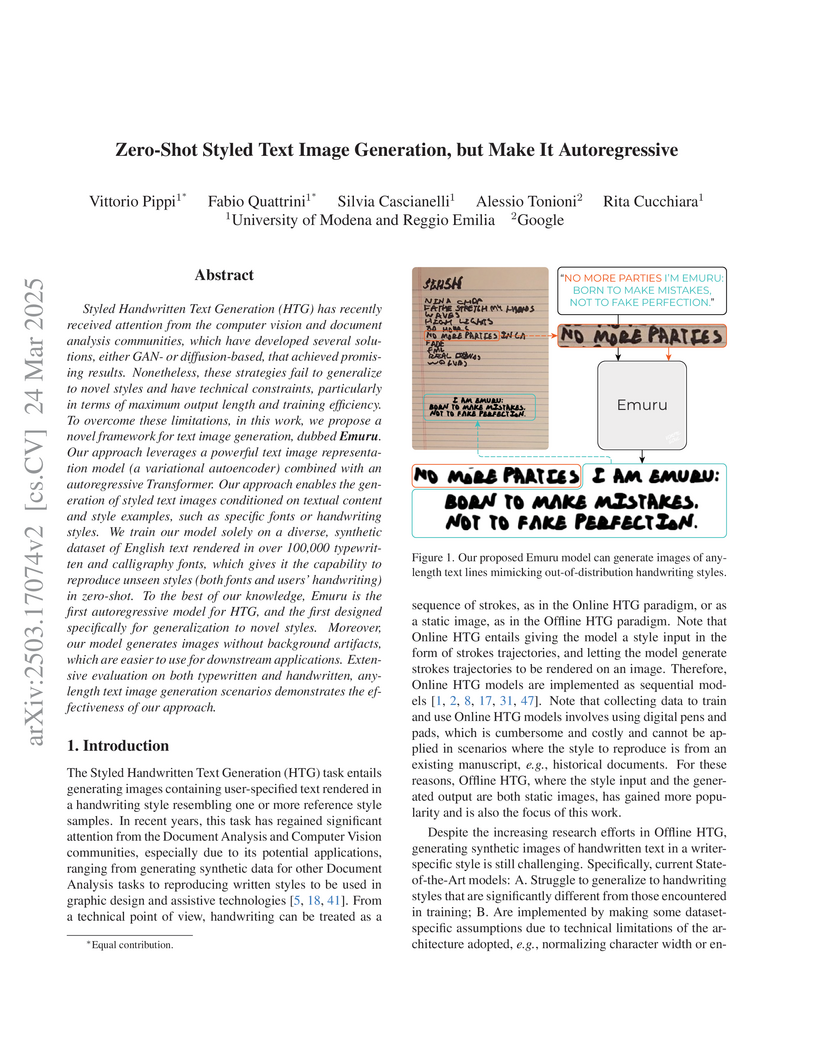

Styled Handwritten Text Generation (HTG) has recently received attention from

the computer vision and document analysis communities, which have developed

several solutions, either GAN- or diffusion-based, that achieved promising

results. Nonetheless, these strategies fail to generalize to novel styles and

have technical constraints, particularly in terms of maximum output length and

training efficiency. To overcome these limitations, in this work, we propose a

novel framework for text image generation, dubbed Emuru. Our approach leverages

a powerful text image representation model (a variational autoencoder) combined

with an autoregressive Transformer. Our approach enables the generation of

styled text images conditioned on textual content and style examples, such as

specific fonts or handwriting styles. We train our model solely on a diverse,

synthetic dataset of English text rendered in over 100,000 typewritten and

calligraphy fonts, which gives it the capability to reproduce unseen styles

(both fonts and users' handwriting) in zero-shot. To the best of our knowledge,

Emuru is the first autoregressive model for HTG, and the first designed

specifically for generalization to novel styles. Moreover, our model generates

images without background artifacts, which are easier to use for downstream

applications. Extensive evaluation on both typewritten and handwritten,

any-length text image generation scenarios demonstrates the effectiveness of

our approach.

Tabular data generation has recently attracted a growing interest due to its

different application scenarios. However, generating time series of tabular

data, where each element of the series depends on the others, remains a largely

unexplored domain. This gap is probably due to the difficulty of jointly

solving different problems, the main of which are the heterogeneity of tabular

data (a problem common to non-time-dependent approaches) and the variable

length of a time series. In this paper, we propose a Diffusion Transformers

(DiTs) based approach for tabular data series generation. Inspired by the

recent success of DiTs in image and video generation, we extend this framework

to deal with heterogeneous data and variable-length sequences. Using extensive

experiments on six datasets, we show that the proposed approach outperforms

previous work by a large margin.

Continual Learning has inspired a plethora of approaches and evaluation settings; however, the majority of them overlooks the properties of a practical scenario, where the data stream cannot be shaped as a sequence of tasks and offline training is not viable. We work towards General Continual Learning (GCL), where task boundaries blur and the domain and class distributions shift either gradually or suddenly. We address it through mixing rehearsal with knowledge distillation and regularization; our simple baseline, Dark Experience Replay, matches the network's logits sampled throughout the optimization trajectory, thus promoting consistency with its past. By conducting an extensive analysis on both standard benchmarks and a novel GCL evaluation setting (MNIST-360), we show that such a seemingly simple baseline outperforms consolidated approaches and leverages limited resources. We further explore the generalization capabilities of our objective, showing its regularization being beneficial beyond mere performance.

There are no more papers matching your filters at the moment.