Oxford Robotics Institute

The Oxford Robotics Institute developed GUARDIAN, a neuro-symbolic verification framework that uses gated uncertainty-aware runtime dual invariants to ensure safety in neural signal-controlled robotics. It achieved 94-97% safety rates, even when underlying neural decoders exhibited low test accuracy and high miscalibration, by integrating real-time physiological and logical checks.

Florensa et al. introduce Reverse Curriculum Generation, an automatic curriculum learning method for goal-oriented reinforcement learning tasks with sparse rewards. This approach trains an agent by progressively expanding the range of solvable start states from the goal outwards, successfully enabling learning for complex robotic manipulation tasks that were previously intractable for standard reinforcement learning algorithms.

16 Oct 2025

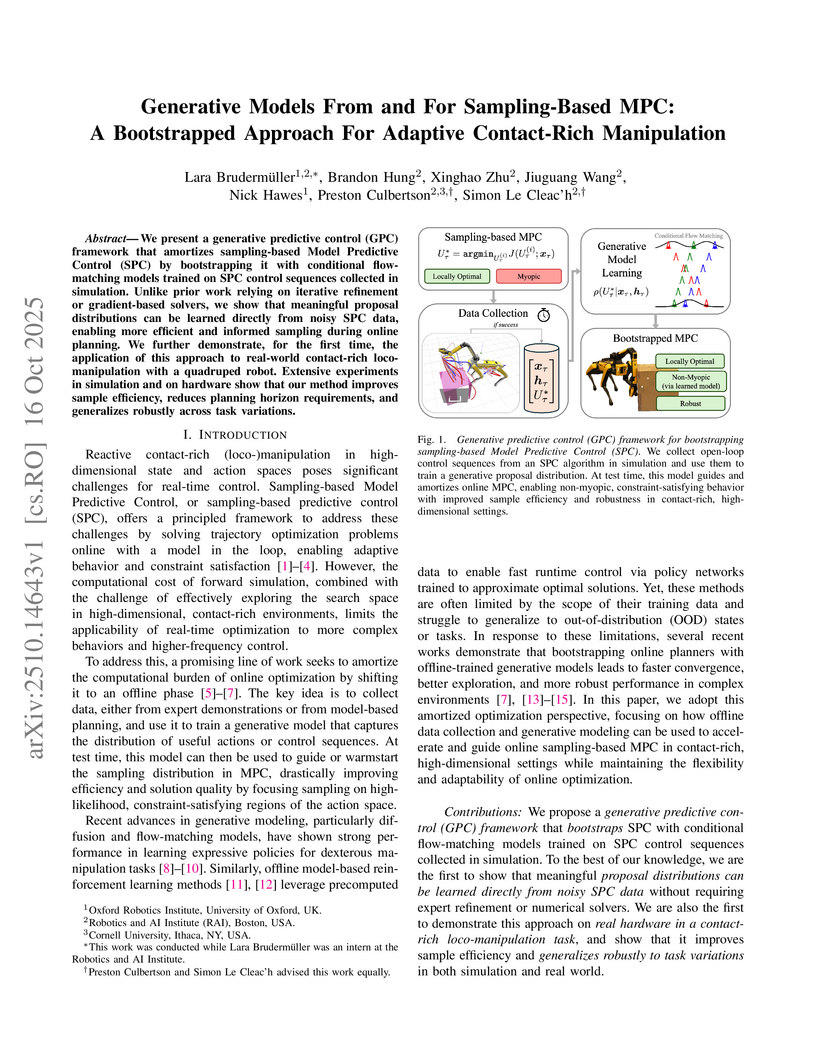

We present a generative predictive control (GPC) framework that amortizes sampling-based Model Predictive Control (SPC) by bootstrapping it with conditional flow-matching models trained on SPC control sequences collected in simulation. Unlike prior work relying on iterative refinement or gradient-based solvers, we show that meaningful proposal distributions can be learned directly from noisy SPC data, enabling more efficient and informed sampling during online planning. We further demonstrate, for the first time, the application of this approach to real-world contact-rich loco-manipulation with a quadruped robot. Extensive experiments in simulation and on hardware show that our method improves sample efficiency, reduces planning horizon requirements, and generalizes robustly across task variations.

For imitation learning algorithms to scale to real-world challenges, they

must handle high-dimensional observations, offline learning, and policy-induced

covariate-shift. We propose DITTO, an offline imitation learning algorithm

which addresses all three of these problems. DITTO optimizes a novel distance

metric in the latent space of a learned world model: First, we train a world

model on all available trajectory data, then, the imitation agent is unrolled

from expert start states in the learned model, and penalized for its latent

divergence from the expert dataset over multiple time steps. We optimize this

multi-step latent divergence using standard reinforcement learning algorithms,

which provably induces imitation learning, and empirically achieves

state-of-the art performance and sample efficiency on a range of Atari

environments from pixels, without any online environment access. We also adapt

other standard imitation learning algorithms to the world model setting, and

show that this considerably improves their performance. Our results show how

creative use of world models can lead to a simple, robust, and

highly-performant policy-learning framework.

14 Sep 2025

Climbing, crouching, bridging gaps, and walking up stairs are just a few of the advantages that quadruped robots have over wheeled robots, making them more suitable for navigating rough and unstructured terrain. However, executing such manoeuvres requires precise temporal coordination and complex agent-environment interactions. Moreover, legged locomotion is inherently more prone to slippage and tripping, and the classical approach of modeling such cases to design a robust controller thus quickly becomes impractical. In contrast, reinforcement learning offers a compelling solution by enabling optimal control through trial and error. We present a generalist reinforcement learning algorithm for quadrupedal agents in dynamic motion scenarios. The learned policy rivals state-of-the-art specialist policies trained using a mixture of experts approach, while using only 25% as many agents during training. Our experiments also highlight the key components of the generalist locomotion policy and the primary factors contributing to its success.

Monte-Carlo Tree Search (MCTS) methods, such as Upper Confidence Bound applied to Trees (UCT), are instrumental to automated planning techniques. However, UCT can be slow to explore an optimal action when it initially appears inferior to other actions. Maximum ENtropy Tree-Search (MENTS) incorporates the maximum entropy principle into an MCTS approach, utilising Boltzmann policies to sample actions, naturally encouraging more exploration. In this paper, we highlight a major limitation of MENTS: optimal actions for the maximum entropy objective do not necessarily correspond to optimal actions for the original objective. We introduce two algorithms, Boltzmann Tree Search (BTS) and Decaying ENtropy Tree-Search (DENTS), that address these limitations and preserve the benefits of Boltzmann policies, such as allowing actions to be sampled faster by using the Alias method. Our empirical analysis shows that our algorithms show consistent high performance across several benchmark domains, including the game of Go.

In this work, we propose a methodology for investigating the use of semantic attention to enhance the explainability of Graph Neural Network (GNN)-based models. Graph Deep Learning (GDL) has emerged as a promising field for tasks like scene interpretation, leveraging flexible graph structures to concisely describe complex features and relationships. As traditional explainability methods used in eXplainable AI (XAI) cannot be directly applied to such structures, graph-specific approaches are introduced. Attention has been previously employed to estimate the importance of input features in GDL, however, the fidelity of this method in generating accurate and consistent explanations has been questioned. To evaluate the validity of using attention weights as feature importance indicators, we introduce semantically-informed perturbations and correlate predicted attention weights with the accuracy of the model. Our work extends existing attention-based graph explainability methods by analysing the divergence in the attention distributions in relation to semantically sorted feature sets and the behaviour of a GNN model, efficiently estimating feature importance. We apply our methodology on a lidar pointcloud estimation model successfully identifying key semantic classes that contribute to enhanced performance, effectively generating reliable post-hoc semantic explanations.

25 Mar 2021

In dynamic and cramped industrial environments, achieving reliable Visual Teach and Repeat (VT&R) with a single-camera is challenging. In this work, we develop a robust method for non-synchronized multi-camera VT&R. Our contribution are expected Camera Performance Models (CPM) which evaluate the camera streams from the teach step to determine the most informative one for localization during the repeat step. By actively selecting the most suitable camera for localization, we are able to successfully complete missions when one of the cameras is occluded, faces into feature poor locations or if the environment has changed. Furthermore, we explore the specific challenges of achieving VT&R on a dynamic quadruped robot, ANYmal. The camera does not follow a linear path (due to the walking gait and holonomicity) such that precise path-following cannot be achieved. Our experiments feature forward and backward facing stereo cameras showing VT&R performance in cluttered indoor and outdoor scenarios. We compared the trajectories the robot executed during the repeat steps demonstrating typical tracking precision of less than 10cm on average. With a view towards omni-directional localization, we show how the approach generalizes to four cameras in simulation. Video: this https URL

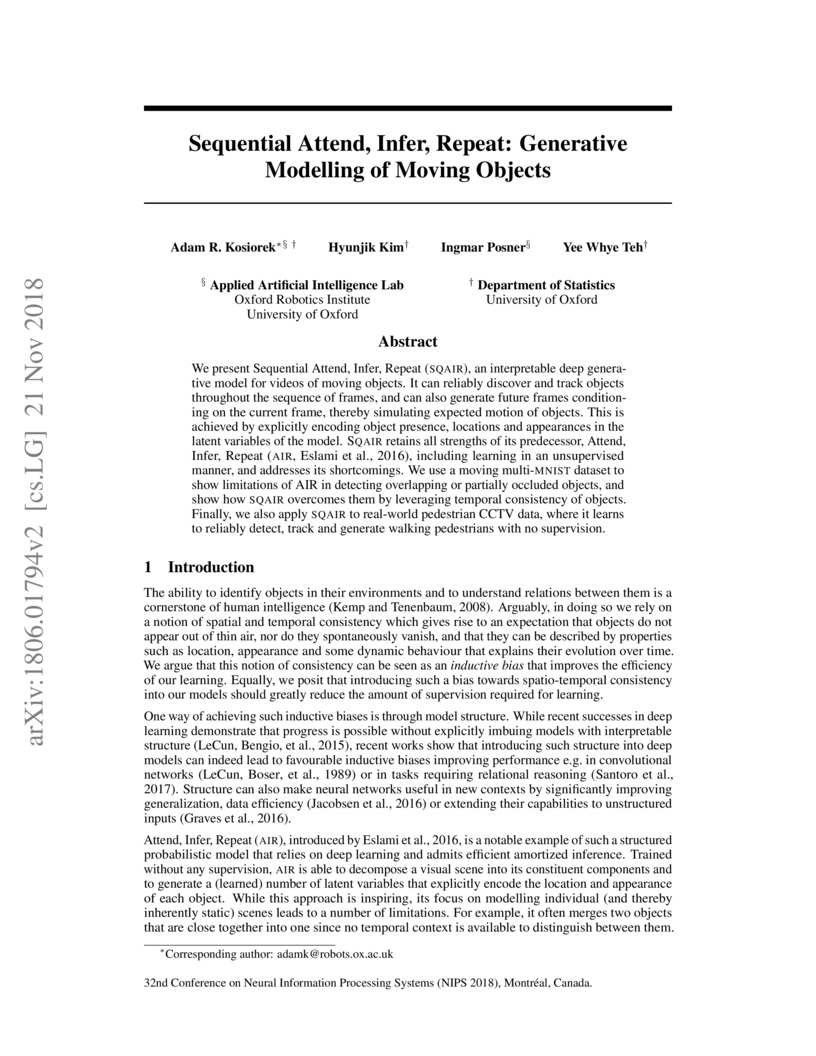

We present Sequential Attend, Infer, Repeat (SQAIR), an interpretable deep generative model for videos of moving objects. It can reliably discover and track objects throughout the sequence of frames, and can also generate future frames conditioning on the current frame, thereby simulating expected motion of objects. This is achieved by explicitly encoding object presence, locations and appearances in the latent variables of the model. SQAIR retains all strengths of its predecessor, Attend, Infer, Repeat (AIR, Eslami et. al., 2016), including learning in an unsupervised manner, and addresses its shortcomings. We use a moving multi-MNIST dataset to show limitations of AIR in detecting overlapping or partially occluded objects, and show how SQAIR overcomes them by leveraging temporal consistency of objects. Finally, we also apply SQAIR to real-world pedestrian CCTV data, where it learns to reliably detect, track and generate walking pedestrians with no supervision.

02 Oct 2023

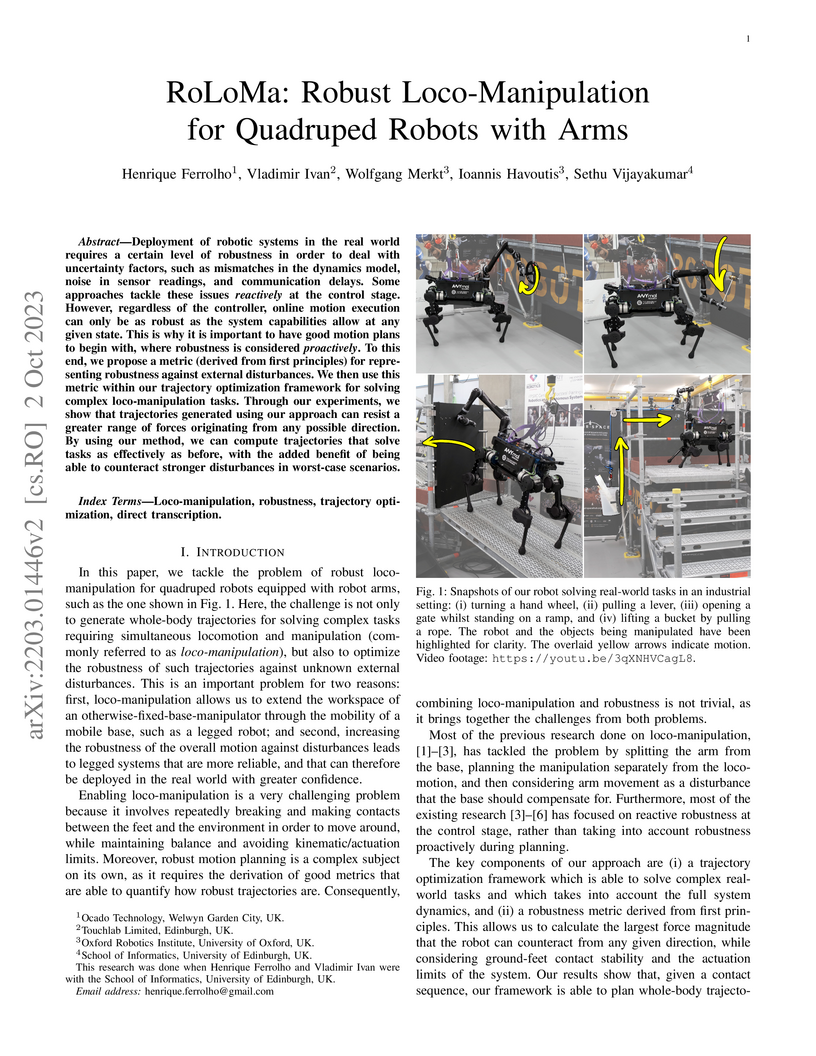

The RoLoMa framework introduces a method for robust loco-manipulation by integrating a novel Smallest Unrejectable Force (SUF) metric into a whole-body trajectory optimization, enabling quadruped robots with arms to proactively plan motions inherently resilient to external disturbances. The approach demonstrates enhanced resistance to forces and successful handling of unmodeled payloads on an ANYmal B robot.

22 Feb 2022

Building differentiable simulations of physical processes has recently

received an increasing amount of attention. Specifically, some efforts develop

differentiable robotic physics engines motivated by the computational benefits

of merging rigid body simulations with modern differentiable machine learning

libraries. Here, we present a library that focuses on the ability to combine

data driven methods with analytical rigid body computations. More concretely,

our library \emph{Differentiable Robot Models} implements both

\emph{differentiable} and \emph{learnable} models of the kinematics and

dynamics of robots in Pytorch. The source-code is available at

\url{this https URL}

23 Apr 2024

Visual motion estimation is a well-studied challenge in autonomous

navigation. Recent work has focused on addressing multimotion estimation in

highly dynamic environments. These environments not only comprise multiple,

complex motions but also tend to exhibit significant occlusion.

Estimating third-party motions simultaneously with the sensor egomotion is

difficult because an object's observed motion consists of both its true motion

and the sensor motion. Most previous works in multimotion estimation simplify

this problem by relying on appearance-based object detection or

application-specific motion constraints. These approaches are effective in

specific applications and environments but do not generalize well to the full

multimotion estimation problem (MEP).

This paper presents Multimotion Visual Odometry (MVO), a multimotion

estimation pipeline that estimates the full SE(3) trajectory of every motion in

the scene, including the sensor egomotion, without relying on appearance-based

information. MVO extends the traditional visual odometry (VO) pipeline with

multimotion segmentation and tracking techniques. It uses physically founded

motion priors to extrapolate motions through temporary occlusions and identify

the reappearance of motions through motion closure. Evaluations on real-world

data from the Oxford Multimotion Dataset (OMD) and the KITTI Vision Benchmark

Suite demonstrate that MVO achieves good estimation accuracy compared to

similar approaches and is applicable to a variety of multimotion estimation

challenges.

The parameters for a Markov Decision Process (MDP) often cannot be specified exactly. Uncertain MDPs (UMDPs) capture this model ambiguity by defining sets which the parameters belong to. Minimax regret has been proposed as an objective for planning in UMDPs to find robust policies which are not overly conservative. In this work, we focus on planning for Stochastic Shortest Path (SSP) UMDPs with uncertain cost and transition functions. We introduce a Bellman equation to compute the regret for a policy. We propose a dynamic programming algorithm that utilises the regret Bellman equation, and show that it optimises minimax regret exactly for UMDPs with independent uncertainties. For coupled uncertainties, we extend our approach to use options to enable a trade off between computation and solution quality. We evaluate our approach on both synthetic and real-world domains, showing that it significantly outperforms existing baselines.

To apply reinforcement learning to safety-critical applications, we ought to provide safety guarantees during both policy training and deployment. In this work, we present theoretical results that place a bound on the probability of violating a safety property for a new task-specific policy in a model-free, episodic setting. This bound, based on a maximum policy ratio computed with respect to a 'safe' base policy, can also be applied to temporally-extended properties (beyond safety) and to robust control problems. To utilize these results, we introduce SPoRt, which provides a data-driven method for computing this bound for the base policy using the scenario approach, and includes Projected PPO, a new projection-based approach for training the task-specific policy while maintaining a user-specified bound on property violation. SPoRt thus enables users to trade off safety guarantees against task-specific performance. Complementing our theoretical results, we present experimental results demonstrating this trade-off and comparing the theoretical bound to posterior bounds derived from empirical violation rates.

12 Feb 2023

The parameters for a Markov Decision Process (MDP) often cannot be specified exactly. Uncertain MDPs (UMDPs) capture this model ambiguity by defining sets which the parameters belong to. Minimax regret has been proposed as an objective for planning in UMDPs to find robust policies which are not overly conservative. In this work, we focus on planning for Stochastic Shortest Path (SSP) UMDPs with uncertain cost and transition functions. We introduce a Bellman equation to compute the regret for a policy. We propose a dynamic programming algorithm that utilises the regret Bellman equation, and show that it optimises minimax regret exactly for UMDPs with independent uncertainties. For coupled uncertainties, we extend our approach to use options to enable a trade off between computation and solution quality. We evaluate our approach on both synthetic and real-world domains, showing that it significantly outperforms existing baselines.

25 Feb 2021

Central Pattern Generators (CPGs) have several properties desirable for

locomotion: they generate smooth trajectories, are robust to perturbations and

are simple to implement. Although conceptually promising, we argue that the

full potential of CPGs has so far been limited by insufficient sensory-feedback

information. This paper proposes a new methodology that allows tuning CPG

controllers through gradient-based optimization in a Reinforcement Learning

(RL) setting. To the best of our knowledge, this is the first time CPGs have

been trained in conjunction with a MultilayerPerceptron (MLP) network in a

Deep-RL context. In particular, we show how CPGs can directly be integrated as

the Actor in an Actor-Critic formulation. Additionally, we demonstrate how this

change permits us to integrate highly non-linear feedback directly from sensory

perception to reshape the oscillators' dynamics. Our results on a locomotion

task using a single-leg hopper demonstrate that explicitly using the CPG as the

Actor rather than as part of the environment results in a significant increase

in the reward gained over time (6x more) compared with previous approaches.

Furthermore, we show that our method without feedback reproduces results

similar to prior work with feedback. Finally, we demonstrate how our

closed-loop CPG progressively improves the hopping behaviour for longer

training epochs relying only on basic reward functions.

In this work we aim to bridge the divide between autonomous vehicles and

causal reasoning. Autonomous vehicles have come to increasingly interact with

human drivers, and in many cases may pose risks to the physical or mental

well-being of those they interact with. Meanwhile causal models, despite their

inherent transparency and ability to offer contrastive explanations, have found

limited usage within such systems. As such, we first identify the challenges

that have limited the integration of structural causal models within autonomous

vehicles. We then introduce a number of theoretical extensions to the

structural causal model formalism in order to tackle these challenges. This

augments these models to possess greater levels of modularisation and

encapsulation, as well presenting temporal causal model representation with

constant space complexity. We also prove through the extensions we have

introduced that dynamically mutable sets (e.g. varying numbers of autonomous

vehicles across time) can be used within a structural causal model while

maintaining a relaxed form of causal stationarity. Finally we discuss the

application of the extensions in the context of the autonomous vehicle and

service robotics domain along with potential directions for future work.

30 Mar 2021

This work presents an approach for control, state-estimation and learning

model (hyper)parameters for robotic manipulators. It is based on the active

inference framework, prominent in computational neuroscience as a theory of the

brain, where behaviour arises from minimizing variational free-energy. The

robotic manipulator shows adaptive and robust behaviour compared to

state-of-the-art methods. Additionally, we show the exact relationship to

classic methods such as PID control. Finally, we show that by learning a

temporal parameter and model variances, our approach can deal with unmodelled

dynamics, damps oscillations, and is robust against disturbances and poor

initial parameters. The approach is validated on the `Franka Emika Panda' 7 DoF

manipulator.

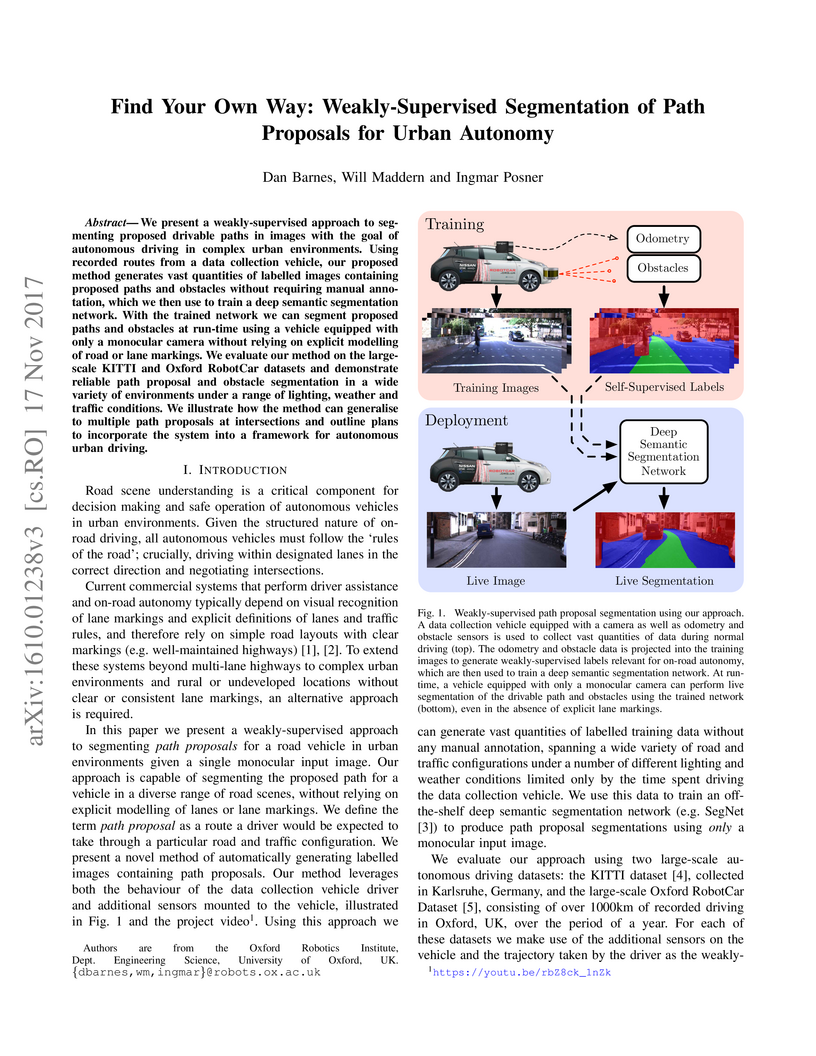

We present a weakly-supervised approach to segmenting proposed drivable paths in images with the goal of autonomous driving in complex urban environments. Using recorded routes from a data collection vehicle, our proposed method generates vast quantities of labelled images containing proposed paths and obstacles without requiring manual annotation, which we then use to train a deep semantic segmentation network. With the trained network we can segment proposed paths and obstacles at run-time using a vehicle equipped with only a monocular camera without relying on explicit modelling of road or lane markings. We evaluate our method on the large-scale KITTI and Oxford RobotCar datasets and demonstrate reliable path proposal and obstacle segmentation in a wide variety of environments under a range of lighting, weather and traffic conditions. We illustrate how the method can generalise to multiple path proposals at intersections and outline plans to incorporate the system into a framework for autonomous urban driving.

30 Jun 2022

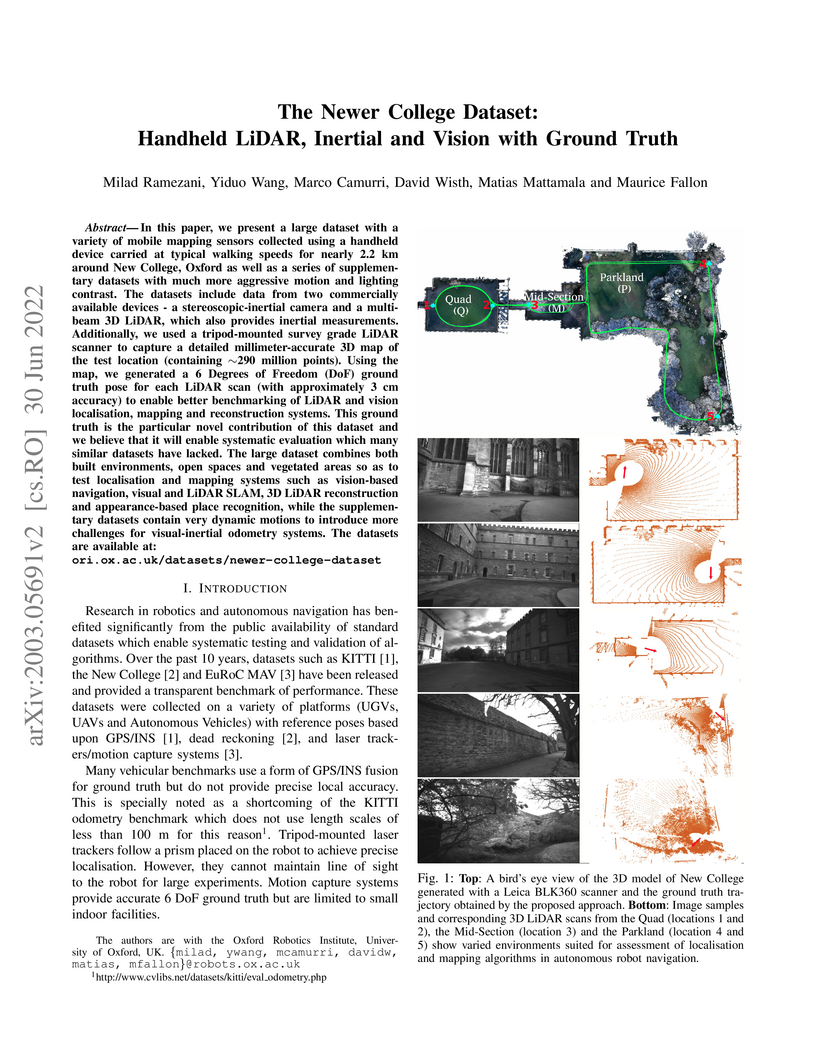

In this paper we present a large dataset with a variety of mobile mapping sensors collected using a handheld device carried at typical walking speeds for nearly 2.2 km through New College, Oxford. The dataset includes data from two commercially available devices - a stereoscopic-inertial camera and a multi-beam 3D LiDAR, which also provides inertial measurements. Additionally, we used a tripod-mounted survey grade LiDAR scanner to capture a detailed millimeter-accurate 3D map of the test location (containing ∼290 million points). Using the map we inferred centimeter-accurate 6 Degree of Freedom (DoF) ground truth for the position of the device for each LiDAR scan to enable better evaluation of LiDAR and vision localisation, mapping and reconstruction systems. This ground truth is the particular novel contribution of this dataset and we believe that it will enable systematic evaluation which many similar datasets have lacked. The dataset combines both built environments, open spaces and vegetated areas so as to test localization and mapping systems such as vision-based navigation, visual and LiDAR SLAM, 3D LIDAR reconstruction and appearance-based place recognition. The dataset is available at: this http URL

There are no more papers matching your filters at the moment.