CENTAI Institute

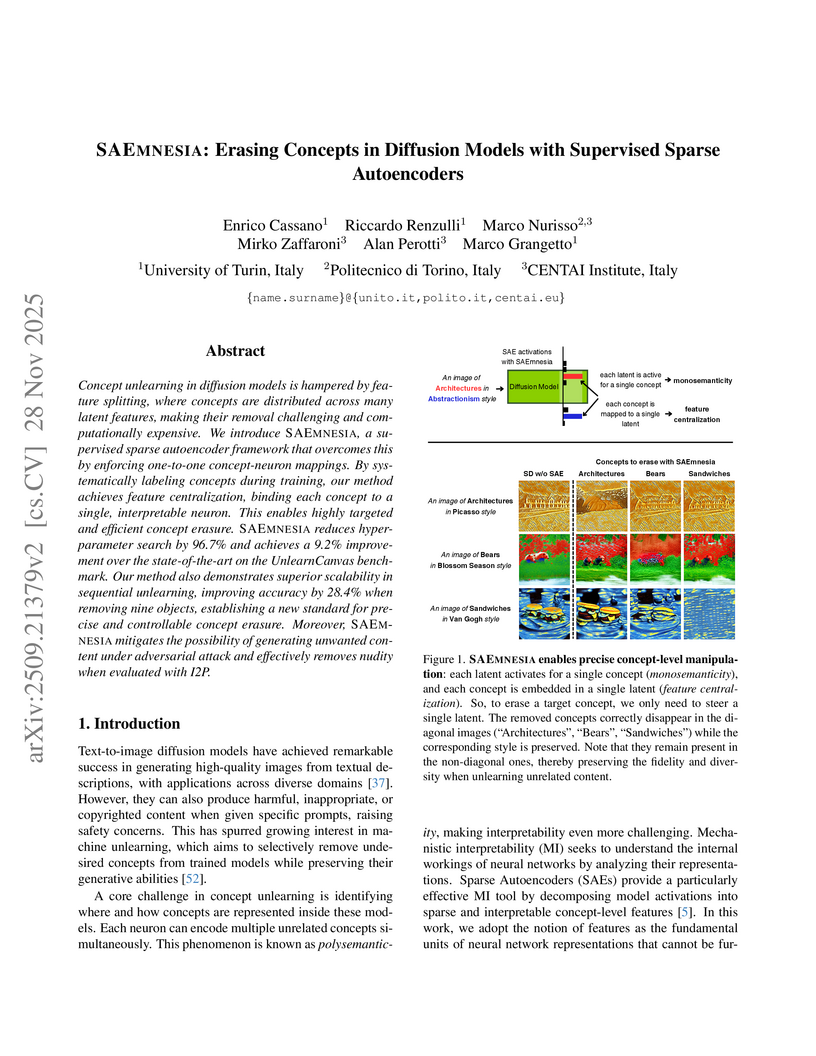

Concept unlearning in diffusion models is hampered by feature splitting, where concepts are distributed across many latent features, making their removal challenging and computationally expensive. We introduce SAEmnesia, a supervised sparse autoencoder framework that overcomes this by enforcing one-to-one concept-neuron mappings. By systematically labeling concepts during training, our method achieves feature centralization, binding each concept to a single, interpretable neuron. This enables highly targeted and efficient concept erasure. SAEmnesia reduces hyperparameter search by 96.7% and achieves a 9.2% improvement over the state-of-the-art on the UnlearnCanvas benchmark. Our method also demonstrates superior scalability in sequential unlearning, improving accuracy by 28.4% when removing nine objects, establishing a new standard for precise and controllable concept erasure. Moreover, SAEmnesia mitigates the possibility of generating unwanted content under adversarial attack and effectively removes nudity when evaluated with I2P.

Universitat Pompeu Fabra University of PennsylvaniaTampere UniversityComplexity Science HubCentral European UniversityUniversity of KonstanzConstructor UniversityBarcelona Supercomputing CenterWroclaw University of Science and TechnologyCENTAI InstituteNational Laboratory for Health SecurityInstitute for Cross-Disciplinary Physics and Complex Systems (IFISC) UIB-CSICHUN-REN Rényi Institute of MathematicsUniversidad Nacional Autóma de México

University of PennsylvaniaTampere UniversityComplexity Science HubCentral European UniversityUniversity of KonstanzConstructor UniversityBarcelona Supercomputing CenterWroclaw University of Science and TechnologyCENTAI InstituteNational Laboratory for Health SecurityInstitute for Cross-Disciplinary Physics and Complex Systems (IFISC) UIB-CSICHUN-REN Rényi Institute of MathematicsUniversidad Nacional Autóma de México

University of PennsylvaniaTampere UniversityComplexity Science HubCentral European UniversityUniversity of KonstanzConstructor UniversityBarcelona Supercomputing CenterWroclaw University of Science and TechnologyCENTAI InstituteNational Laboratory for Health SecurityInstitute for Cross-Disciplinary Physics and Complex Systems (IFISC) UIB-CSICHUN-REN Rényi Institute of MathematicsUniversidad Nacional Autóma de México

University of PennsylvaniaTampere UniversityComplexity Science HubCentral European UniversityUniversity of KonstanzConstructor UniversityBarcelona Supercomputing CenterWroclaw University of Science and TechnologyCENTAI InstituteNational Laboratory for Health SecurityInstitute for Cross-Disciplinary Physics and Complex Systems (IFISC) UIB-CSICHUN-REN Rényi Institute of MathematicsUniversidad Nacional Autóma de MéxicoOpinion dynamics, the study of how individual beliefs and collective public opinion evolve, is a fertile domain for applying statistical physics to complex social phenomena. Like physical systems, societies exhibit macroscopic regularities from localized interactions, leading to outcomes such as consensus or fragmentation. This field has grown significantly, attracting interdisciplinary methods and driven by a surge in large-scale behavioral data. This review covers its rapid progress, bridging the literature dispersion. We begin with essential concepts and definitions, encompassing the nature of opinions, microscopic and macroscopic dynamics. This foundation leads to an overview of empirical research, from lab experiments to large-scale data analysis, which informs and validates models of opinion dynamics. We then present individual-based models, categorized by their macroscopic phenomena (e.g., consensus, polarization, echo chambers) and microscopic mechanisms (e.g., homophily, assimilation). We also review social contagion phenomena, highlighting their connection to opinion dynamics. Furthermore, the review covers common analytical and computational tools, including stochastic processes, treatments, simulations, and optimization. Finally, we explore emerging frontiers, such as connecting empirical data to models and using AI agents as testbeds for novel social phenomena. By systematizing terminology and emphasizing analogies with traditional physics, this review aims to consolidate knowledge, provide a robust theoretical foundation, and shape future research in opinion dynamics.

Understanding how higher-order interactions affect collective behavior is a central problem in nonlinear dynamics and complex systems. Most works have focused on a single higher-order coupling function, neglecting other viable choices. Here we study coupled oscillators with dyadic and three different types of higher-order couplings. By analyzing the stability of different twisted states on rings, we show that many states are stable only for certain combinations of higher-order couplings, and thus the full range of system dynamics cannot be observed unless all types of higher-order couplings are simultaneously considered.

We explore the potential of Large Language Models (LLMs) to replicate human

behavior in economic market experiments. Compared to previous studies, we focus

on dynamic feedback between LLM agents: the decisions of each LLM impact the

market price at the current step, and so affect the decisions of the other LLMs

at the next step. We compare LLM behavior to market dynamics observed in

laboratory settings and assess their alignment with human participants'

behavior. Our findings indicate that LLMs do not adhere strictly to rational

expectations, displaying instead bounded rationality, similarly to human

participants. Providing a minimal context window i.e. memory of three previous

time steps, combined with a high variability setting capturing response

heterogeneity, allows LLMs to replicate broad trends seen in human experiments,

such as the distinction between positive and negative feedback markets.

However, differences remain at a granular level--LLMs exhibit less

heterogeneity in behavior than humans. These results suggest that LLMs hold

promise as tools for simulating realistic human behavior in economic contexts,

though further research is needed to refine their accuracy and increase

behavioral diversity.

Socioeconomic status (SES) fundamentally influences how people interact with

each other and more recently, with digital technologies like Large Language

Models (LLMs). While previous research has highlighted the interaction between

SES and language technology, it was limited by reliance on proxy metrics and

synthetic data. We survey 1,000 individuals from diverse socioeconomic

backgrounds about their use of language technologies and generative AI, and

collect 6,482 prompts from their previous interactions with LLMs. We find

systematic differences across SES groups in language technology usage (i.e.,

frequency, performed tasks), interaction styles, and topics. Higher SES entails

a higher level of abstraction, convey requests more concisely, and topics like

'inclusivity' and 'travel'. Lower SES correlates with higher

anthropomorphization of LLMs (using ''hello'' and ''thank you'') and more

concrete language. Our findings suggest that while generative language

technologies are becoming more accessible to everyone, socioeconomic linguistic

differences still stratify their use to exacerbate the digital divide. These

differences underscore the importance of considering SES in developing language

technologies to accommodate varying linguistic needs rooted in socioeconomic

factors and limit the AI Gap across SES groups.

Face Recognition (FR) tasks have made significant progress with the advent of Deep Neural Networks, particularly through margin-based triplet losses that embed facial images into high-dimensional feature spaces. During training, these contrastive losses focus exclusively on identity information as labels. However, we observe a multiscale geometric structure emerging in the embedding space, influenced by interpretable facial (e.g., hair color) and image attributes (e.g., contrast). We propose a geometric approach to describe the dependence or invariance of FR models to these attributes and introduce a physics-inspired alignment metric. We evaluate the proposed metric on controlled, simplified models and widely used FR models fine-tuned with synthetic data for targeted attribute augmentation. Our findings reveal that the models exhibit varying degrees of invariance across different attributes, providing insight into their strengths and weaknesses and enabling deeper interpretability. Code available here: this https URL}{this https URL

We study the problem of minimizing polarization and disagreement in the Friedkin-Johnsen opinion dynamics model under incomplete information. Unlike prior work that assumes a static setting with full knowledge of users' innate opinions, we address the more realistic online setting where innate opinions are unknown and must be learned through sequential observations. This novel setting, which naturally mirrors periodic interventions on social media platforms, is formulated as a regret minimization problem, establishing a key connection between algorithmic interventions on social media platforms and theory of multi-armed bandits. In our formulation, a learner observes only a scalar feedback of the overall polarization and disagreement after an intervention. For this novel bandit problem, we propose a two-stage algorithm based on low-rank matrix bandits. The algorithm first performs subspace estimation to identify an underlying low-dimensional structure, and then employs a linear bandit algorithm within the compact dimensional representation derived from the estimated subspace. We prove that our algorithm achieves an O(T) cumulative regret over any time horizon T. Empirical results validate that our algorithm significantly outperforms a linear bandit baseline in terms of both cumulative regret and running time.

Studying the interplay between the geometry of the loss landscape and the

optimization trajectories of simple neural networks is a fundamental step for

understanding their behavior in more complex settings. This paper reveals the

presence of topological obstruction in the loss landscape of shallow ReLU

neural networks trained using gradient flow. We discuss how the homogeneous

nature of the ReLU activation function constrains the training trajectories to

lie on a product of quadric hypersurfaces whose shape depends on the particular

initialization of the network's parameters. When the neural network's output is

a single scalar, we prove that these quadrics can have multiple connected

components, limiting the set of reachable parameters during training. We

analytically compute the number of these components and discuss the possibility

of mapping one to the other through neuron rescaling and permutation. In this

simple setting, we find that the non-connectedness results in a topological

obstruction, which, depending on the initialization, can make the global

optimum unreachable. We validate this result with numerical experiments.

It is increasingly important to generate synthetic populations with explicit coordinates rather than coarse geographic areas, yet no established methods exist to achieve this. One reason is that latitude and longitude differ from other continuous variables, exhibiting large empty spaces and highly uneven densities. To address this, we propose a population synthesis algorithm that first maps spatial coordinates into a more regular latent space using Normalizing Flows (NF), and then combines them with other features in a Variational Autoencoder (VAE) to generate synthetic populations. This approach also learns the joint distribution between spatial and non-spatial features, exploiting spatial autocorrelations. We demonstrate the method by generating synthetic homes with the same statistical properties of real homes in 121 datasets, corresponding to diverse geographies. We further propose an evaluation framework that measures both spatial accuracy and practical utility, while ensuring privacy preservation. Our results show that the NF+VAE architecture outperforms popular benchmarks, including copula-based methods and uniform allocation within geographic areas. The ability to generate geolocated synthetic populations at fine spatial resolution opens the door to applications requiring detailed geography, from household responses to floods, to epidemic spread, evacuation planning, and transport modeling.

In the combinatorial semi-bandit (CSB) problem, a player selects an action from a combinatorial action set and observes feedback from the base arms included in the action. While CSB is widely applicable to combinatorial optimization problems, its restriction to binary decision spaces excludes important cases involving non-negative integer flows or allocations, such as the optimal transport and knapsack this http URL overcome this limitation, we propose the multi-play combinatorial semi-bandit (MP-CSB), where a player can select a non-negative integer action and observe multiple feedbacks from a single arm in each round. We propose two algorithms for the MP-CSB. One is a Thompson-sampling-based algorithm that is computationally feasible even when the action space is exponentially large with respect to the number of arms, and attains O(logT) distribution-dependent regret in the stochastic regime, where T is the time horizon. The other is a best-of-both-worlds algorithm, which achieves O(logT) variance-dependent regret in the stochastic regime and the worst-case O~(T) regret in the adversarial regime. Moreover, its regret in adversarial one is data-dependent, adapting to the cumulative loss of the optimal action, the total quadratic variation, and the path-length of the loss sequence. Finally, we numerically show that the proposed algorithms outperform existing methods in the CSB literature.

The paper introduces a novel Conserved Symmetry (CS) Ising model for higher-order interactions on hypergraphs, addressing limitations of traditional p-spin models regarding spin-flip symmetry. It demonstrates that the CS model exhibits a continuous phase transition for three-body interactions, in contrast to the abrupt transition found in p-spin models, and details how transitions become abrupt with interactions of order four or higher.

Santa Fe InstituteCENTAI InstituteSmith School of Enterprise and the Environment, University of OxfordMathematical Institute, University of OxfordInstitute for New Economic Thinking, University of OxfordMacrocosm Inc.Department of Computer Science, University of OxfordInstitute of Economics, Polish Academy of SciencesDepartment of Economics, University of Oxford

In the last few years, economic agent-based models have made the transition from qualitative models calibrated to match stylised facts to quantitative models for time series forecasting, and in some cases, their predictions have performed as well or better than those of standard models (see, e.g. Poledna et al. (2023a); Hommes et al. (2022); Pichler et al. (2022)). Here, we build on the model of Poledna et al., adding several new features such as housing markets, realistic synthetic populations of individuals with income, wealth and consumption heterogeneity, enhanced behavioural rules and market mechanisms, and an enhanced credit market. We calibrate our model for all 38 OECD member countries using state-of-the-art approximate Bayesian inference methods and test it by making out-of-sample forecasts. It outperforms both the Poledna and AR(1) time series models by a highly statistically significant margin. Our model is built within a platform we have developed, making it easy to build, run, and evaluate alternative models, which we hope will encourage future work in this area.

17 Aug 2024

This review paper examines Generative Agent-Based Models (GABMs), which leverage Large Language Models (LLMs) to dynamically guide agent behaviors in complex systems research. It surveys GABM applications in network science, game theory, social dynamics, and epidemic propagation, noting their capacity to mimic human-like behaviors while highlighting challenges such as biases and prompt sensitivity.

Despite the large research effort devoted to learning dependencies between

time series, the state of the art still faces a major limitation: existing

methods learn partial correlations but fail to discriminate across distinct

frequency bands. Motivated by many applications in which this differentiation

is pivotal, we overcome this limitation by learning a block-sparse,

frequency-dependent, partial correlation graph, in which layers correspond to

different frequency bands, and partial correlations can occur over just a few

layers. To this aim, we formulate and solve two nonconvex learning problems:

the first has a closed-form solution and is suitable when there is prior

knowledge about the number of partial correlations; the second hinges on an

iterative solution based on successive convex approximation, and is effective

for the general case where no prior knowledge is available. Numerical results

on synthetic data show that the proposed methods outperform the current state

of the art. Finally, the analysis of financial time series confirms that

partial correlations exist only within a few frequency bands, underscoring how

our methods enable the gaining of valuable insights that would be undetected

without discriminating along the frequency domain.

02 Jul 2025

Has ideological polarization actually increased in the last decades, or have voters simply sorted themselves into parties matching their ideology more closely? We present a novel methodology to quantify multidimensional ideological polarization, by embedding the respondents to a wide variety of political, social, and economic topics from the American National Election Studies (ANES) into a two-dimensional ideological space. By identifying several demographic attributes of the ANES respondents, we chart how political and socio-economic groups move through the ideological space in time. We observe that income and especially racial groups align into parties, but their ideological distance has not increased over time. Instead, Democrats and Republicans have become ideologically more distant in the last 30 years: Both parties moved away from the center, at different rates. Furthermore, Democratic voters have become ideologically more heterogeneous after 2010, indicating that partisan sorting has declined in the last decade.

Graphs are ubiquitous due to their flexibility in representing social and

technological systems as networks of interacting elements. Graph representation

learning methods, such as node embeddings, are powerful approaches to map nodes

into a latent vector space, allowing their use for various graph tasks. Despite

their success, only few studies have focused on explaining node embeddings

locally. Moreover, global explanations of node embeddings remain unexplored,

limiting interpretability and debugging potentials. We address this gap by

developing human-understandable explanations for dimensions in node embeddings.

Towards that, we first develop new metrics that measure the global

interpretability of embedding vectors based on the marginal contribution of the

embedding dimensions to predicting graph structure. We say that an embedding

dimension is more interpretable if it can faithfully map to an understandable

sub-structure in the input graph - like community structure. Having observed

that standard node embeddings have low interpretability, we then introduce DINE

(Dimension-based Interpretable Node Embedding), a novel approach that can

retrofit existing node embeddings by making them more interpretable without

sacrificing their task performance. We conduct extensive experiments on

synthetic and real-world graphs and show that we can simultaneously learn

highly interpretable node embeddings with effective performance in link

prediction.

Santa Fe InstituteCENTAI InstituteNetwork Science Institute, Northeastern UniversityInstitute for Biocomputation and Physics of Complex Systems (BIFI), University of ZaragozaKhoury College of Computer Sciences, Northeastern UniversityDepartment of Electrical and Computer Engineering, University of California, San DiegoDepartment of Theoretical Physics, University of Zaragoza

We propose a team assignment algorithm based on a hypergraph approach focusing on resilience and diffusion optimization. Specifically, our method is based on optimizing the algebraic connectivity of the Laplacian matrix of an edge-dependent vertex-weighted hypergraph. We used constrained simulated annealing, where we constrained the effort agents can exert to perform a task and the minimum effort a task requires to be completed. We evaluated our methods in terms of the number of unsuccessful patches to drive our solution into the feasible region and the cost of patching. We showed that our formulation provides more robust solutions than the original data and the greedy approach. We hope that our methods motivate further research in applying hypergraphs to similar problems in different research areas and in exploring variations of our methods.

Epilepsy is known to drastically alter brain dynamics during seizures (ictal

periods), but its effects on background (non-ictal) brain dynamics remain

poorly understood. To investigate this, we analyzed an in-house dataset of

brain activity recordings from epileptic zebrafish, focusing on two controlled

genetic conditions across two fishlines. After using machine learning to

segment and label recordings, we applied time-delay embedding and Persistent

Homology -- a noise-robust method from Topological Data Analysis (TDA) -- to

uncover topological patterns in brain activity. We find that ictal and

non-ictal periods can be distinguished based on the topology of their dynamics,

independent of genetic condition or fishline, which validates our approach.

Remarkably, within a single wild-type fishline, we identified topological

differences in non-ictal periods between seizure-prone and seizure-free

individuals. These findings suggest that epilepsy leaves detectable topological

signatures in brain dynamics even outside of ictal periods. Overall, this study

demonstrates the utility of TDA as a quantitative framework to screen for

topological markers of epileptic susceptibility, with potential applications

across species.

17 Feb 2025

Floods do not sink prices, historical memory does: How flood risk impacts the Italian housing market

Floods do not sink prices, historical memory does: How flood risk impacts the Italian housing market

Do home prices incorporate flood risk in the immediate aftermath of specific

flood events, or is it the repeated exposure over the years that plays a more

significant role? We address this question through the first systematic study

of the Italian housing market, which is an ideal case study because it is

highly exposed to floods, though unevenly distributed across the national

territory. Using a novel dataset containing about 550,000 mortgage-financed

transactions between 2016 and 2024, as well as hedonic regressions and a

difference-in-difference design, we find that: (i) specific floods do not

decrease home prices in areas at risk; (ii) the repeated exposure to floods in

flood-prone areas leads to a price decline, up to 4\% in the most frequently

flooded regions; (iii) responses are heterogeneous by buyers' income and age.

Young buyers (with limited exposure to prior floods) do not obtain any price

reduction for settling in risky areas, while experienced buyers do. At the same

time, buyers who settle in risky areas have lower incomes than buyers in safe

areas in the most affected regions. Our results emphasize the importance of

cultural and institutional factors in understanding how flood risk affects the

housing market and socioeconomic outcomes.

Concept-based eXplainable AI (C-XAI) is a rapidly growing research field that

enhances AI model interpretability by leveraging intermediate,

human-understandable concepts. This approach not only enhances model

transparency but also enables human intervention, allowing users to interact

with these concepts to refine and improve the model's performance. Concept

Bottleneck Models (CBMs) explicitly predict concepts before making final

decisions, enabling interventions to correct misclassified concepts. While CBMs

remain effective in Out-Of-Distribution (OOD) settings with intervention, they

struggle to match the performance of black-box models. Concept Embedding Models

(CEMs) address this by learning concept embeddings from both concept

predictions and input data, enhancing In-Distribution (ID) accuracy but

reducing the effectiveness of interventions, especially in OOD scenarios. In

this work, we propose the Variational Concept Embedding Model (V-CEM), which

leverages variational inference to improve intervention responsiveness in CEMs.

We evaluated our model on various textual and visual datasets in terms of ID

performance, intervention responsiveness in both ID and OOD settings, and

Concept Representation Cohesiveness (CRC), a metric we propose to assess the

quality of the concept embedding representations. The results demonstrate that

V-CEM retains CEM-level ID performance while achieving intervention

effectiveness similar to CBM in OOD settings, effectively reducing the gap

between interpretability (intervention) and generalization (performance).

There are no more papers matching your filters at the moment.