Meitu Inc.

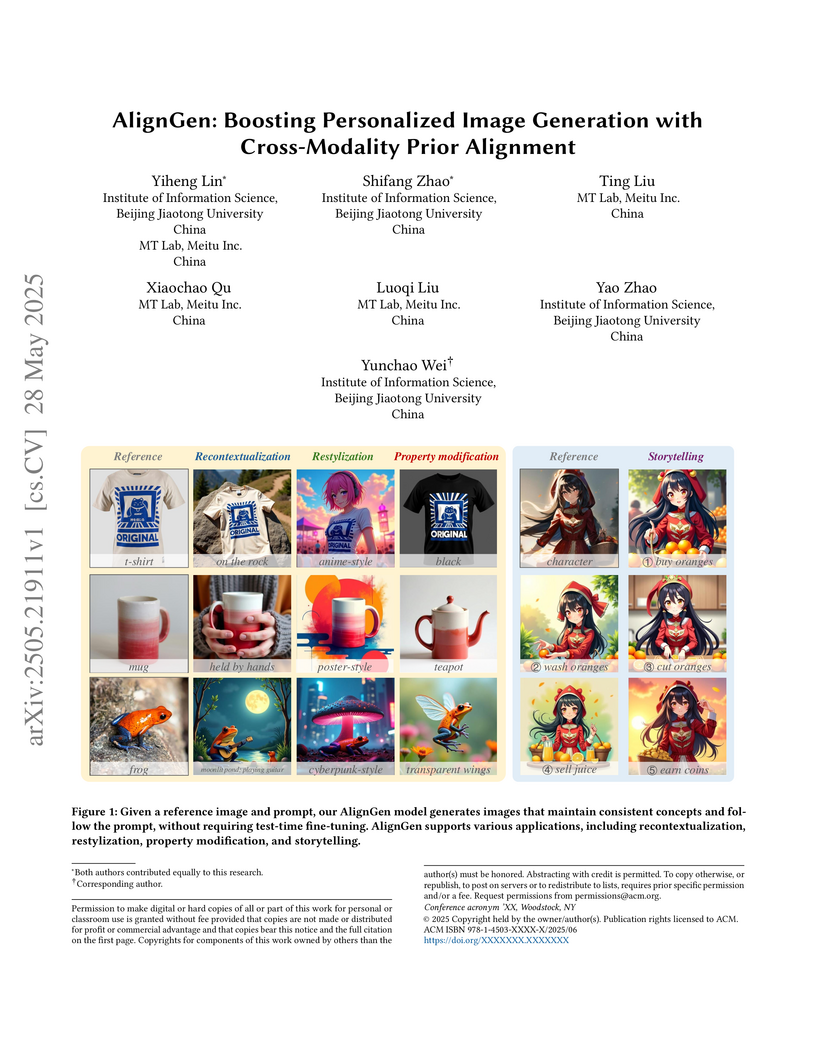

Personalized image generation aims to integrate user-provided concepts into

text-to-image models, enabling the generation of customized content based on a

given prompt. Recent zero-shot approaches, particularly those leveraging

diffusion transformers, incorporate reference image information through

multi-modal attention mechanism. This integration allows the generated output

to be influenced by both the textual prior from the prompt and the visual prior

from the reference image. However, we observe that when the prompt and

reference image are misaligned, the generated results exhibit a stronger bias

toward the textual prior, leading to a significant loss of reference content.

To address this issue, we propose AlignGen, a Cross-Modality Prior Alignment

mechanism that enhances personalized image generation by: 1) introducing a

learnable token to bridge the gap between the textual and visual priors, 2)

incorporating a robust training strategy to ensure proper prior alignment, and

3) employing a selective cross-modal attention mask within the multi-modal

attention mechanism to further align the priors. Experimental results

demonstrate that AlignGen outperforms existing zero-shot methods and even

surpasses popular test-time optimization approaches.

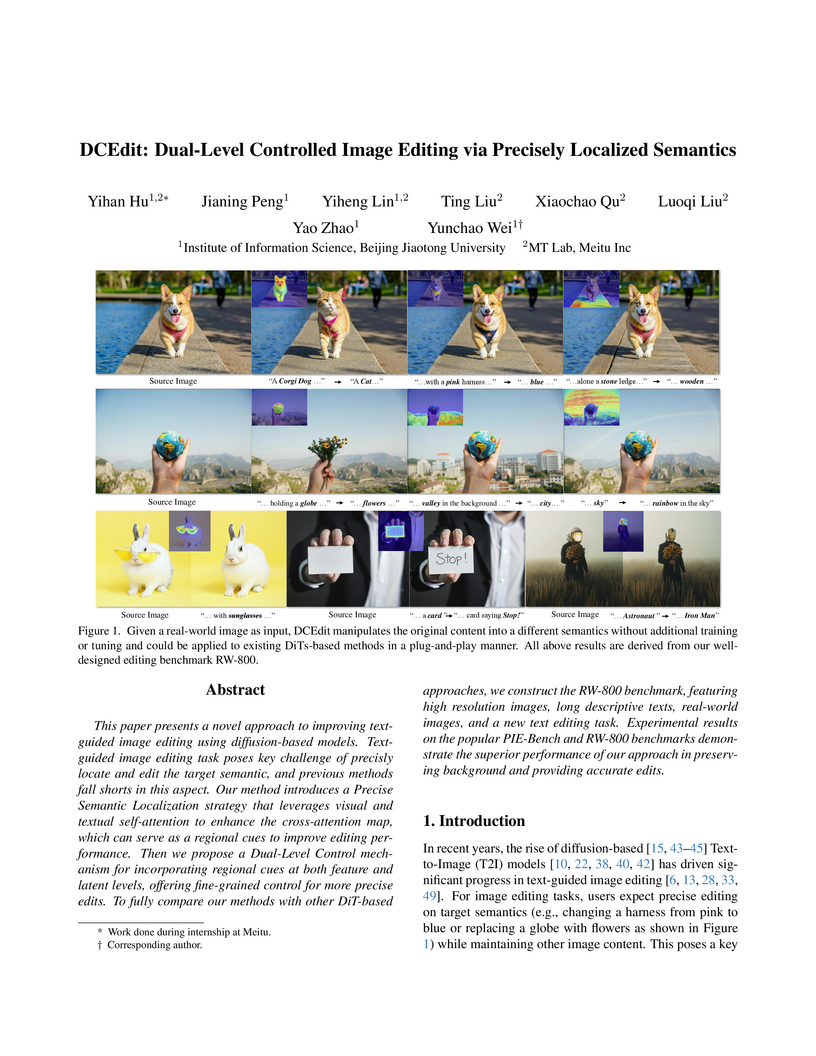

DCEdit, developed by researchers from Beijing Jiaotong University and Meitu Inc., introduces a dual-level control mechanism and a precise semantic localization strategy to enhance text-guided image editing. This approach refines attention maps using self-attention and applies control at both feature and latent levels of the diffusion process, leading to improved semantic localization and background preservation compared to prior methods.

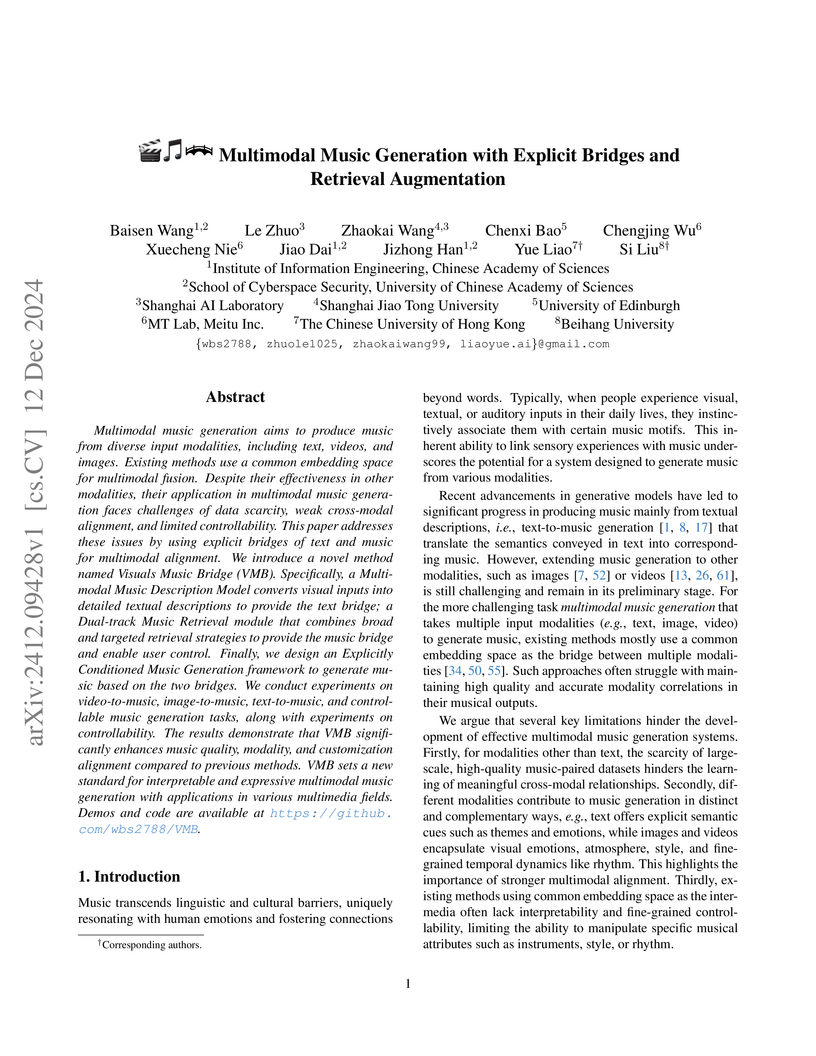

Multimodal music generation aims to produce music from diverse input modalities, including text, videos, and images. Existing methods use a common embedding space for multimodal fusion. Despite their effectiveness in other modalities, their application in multimodal music generation faces challenges of data scarcity, weak cross-modal alignment, and limited controllability. This paper addresses these issues by using explicit bridges of text and music for multimodal alignment. We introduce a novel method named Visuals Music Bridge (VMB). Specifically, a Multimodal Music Description Model converts visual inputs into detailed textual descriptions to provide the text bridge; a Dual-track Music Retrieval module that combines broad and targeted retrieval strategies to provide the music bridge and enable user control. Finally, we design an Explicitly Conditioned Music Generation framework to generate music based on the two bridges. We conduct experiments on video-to-music, image-to-music, text-to-music, and controllable music generation tasks, along with experiments on controllability. The results demonstrate that VMB significantly enhances music quality, modality, and customization alignment compared to previous methods. VMB sets a new standard for interpretable and expressive multimodal music generation with applications in various multimedia fields. Demos and code are available at this https URL.

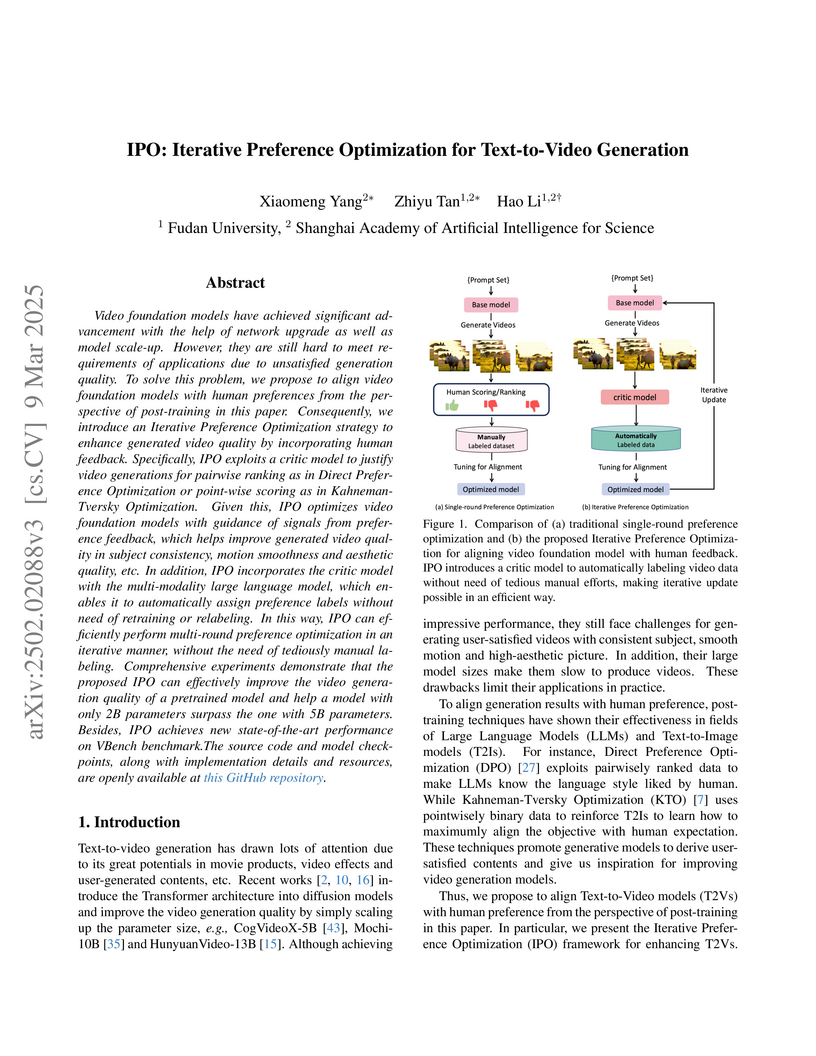

Video foundation models have achieved significant advancement with the help

of network upgrade as well as model scale-up. However, they are still hard to

meet requirements of applications due to unsatisfied generation quality. To

solve this problem, we propose to align video foundation models with human

preferences from the perspective of post-training in this paper. Consequently,

we introduce an Iterative Preference Optimization strategy to enhance generated

video quality by incorporating human feedback. Specifically, IPO exploits a

critic model to justify video generations for pairwise ranking as in Direct

Preference Optimization or point-wise scoring as in Kahneman-Tversky

Optimization. Given this, IPO optimizes video foundation models with guidance

of signals from preference feedback, which helps improve generated video

quality in subject consistency, motion smoothness and aesthetic quality, etc.

In addition, IPO incorporates the critic model with the multi-modality large

language model, which enables it to automatically assign preference labels

without need of retraining or relabeling. In this way, IPO can efficiently

perform multi-round preference optimization in an iterative manner, without the

need of tediously manual labeling. Comprehensive experiments demonstrate that

the proposed IPO can effectively improve the video generation quality of a

pretrained model and help a model with only 2B parameters surpass the one with

5B parameters. Besides, IPO achieves new state-of-the-art performance on VBench

benchmark.

Transformer-based models have recently achieved outstanding performance in image matting. However, their application to high-resolution images remains challenging due to the quadratic complexity of global self-attention. To address this issue, we propose MEMatte, a \textbf{m}emory-\textbf{e}fficient \textbf{m}atting framework for processing high-resolution images. MEMatte incorporates a router before each global attention block, directing informative tokens to the global attention while routing other tokens to a Lightweight Token Refinement Module (LTRM). Specifically, the router employs a local-global strategy to predict the routing probability of each token, and the LTRM utilizes efficient modules to simulate global attention. Additionally, we introduce a Batch-constrained Adaptive Token Routing (BATR) mechanism, which allows each router to dynamically route tokens based on image content and the stages of attention block in the network. Furthermore, we construct an ultra high-resolution image matting dataset, UHR-395, comprising 35,500 training images and 1,000 test images, with an average resolution of 4872×6017. This dataset is created by compositing 395 different alpha mattes across 11 categories onto various backgrounds, all with high-quality manual annotation. Extensive experiments demonstrate that MEMatte outperforms existing methods on both high-resolution and real-world datasets, significantly reducing memory usage by approximately 88% and latency by 50% on the Composition-1K benchmark. Our code is available at this https URL.

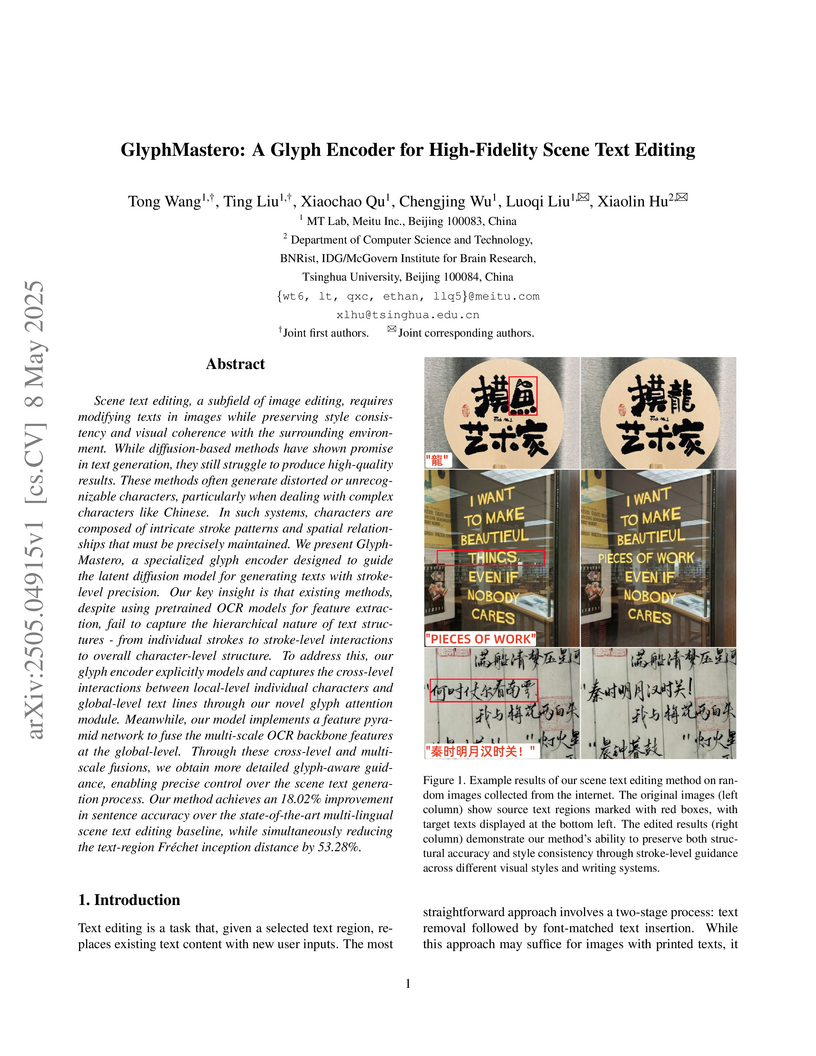

Researchers from Meitu Inc. and Tsinghua University developed GlyphMastero, a learnable glyph encoder that generates high-fidelity scene text, even for complex scripts like Chinese. This method significantly improves text accuracy and style preservation by capturing hierarchical text structures and integrating multi-scale glyph features via a novel Glyph Attention Module.

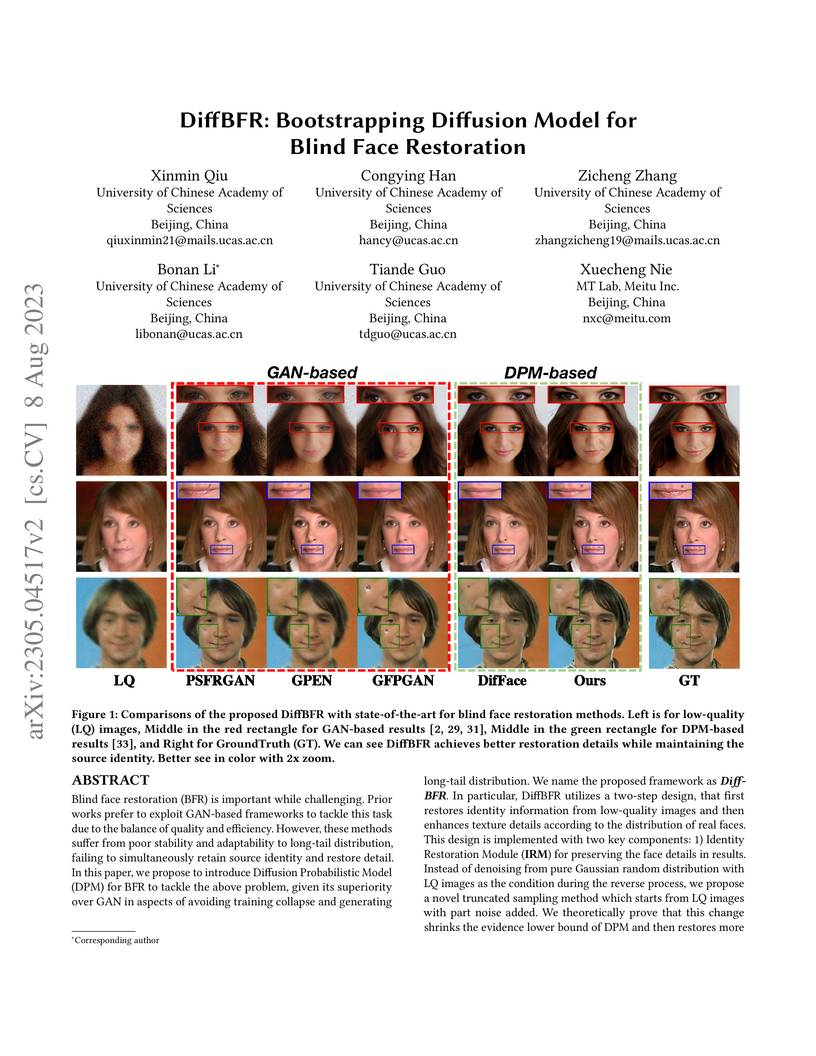

Blind face restoration (BFR) is important while challenging. Prior works prefer to exploit GAN-based frameworks to tackle this task due to the balance of quality and efficiency. However, these methods suffer from poor stability and adaptability to long-tail distribution, failing to simultaneously retain source identity and restore detail. We propose DiffBFR to introduce Diffusion Probabilistic Model (DPM) for BFR to tackle the above problem, given its superiority over GAN in aspects of avoiding training collapse and generating long-tail distribution. DiffBFR utilizes a two-step design, that first restores identity information from low-quality images and then enhances texture details according to the distribution of real faces. This design is implemented with two key components: 1) Identity Restoration Module (IRM) for preserving the face details in results. Instead of denoising from pure Gaussian random distribution with LQ images as the condition during the reverse process, we propose a novel truncated sampling method which starts from LQ images with part noise added. We theoretically prove that this change shrinks the evidence lower bound of DPM and then restores more original details. With theoretical proof, two cascade conditional DPMs with different input sizes are introduced to strengthen this sampling effect and reduce training difficulty in the high-resolution image generated directly. 2) Texture Enhancement Module (TEM) for polishing the texture of the image. Here an unconditional DPM, a LQ-free model, is introduced to further force the restorations to appear realistic. We theoretically proved that this unconditional DPM trained on pure HQ images contributes to justifying the correct distribution of inference images output from IRM in pixel-level space. Truncated sampling with fractional time step is utilized to polish pixel-level textures while preserving identity information.

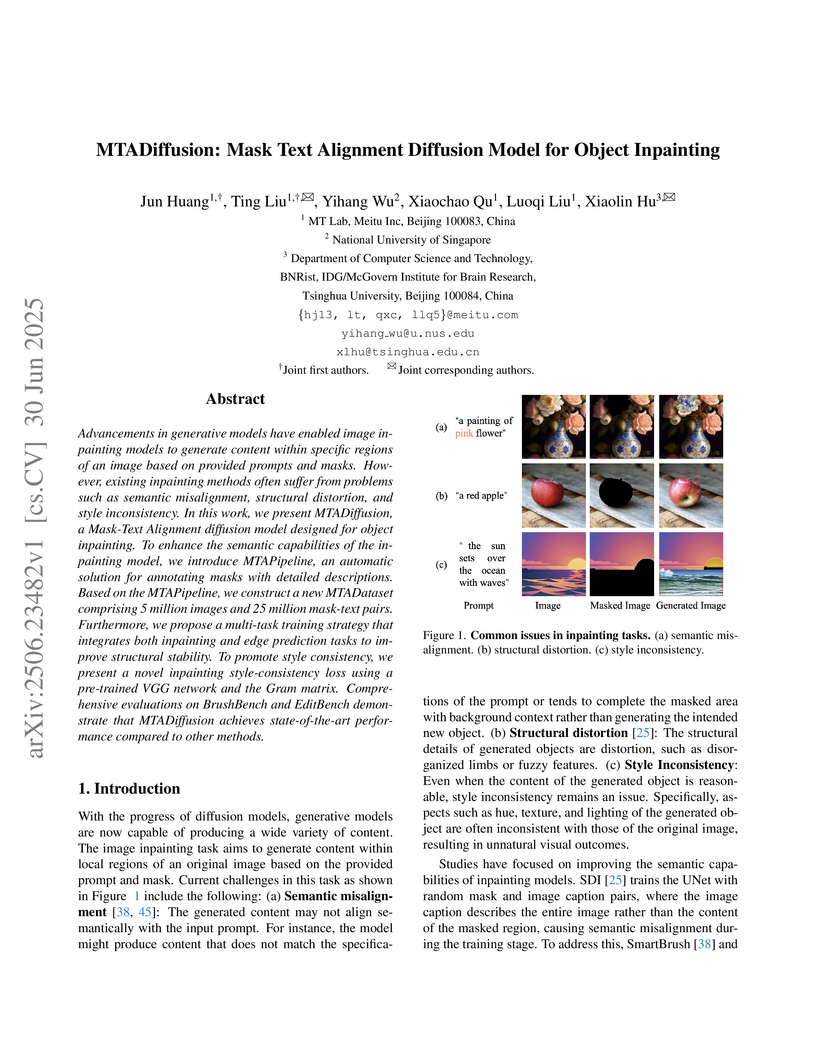

Advancements in generative models have enabled image inpainting models to generate content within specific regions of an image based on provided prompts and masks. However, existing inpainting methods often suffer from problems such as semantic misalignment, structural distortion, and style inconsistency. In this work, we present MTADiffusion, a Mask-Text Alignment diffusion model designed for object inpainting. To enhance the semantic capabilities of the inpainting model, we introduce MTAPipeline, an automatic solution for annotating masks with detailed descriptions. Based on the MTAPipeline, we construct a new MTADataset comprising 5 million images and 25 million mask-text pairs. Furthermore, we propose a multi-task training strategy that integrates both inpainting and edge prediction tasks to improve structural stability. To promote style consistency, we present a novel inpainting style-consistency loss using a pre-trained VGG network and the Gram matrix. Comprehensive evaluations on BrushBench and EditBench demonstrate that MTADiffusion achieves state-of-the-art performance compared to other methods.

Researchers from Meitu Inc., Tencent Inc., Xiamen University, and Shenzhen University developed FLEN, a Field-Leveraged Embedding Network for scalable CTR prediction that effectively models hierarchical feature interactions. The model integrates a novel Field-wise Bi-Interaction pooling layer and a Dicefactor dropout method, leading to a 5.195% CTR improvement in Meitu's production system while reducing memory and computation time by a factor of six compared to previous models.

Developing blind video deflickering (BVD) algorithms to enhance video

temporal consistency, is gaining importance amid the flourish of image

processing and video generation. However, the intricate nature of video data

complicates the training of deep learning methods, leading to high resource

consumption and instability, notably under severe lighting flicker. This

underscores the critical need for a compact representation beyond pixel values

to advance BVD research and applications. Inspired by the classic scale-time

equalization (STE), our work introduces the histogram-assisted solution, called

BlazeBVD, for high-fidelity and rapid BVD. Compared with STE, which directly

corrects pixel values by temporally smoothing color histograms, BlazeBVD

leverages smoothed illumination histograms within STE filtering to ease the

challenge of learning temporal data using neural networks. In technique,

BlazeBVD begins by condensing pixel values into illumination histograms that

precisely capture flickering and local exposure variations. These histograms

are then smoothed to produce singular frames set, filtered illumination maps,

and exposure maps. Resorting to these deflickering priors, BlazeBVD utilizes a

2D network to restore faithful and consistent texture impacted by lighting

changes or localized exposure issues. BlazeBVD also incorporates a lightweight

3D network to amend slight temporal inconsistencies, avoiding the resource

consumption issue. Comprehensive experiments on synthetic, real-world and

generated videos, showcase the superior qualitative and quantitative results of

BlazeBVD, achieving inference speeds up to 10x faster than state-of-the-arts.

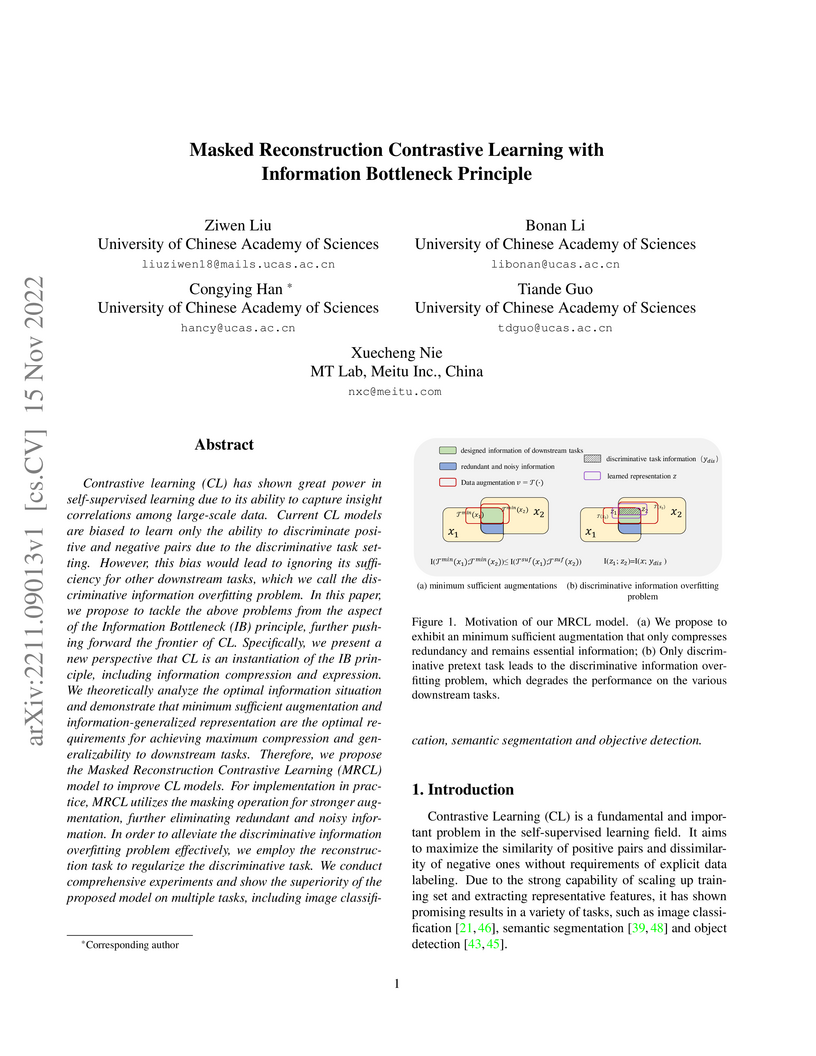

Contrastive learning (CL) has shown great power in self-supervised learning due to its ability to capture insight correlations among large-scale data. Current CL models are biased to learn only the ability to discriminate positive and negative pairs due to the discriminative task setting. However, this bias would lead to ignoring its sufficiency for other downstream tasks, which we call the discriminative information overfitting problem. In this paper, we propose to tackle the above problems from the aspect of the Information Bottleneck (IB) principle, further pushing forward the frontier of CL. Specifically, we present a new perspective that CL is an instantiation of the IB principle, including information compression and expression. We theoretically analyze the optimal information situation and demonstrate that minimum sufficient augmentation and information-generalized representation are the optimal requirements for achieving maximum compression and generalizability to downstream tasks. Therefore, we propose the Masked Reconstruction Contrastive Learning~(MRCL) model to improve CL models. For implementation in practice, MRCL utilizes the masking operation for stronger augmentation, further eliminating redundant and noisy information. In order to alleviate the discriminative information overfitting problem effectively, we employ the reconstruction task to regularize the discriminative task. We conduct comprehensive experiments and show the superiority of the proposed model on multiple tasks, including image classification, semantic segmentation and objective detection.

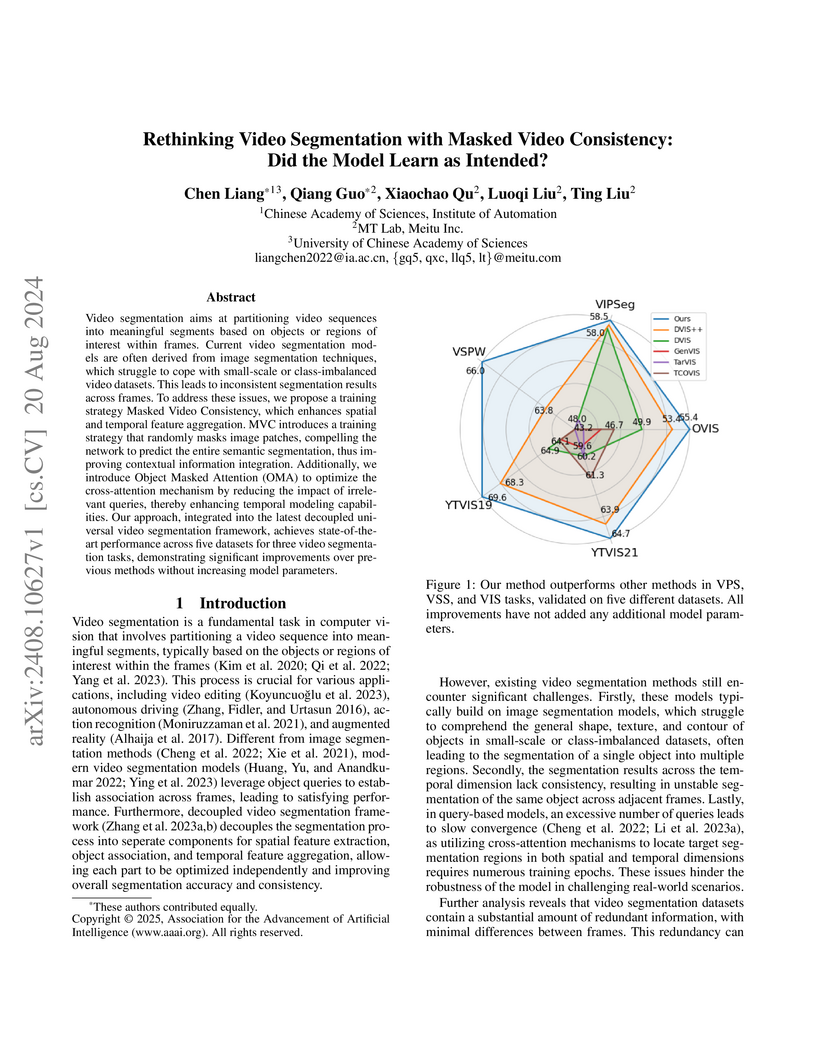

Video segmentation aims at partitioning video sequences into meaningful

segments based on objects or regions of interest within frames. Current video

segmentation models are often derived from image segmentation techniques, which

struggle to cope with small-scale or class-imbalanced video datasets. This

leads to inconsistent segmentation results across frames. To address these

issues, we propose a training strategy Masked Video Consistency, which enhances

spatial and temporal feature aggregation. MVC introduces a training strategy

that randomly masks image patches, compelling the network to predict the entire

semantic segmentation, thus improving contextual information integration.

Additionally, we introduce Object Masked Attention (OMA) to optimize the

cross-attention mechanism by reducing the impact of irrelevant queries, thereby

enhancing temporal modeling capabilities. Our approach, integrated into the

latest decoupled universal video segmentation framework, achieves

state-of-the-art performance across five datasets for three video segmentation

tasks, demonstrating significant improvements over previous methods without

increasing model parameters.

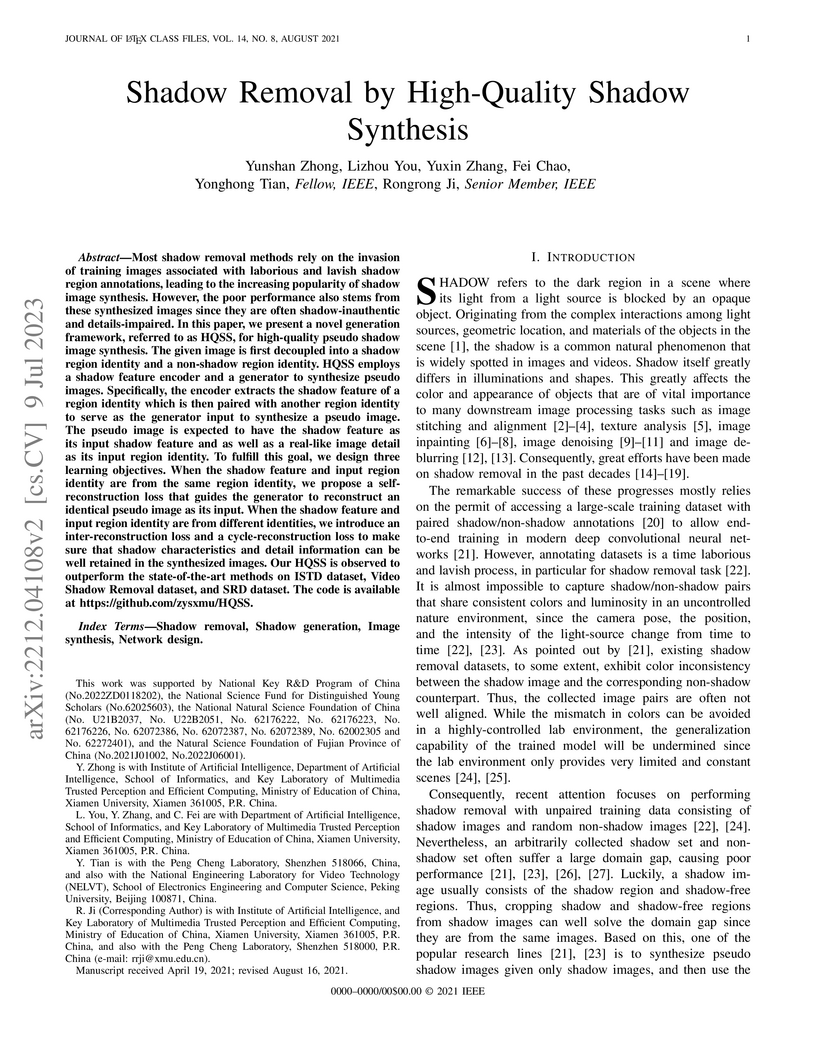

Most shadow removal methods rely on the invasion of training images

associated with laborious and lavish shadow region annotations, leading to the

increasing popularity of shadow image synthesis. However, the poor performance

also stems from these synthesized images since they are often

shadow-inauthentic and details-impaired. In this paper, we present a novel

generation framework, referred to as HQSS, for high-quality pseudo shadow image

synthesis. The given image is first decoupled into a shadow region identity and

a non-shadow region identity. HQSS employs a shadow feature encoder and a

generator to synthesize pseudo images. Specifically, the encoder extracts the

shadow feature of a region identity which is then paired with another region

identity to serve as the generator input to synthesize a pseudo image. The

pseudo image is expected to have the shadow feature as its input shadow feature

and as well as a real-like image detail as its input region identity. To

fulfill this goal, we design three learning objectives. When the shadow feature

and input region identity are from the same region identity, we propose a

self-reconstruction loss that guides the generator to reconstruct an identical

pseudo image as its input. When the shadow feature and input region identity

are from different identities, we introduce an inter-reconstruction loss and a

cycle-reconstruction loss to make sure that shadow characteristics and detail

information can be well retained in the synthesized images. Our HQSS is

observed to outperform the state-of-the-art methods on ISTD dataset, Video

Shadow Removal dataset, and SRD dataset. The code is available at

this https URL

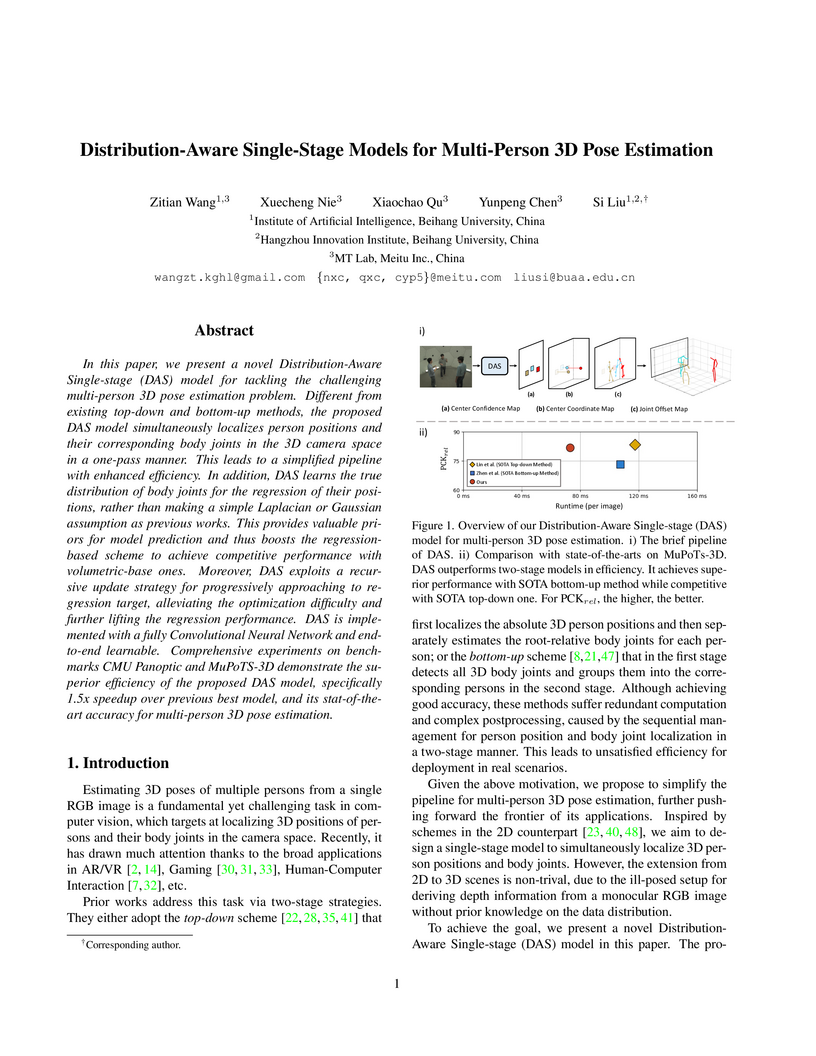

In this paper, we present a novel Distribution-Aware Single-stage (DAS) model for tackling the challenging multi-person 3D pose estimation problem. Different from existing top-down and bottom-up methods, the proposed DAS model simultaneously localizes person positions and their corresponding body joints in the 3D camera space in a one-pass manner. This leads to a simplified pipeline with enhanced efficiency. In addition, DAS learns the true distribution of body joints for the regression of their positions, rather than making a simple Laplacian or Gaussian assumption as previous works. This provides valuable priors for model prediction and thus boosts the regression-based scheme to achieve competitive performance with volumetric-base ones. Moreover, DAS exploits a recursive update strategy for progressively approaching to regression target, alleviating the optimization difficulty and further lifting the regression performance. DAS is implemented with a fully Convolutional Neural Network and end-to-end learnable. Comprehensive experiments on benchmarks CMU Panoptic and MuPoTS-3D demonstrate the superior efficiency of the proposed DAS model, specifically 1.5x speedup over previous best model, and its stat-of-the-art accuracy for multi-person 3D pose estimation.

Recent text-to-image models have achieved impressive results in generating

high-quality images. However, when tasked with multi-concept generation

creating images that contain multiple characters or objects, existing methods

often suffer from semantic entanglement, including concept entanglement and

improper attribute binding, leading to significant text-image inconsistency. We

identify that semantic entanglement arises when certain regions of the latent

features attend to incorrect concept and attribute tokens. In this work, we

propose the Semantic Protection Diffusion Model (SPDiffusion) to address both

concept entanglement and improper attribute binding using only a text prompt as

input. The SPDiffusion framework introduces a novel concept region extraction

method SP-Extraction to resolve region entanglement in cross-attention, along

with SP-Attn, which protects concept regions from the influence of irrelevant

attributes and concepts. To evaluate our method, we test it on existing

benchmarks, where SPDiffusion achieves state-of-the-art results, demonstrating

its effectiveness.

Pixel-level Video Understanding requires effectively integrating

three-dimensional data in both spatial and temporal dimensions to learn

accurate and stable semantic information from continuous frames. However,

existing advanced models on the VSPW dataset have not fully modeled

spatiotemporal relationships. In this paper, we present our solution for the

PVUW competition, where we introduce masked video consistency (MVC) based on

existing models. MVC enforces the consistency between predictions of masked

frames where random patches are withheld. The model needs to learn the

segmentation results of the masked parts through the context of images and the

relationship between preceding and succeeding frames of the video.

Additionally, we employed test-time augmentation, model aggeregation and a

multimodal model-based post-processing method. Our approach achieves 67.27%

mIoU performance on the VSPW dataset, ranking 2nd place in the PVUW2024

challenge VSS track.

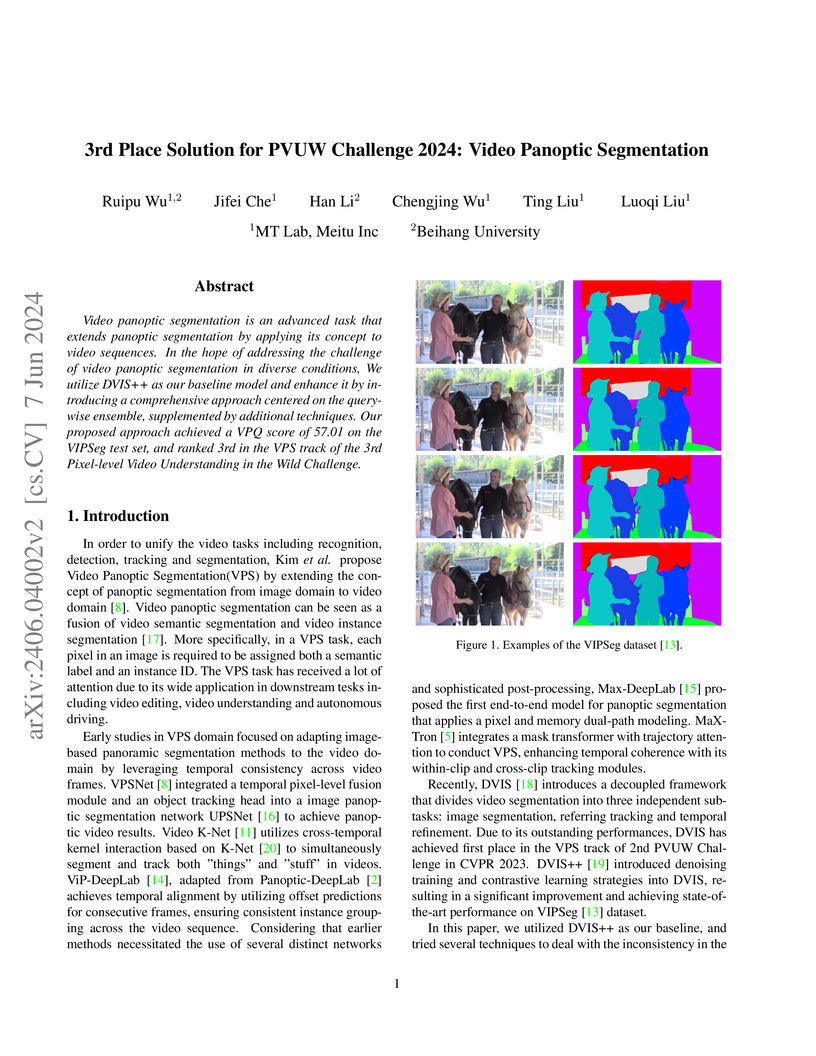

Video panoptic segmentation is an advanced task that extends panoptic

segmentation by applying its concept to video sequences. In the hope of

addressing the challenge of video panoptic segmentation in diverse conditions,

We utilize DVIS++ as our baseline model and enhance it by introducing a

comprehensive approach centered on the query-wise ensemble, supplemented by

additional techniques. Our proposed approach achieved a VPQ score of 57.01 on

the VIPSeg test set, and ranked 3rd in the VPS track of the 3rd Pixel-level

Video Understanding in the Wild Challenge.

This report summarises our method and validation results for the ISIC Challenge 2018 - Skin Lesion Analysis Towards Melanoma Detection - Task 1: Lesion Segmentation. We present a two-stage method for lesion segmentation with optimised training method and ensemble post-process. Our method achieves state-of-the-art performance on lesion segmentation and we win the first place in ISIC 2018 task1.

This paper addresses the problem of 3D hand pose estimation from a monocular

RGB image. While previous methods have shown great success, the structure of

hands has not been fully exploited, which is critical in pose estimation. To

this end, we propose a regularized graph representation learning under a

conditional adversarial learning framework for 3D hand pose estimation, aiming

to capture structural inter-dependencies of hand joints. In particular, we

estimate an initial hand pose from a parametric hand model as a prior of hand

structure, which regularizes the inference of the structural deformation in the

prior pose for accurate graph representation learning via residual graph

convolution. To optimize the hand structure further, we propose two

bone-constrained loss functions, which characterize the morphable structure of

hand poses explicitly. Also, we introduce an adversarial learning framework

conditioned on the input image with a multi-source discriminator, which imposes

the structural constraints onto the distribution of generated 3D hand poses for

anthropomorphically valid hand poses. Extensive experiments demonstrate that

our model sets the new state-of-the-art in 3D hand pose estimation from a

monocular image on five standard benchmarks.

26 Mar 2025

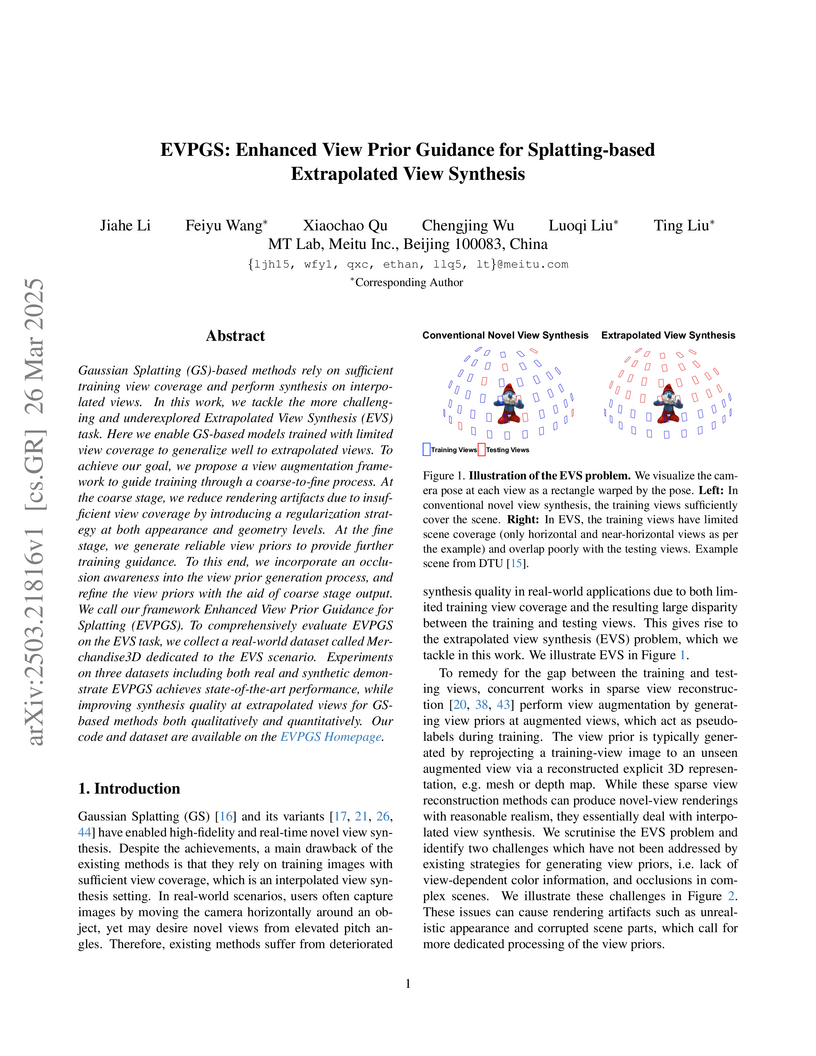

Gaussian Splatting (GS)-based methods rely on sufficient training view

coverage and perform synthesis on interpolated views. In this work, we tackle

the more challenging and underexplored Extrapolated View Synthesis (EVS) task.

Here we enable GS-based models trained with limited view coverage to generalize

well to extrapolated views. To achieve our goal, we propose a view augmentation

framework to guide training through a coarse-to-fine process. At the coarse

stage, we reduce rendering artifacts due to insufficient view coverage by

introducing a regularization strategy at both appearance and geometry levels.

At the fine stage, we generate reliable view priors to provide further training

guidance. To this end, we incorporate an occlusion awareness into the view

prior generation process, and refine the view priors with the aid of coarse

stage output. We call our framework Enhanced View Prior Guidance for Splatting

(EVPGS). To comprehensively evaluate EVPGS on the EVS task, we collect a

real-world dataset called Merchandise3D dedicated to the EVS scenario.

Experiments on three datasets including both real and synthetic demonstrate

EVPGS achieves state-of-the-art performance, while improving synthesis quality

at extrapolated views for GS-based methods both qualitatively and

quantitatively. We will make our code, dataset, and models public.

There are no more papers matching your filters at the moment.