LIGMUniversité Gustave Eiffel

Starting from a stochastic individual-based description of an SIS epidemic spreading on a random network, we study the dynamics when the size n of the network tends to infinity. We recover in the limit an infinite-dimensional integro-differential equation studied by Delmas, Dronnier and Zitt (2022) for an SIS epidemic propagating on a graphon. Our work covers the case of dense and sparse graphs, provided that the number of edges grows faster than n, but not the case of very sparse graphs with O(n) edges. In order to establish our limit theorem, we have to deal with both the convergence of the random graphs to the graphon and the convergence of the stochastic process spreading on top of these random structures: in particular, we propose a coupling between the process of interest and an epidemic that spreads on the complete graph but with a modified infection rate.

Keywords: Random graph, mathematical models of epidemics, measure-valued process, large network limit, limit theorem, graphon.

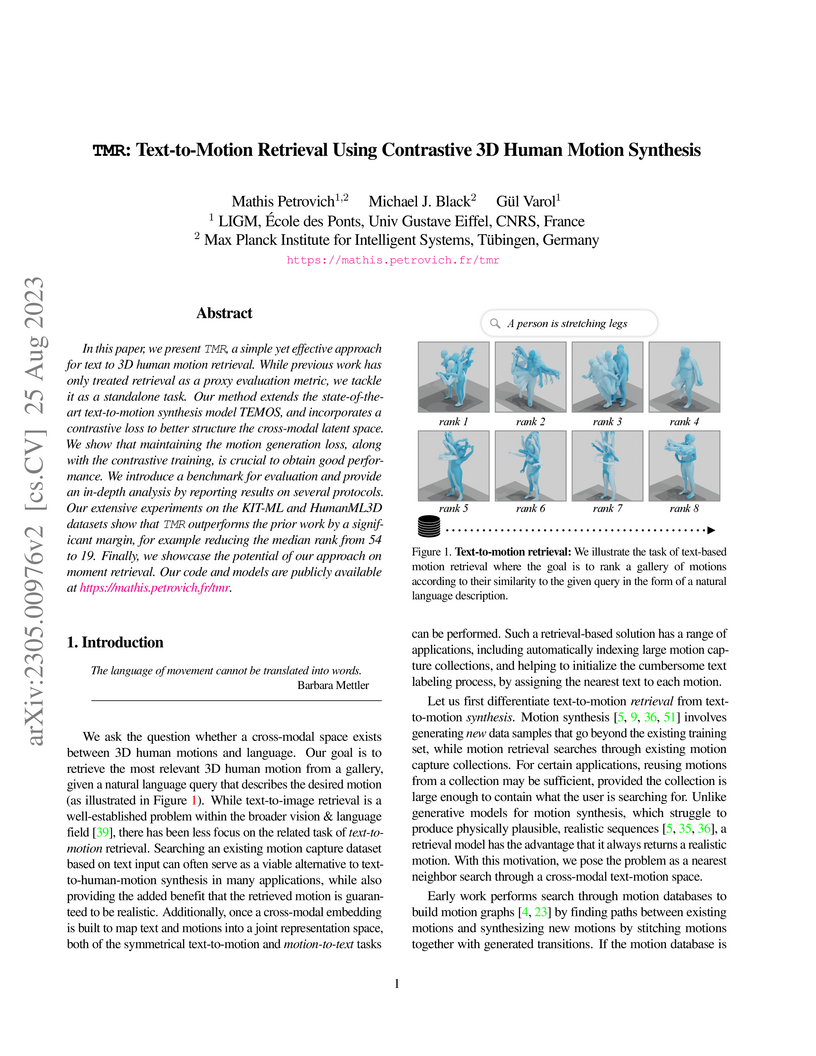

TMR proposes a high-performance system for text-to-3D human motion retrieval, addressing limitations of prior retrieval methods and offering a robust alternative to motion synthesis. It achieves state-of-the-art performance by combining a probabilistic motion synthesis framework with contrastive learning and a novel negative filtering mechanism, while also demonstrating emergent zero-shot moment retrieval capabilities.

Modern medical image registration approaches predict deformations using deep

networks. These approaches achieve state-of-the-art (SOTA) registration

accuracy and are generally fast. However, deep learning (DL) approaches are, in

contrast to conventional non-deep-learning-based approaches, anatomy-specific.

Recently, a universal deep registration approach, uniGradICON, has been

proposed. However, uniGradICON focuses on monomodal image registration. In this

work, we therefore develop multiGradICON as a first step towards universal

*multimodal* medical image registration. Specifically, we show that 1) we can

train a DL registration model that is suitable for monomodal *and* multimodal

registration; 2) loss function randomization can increase multimodal

registration accuracy; and 3) training a model with multimodal data helps

multimodal generalization. Our code and the multiGradICON model are available

at this https URL

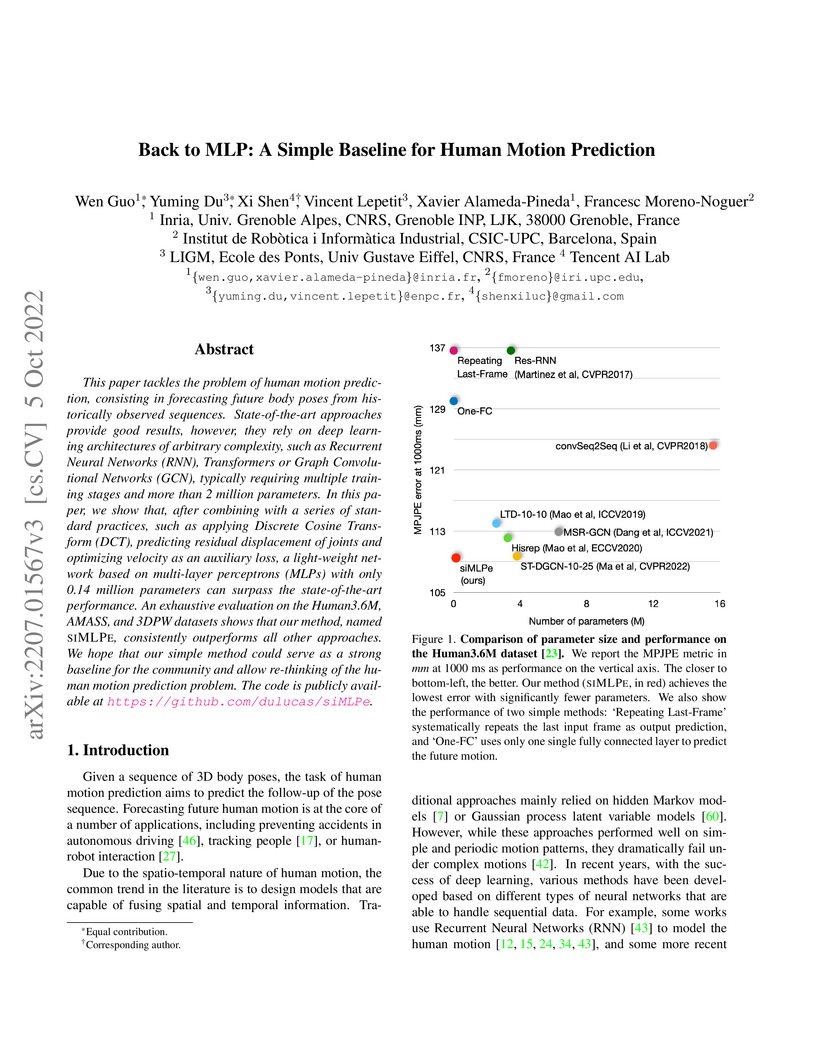

SIMLPE, a lightweight Multi-Layer Perceptron (MLP) network, achieves state-of-the-art performance in human motion prediction by outperforming complex deep learning models with significantly fewer parameters. The research challenges the trend of increasing architectural complexity, demonstrating that simple designs can be highly effective when combined with established practices like DCT and residual learning.

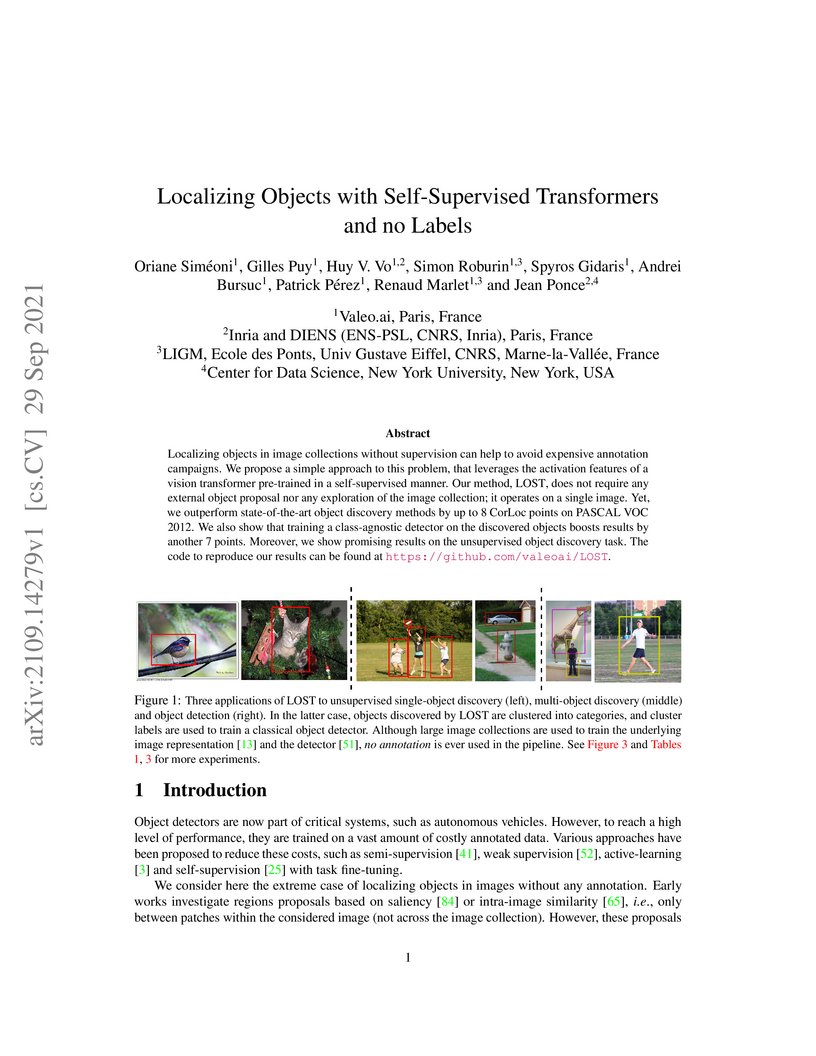

Researchers from Valeo.ai and partner institutions developed LOST, a method that localizes objects in images using self-supervised Vision Transformer features, requiring no human annotations. This approach achieves a CorLoc of 61.9% on PASCAL VOC 2007 `trainval` for single-object discovery and enables training of fully unsupervised class-agnostic and class-aware detectors, outperforming previous unsupervised methods.

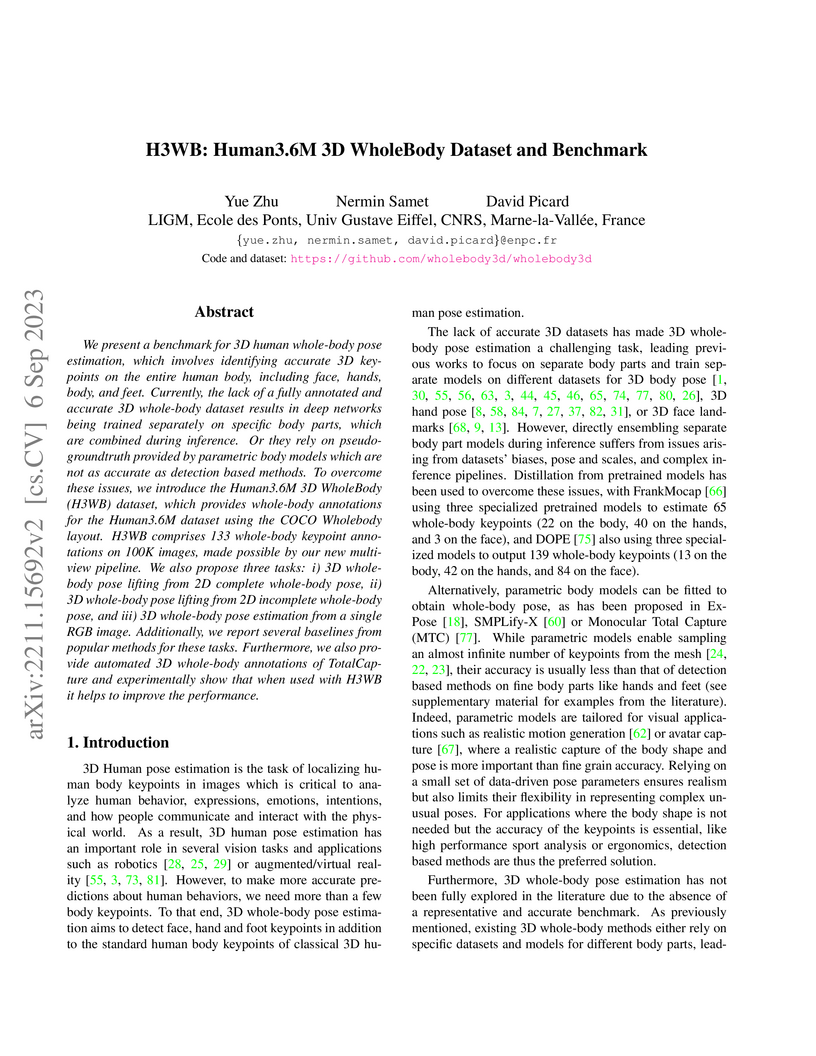

Human3.6M 3D WholeBody (H3WB) is introduced as the first large-scale, high-accuracy benchmark dataset for 3D whole-body pose estimation, featuring 100,000 images with 133 precise 3D keypoints validated at an average 17mm error. It also establishes standardized benchmark tasks and baselines to foster unified model development for comprehensive human understanding.

We introduce the Seismic Language Model (SeisLM), a foundational model designed to analyze seismic waveforms -- signals generated by Earth's vibrations such as the ones originating from earthquakes. SeisLM is pretrained on a large collection of open-source seismic datasets using a self-supervised contrastive loss, akin to BERT in language modeling. This approach allows the model to learn general seismic waveform patterns from unlabeled data without being tied to specific downstream tasks. When fine-tuned, SeisLM excels in seismological tasks like event detection, phase-picking, onset time regression, and foreshock-aftershock classification. The code has been made publicly available on this https URL.

In this paper, we describe a graph-based algorithm that uses the features obtained by a self-supervised transformer to detect and segment salient objects in images and videos. With this approach, the image patches that compose an image or video are organised into a fully connected graph, where the edge between each pair of patches is labeled with a similarity score between patches using features learned by the transformer. Detection and segmentation of salient objects is then formulated as a graph-cut problem and solved using the classical Normalized Cut algorithm. Despite the simplicity of this approach, it achieves state-of-the-art results on several common image and video detection and segmentation tasks. For unsupervised object discovery, this approach outperforms the competing approaches by a margin of 6.1%, 5.7%, and 2.6%, respectively, when tested with the VOC07, VOC12, and COCO20K datasets. For the unsupervised saliency detection task in images, this method improves the score for Intersection over Union (IoU) by 4.4%, 5.6% and 5.2%. When tested with the ECSSD, DUTS, and DUT-OMRON datasets, respectively, compared to current state-of-the-art techniques. This method also achieves competitive results for unsupervised video object segmentation tasks with the DAVIS, SegTV2, and FBMS datasets.

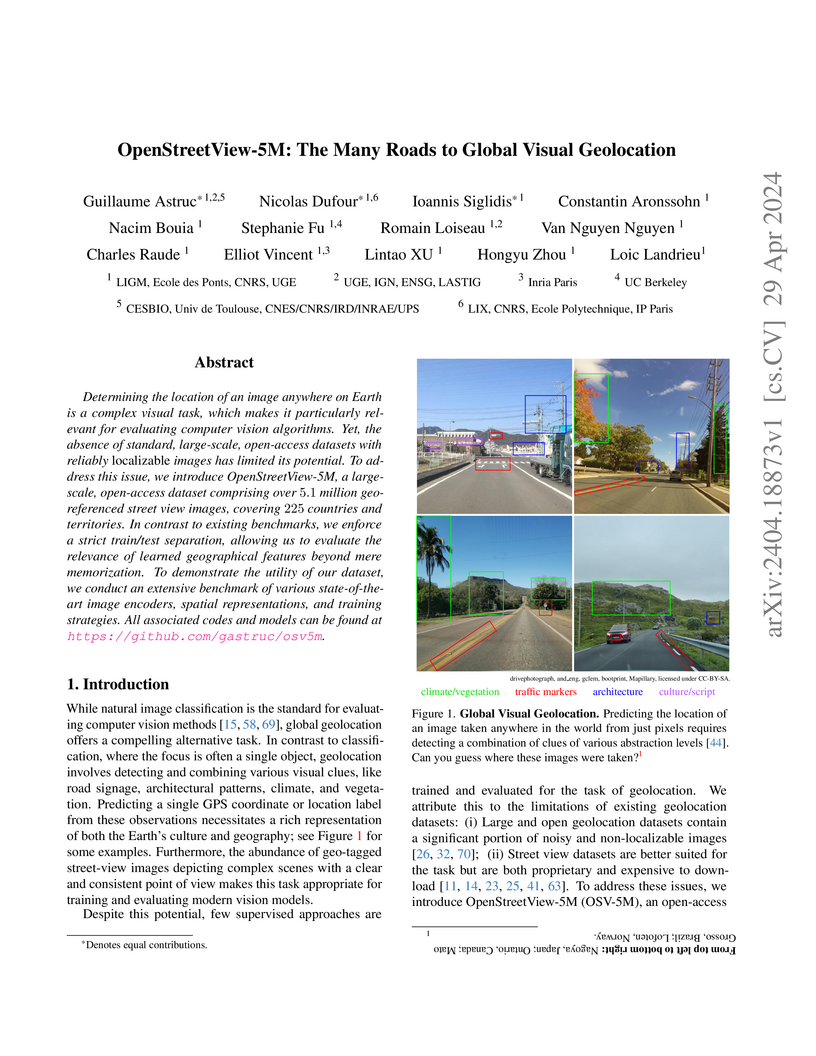

Determining the location of an image anywhere on Earth is a complex visual task, which makes it particularly relevant for evaluating computer vision algorithms. Yet, the absence of standard, large-scale, open-access datasets with reliably localizable images has limited its potential. To address this issue, we introduce OpenStreetView-5M, a large-scale, open-access dataset comprising over 5.1 million geo-referenced street view images, covering 225 countries and territories. In contrast to existing benchmarks, we enforce a strict train/test separation, allowing us to evaluate the relevance of learned geographical features beyond mere memorization. To demonstrate the utility of our dataset, we conduct an extensive benchmark of various state-of-the-art image encoders, spatial representations, and training strategies. All associated codes and models can be found at this https URL.

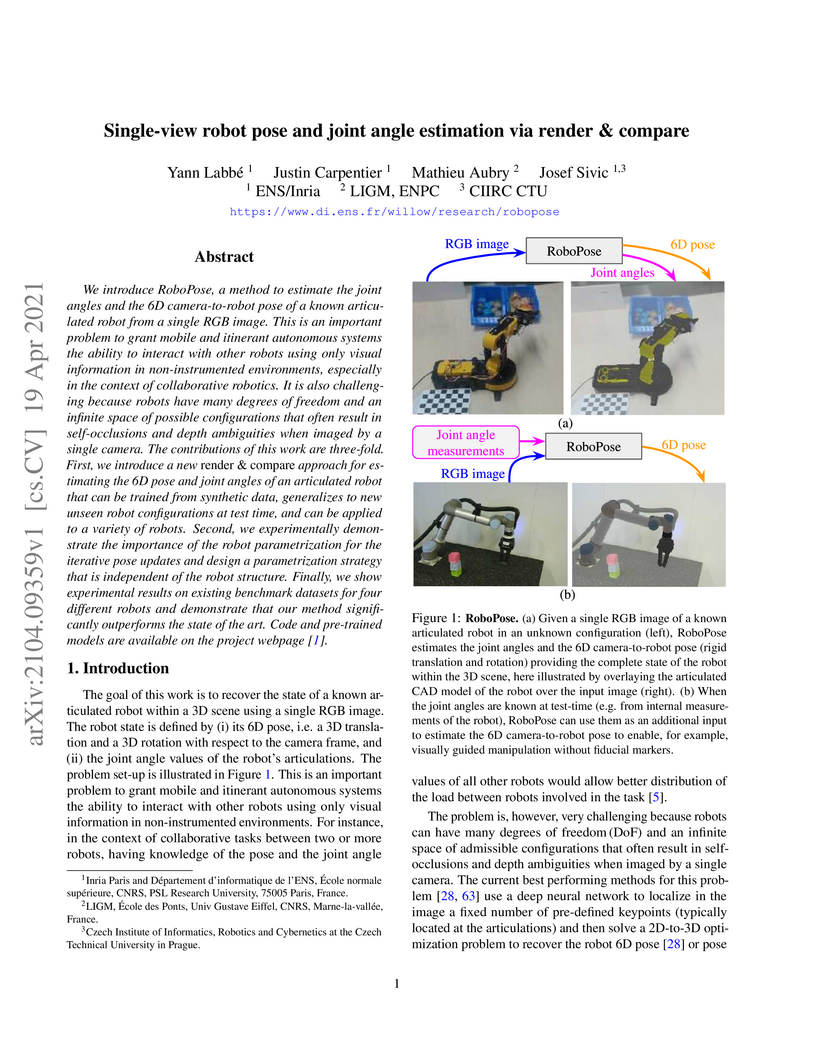

We introduce RoboPose, a method to estimate the joint angles and the 6D

camera-to-robot pose of a known articulated robot from a single RGB image. This

is an important problem to grant mobile and itinerant autonomous systems the

ability to interact with other robots using only visual information in

non-instrumented environments, especially in the context of collaborative

robotics. It is also challenging because robots have many degrees of freedom

and an infinite space of possible configurations that often result in

self-occlusions and depth ambiguities when imaged by a single camera. The

contributions of this work are three-fold. First, we introduce a new render &

compare approach for estimating the 6D pose and joint angles of an articulated

robot that can be trained from synthetic data, generalizes to new unseen robot

configurations at test time, and can be applied to a variety of robots. Second,

we experimentally demonstrate the importance of the robot parametrization for

the iterative pose updates and design a parametrization strategy that is

independent of the robot structure. Finally, we show experimental results on

existing benchmark datasets for four different robots and demonstrate that our

method significantly outperforms the state of the art. Code and pre-trained

models are available on the project webpage

this https URL

UniBench introduces a unified evaluation framework integrating over 50 Vision-Language Model (VLM) benchmarks, providing a standardized tool to assess capabilities and identify limitations. The study evaluates nearly 60 VLMs, revealing that scaling model size or training data offers minimal improvements for visual reasoning and relational understanding, while also exposing surprising underperformance on fundamental tasks like digit recognition.

Our goal is to train a generative model of 3D hand motions, conditioned on natural language descriptions specifying motion characteristics such as handshapes, locations, finger/hand/arm movements. To this end, we automatically build pairs of 3D hand motions and their associated textual labels with unprecedented scale. Specifically, we leverage a large-scale sign language video dataset, along with noisy pseudo-annotated sign categories, which we translate into hand motion descriptions via an LLM that utilizes a dictionary of sign attributes, as well as our complementary motion-script cues. This data enables training a text-conditioned hand motion diffusion model HandMDM, that is robust across domains such as unseen sign categories from the same sign language, but also signs from another sign language and non-sign hand movements. We contribute extensive experimental investigation of these scenarios and will make our trained models and data publicly available to support future research in this relatively new field.

Researchers at Meta AI - FAIR and New York University introduced RankMe, an unsupervised criterion that assesses the quality of self-supervised representations by quantifying the effective rank of their embeddings. This label-free method enables hyperparameter selection for Joint-Embedding Self-Supervised Learning models, often achieving performance comparable to or exceeding label-guided approaches on various downstream tasks with less than a 0.5-point average performance gap for linear probing on ImageNet.

Online continuous action recognition has emerged as a critical research area

due to its practical implications in real-world applications, such as

human-computer interaction, healthcare, and robotics. Among various modalities,

skeleton-based approaches have gained significant popularity, demonstrating

their effectiveness in capturing 3D temporal data while ensuring robustness to

environmental variations. However, most existing works focus on segment-based

recognition, making them unsuitable for real-time, continuous recognition

scenarios. In this paper, we propose a novel online recognition system designed

for real-time skeleton sequence streaming. Our approach leverages a hybrid

architecture combining Spatial Graph Convolutional Networks (S-GCN) for spatial

feature extraction and a Transformer-based Graph Encoder (TGE) for capturing

temporal dependencies across frames. Additionally, we introduce a continual

learning mechanism to enhance model adaptability to evolving data

distributions, ensuring robust recognition in dynamic environments. We evaluate

our method on the SHREC'21 benchmark dataset, demonstrating its superior

performance in online hand gesture recognition. Our approach not only achieves

state-of-the-art accuracy but also significantly reduces false positive rates,

making it a compelling solution for real-time applications. The proposed system

can be seamlessly integrated into various domains, including human-robot

collaboration and assistive technologies, where natural and intuitive

interaction is crucial.

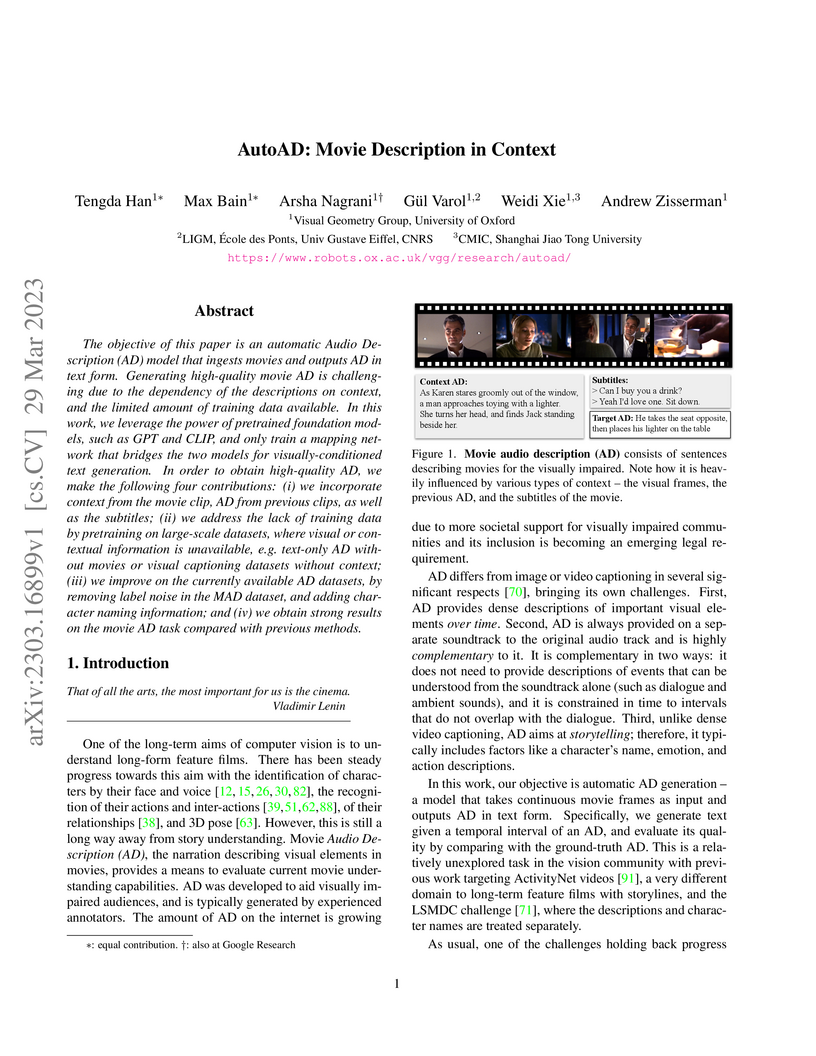

AutoAD, developed by the Visual Geometry Group at the University of Oxford, establishes a system for automatic audio description generation for movies by integrating visual, previous AD, and subtitle context. The model, leveraging frozen foundation models and novel partial-data pretraining, achieves state-of-the-art results on movie AD benchmarks, enhancing accessibility for visually impaired audiences and providing new, denoised datasets.

Recent approaches in self-supervised learning of image representations can be

categorized into different families of methods and, in particular, can be

divided into contrastive and non-contrastive approaches. While differences

between the two families have been thoroughly discussed to motivate new

approaches, we focus more on the theoretical similarities between them. By

designing contrastive and covariance based non-contrastive criteria that can be

related algebraically and shown to be equivalent under limited assumptions, we

show how close those families can be. We further study popular methods and

introduce variations of them, allowing us to relate this theoretical result to

current practices and show the influence (or lack thereof) of design choices on

downstream performance. Motivated by our equivalence result, we investigate the

low performance of SimCLR and show how it can match VICReg's with careful

hyperparameter tuning, improving significantly over known baselines. We also

challenge the popular assumption that non-contrastive methods need large output

dimensions. Our theoretical and quantitative results suggest that the numerical

gaps between contrastive and non-contrastive methods in certain regimes can be

closed given better network design choices and hyperparameter tuning. The

evidence shows that unifying different SOTA methods is an important direction

to build a better understanding of self-supervised learning.

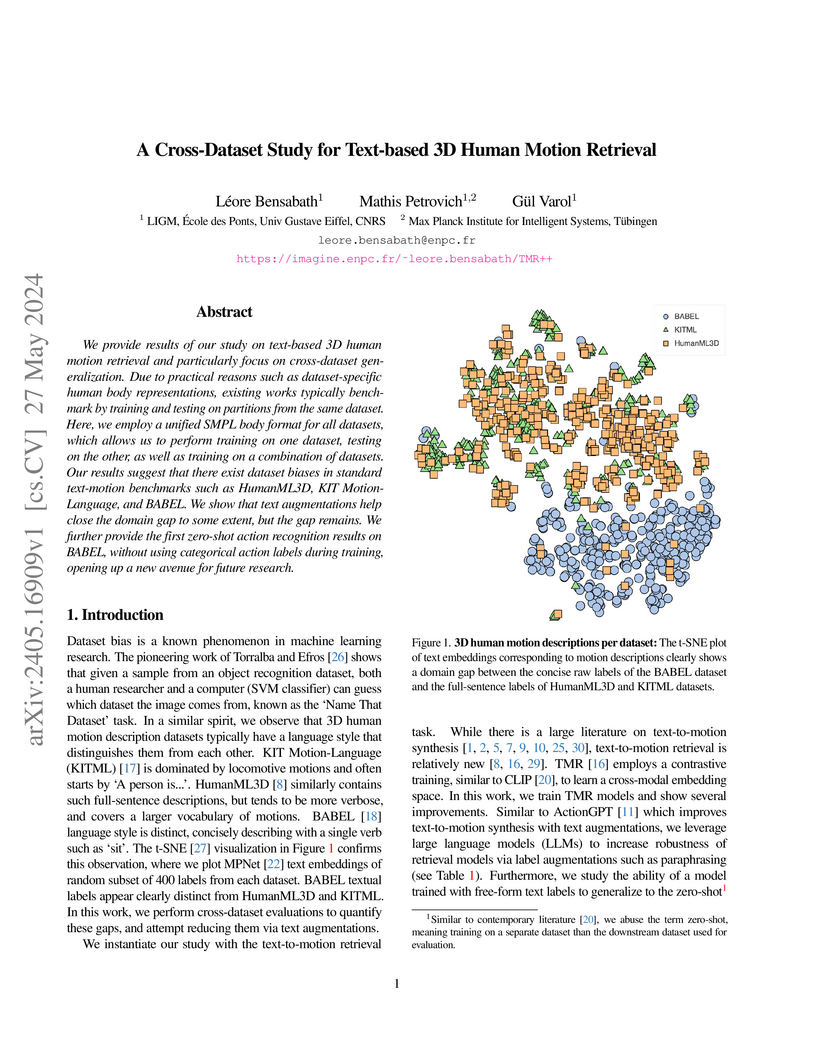

We provide results of our study on text-based 3D human motion retrieval and particularly focus on cross-dataset generalization. Due to practical reasons such as dataset-specific human body representations, existing works typically benchmarkby training and testing on partitions from the same dataset. Here, we employ a unified SMPL body format for all datasets, which allows us to perform training on one dataset, testing on the other, as well as training on a combination of datasets. Our results suggest that there exist dataset biases in standard text-motion benchmarks such as HumanML3D, KIT Motion-Language, and BABEL. We show that text augmentations help close the domain gap to some extent, but the gap remains. We further provide the first zero-shot action recognition results on BABEL, without using categorical action labels during training, opening up a new avenue for future research.

24 May 2025

We present the first global-scale database of 4.3 billion P- and S-wave picks

extracted from 1.3 PB continuous seismic data via a cloud-native workflow.

Using cloud computing services on Amazon Web Services, we launched ~145,000

containerized jobs on continuous records from 47,354 stations spanning

2002-2025, completing in under three days. Phase arrivals were identified with

a deep learning model, PhaseNet, through an open-source Python ecosystem for

deep learning, SeisBench. To visualize and gain a global understanding of these

picks, we present preliminary results about pick time series revealing

Omori-law aftershock decay, seasonal variations linked to noise levels, and

dense regional coverage that will enhance earthquake catalogs and

machine-learning datasets. We provide all picks in a publicly queryable

database, providing a powerful resource for researchers studying seismicity

around the world. This report provides insights into the database and the

underlying workflow, demonstrating the feasibility of petabyte-scale seismic

data mining on the cloud and of providing intelligent data products to the

community in an automated manner.

16 Dec 2024

Entropic Optimal Transport (EOT), also referred to as the Schrödinger problem, seeks to find a random processes with prescribed initial/final marginals and with minimal relative entropy with respect to a reference measure. The relative entropy forces the two measures to share the same support and only the drift of the controlled process can be adjusted, the diffusion being imposed by the reference measure. Therefore, at first sight, Semi-Martingale Optimal Transport (SMOT) problems (see [1]) seem out of the scope of applications of Entropic regularization techniques, which are otherwise very attractive from a computational point of view. However, when the process is observed only at discrete times, and become therefore a Markov chain, its relative entropy can remain finite even with variable diffusion coefficients, and discrete semi-martingales can be obtained as solutions of (multi-marginal) EOT this http URL a (smooth) semi-martingale, the limit of the relative entropy of its time discretizations, scaled by the time step converges to the so-called ``specific relative entropy'', a convex functional of its variance process, similar to those used in this http URL this paper we use this observation to build an entropic time discretization of continuous SMOT problems. This allows to compute discrete approximations of solutions to continuous SMOT problems by a multi-marginal Sinkhorn algorithm, without the need of solving the non-linear Hamilton-Jacobi-Bellman pde's associated to the dual problem, as done for example in [1, 2]. We prove a convergence result of the time discrete entropic problem to the continuous time problem, we propose an implementation and provide numerical experiments supporting the theoretical convergence.

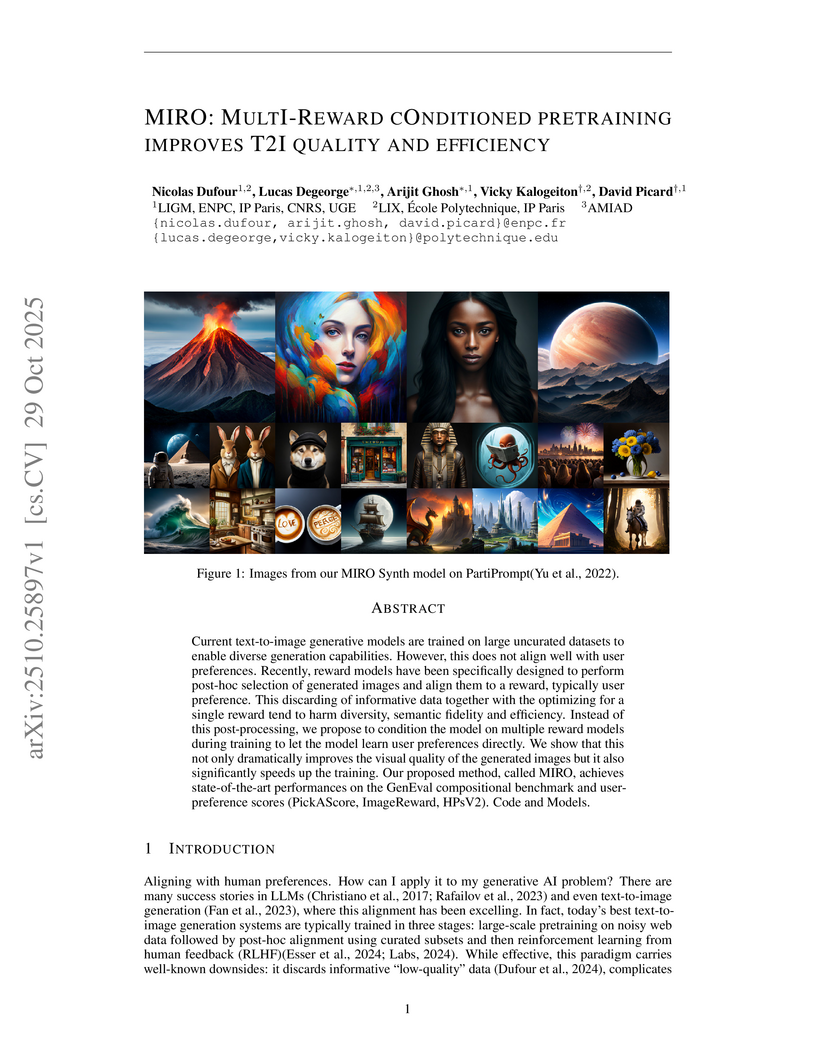

Current text-to-image generative models are trained on large uncurated datasets to enable diverse generation capabilities. However, this does not align well with user preferences. Recently, reward models have been specifically designed to perform post-hoc selection of generated images and align them to a reward, typically user preference. This discarding of informative data together with the optimizing for a single reward tend to harm diversity, semantic fidelity and efficiency. Instead of this post-processing, we propose to condition the model on multiple reward models during training to let the model learn user preferences directly. We show that this not only dramatically improves the visual quality of the generated images but it also significantly speeds up the training. Our proposed method, called MIRO, achieves state-of-the-art performances on the GenEval compositional benchmark and user-preference scores (PickAScore, ImageReward, HPSv2).

There are no more papers matching your filters at the moment.