Örebro University

04 Nov 2025

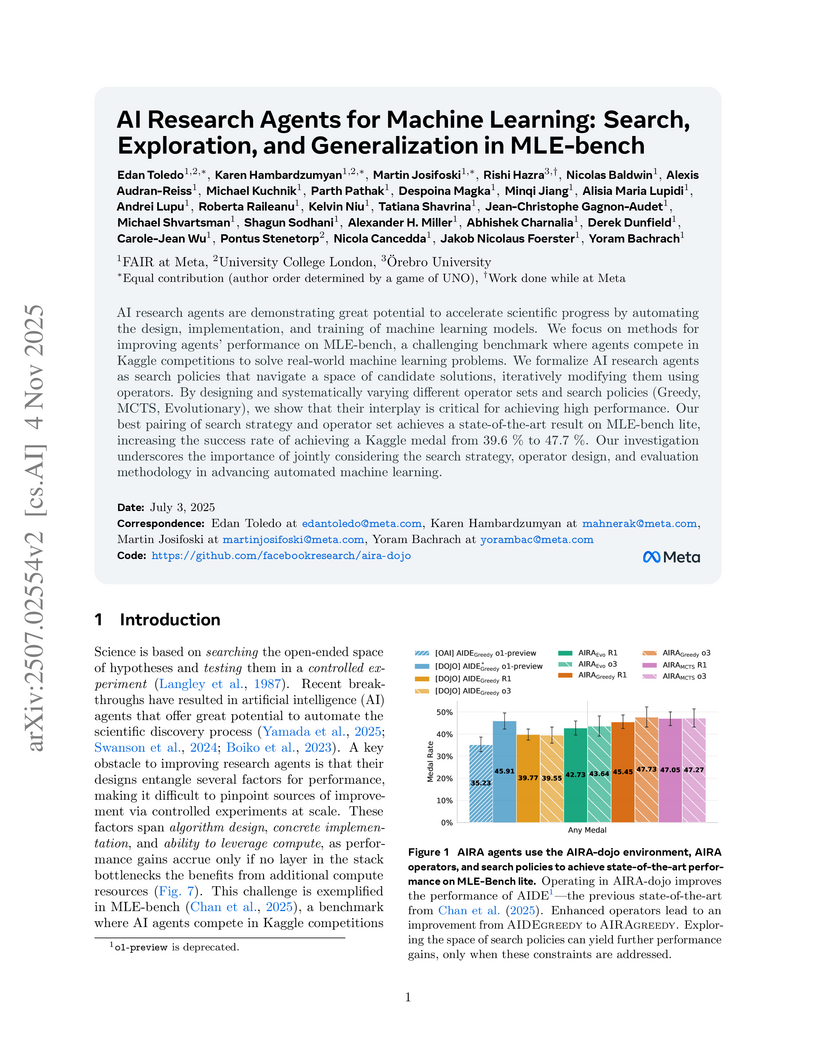

Researchers from FAIR at Meta and UCL systematically investigated AI research agents by formalizing them into search policies and operators, developing the AIRA-dojo framework, and achieving a state-of-the-art 47.7% medal rate on MLE-bench lite. Their work identifies the operator set as a key performance bottleneck and reveals a generalization gap between validation and test scores that can lead to systematic overfitting.

Researchers leverage the 3-SAT phase transition phenomenon to rigorously evaluate reasoning capabilities in large language models, revealing that while most LLMs struggle with harder instances, DeepSeek R1 demonstrates coherent search behaviors and superior performance in challenging regions through reinforcement learning-based training.

REvolve introduces an evolutionary framework that utilizes Large Language Models (LLMs) and direct human feedback to design interpretable reward functions for reinforcement learning. The system, which uses GPT-4 as an intelligent genetic operator within an island model, consistently achieves higher human-rated fitness scores compared to prior greedy LLM-based approaches and expert-designed rewards across complex tasks like autonomous driving, humanoid locomotion, and dexterous manipulation, while requiring less human data than RLHF.

01 Jan 2024

Large Language Models (LLMs) have demonstrated impressive planning abilities due to their vast "world knowledge". Yet, obtaining plans that are both feasible (grounded in affordances) and cost-effective (in plan length), remains a challenge, despite recent progress. This contrasts with heuristic planning methods that employ domain knowledge (formalized in action models such as PDDL) and heuristic search to generate feasible, optimal plans. Inspired by this, we propose to combine the power of LLMs and heuristic planning by leveraging the world knowledge of LLMs and the principles of heuristic search. Our approach, SayCanPay, employs LLMs to generate actions (Say) guided by learnable domain knowledge, that evaluates actions' feasibility (Can) and long-term reward/payoff (Pay), and heuristic search to select the best sequence of actions. Our contributions are (1) a novel framing of the LLM planning problem in the context of heuristic planning, (2) integrating grounding and cost-effective elements into the generated plans, and (3) using heuristic search over actions. Our extensive evaluations show that our model surpasses other LLM planning approaches.

11 May 2025

The first WARA Robotics Mobile Manipulation Challenge, held in December 2024

at ABB Corporate Research in V\"aster{\aa}s, Sweden, addressed the automation

of task-intensive and repetitive manual labor in laboratory environments -

specifically the transport and cleaning of glassware. Designed in collaboration

with AstraZeneca, the challenge invited academic teams to develop autonomous

robotic systems capable of navigating human-populated lab spaces and performing

complex manipulation tasks, such as loading items into industrial dishwashers.

This paper presents an overview of the challenge setup, its industrial

motivation, and the four distinct approaches proposed by the participating

teams. We summarize lessons learned from this edition and propose improvements

in design to enable a more effective second iteration to take place in 2025.

The initiative bridges an important gap in effective academia-industry

collaboration within the domain of autonomous mobile manipulation systems by

promoting the development and deployment of applied robotic solutions in

real-world laboratory contexts.

Learning representations for solutions of constrained optimization problems (COPs) with unknown cost functions is challenging, as models like (Variational) Autoencoders struggle to enforce constraints when decoding structured outputs. We propose an Inverse Optimization Latent Variable Model (IO-LVM) that learns a latent space of COP cost functions from observed solutions and reconstructs feasible outputs by solving a COP with a solver in the loop. Our approach leverages estimated gradients of a Fenchel-Young loss through a non-differentiable deterministic solver to shape the latent space. Unlike standard Inverse Optimization or Inverse Reinforcement Learning methods, which typically recover a single or context-specific cost function, IO-LVM captures a distribution over cost functions, enabling the identification of diverse solution behaviors arising from different agents or conditions not available during the training process. We validate our method on real-world datasets of ship and taxi routes, as well as paths in synthetic graphs, demonstrating its ability to reconstruct paths and cycles, predict their distributions, and yield interpretable latent representations.

Prompt tuning has emerged as a key technique for adapting large pre-trained Decision Transformers (DTs) in offline Reinforcement Learning (RL), particularly in multi-task and few-shot settings. The Prompting Decision Transformer (PDT) enables task generalization via trajectory prompts sampled uniformly from expert demonstrations -- without accounting for prompt informativeness. In this work, we propose a bandit-based prompt-tuning method that learns to construct optimal trajectory prompts from demonstration data at inference time. We devise a structured bandit architecture operating in the trajectory prompt space, achieving linear rather than combinatorial scaling with prompt size. Additionally, we show that the pre-trained PDT itself can serve as a powerful feature extractor for the bandit, enabling efficient reward modeling across various environments. We theoretically establish regret bounds and demonstrate empirically that our method consistently enhances performance across a wide range of tasks, high-dimensional environments, and out-of-distribution scenarios, outperforming existing baselines in prompt tuning.

Sequential problems are ubiquitous in AI, such as in reinforcement learning or natural language processing. State-of-the-art deep sequential models, like transformers, excel in these settings but fail to guarantee the satisfaction of constraints necessary for trustworthy deployment. In contrast, neurosymbolic AI (NeSy) provides a sound formalism to enforce constraints in deep probabilistic models but scales exponentially on sequential problems. To overcome these limitations, we introduce relational neurosymbolic Markov models (NeSy-MMs), a new class of end-to-end differentiable sequential models that integrate and provably satisfy relational logical constraints. We propose a strategy for inference and learning that scales on sequential settings, and that combines approximate Bayesian inference, automated reasoning, and gradient estimation. Our experiments show that NeSy-MMs can solve problems beyond the current state-of-the-art in neurosymbolic AI and still provide strong guarantees with respect to desired properties. Moreover, we show that our models are more interpretable and that constraints can be adapted at test time to out-of-distribution scenarios.

Neurosymbolic AI focuses on integrating learning and reasoning, in particular, on unifying logical and neural representations. Despite the existence of an alphabet soup of neurosymbolic AI systems, the field is lacking a generally accepted formal definition of what neurosymbolic models and inference really are. We introduce a formal definition for neurosymbolic AI that makes abstraction of its key ingredients. More specifically, we define neurosymbolic inference as the computation of an integral over a product of a logical and a belief function. We show that our neurosymbolic AI definition makes abstraction of key representative neurosymbolic AI systems.

Accurate and robust 3D scene reconstruction from casual, in-the-wild videos

can significantly simplify robot deployment to new environments. However,

reliable camera pose estimation and scene reconstruction from such

unconstrained videos remains an open challenge. Existing visual-only SLAM

methods perform well on benchmark datasets but struggle with real-world footage

which often exhibits uncontrolled motion including rapid rotations and pure

forward movements, textureless regions, and dynamic objects. We analyze the

limitations of current methods and introduce a robust pipeline designed to

improve 3D reconstruction from casual videos. We build upon recent deep visual

odometry methods but increase robustness in several ways. Camera intrinsics are

automatically recovered from the first few frames using structure-from-motion.

Dynamic objects and less-constrained areas are masked with a predictive model.

Additionally, we leverage monocular depth estimates to regularize bundle

adjustment, mitigating errors in low-parallax situations. Finally, we integrate

place recognition and loop closure to reduce long-term drift and refine both

intrinsics and pose estimates through global bundle adjustment. We demonstrate

large-scale contiguous 3D models from several online videos in various

environments. In contrast, baseline methods typically produce locally

inconsistent results at several points, producing separate segments or

distorted maps. In lieu of ground-truth pose data, we evaluate map consistency,

execution time and visual accuracy of re-rendered NeRF models. Our proposed

system establishes a new baseline for visual reconstruction from casual

uncontrolled videos found online, demonstrating more consistent reconstructions

over longer sequences of in-the-wild videos than previously achieved.

20 Jul 2022

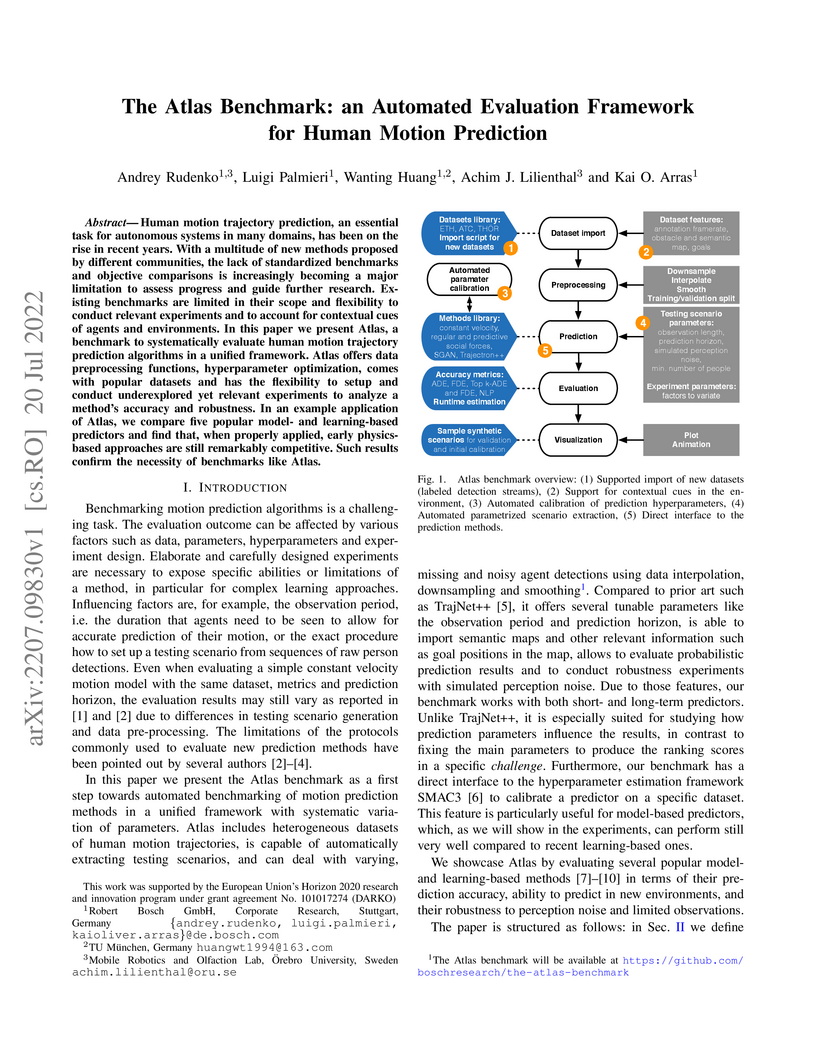

Human motion trajectory prediction, an essential task for autonomous systems in many domains, has been on the rise in recent years. With a multitude of new methods proposed by different communities, the lack of standardized benchmarks and objective comparisons is increasingly becoming a major limitation to assess progress and guide further research. Existing benchmarks are limited in their scope and flexibility to conduct relevant experiments and to account for contextual cues of agents and environments. In this paper we present Atlas, a benchmark to systematically evaluate human motion trajectory prediction algorithms in a unified framework. Atlas offers data preprocessing functions, hyperparameter optimization, comes with popular datasets and has the flexibility to setup and conduct underexplored yet relevant experiments to analyze a method's accuracy and robustness. In an example application of Atlas, we compare five popular model- and learning-based predictors and find that, when properly applied, early physics-based approaches are still remarkably competitive. Such results confirm the necessity of benchmarks like Atlas.

Shapley values have several desirable, theoretically well-supported, properties for explaining black-box model predictions. Traditionally, Shapley values are computed post-hoc, leading to additional computational cost at inference time. To overcome this, a novel method, called ViaSHAP, is proposed, that learns a function to compute Shapley values, from which the predictions can be derived directly by summation. Two approaches to implement the proposed method are explored; one based on the universal approximation theorem and the other on the Kolmogorov-Arnold representation theorem. Results from a large-scale empirical investigation are presented, showing that ViaSHAP using Kolmogorov-Arnold Networks performs on par with state-of-the-art algorithms for tabular data. It is also shown that the explanations of ViaSHAP are significantly more accurate than the popular approximator FastSHAP on both tabular data and images.

Feature attribution methods are widely used for explaining image-based predictions, as they provide feature-level insights that can be intuitively visualized. However, such explanations often vary in their robustness and may fail to faithfully reflect the reasoning of the underlying black-box model. To address these limitations, we propose a novel conformal prediction-based approach that enables users to directly control the fidelity of the generated explanations. The method identifies a subset of salient features that is sufficient to preserve the model's prediction, regardless of the information carried by the excluded features, and without demanding access to ground-truth explanations for calibration. Four conformity functions are proposed to quantify the extent to which explanations conform to the model's predictions. The approach is empirically evaluated using five explainers across six image datasets. The empirical results demonstrate that FastSHAP consistently outperforms the competing methods in terms of both fidelity and informational efficiency, the latter measured by the size of the explanation regions. Furthermore, the results reveal that conformity measures based on super-pixels are more effective than their pixel-wise counterparts.

We develop a new method for solving minimization problems on the Stiefel Manifold using damped dynamical systems. The constraints are satisfied in the limit by an additional damped dynamical system. The method is illustrated by numerical experiments and compared to a state-of-the-art conjugate gradient method.

A comprehensive survey defines a seven-dimensional conceptual framework to compare and unify Statistical Relational AI (StarAI) and Neurosymbolic AI (NeSy), highlighting their shared goal of integrating learning and reasoning. It reveals deep structural and semantic connections between probabilistic logic programs and neural network architectures, promoting cross-fertilization between the two fields.

27 Sep 2025

Large Language Models (LLMs) and Vision-Language Models (VLMs) are increasingly deployed in robotic environments but remain vulnerable to jailbreaking attacks that bypass safety mechanisms and drive unsafe or physically harmful behaviors in the real world. Data-driven defenses such as jailbreak classifiers show promise, yet they struggle to generalize in domains where specialized datasets are scarce, limiting their effectiveness in robotics and other safety-critical contexts. To address this gap, we introduce J-DAPT, a lightweight framework for multimodal jailbreak detection through attention-based fusion and domain adaptation. J-DAPT integrates textual and visual embeddings to capture both semantic intent and environmental grounding, while aligning general-purpose jailbreak datasets with domain-specific reference data. Evaluations across autonomous driving, maritime robotics, and quadruped navigation show that J-DAPT boosts detection accuracy to nearly 100% with minimal overhead. These results demonstrate that J-DAPT provides a practical defense for securing VLMs in robotic applications. Additional materials are made available at: this https URL.

The proliferation of bias and propaganda on social media is an increasingly significant concern, leading to the development of techniques for automatic detection. This article presents a multilingual corpus of 12, 000 Facebook posts fully annotated for bias and propaganda. The corpus was created as part of the FigNews 2024 Shared Task on News Media Narratives for framing the Israeli War on Gaza. It covers various events during the War from October 7, 2023 to January 31, 2024. The corpus comprises 12, 000 posts in five languages (Arabic, Hebrew, English, French, and Hindi), with 2, 400 posts for each language. The annotation process involved 10 graduate students specializing in Law. The Inter-Annotator Agreement (IAA) was used to evaluate the annotations of the corpus, with an average IAA of 80.8% for bias and 70.15% for propaganda annotations. Our team was ranked among the bestperforming teams in both Bias and Propaganda subtasks. The corpus is open-source and available at this https URL

22 Oct 2024

This study characterizes the reasoning abilities of Large Language Models by evaluating them on 3-Satisfiability (3-SAT) problems, a foundational NP-complete task. It finds that while LLMs like GPT-4 show apparent reasoning competence in easy problem regions, their accuracy drops to around 10% in inherently hard instances, yet they achieve nearly 100% accuracy when used as translators for external symbolic solvers.

This systematic literature review synthesizes current knowledge on Large Language Models (LLMs) in code security, categorizing vulnerabilities introduced by LLM-generated code and assessing LLMs' effectiveness in detecting and fixing code vulnerabilities. The study also examines how prompting techniques influence performance and analyzes the impact of data poisoning attacks on LLMs' code security capabilities, identifying key research gaps.

Safe Reinforcement learning (Safe RL) aims at learning optimal policies while staying safe. A popular solution to Safe RL is shielding, which uses a logical safety specification to prevent an RL agent from taking unsafe actions. However, traditional shielding techniques are difficult to integrate with continuous, end-to-end deep RL methods. To this end, we introduce Probabilistic Logic Policy Gradient (PLPG). PLPG is a model-based Safe RL technique that uses probabilistic logic programming to model logical safety constraints as differentiable functions. Therefore, PLPG can be seamlessly applied to any policy gradient algorithm while still providing the same convergence guarantees. In our experiments, we show that PLPG learns safer and more rewarding policies compared to other state-of-the-art shielding techniques.

There are no more papers matching your filters at the moment.