Hubei LuoJia Laboratory

Researchers at Tsinghua University and affiliated institutes developed "General Flow," a language-conditioned 3D flow prediction model trained on large-scale human RGBD video datasets, to enable scalable robot learning. The framework achieves an 81% average success rate in zero-shot human-to-robot skill transfer across 18 diverse real-world manipulation tasks.

Existing All-in-One image restoration methods often fail to perceive

degradation types and severity levels simultaneously, overlooking the

importance of fine-grained quality perception. Moreover, these methods often

utilize highly customized backbones, which hinder their adaptability and

integration into more advanced restoration networks. To address these

limitations, we propose Perceive-IR, a novel backbone-agnostic All-in-One image

restoration framework designed for fine-grained quality control across various

degradation types and severity levels. Its modular structure allows core

components to function independently of specific backbones, enabling seamless

integration into advanced restoration models without significant modifications.

Specifically, Perceive-IR operates in two key stages: 1) multi-level

quality-driven prompt learning stage, where a fine-grained quality perceiver is

meticulously trained to discern three tier quality levels by optimizing the

alignment between prompts and images within the CLIP perception space. This

stage ensures a nuanced understanding of image quality, laying the groundwork

for subsequent restoration; 2) restoration stage, where the quality perceiver

is seamlessly integrated with a difficulty-adaptive perceptual loss, forming a

quality-aware learning strategy. This strategy not only dynamically

differentiates sample learning difficulty but also achieves fine-grained

quality control by driving the restored image toward the ground truth while

pulling it away from both low- and medium-quality samples.

27 Aug 2025

Accurate and reliable navigation is crucial for autonomous unmanned ground vehicle (UGV). However, current UGV datasets fall short in meeting the demands for advancing navigation and mapping techniques due to limitations in sensor configuration, time synchronization, ground truth, and scenario diversity. To address these challenges, we present i2Nav-Robot, a large-scale dataset designed for multi-sensor fusion navigation and mapping in indoor-outdoor environments. We integrate multi-modal sensors, including the newest front-view and 360-degree solid-state LiDARs, 4-dimensional (4D) radar, stereo cameras, odometer, global navigation satellite system (GNSS) receiver, and inertial measurement units (IMU) on an omnidirectional wheeled robot. Accurate timestamps are obtained through both online hardware synchronization and offline calibration for all sensors. The dataset includes ten larger-scale sequences covering diverse UGV operating scenarios, such as outdoor streets, and indoor parking lots, with a total length of about 17060 meters. High-frequency ground truth, with centimeter-level accuracy for position, is derived from post-processing integrated navigation methods using a navigation-grade IMU. The proposed i2Nav-Robot dataset is evaluated by more than ten open-sourced multi-sensor fusion systems, and it has proven to have superior data quality.

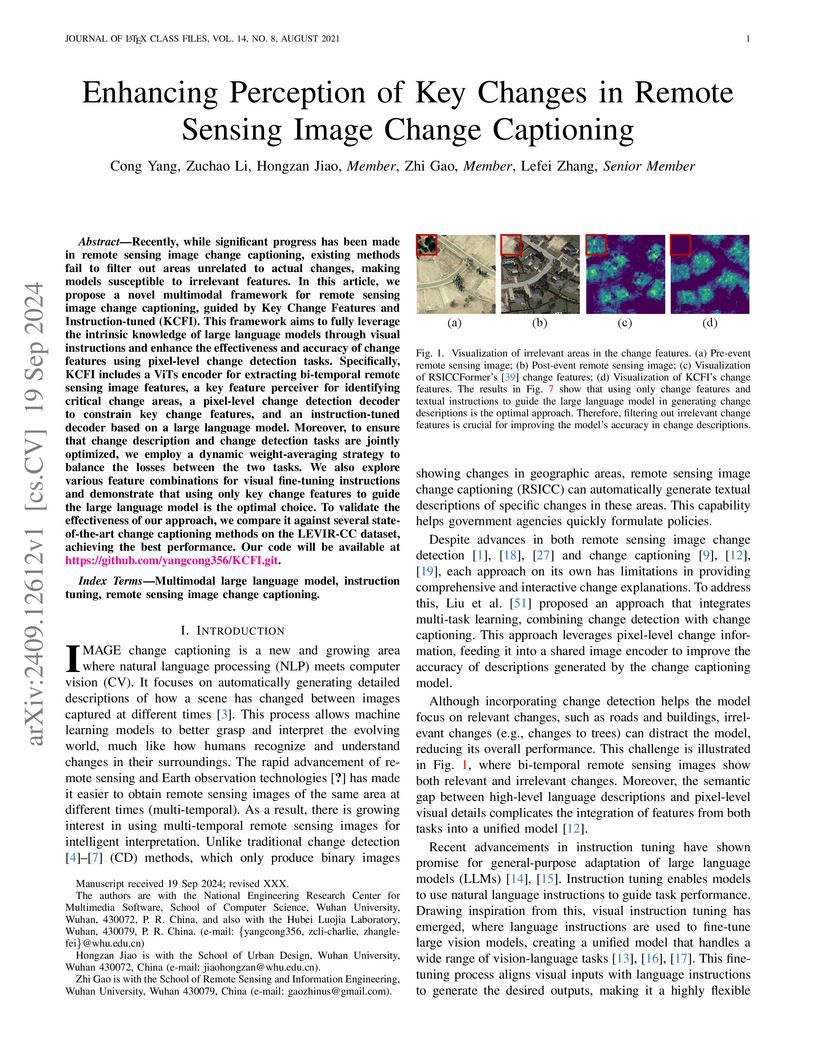

Recently, while significant progress has been made in remote sensing image change captioning, existing methods fail to filter out areas unrelated to actual changes, making models susceptible to irrelevant features. In this article, we propose a novel multimodal framework for remote sensing image change captioning, guided by Key Change Features and Instruction-tuned (KCFI). This framework aims to fully leverage the intrinsic knowledge of large language models through visual instructions and enhance the effectiveness and accuracy of change features using pixel-level change detection tasks. Specifically, KCFI includes a ViTs encoder for extracting bi-temporal remote sensing image features, a key feature perceiver for identifying critical change areas, a pixel-level change detection decoder to constrain key change features, and an instruction-tuned decoder based on a large language model. Moreover, to ensure that change description and change detection tasks are jointly optimized, we employ a dynamic weight-averaging strategy to balance the losses between the two tasks. We also explore various feature combinations for visual fine-tuning instructions and demonstrate that using only key change features to guide the large language model is the optimal choice. To validate the effectiveness of our approach, we compare it against several state-of-the-art change captioning methods on the LEVIR-CC dataset, achieving the best performance. Our code will be available at this https URL.

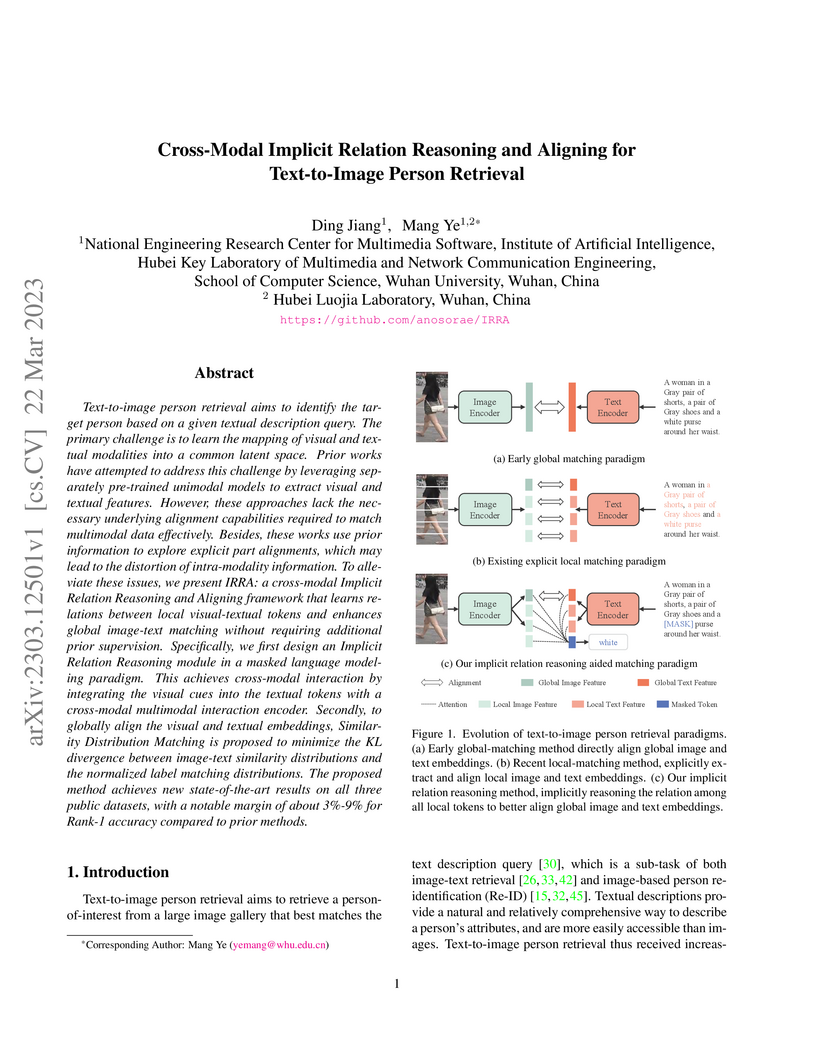

Text-to-image person retrieval aims to identify the target person based on a

given textual description query. The primary challenge is to learn the mapping

of visual and textual modalities into a common latent space. Prior works have

attempted to address this challenge by leveraging separately pre-trained

unimodal models to extract visual and textual features. However, these

approaches lack the necessary underlying alignment capabilities required to

match multimodal data effectively. Besides, these works use prior information

to explore explicit part alignments, which may lead to the distortion of

intra-modality information. To alleviate these issues, we present IRRA: a

cross-modal Implicit Relation Reasoning and Aligning framework that learns

relations between local visual-textual tokens and enhances global image-text

matching without requiring additional prior supervision. Specifically, we first

design an Implicit Relation Reasoning module in a masked language modeling

paradigm. This achieves cross-modal interaction by integrating the visual cues

into the textual tokens with a cross-modal multimodal interaction encoder.

Secondly, to globally align the visual and textual embeddings, Similarity

Distribution Matching is proposed to minimize the KL divergence between

image-text similarity distributions and the normalized label matching

distributions. The proposed method achieves new state-of-the-art results on all

three public datasets, with a notable margin of about 3%-9% for Rank-1 accuracy

compared to prior methods.

20 Oct 2025

With the rapid growth of bike sharing and the increasing diversity of cycling applications, accurate bicycle localization has become essential. traditional GNSS-based methods suffer from multipath effects, while existing inertial navigation approaches rely on precise modeling and show limited robustness. Tight Learned Inertial Odometry (TLIO) achieves low position drift by combining raw IMU data with predicted displacements by neural networks, but its high computational cost restricts deployment on mobile devices. To overcome this, we extend TLIO to bicycle localization and introduce an improved Mixture-of Experts (MoE) model that reduces both training and inference costs. Experiments show that, compared to the state-of-the-art LLIO framework, our method achieves comparable accuracy while reducing parameters by 64.7% and computational cost by 81.8%.

Transformer has achieved satisfactory results in the field of hyperspectral

image (HSI) classification. However, existing Transformer models face two key

challenges when dealing with HSI scenes characterized by diverse land cover

types and rich spectral information: (1) A fixed receptive field overlooks the

effective contextual scales required by various HSI objects; (2) invalid

self-attention features in context fusion affect model performance. To address

these limitations, we propose a novel Dual Selective Fusion Transformer Network

(DSFormer) for HSI classification. DSFormer achieves joint spatial and spectral

contextual modeling by flexibly selecting and fusing features across different

receptive fields, effectively reducing unnecessary information interference by

focusing on the most relevant spatial-spectral tokens. Specifically, we design

a Kernel Selective Fusion Transformer Block (KSFTB) to learn an optimal

receptive field by adaptively fusing spatial and spectral features across

different scales, enhancing the model's ability to accurately identify diverse

HSI objects. Additionally, we introduce a Token Selective Fusion Transformer

Block (TSFTB), which strategically selects and combines essential tokens during

the spatial-spectral self-attention fusion process to capture the most crucial

contexts. Extensive experiments conducted on four benchmark HSI datasets

demonstrate that the proposed DSFormer significantly improves land cover

classification accuracy, outperforming existing state-of-the-art methods.

Specifically, DSFormer achieves overall accuracies of 96.59%, 97.66%, 95.17%,

and 94.59% in the Pavia University, Houston, Indian Pines, and Whu-HongHu

datasets, respectively, reflecting improvements of 3.19%, 1.14%, 0.91%, and

2.80% over the previous model. The code will be available online at

this https URL

Existing underwater image restoration (UIR) methods generally only handle color distortion or jointly address color and haze issues, but they often overlook the more complex degradations that can occur in underwater scenes. To address this limitation, we propose a Universal Underwater Image Restoration method, termed as UniUIR, considering the complex scenario of real-world underwater mixed distortions as an all-in-one manner. To decouple degradation-specific issues and explore the inter-correlations among various degradations in UIR task, we designed the Mamba Mixture-of-Experts module. This module enables each expert to identify distinct types of degradation and collaboratively extract task-specific priors while maintaining global feature representation based on linear complexity. Building upon this foundation, to enhance degradation representation and address the task conflicts that arise when handling multiple types of degradation, we introduce the spatial-frequency prior generator. This module extracts degradation prior information in both spatial and frequency domains, and adaptively selects the most appropriate task-specific prompts based on image content, thereby improving the accuracy of image restoration. Finally, to more effectively address complex, region-dependent distortions in UIR task, we incorporate depth information derived from a large-scale pre-trained depth prediction model, thereby enabling the network to perceive and leverage depth variations across different image regions to handle localized degradation. Extensive experiments demonstrate that UniUIR can produce more attractive results across qualitative and quantitative comparisons, and shows strong generalization than state-of-the-art methods.

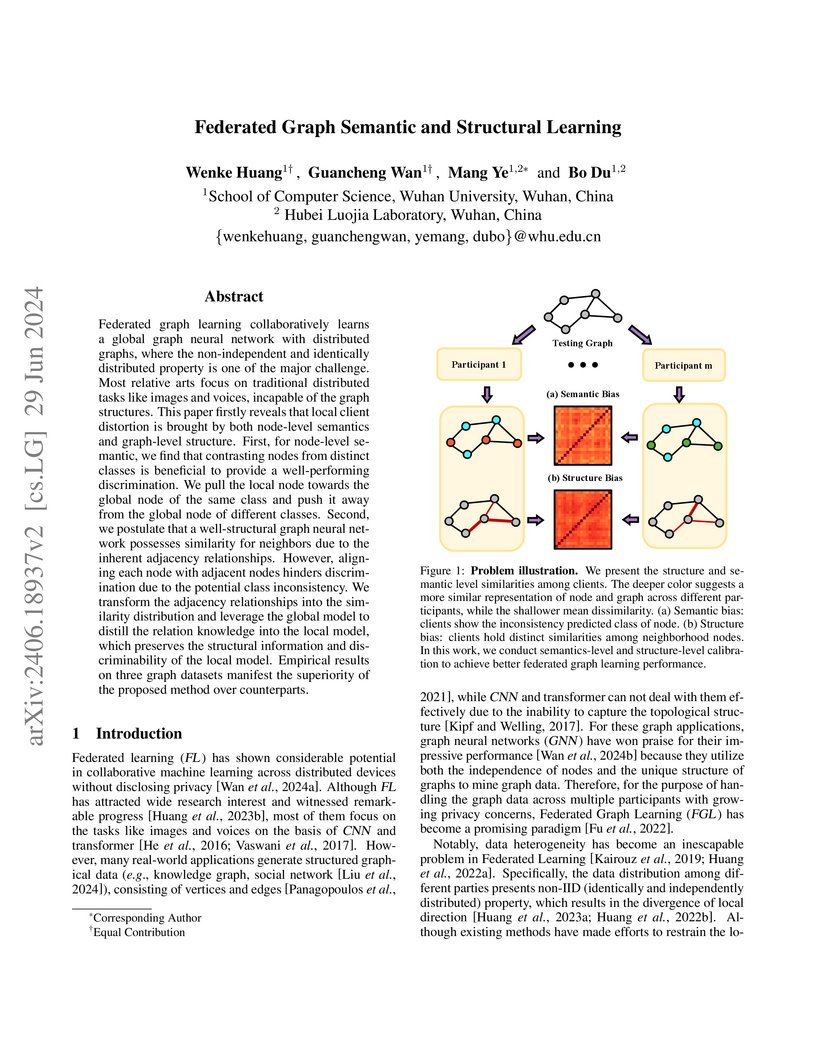

Federated graph learning collaboratively learns a global graph neural network

with distributed graphs, where the non-independent and identically distributed

property is one of the major challenges. Most relative arts focus on

traditional distributed tasks like images and voices, incapable of graph

structures. This paper firstly reveals that local client distortion is brought

by both node-level semantics and graph-level structure. First, for node-level

semantics, we find that contrasting nodes from distinct classes is beneficial

to provide a well-performing discrimination. We pull the local node towards the

global node of the same class and push it away from the global node of

different classes. Second, we postulate that a well-structural graph neural

network possesses similarity for neighbors due to the inherent adjacency

relationships. However, aligning each node with adjacent nodes hinders

discrimination due to the potential class inconsistency. We transform the

adjacency relationships into the similarity distribution and leverage the

global model to distill the relation knowledge into the local model, which

preserves the structural information and discriminability of the local model.

Empirical results on three graph datasets manifest the superiority of the

proposed method over its counterparts.

Wildlife ReID involves utilizing visual technology to identify specific individuals of wild animals in different scenarios, holding significant importance for wildlife conservation, ecological research, and environmental monitoring. Existing wildlife ReID methods are predominantly tailored to specific species, exhibiting limited applicability. Although some approaches leverage extensively studied person ReID techniques, they struggle to address the unique challenges posed by wildlife. Therefore, in this paper, we present a unified, multi-species general framework for wildlife ReID. Given that high-frequency information is a consistent representation of unique features in various species, significantly aiding in identifying contours and details such as fur textures, we propose the Adaptive High-Frequency Transformer model with the goal of enhancing high-frequency information learning. To mitigate the inevitable high-frequency interference in the wilderness environment, we introduce an object-aware high-frequency selection strategy to adaptively capture more valuable high-frequency components. Notably, we unify the experimental settings of multiple wildlife datasets for ReID, achieving superior performance over state-of-the-art ReID methods. In domain generalization scenarios, our approach demonstrates robust generalization to unknown species.

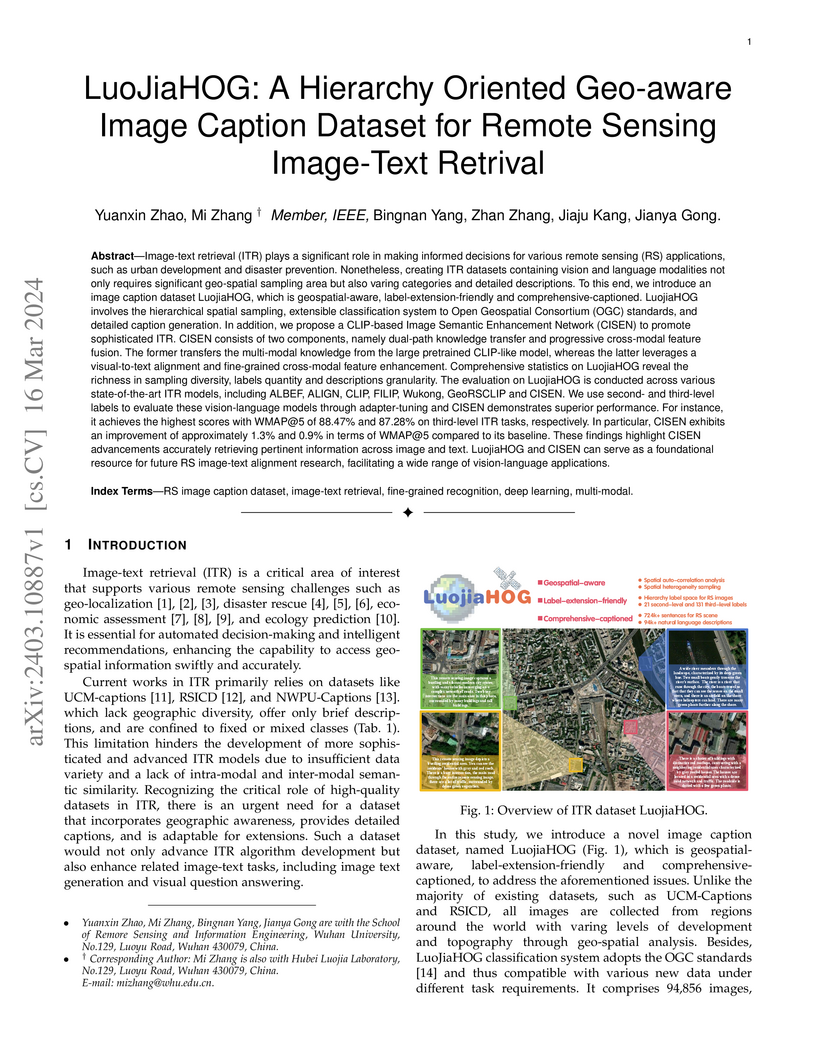

Image-text retrieval (ITR) plays a significant role in making informed

decisions for various remote sensing (RS) applications. Nonetheless, creating

ITR datasets containing vision and language modalities not only requires

significant geo-spatial sampling area but also varing categories and detailed

descriptions. To this end, we introduce an image caption dataset LuojiaHOG,

which is geospatial-aware, label-extension-friendly and

comprehensive-captioned. LuojiaHOG involves the hierarchical spatial sampling,

extensible classification system to Open Geospatial Consortium (OGC) standards,

and detailed caption generation. In addition, we propose a CLIP-based Image

Semantic Enhancement Network (CISEN) to promote sophisticated ITR. CISEN

consists of two components, namely dual-path knowledge transfer and progressive

cross-modal feature fusion. Comprehensive statistics on LuojiaHOG reveal the

richness in sampling diversity, labels quantity and descriptions granularity.

The evaluation on LuojiaHOG is conducted across various state-of-the-art ITR

models, including ALBEF, ALIGN, CLIP, FILIP, Wukong, GeoRSCLIP and CISEN. We

use second- and third-level labels to evaluate these vision-language models

through adapter-tuning and CISEN demonstrates superior performance. For

instance, it achieves the highest scores with WMAP@5 of 88.47\% and 87.28\% on

third-level ITR tasks, respectively. In particular, CISEN exhibits an

improvement of approximately 1.3\% and 0.9\% in terms of WMAP@5 compared to its

baseline. These findings highlight CISEN advancements accurately retrieving

pertinent information across image and text. LuojiaHOG and CISEN can serve as a

foundational resource for future RS image-text alignment research, facilitating

a wide range of vision-language applications.

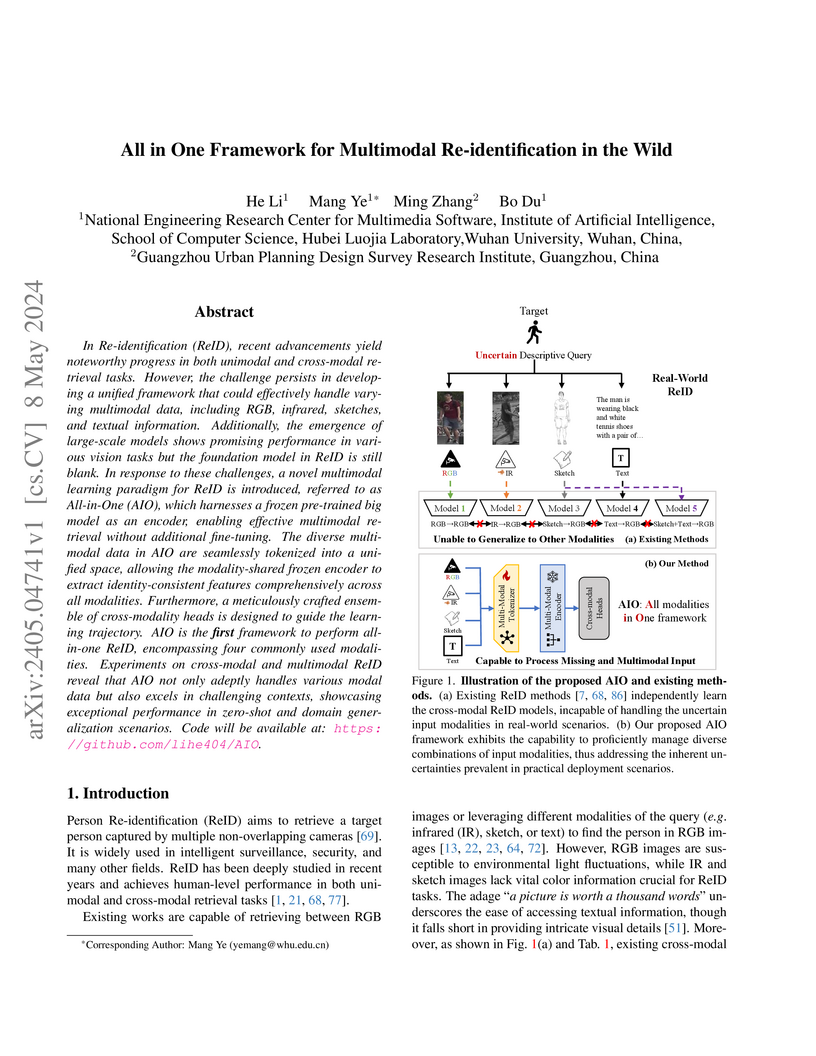

Researchers from Wuhan University developed the All-in-One (AIO) framework, the first unified system capable of simultaneously performing person re-identification across RGB, infrared, sketch, and text modalities. It leverages a frozen large-scale pre-trained foundation model to achieve competitive zero-shot performance in diverse real-world scenarios.

Neural speech coding is a rapidly developing topic, where state-of-the-art

approaches now exhibit superior compression performance than conventional

methods. Despite significant progress, existing methods still have limitations

in preserving and reconstructing fine details for optimal reconstruction,

especially at low bitrates. In this study, we introduce SuperCodec, a neural

speech codec that achieves state-of-the-art performance at low bitrates. It

employs a novel back projection method with selective feature fusion for

augmented representation. Specifically, we propose to use Selective Up-sampling

Back Projection (SUBP) and Selective Down-sampling Back Projection (SDBP)

modules to replace the standard up- and down-sampling layers at the encoder and

decoder, respectively. Experimental results show that our method outperforms

the existing neural speech codecs operating at various bitrates. Specifically,

our proposed method can achieve higher quality reconstructed speech at 1 kbps

than Lyra V2 at 3.2 kbps and Encodec at 6 kbps.

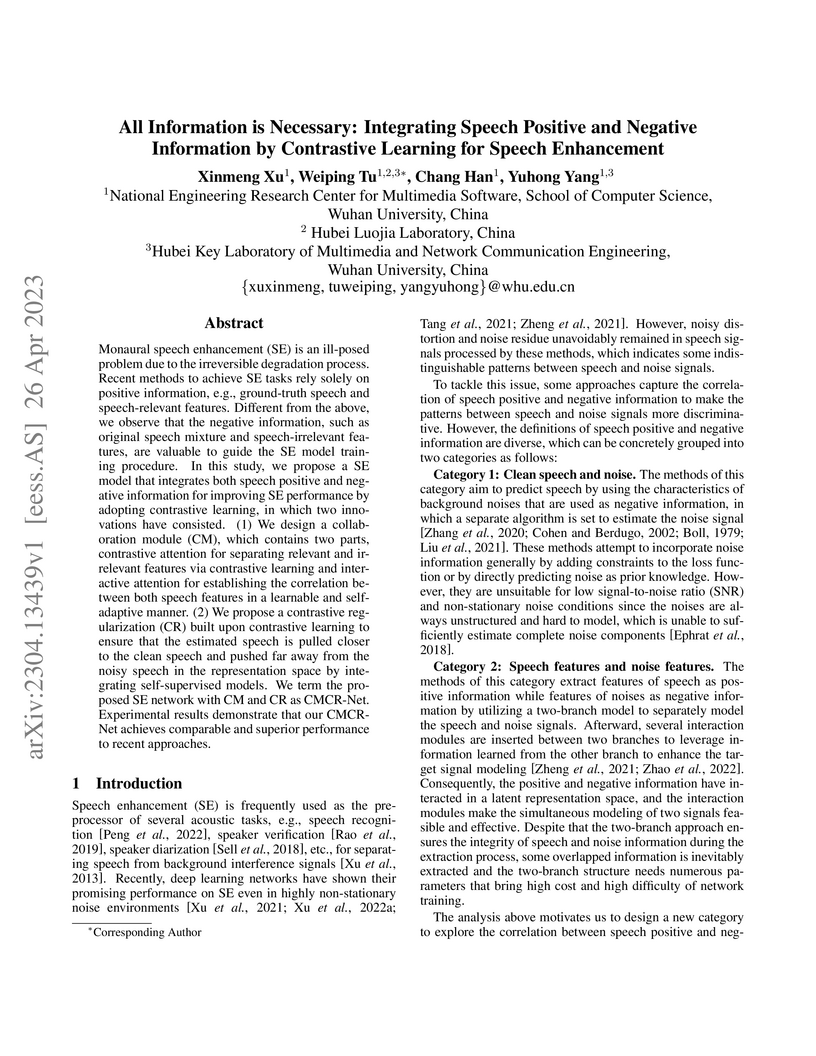

Monaural speech enhancement (SE) is an ill-posed problem due to the

irreversible degradation process. Recent methods to achieve SE tasks rely

solely on positive information, e.g., ground-truth speech and speech-relevant

features. Different from the above, we observe that the negative information,

such as original speech mixture and speech-irrelevant features, are valuable to

guide the SE model training procedure. In this study, we propose a SE model

that integrates both speech positive and negative information for improving SE

performance by adopting contrastive learning, in which two innovations have

consisted. (1) We design a collaboration module (CM), which contains two parts,

contrastive attention for separating relevant and irrelevant features via

contrastive learning and interactive attention for establishing the correlation

between both speech features in a learnable and self-adaptive manner. (2) We

propose a contrastive regularization (CR) built upon contrastive learning to

ensure that the estimated speech is pulled closer to the clean speech and

pushed far away from the noisy speech in the representation space by

integrating self-supervised models. We term the proposed SE network with CM and

CR as CMCR-Net. Experimental results demonstrate that our CMCR-Net achieves

comparable and superior performance to recent approaches.

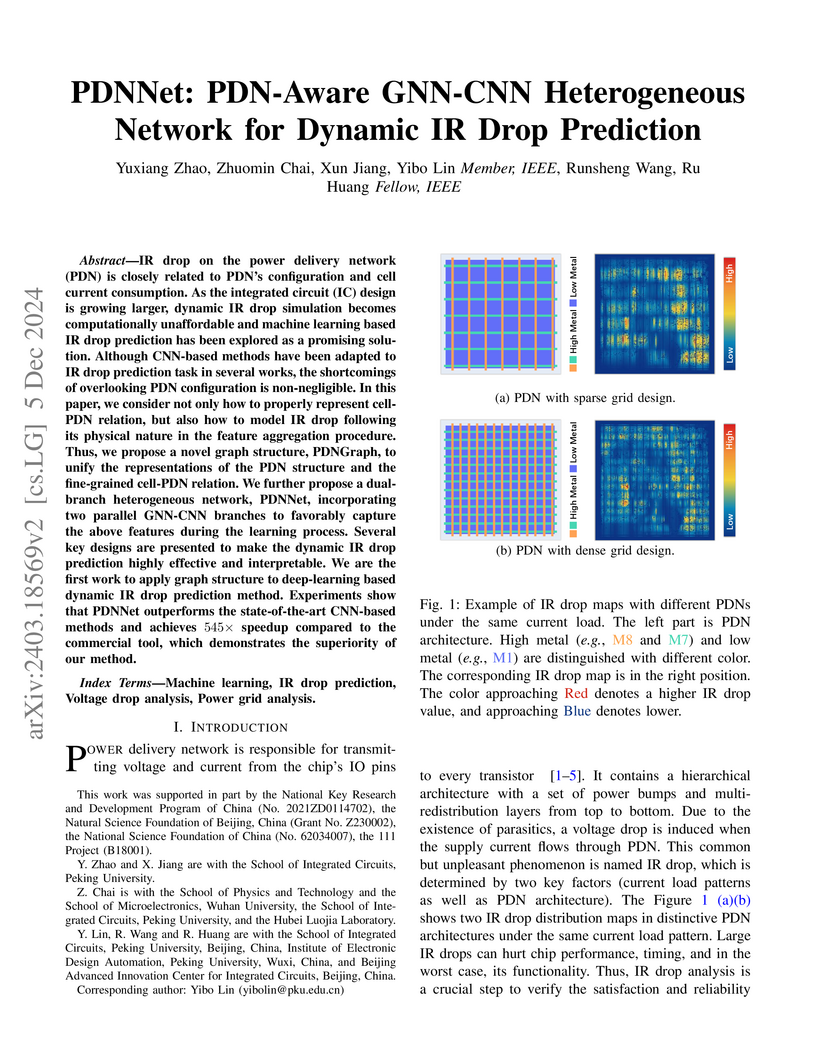

Peking University researchers developed PDNNet, a heterogeneous Graph Neural Network-Convolutional Neural Network (GNN-CNN) architecture for dynamic IR drop prediction in integrated circuits. The approach explicitly models the Power Delivery Network (PDN) using a novel directed graph structure, achieving a 24.3% reduction in prediction error (NMAE of 0.028) and a 545x speedup compared to commercial simulation tools.

05 Jul 2023

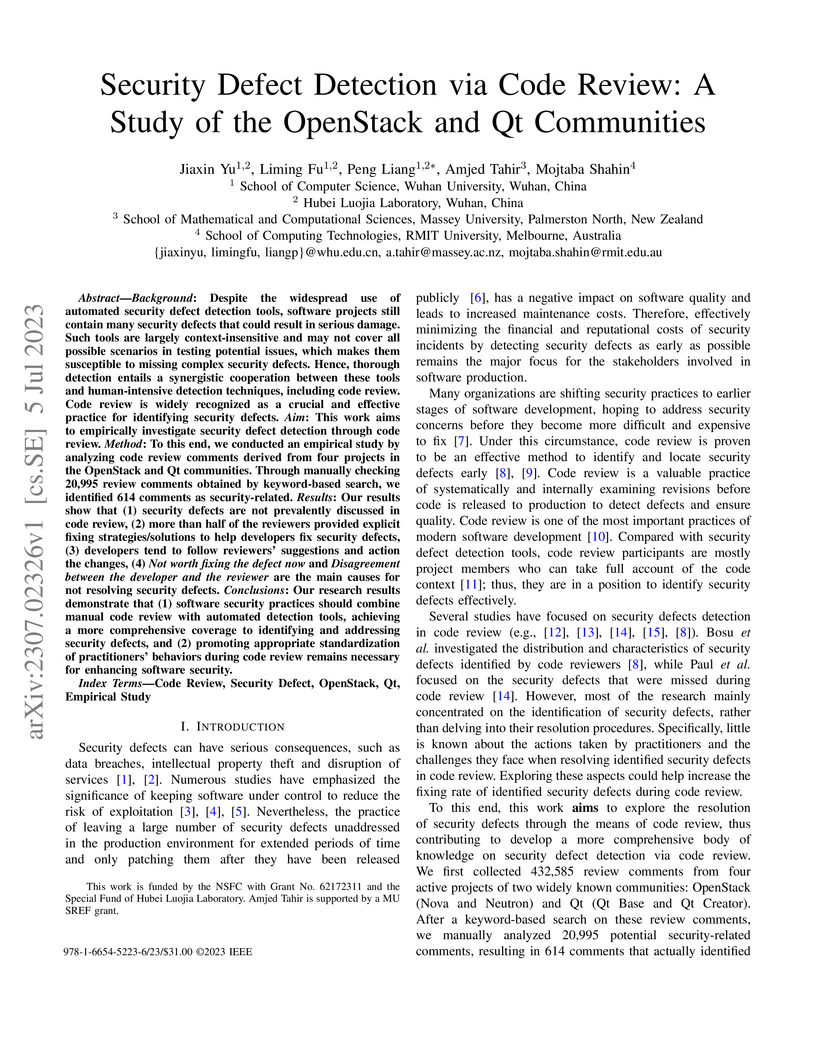

Background: Despite the widespread use of automated security defect detection tools, software projects still contain many security defects that could result in serious damage. Such tools are largely context-insensitive and may not cover all possible scenarios in testing potential issues, which makes them susceptible to missing complex security defects. Hence, thorough detection entails a synergistic cooperation between these tools and human-intensive detection techniques, including code review. Code review is widely recognized as a crucial and effective practice for identifying security defects. Aim: This work aims to empirically investigate security defect detection through code review. Method: To this end, we conducted an empirical study by analyzing code review comments derived from four projects in the OpenStack and Qt communities. Through manually checking 20,995 review comments obtained by keyword-based search, we identified 614 comments as security-related. Results: Our results show that (1) security defects are not prevalently discussed in code review, (2) more than half of the reviewers provided explicit fixing strategies/solutions to help developers fix security defects, (3) developers tend to follow reviewers' suggestions and action the changes, (4) Not worth fixing the defect now and Disagreement between the developer and the reviewer are the main causes for not resolving security defects. Conclusions: Our research results demonstrate that (1) software security practices should combine manual code review with automated detection tools, achieving a more comprehensive coverage to identifying and addressing security defects, and (2) promoting appropriate standardization of practitioners' behaviors during code review remains necessary for enhancing software security.

This paper outlines PracAPR, a vision for an interactive, IDE-integrated automated program repair system designed to assist developers in debugging without relying on comprehensive test suites or frequent program re-execution. It aims to provide rapid, effective repair suggestions for both local and complex multi-location bugs.

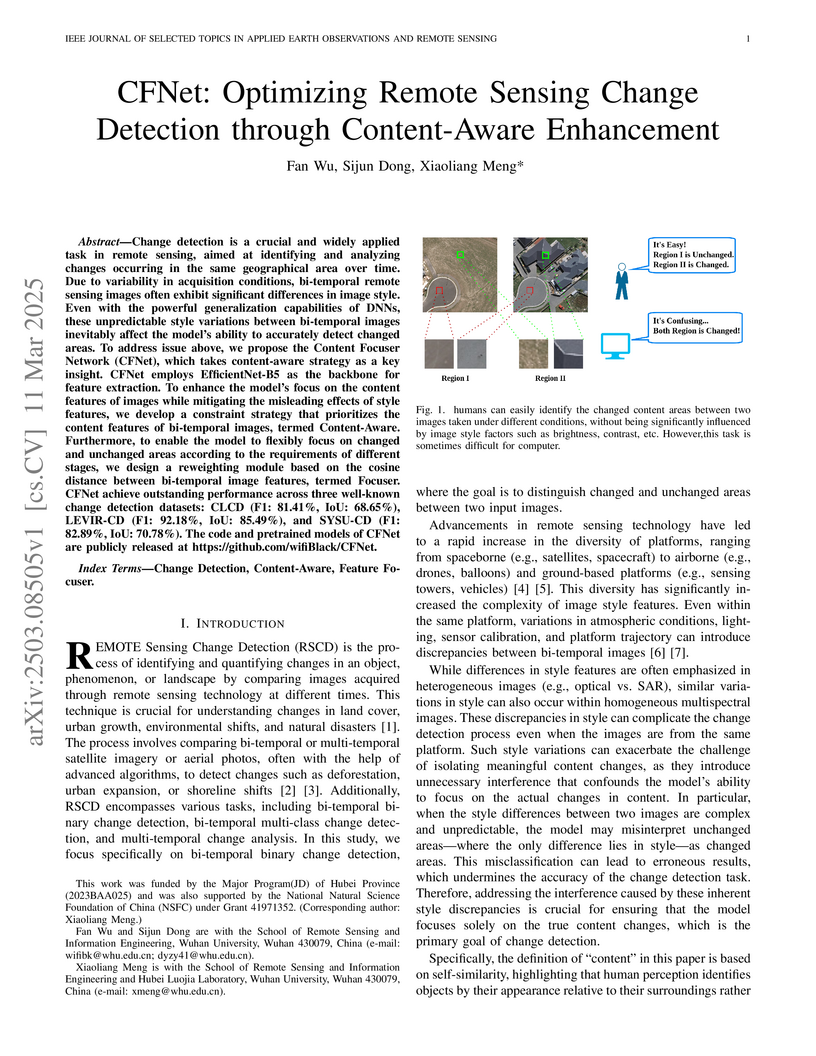

Change detection is a crucial and widely applied task in remote sensing,

aimed at identifying and analyzing changes occurring in the same geographical

area over time. Due to variability in acquisition conditions, bi-temporal

remote sensing images often exhibit significant differences in image style.

Even with the powerful generalization capabilities of DNNs, these unpredictable

style variations between bi-temporal images inevitably affect model's ability

to accurately detect changed areas. To address issue above, we propose the

Content Focuser Network (CFNet), which takes content-aware strategy as a key

insight. CFNet employs EfficientNet-B5 as the backbone for feature extraction.

To enhance the model's focus on the content features of images while mitigating

the misleading effects of style features, we develop a constraint strategy that

prioritizes the content features of bi-temporal images, termed Content-Aware.

Furthermore, to enable the model to flexibly focus on changed and unchanged

areas according to the requirements of different stages, we design a

reweighting module based on the cosine distance between bi-temporal image

features, termed Focuser. CFNet achieve outstanding performance across three

well-known change detection datasets: CLCD (F1: 81.41%, IoU: 68.65%), LEVIR-CD

(F1: 92.18%, IoU: 85.49%), and SYSU-CD (F1: 82.89%, IoU: 70.78%). The code and

pretrained models of CFNet are publicly released at

this https URL

High-quality image segmentation is fundamental to pixel-level geospatial analysis in remote sensing, necessitating robust segmentation quality assessment (SQA), particularly in unsupervised settings lacking ground truth. Although recent deep learning (DL) based unsupervised SQA methods show potential, they often suffer from coarse evaluation granularity, incomplete assessments, and poor transferability. To overcome these limitations, this paper introduces Panoramic Quality Mapping (PQM) as a new paradigm for comprehensive, pixel-wise SQA, and presents SegAssess, a novel deep learning framework realizing this approach. SegAssess distinctively formulates SQA as a fine-grained, four-class panoramic segmentation task, classifying pixels within a segmentation mask under evaluation into true positive (TP), false positive (FP), true negative (TN), and false negative (FN) categories, thereby generating a complete quality map. Leveraging an enhanced Segment Anything Model (SAM) architecture, SegAssess uniquely employs the input mask as a prompt for effective feature integration via cross-attention. Key innovations include an Edge Guided Compaction (EGC) branch with an Aggregated Semantic Filter (ASF) module to refine predictions near challenging object edges, and an Augmented Mixup Sampling (AMS) training strategy integrating multi-source masks to significantly boost cross-domain robustness and zero-shot transferability. Comprehensive experiments across 32 datasets derived from 6 sources demonstrate that SegAssess achieves state-of-the-art (SOTA) performance and exhibits remarkable zero-shot transferability to unseen masks, establishing PQM via SegAssess as a robust and transferable solution for unsupervised SQA. The code is available at this https URL.

11 Aug 2024

The LiDAR-inertial odometry (LIO) and the ultra-wideband (UWB) have been integrated together to achieve driftless positioning in global navigation satellite system (GNSS)-denied environments. However, the UWB may be affected by systematic range errors (such as the clock drift and the antenna phase center offset) and non-line-of-sight (NLOS) signals, resulting in reduced robustness. In this study, we propose a UWB-LiDAR-inertial estimator (MR-ULINS) that tightly integrates the UWB range, LiDAR frame-to-frame, and IMU measurements within the multi-state constraint Kalman filter (MSCKF) framework. The systematic range errors are precisely modeled to be estimated and compensated online. Besides, we propose a multi-epoch outlier rejection algorithm for UWB NLOS by utilizing the relative accuracy of the LIO. Specifically, the relative trajectory of the LIO is employed to verify the consistency of all range measurements within the sliding window. Extensive experiment results demonstrate that MR-ULINS achieves a positioning accuracy of around 0.1 m in complex indoor environments with severe NLOS interference. Ablation experiments show that the online estimation and multi-epoch outlier rejection can effectively improve the positioning accuracy. Besides, MR-ULINS maintains high accuracy and robustness in LiDAR-degenerated scenes and UWB-challenging conditions with spare base stations.

There are no more papers matching your filters at the moment.