East China University of Science and Technology

SE-GUI is a reinforcement learning framework that enhances visual grounding for GUI agents using a self-evolutionary mechanism and dense point rewards. It achieves 47.3% accuracy on the ScreenSpot-Pro benchmark, outperforming larger models while utilizing only 3,018 high-quality training samples.

RoMa introduces a robust dense feature matching model that combines frozen DINOv2 features for coarse matching with specialized ConvNet features for fine localization, alongside a Transformer decoder and a scale-aware loss formulation. The approach achieves state-of-the-art performance across multiple benchmarks, including a 36% improvement in mean Average Accuracy on the WxBS benchmark for robustness.

The research introduces 3DEditVerse, the largest paired 3D editing dataset, and 3DEditFormer, a structure-preserving conditional transformer, to enable precise and consistent 3D edits from text prompts without requiring manual 3D masks. The approach achieves state-of-the-art performance, demonstrating a 13% improvement in 3D metrics over prior methods dependent on ground-truth masks.

Researchers from Fudan University and collaborators developed CIF, a few-shot multimodal industrial anomaly detection method that leverages hypergraphs to extract structural commonality from limited normal training data. CIF significantly improved image-level AUROC on MVTec 3D-AD by 20.2% for 1-shot training-free settings and achieved the highest I-AUROC on the Eyecandies dataset, demonstrating enhanced performance and reduced false positives in anomaly localization.

KungfuBot enables humanoid robots to learn and execute highly-dynamic human skills like martial arts and dancing by integrating a physics-based motion processing pipeline with an adaptive motion tracking mechanism. This approach allows zero-shot transfer to real robots, demonstrating superior tracking performance with a global mean per body position error of 53.25mm on easy motions, and robustly executing complex maneuvers on a Unitree G1 robot.

23 Oct 2025

We present DexCanvas, a large-scale hybrid real-synthetic human manipulation dataset containing 7,000 hours of dexterous hand-object interactions seeded from 70 hours of real human demonstrations, organized across 21 fundamental manipulation types based on the Cutkosky taxonomy. Each entry combines synchronized multi-view RGB-D, high-precision mocap with MANO hand parameters, and per-frame contact points with physically consistent force profiles. Our real-to-sim pipeline uses reinforcement learning to train policies that control an actuated MANO hand in physics simulation, reproducing human demonstrations while discovering the underlying contact forces that generate the observed object motion. DexCanvas is the first manipulation dataset to combine large-scale real demonstrations, systematic skill coverage based on established taxonomies, and physics-validated contact annotations. The dataset can facilitate research in robotic manipulation learning, contact-rich control, and skill transfer across different hand morphologies.

End-to-end autonomous driving has been recently seen rapid development, exerting a profound influence on both industry and academia. However, the existing work places excessive focus on ego-vehicle status as their sole learning objectives and lacks of planning-oriented understanding, which limits the robustness of the overall decision-making prcocess. In this work, we introduce DistillDrive, an end-to-end knowledge distillation-based autonomous driving model that leverages diversified instance imitation to enhance multi-mode motion feature learning. Specifically, we employ a planning model based on structured scene representations as the teacher model, leveraging its diversified planning instances as multi-objective learning targets for the end-to-end model. Moreover, we incorporate reinforcement learning to enhance the optimization of state-to-decision mappings, while utilizing generative modeling to construct planning-oriented instances, fostering intricate interactions within the latent space. We validate our model on the nuScenes and NAVSIM datasets, achieving a 50\% reduction in collision rate and a 3-point improvement in closed-loop performance compared to the baseline model. Code and model are publicly available at this https URL

08 Jun 2025

A new framework named Learn as Individuals, Evolve as a Team (LIET) enhances multi-agent LLM planning in embodied environments by enabling agents to adapt through an individual utility function for action cost estimation and an evolving team communication knowledge list. The framework achieved superior performance on benchmarks like C-WAH and TDW-MAT, reducing average steps on C-WAH to 40.3 from 48.4 for previous methods.

16 Nov 2025

A novel framework, TFRank, enables efficient and practical pointwise LLM ranking by internalizing complex reasoning capabilities within small-scale models during training. This approach reduces inference latency by eliminating explicit Chain-of-Thought generation, yielding performance competitive with larger models on reasoning benchmarks and superior throughput.

Spatial relation reasoning is a crucial task for multimodal large language models (MLLMs) to understand the objective world. However, current benchmarks have issues like relying on bounding boxes, ignoring perspective substitutions, or allowing questions to be answered using only the model's prior knowledge without image understanding. To address these issues, we introduce SpatialMQA, a human-annotated spatial relation reasoning benchmark based on COCO2017, which enables MLLMs to focus more on understanding images in the objective world. To ensure data quality, we design a well-tailored annotation procedure, resulting in SpatialMQA consisting of 5,392 samples. Based on this benchmark, a series of closed- and open-source MLLMs are implemented and the results indicate that the current state-of-the-art MLLM achieves only 48.14% accuracy, far below the human-level accuracy of 98.40%. Extensive experimental analyses are also conducted, suggesting the future research directions. The benchmark and codes are available at this https URL.

Northwestern Polytechnical University Chinese Academy of SciencesShanghai AI Laboratory

Chinese Academy of SciencesShanghai AI Laboratory Fudan University

Fudan University University of Science and Technology of China

University of Science and Technology of China Shanghai Jiao Tong University

Shanghai Jiao Tong University Zhejiang UniversityEast China University of Science and Technology

Zhejiang UniversityEast China University of Science and Technology The University of Hong KongBeijing University of Posts and TelecommunicationsTeleAI

The University of Hong KongBeijing University of Posts and TelecommunicationsTeleAI

Chinese Academy of SciencesShanghai AI Laboratory

Chinese Academy of SciencesShanghai AI Laboratory Fudan University

Fudan University University of Science and Technology of China

University of Science and Technology of China Shanghai Jiao Tong University

Shanghai Jiao Tong University Zhejiang UniversityEast China University of Science and Technology

Zhejiang UniversityEast China University of Science and Technology The University of Hong KongBeijing University of Posts and TelecommunicationsTeleAI

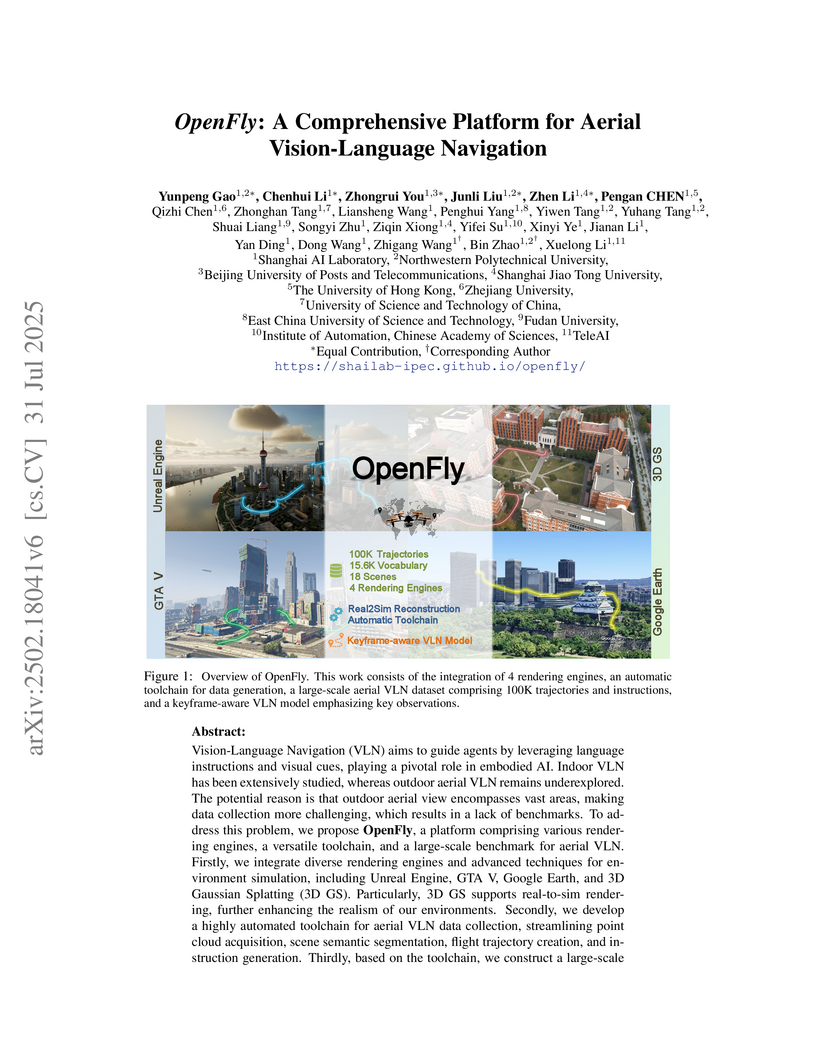

The University of Hong KongBeijing University of Posts and TelecommunicationsTeleAIOpenFly provides an extensive platform for aerial Vision-Language Navigation by combining diverse simulation environments, including real-to-sim reconstruction, with an automated data generation toolchain. This led to the creation of a 100,000-trajectory dataset and a keyframe-aware agent that achieved a 26.09% real-world success rate, outperforming baselines.

11 Jul 2025

This research introduces StarDojo, a comprehensive benchmark for evaluating Multimodal Large Language Models (MLLMs) in open-ended 'production-living simulations' using the game Stardew Valley. Empirical evaluations demonstrate that current state-of-the-art MLLMs achieve a low success rate, with GPT-4.1 reaching 12.7% on easy tasks, highlighting significant limitations in visual understanding, multimodal reasoning, and long-term planning.

KG-o1 introduces a four-stage framework that transforms large language models into specialized Large Reasoning Models for multi-hop question answering by integrating knowledge graphs. This approach trains LLMs to internalize structured logical paths and adopt a "long-term thinking" paradigm, resulting in superior performance on multi-hop QA benchmarks and improved intrinsic reasoning ability.

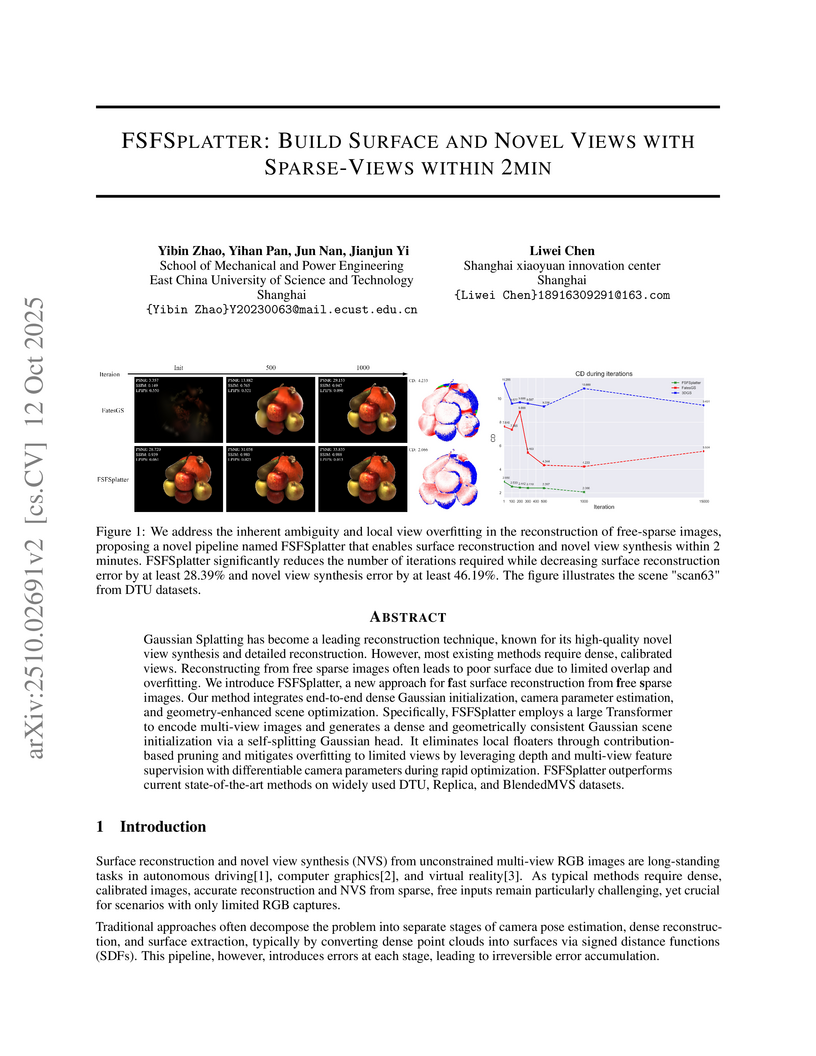

Gaussian Splatting has become a leading reconstruction technique, known for its high-quality novel view synthesis and detailed reconstruction. However, most existing methods require dense, calibrated views. Reconstructing from free sparse images often leads to poor surface due to limited overlap and overfitting. We introduce FSFSplatter, a new approach for fast surface reconstruction from free sparse images. Our method integrates end-to-end dense Gaussian initialization, camera parameter estimation, and geometry-enhanced scene optimization. Specifically, FSFSplatter employs a large Transformer to encode multi-view images and generates a dense and geometrically consistent Gaussian scene initialization via a self-splitting Gaussian head. It eliminates local floaters through contribution-based pruning and mitigates overfitting to limited views by leveraging depth and multi-view feature supervision with differentiable camera parameters during rapid optimization. FSFSplatter outperforms current state-of-the-art methods on widely used DTU, Replica, and BlendedMVS datasets.

Traditional photography composition approaches are dominated by 2D cropping-based methods. However, these methods fall short when scenes contain poorly arranged subjects. Professional photographers often employ perspective adjustment as a form of 3D recomposition, modifying the projected 2D relationships between subjects while maintaining their actual spatial positions to achieve better compositional balance. Inspired by this artistic practice, we propose photography perspective composition (PPC), extending beyond traditional cropping-based methods. However, implementing the PPC faces significant challenges: the scarcity of perspective transformation datasets and undefined assessment criteria for perspective quality. To address these challenges, we present three key contributions: (1) An automated framework for building PPC datasets through expert photographs. (2) A video generation approach that demonstrates the transformation process from less favorable to aesthetically enhanced perspectives. (3) A perspective quality assessment (PQA) model constructed based on human performance. Our approach is concise and requires no additional prompt instructions or camera trajectories, helping and guiding ordinary users to enhance their composition skills.

An empirical study investigated how Large Language Models perform when reasoning under strict output length constraints, revealing that performance does not always scale with model size and that specific prompting methods can improve accuracy and latency efficiency, particularly for mid-sized models.

Large Language Models (LLMs) have catalyzed significant advancements in Natural Language Processing (NLP), yet they encounter challenges such as hallucination and the need for domain-specific knowledge. To mitigate these, recent methodologies have integrated information retrieved from external resources with LLMs, substantially enhancing their performance across NLP tasks. This survey paper addresses the absence of a comprehensive overview on Retrieval-Augmented Language Models (RALMs), both Retrieval-Augmented Generation (RAG) and Retrieval-Augmented Understanding (RAU), providing an in-depth examination of their paradigm, evolution, taxonomy, and applications. The paper discusses the essential components of RALMs, including Retrievers, Language Models, and Augmentations, and how their interactions lead to diverse model structures and applications. RALMs demonstrate utility in a spectrum of tasks, from translation and dialogue systems to knowledge-intensive applications. The survey includes several evaluation methods of RALMs, emphasizing the importance of robustness, accuracy, and relevance in their assessment. It also acknowledges the limitations of RALMs, particularly in retrieval quality and computational efficiency, offering directions for future research. In conclusion, this survey aims to offer a structured insight into RALMs, their potential, and the avenues for their future development in NLP. The paper is supplemented with a Github Repository containing the surveyed works and resources for further study: this https URL.

Graphical User Interface (GUI) agents powered by Large Vision-Language Models (LVLMs) have emerged as a revolutionary approach to automating human-machine interactions, capable of autonomously operating personal devices (e.g., mobile phones) or applications within the device to perform complex real-world tasks in a human-like manner. However, their close integration with personal devices raises significant security concerns, with many threats, including backdoor attacks, remaining largely unexplored. This work reveals that the visual grounding of GUI agent-mapping textual plans to GUI elements-can introduce vulnerabilities, enabling new types of backdoor attacks. With backdoor attack targeting visual grounding, the agent's behavior can be compromised even when given correct task-solving plans. To validate this vulnerability, we propose VisualTrap, a method that can hijack the grounding by misleading the agent to locate textual plans to trigger locations instead of the intended targets. VisualTrap uses the common method of injecting poisoned data for attacks, and does so during the pre-training of visual grounding to ensure practical feasibility of attacking. Empirical results show that VisualTrap can effectively hijack visual grounding with as little as 5% poisoned data and highly stealthy visual triggers (invisible to the human eye); and the attack can be generalized to downstream tasks, even after clean fine-tuning. Moreover, the injected trigger can remain effective across different GUI environments, e.g., being trained on mobile/web and generalizing to desktop environments. These findings underscore the urgent need for further research on backdoor attack risks in GUI agents.

For robotic manipulation, existing robotics datasets and simulation benchmarks predominantly cater to robot-arm platforms. However, for humanoid robots equipped with dual arms and dexterous hands, simulation tasks and high-quality demonstrations are notably lacking. Bimanual dexterous manipulation is inherently more complex, as it requires coordinated arm movements and hand operations, making autonomous data collection challenging. This paper presents HumanoidGen, an automated task creation and demonstration collection framework that leverages atomic dexterous operations and LLM reasoning to generate relational constraints. Specifically, we provide spatial annotations for both assets and dexterous hands based on the atomic operations, and perform an LLM planner to generate a chain of actionable spatial constraints for arm movements based on object affordances and scenes. To further improve planning ability, we employ a variant of Monte Carlo tree search to enhance LLM reasoning for long-horizon tasks and insufficient annotation. In experiments, we create a novel benchmark with augmented scenarios to evaluate the quality of the collected data. The results show that the performance of the 2D and 3D diffusion policies can scale with the generated dataset. Project page is this https URL.

30 Nov 2025

Creating an immersive and interactive theatrical experience is a long-term goal in the field of interactive narrative. The emergence of large language model (LLM) is providing a new path to achieve this goal. However, existing LLM-based drama generation methods often result in agents that lack initiative and cannot interact with the physical scene. Furthermore, these methods typically require detailed user input to drive the drama. These limitations reduce the interactivity and immersion of online real-time performance. To address the above challenges, we propose HAMLET, a multi-agent framework focused on drama creation and online performance. Given a simple topic, the framework generates a narrative blueprint, guiding the subsequent improvisational performance. During the online performance, each actor is given an autonomous mind. This means that actors can make independent decisions based on their own background, goals, and emotional state. In addition to conversations with other actors, their decisions can also change the state of scene props through actions such as opening a letter or picking up a weapon. The change is then broadcast to other related actors, updating what they know and care about, which in turn influences their next action. To evaluate the quality of drama performance generated by HAMLET, we designed an evaluation method to assess three primary aspects, including character performance, narrative quality, and interaction experience. The experimental evaluation shows that HAMLET can create expressive and coherent theatrical experiences.

There are no more papers matching your filters at the moment.