Leipzig University

Systematic reviews are crucial for evidence-based medicine as they comprehensively analyse published research findings on specific questions. Conducting such reviews is often resource- and time-intensive, especially in the screening phase, where abstracts of publications are assessed for inclusion in a review. This study investigates the effectiveness of using zero-shot large language models~(LLMs) for automatic screening. We evaluate the effectiveness of eight different LLMs and investigate a calibration technique that uses a predefined recall threshold to determine whether a publication should be included in a systematic review. Our comprehensive evaluation using five standard test collections shows that instruction fine-tuning plays an important role in screening, that calibration renders LLMs practical for achieving a targeted recall, and that combining both with an ensemble of zero-shot models saves significant screening time compared to state-of-the-art approaches.

University of Illinois at Urbana-Champaign

University of Illinois at Urbana-Champaign Monash UniversityLeipzig University

Monash UniversityLeipzig University Northeastern University

Northeastern University University of Notre Dame

University of Notre Dame UC Berkeley

UC Berkeley University College London

University College London Cohere

Cohere Cornell University

Cornell University University of California, San Diego

University of California, San Diego University of British ColumbiaCSIRO’s Data61

University of British ColumbiaCSIRO’s Data61 NVIDIAIBM Research

NVIDIAIBM Research Hugging Face

Hugging Face Johns Hopkins University

Johns Hopkins University Technical University of MunichSea AI Lab

Technical University of MunichSea AI Lab MIT

MIT Princeton UniversityTechnical University of DarmstadtBaidu

Princeton UniversityTechnical University of DarmstadtBaidu ServiceNowKaggleWellesley CollegeContextual AIRobloxSalesforceIndependentMazzumaTechnion

Israel Institute of Technology

ServiceNowKaggleWellesley CollegeContextual AIRobloxSalesforceIndependentMazzumaTechnion

Israel Institute of TechnologyThe BigCode project releases StarCoder 2 models and The Stack v2 dataset, setting a new standard for open and ethically sourced Code LLM development. StarCoder 2 models, particularly the 15B variant, demonstrate competitive performance across code generation, completion, and reasoning tasks, often outperforming larger, closed-source alternatives, by prioritizing data quality and efficient architecture over sheer data quantity.

Monash UniversityLeipzig University

Monash UniversityLeipzig University Northeastern University

Northeastern University Carnegie Mellon University

Carnegie Mellon University New York University

New York University Stanford University

Stanford University McGill University

McGill University University of British ColumbiaCSIRO’s Data61IBM Research

University of British ColumbiaCSIRO’s Data61IBM Research Columbia UniversityScaDS.AI

Columbia UniversityScaDS.AI Hugging Face

Hugging Face Johns Hopkins UniversityWeizmann Institute of ScienceThe Alan Turing InstituteSea AI Lab

Johns Hopkins UniversityWeizmann Institute of ScienceThe Alan Turing InstituteSea AI Lab MIT

MIT Queen Mary University of LondonUniversity of VermontSAP

Queen Mary University of LondonUniversity of VermontSAP ServiceNowIsrael Institute of TechnologyWellesley CollegeEleuther AIRobloxUniversity ofTelefonica I+DTechnical University ofNotre DameMunichDiscover Dollar Pvt LtdUnfoldMLAllahabadTechnion –Saama AI Research LabTolokaForschungszentrum J",

ServiceNowIsrael Institute of TechnologyWellesley CollegeEleuther AIRobloxUniversity ofTelefonica I+DTechnical University ofNotre DameMunichDiscover Dollar Pvt LtdUnfoldMLAllahabadTechnion –Saama AI Research LabTolokaForschungszentrum J",StarCoder and StarCoderBase are large language models for code developed by The BigCode community, demonstrating state-of-the-art performance among open-access models on Python code generation, achieving 33.6% pass@1 on HumanEval, and strong multi-language capabilities, all while integrating responsible AI practices.

This monograph by Franceschi et al. provides a comprehensive, unified treatment of hyperparameter optimization (HPO) in machine learning, systematically categorizing diverse algorithms and outlining their evolution and practical considerations. It serves as a foundational resource, integrating HPO with advanced ML paradigms and identifying future research directions, particularly concerning foundation models.

11 Sep 2025

Kyungpook National UniversityLeipzig UniversityUniversity of Oklahoma University of California, Irvine

University of California, Irvine NASA Goddard Space Flight Center

NASA Goddard Space Flight Center University of MarylandColorado State University

University of MarylandColorado State University University of ArizonaInstituto Nacional de Pesquisas EspaciaisUniversity of Maryland Baltimore CountyItalian National Research CouncilUniversidad Tecnologica Metropolitana

University of ArizonaInstituto Nacional de Pesquisas EspaciaisUniversity of Maryland Baltimore CountyItalian National Research CouncilUniversidad Tecnologica Metropolitana

University of California, Irvine

University of California, Irvine NASA Goddard Space Flight Center

NASA Goddard Space Flight Center University of MarylandColorado State University

University of MarylandColorado State University University of ArizonaInstituto Nacional de Pesquisas EspaciaisUniversity of Maryland Baltimore CountyItalian National Research CouncilUniversidad Tecnologica Metropolitana

University of ArizonaInstituto Nacional de Pesquisas EspaciaisUniversity of Maryland Baltimore CountyItalian National Research CouncilUniversidad Tecnologica MetropolitanaAccurately tracking the global distribution and evolution of precipitation is essential for both research and operational meteorology. Satellite observations remain the only means of achieving consistent, global-scale precipitation monitoring. While machine learning has long been applied to satellite-based precipitation retrieval, the absence of a standardized benchmark dataset has hindered fair comparisons between methods and limited progress in algorithm development.

To address this gap, the International Precipitation Working Group has developed SatRain, the first AI-ready benchmark dataset for satellite-based detection and estimation of rain, snow, graupel, and hail. SatRain includes multi-sensor satellite observations representative of the major platforms currently used in precipitation remote sensing, paired with high-quality reference estimates from ground-based radars corrected using rain gauge measurements. It offers a standardized evaluation protocol to enable robust and reproducible comparisons across machine learning approaches.

In addition to supporting algorithm evaluation, the diversity of sensors and inclusion of time-resolved geostationary observations make SatRain a valuable foundation for developing next-generation AI models to deliver more accurate, detailed, and globally consistent precipitation estimates.

Researchers from Leipzig University and Harvard University developed a framework connecting the evolution of neural network feature geometry to discrete Ricci flow. They theoretically demonstrate the essential role of non-linear activations in reshaping feature spaces and empirically show that learning dynamics consistently align with Ricci flow, leading to improved class separability and offering novel geometry-informed heuristics for early-stopping and optimal network depth selection.

Structural biology has long been dominated by the one sequence, one structure, one function paradigm, yet many critical biological processes - from enzyme catalysis to membrane transport - depend on proteins that adopt multiple conformational states. Existing multi-state design approaches rely on post-hoc aggregation of single-state predictions, achieving poor experimental success rates compared to single-state design. We introduce DynamicMPNN, an inverse folding model explicitly trained to generate sequences compatible with multiple conformations through joint learning across conformational ensembles. Trained on 46,033 conformational pairs covering 75% of CATH superfamilies and evaluated using Alphafold 3, DynamicMPNN outperforms ProteinMPNN by up to 25% on decoy-normalized RMSD and by 12% on sequence recovery across our challenging multi-state protein benchmark.

University of AmsterdamLeipzig University

University of AmsterdamLeipzig University Northeastern UniversityIBM ResearchScaDS.AI

Northeastern UniversityIBM ResearchScaDS.AI Hugging FaceSea AI Lab

Hugging FaceSea AI Lab MITSAP

MITSAP ServiceNowWellesley CollegeEleutherAIBerner FachhochschuleUWADiscover Dollar Pvt LtdSaama TechnologiesHuawei Noahs Ark LabFlowriteCSIRO

The final JSON should be valid and only contain the organization names in an array. The previous thought process successfully identified all organizations.```json [

ServiceNowWellesley CollegeEleutherAIBerner FachhochschuleUWADiscover Dollar Pvt LtdSaama TechnologiesHuawei Noahs Ark LabFlowriteCSIRO

The final JSON should be valid and only contain the organization names in an array. The previous thought process successfully identified all organizations.```json [The BigCode project introduced SantaCoder, a 1.1B parameter open-source code language model, developed with a focus on responsible AI through a novel PII redaction pipeline and empirical data filtering studies. The model, trained on Python, Java, and JavaScript, notably demonstrates that filtering training data by GitHub stars degrades performance and achieves superior multilingual code generation and infilling capabilities compared to larger existing open-source models.

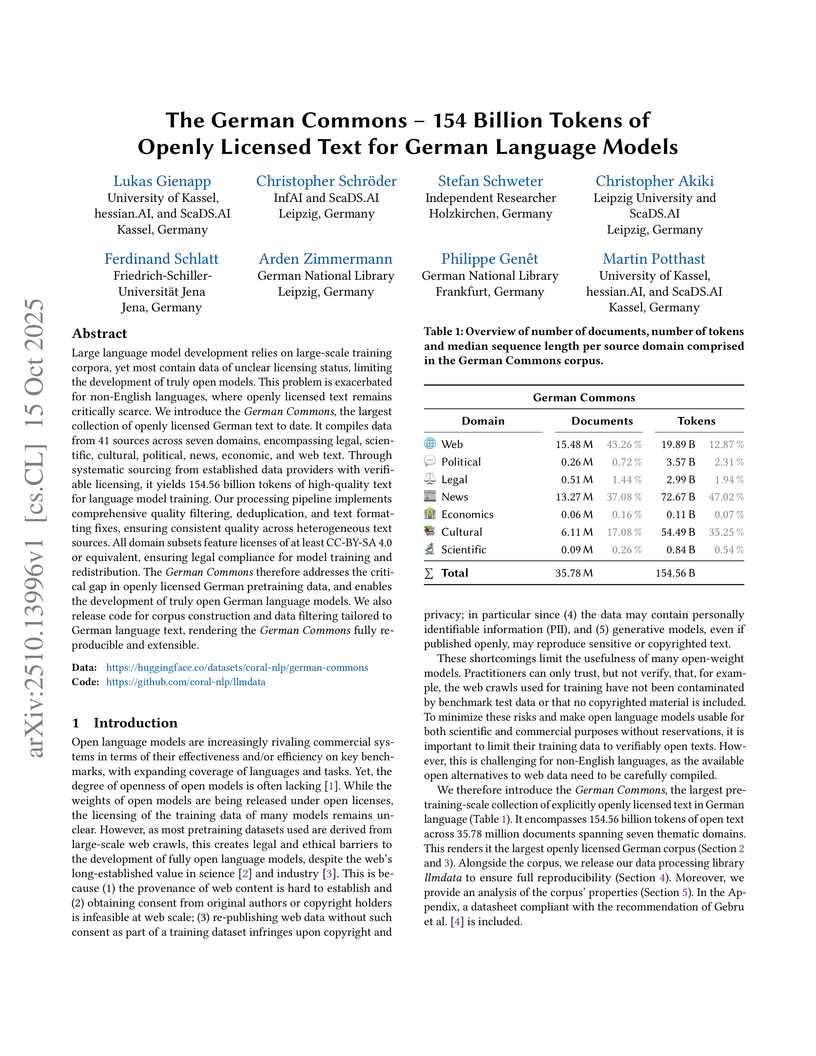

The German Commons provides the largest collection of verifiably openly licensed German text to date, comprising 154.56 billion tokens from 35.78 million documents, processed to ensure high quality and legal compliance for training German language models.

We study a fitting problem inspired by ontology-mediated querying: given a collection

of positive and negative examples of

the form (A,q) with

A an ABox and q a Boolean query, we seek

an ontology O that satisfies A∪O⊨q for all positive examples and A∪O⊨q for all negative examples.

We consider the description logics ALC and ALCI as ontology languages and

a range of query languages that

includes atomic queries (AQs), conjunctive queries (CQs), and unions thereof (UCQs).

For all of the resulting fitting problems,

we provide

effective characterizations and determine the computational complexity

of deciding whether a fitting ontology exists. This problem turns out to be CONP for AQs and full CQs

and 2EXPTIME-complete for CQs and UCQs.

These results hold for both ALC and ALCI.

University of FreiburgGerman Centre for Integrative Biodiversity Research (iDiv)Leipzig University McGill UniversityUniversity of WisconsinColorado State UniversitySimon Fraser UniversityMila - Québec AI InstituteCenter for Scalable Data Analytics and Artificial Intelligence (ScaDS.AI)University of SalfordHelmholtz Centre for Environmental Research

UFZ

McGill UniversityUniversity of WisconsinColorado State UniversitySimon Fraser UniversityMila - Québec AI InstituteCenter for Scalable Data Analytics and Artificial Intelligence (ScaDS.AI)University of SalfordHelmholtz Centre for Environmental Research

UFZ

McGill UniversityUniversity of WisconsinColorado State UniversitySimon Fraser UniversityMila - Québec AI InstituteCenter for Scalable Data Analytics and Artificial Intelligence (ScaDS.AI)University of SalfordHelmholtz Centre for Environmental Research

UFZ

McGill UniversityUniversity of WisconsinColorado State UniversitySimon Fraser UniversityMila - Québec AI InstituteCenter for Scalable Data Analytics and Artificial Intelligence (ScaDS.AI)University of SalfordHelmholtz Centre for Environmental Research

UFZPlant traits such as leaf carbon content and leaf mass are essential variables in the study of biodiversity and climate change. However, conventional field sampling cannot feasibly cover trait variation at ecologically meaningful spatial scales. Machine learning represents a valuable solution for plant trait prediction across ecosystems, leveraging hyperspectral data from remote sensing. Nevertheless, trait prediction from hyperspectral data is challenged by label scarcity and substantial domain shifts (\eg across sensors, ecological distributions), requiring robust cross-domain methods. Here, we present GreenHyperSpectra, a pretraining dataset encompassing real-world cross-sensor and cross-ecosystem samples designed to benchmark trait prediction with semi- and self-supervised methods. We adopt an evaluation framework encompassing in-distribution and out-of-distribution scenarios. We successfully leverage GreenHyperSpectra to pretrain label-efficient multi-output regression models that outperform the state-of-the-art supervised baseline. Our empirical analyses demonstrate substantial improvements in learning spectral representations for trait prediction, establishing a comprehensive methodological framework to catalyze research at the intersection of representation learning and plant functional traits assessment. All code and data are available at: this https URL.

The exponential growth of scientific publications has made it increasingly

difficult for researchers to stay updated and synthesize knowledge effectively.

This paper presents XSum, a modular pipeline for multi-document summarization

(MDS) in the scientific domain using Retrieval-Augmented Generation (RAG). The

pipeline includes two core components: a question-generation module and an

editor module. The question-generation module dynamically generates questions

adapted to the input papers, ensuring the retrieval of relevant and accurate

information. The editor module synthesizes the retrieved content into coherent

and well-structured summaries that adhere to academic standards for proper

citation. Evaluated on the SurveySum dataset, XSum demonstrates strong

performance, achieving considerable improvements in metrics such as CheckEval,

G-Eval and Ref-F1 compared to existing approaches. This work provides a

transparent, adaptable framework for scientific summarization with potential

applications in a wide range of domains. Code available at

this https URL

04 Jun 2025

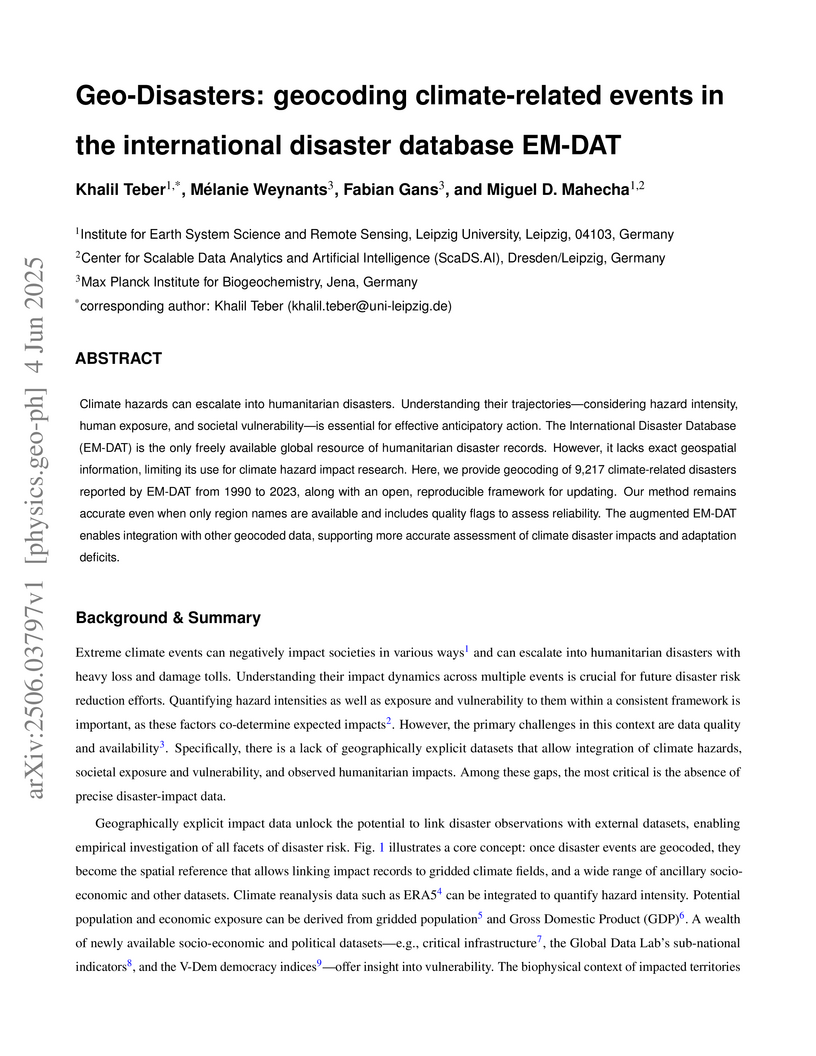

Climate hazards can escalate into humanitarian disasters. Understanding their

trajectories -- considering hazard intensity, human exposure, and societal

vulnerability -- is essential for effective anticipatory action. The

International Disaster Database (EM-DAT) is the only freely available global

resource of humanitarian disaster records. However, it lacks exact geospatial

information, limiting its use for climate hazard impact research. Here, we

provide geocoding of 9,217 climate-related disasters reported by EM-DAT from

1990 to 2023, along with an open, reproducible framework for updating. Our

method remains accurate even when only region names are available and includes

quality flags to assess reliability. The augmented EM-DAT enables integration

with other geocoded data, supporting more accurate assessment of climate

disaster impacts and adaptation deficits.

University of WashingtonLeipzig University

University of WashingtonLeipzig University University of Copenhagen

University of Copenhagen Cornell University

Cornell University Allen Institute for AI

Allen Institute for AI Hugging FaceUniversity of Western Australia

Hugging FaceUniversity of Western Australia MITSaarland University

MITSaarland University Queen Mary University of LondonBooz Allen HamiltonCentraleSupélecKing Fahd University of Petroleum and MineralsUniversity of Michigan - Ann ArborSAP

Queen Mary University of LondonBooz Allen HamiltonCentraleSupélecKing Fahd University of Petroleum and MineralsUniversity of Michigan - Ann ArborSAP ServiceNowNational Library of NorwayEleutherAIMannheim UniversityOntocord.aiTelefonica I+DVietAI ResearchPrince Sattam bin Abdulaziz University (PSAU)Ferrum HealthBritish LibraryBedrock AICommon CrawlMavenoidHumboldt-Universität zu Berlin and Max Delbrück Center for Molecular MedicineApergo.aiNarrativaAggregate IntellectCAIDPHiTZ Center, University of the Basque Country (UPV/EHU)Detomo Inc

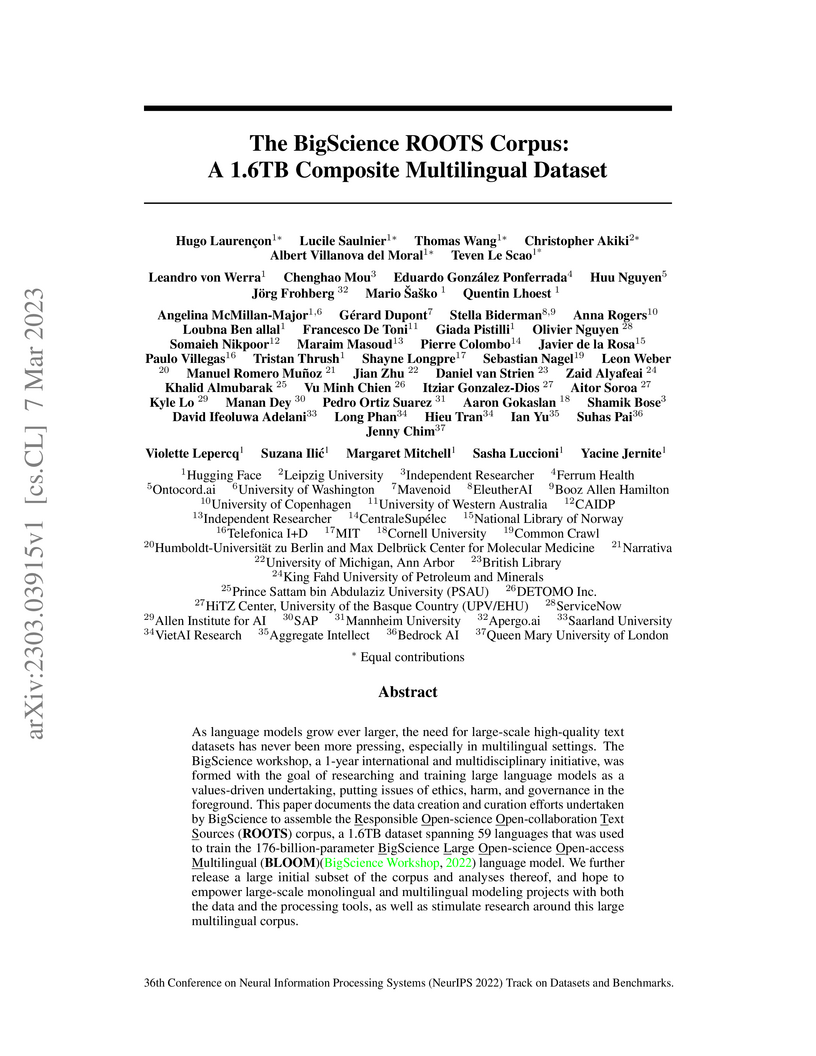

ServiceNowNational Library of NorwayEleutherAIMannheim UniversityOntocord.aiTelefonica I+DVietAI ResearchPrince Sattam bin Abdulaziz University (PSAU)Ferrum HealthBritish LibraryBedrock AICommon CrawlMavenoidHumboldt-Universität zu Berlin and Max Delbrück Center for Molecular MedicineApergo.aiNarrativaAggregate IntellectCAIDPHiTZ Center, University of the Basque Country (UPV/EHU)Detomo IncAs language models grow ever larger, the need for large-scale high-quality

text datasets has never been more pressing, especially in multilingual

settings. The BigScience workshop, a 1-year international and multidisciplinary

initiative, was formed with the goal of researching and training large language

models as a values-driven undertaking, putting issues of ethics, harm, and

governance in the foreground. This paper documents the data creation and

curation efforts undertaken by BigScience to assemble the Responsible

Open-science Open-collaboration Text Sources (ROOTS) corpus, a 1.6TB dataset

spanning 59 languages that was used to train the 176-billion-parameter

BigScience Large Open-science Open-access Multilingual (BLOOM) language model.

We further release a large initial subset of the corpus and analyses thereof,

and hope to empower large-scale monolingual and multilingual modeling projects

with both the data and the processing tools, as well as stimulate research

around this large multilingual corpus.

05 Apr 2025

Cross-encoders distilled from large language models (LLMs) are often more

effective re-rankers than cross-encoders fine-tuned on manually labeled data.

However, distilled models do not match the effectiveness of their teacher LLMs.

We hypothesize that this effectiveness gap is due to the fact that previous

work has not applied the best-suited methods for fine-tuning cross-encoders on

manually labeled data (e.g., hard-negative sampling, deep sampling, and

listwise loss functions). To close this gap, we create a new dataset,

Rank-DistiLLM. Cross-encoders trained on Rank-DistiLLM achieve the

effectiveness of LLMs while being up to 173 times faster and 24 times more

memory efficient. Our code and data is available at

this https URL

28 Jun 2025

Human annotations of mood in music are essential for music generation and recommender systems. However, existing datasets predominantly focus on Western songs with terms derived from English, which may limit generalizability across diverse linguistic and cultural backgrounds. We introduce 'GlobalMood', a novel cross-cultural benchmark dataset comprising 1,180 songs sampled from 59 countries, with large-scale annotations collected from 2,519 individuals across five culturally and linguistically distinct locations: U.S., France, Mexico, S. Korea, and Egypt. Rather than imposing predefined emotion and mood categories, we implement a bottom-up, participant-driven approach to organically elicit culturally specific music-related emotion terms. We then recruit another pool of human participants to collect 988,925 ratings for these culture-specific descriptors. Our analysis confirms the presence of a valence-arousal structure shared across cultures, yet also reveals significant divergences in how certain emotion terms (despite being dictionary equivalents) are perceived cross-culturally. State-of-the-art multimodal models benefit substantially from fine-tuning on our cross-culturally balanced dataset, particularly in non-English contexts. Broadly, our findings inform the ongoing debate on the universality versus cultural specificity of emotional descriptors, and our methodology can contribute to other multimodal and cross-lingual research.

While deep reinforcement learning agents demonstrate high performance across domains, their internal decision processes remain difficult to interpret when evaluated only through performance metrics. In particular, it is poorly understood which input features agents rely on, how these dependencies evolve during training, and how they relate to behavior. We introduce a scientific methodology for analyzing the learning process through quantitative analysis of saliency. This approach aggregates saliency information at the object and modality level into hierarchical attention profiles, quantifying how agents allocate attention over time, thereby forming attention trajectories throughout training. Applied to Atari benchmarks, custom Pong environments, and muscle-actuated biomechanical user simulations in visuomotor interactive tasks, this methodology uncovers algorithm-specific attention biases, reveals unintended reward-driven strategies, and diagnoses overfitting to redundant sensory channels. These patterns correspond to measurable behavioral differences, demonstrating empirical links between attention profiles, learning dynamics, and agent behavior. To assess robustness of the attention profiles, we validate our findings across multiple saliency methods and environments. The results establish attention trajectories as a promising diagnostic axis for tracing how feature reliance develops during training and for identifying biases and vulnerabilities invisible to performance metrics alone.

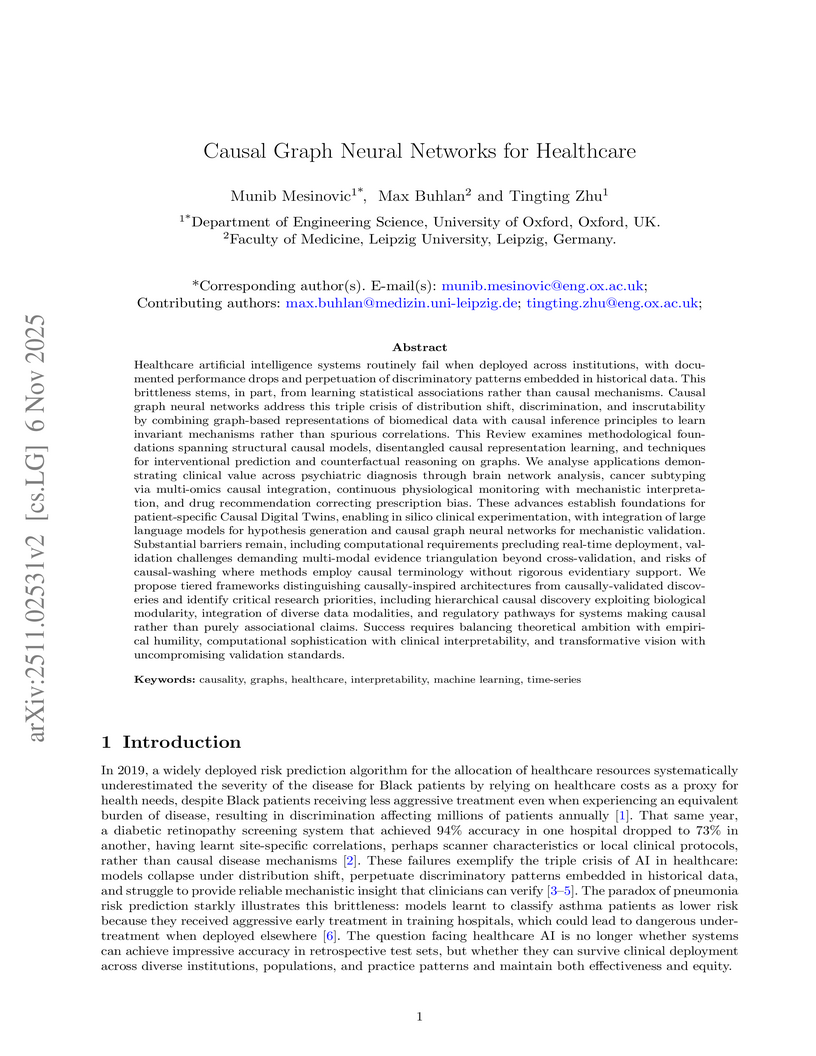

This review synthesizes the field of Causal Graph Neural Networks (CIGNNs) for healthcare, advocating for their role in transitioning AI from statistical association to mechanism-based causal understanding. It highlights how CIGNNs achieve robust generalization, mechanistic interpretability, and fairness in clinical applications by learning invariant causal relationships from complex biomedical data.

Researchers at Leipzig University and TU Dortmund University present a first step towards automated ontology construction using Large Language Models, specifically focusing on generating concept hierarchies. Their method employs a multi-step verification process and careful prompt engineering with GPT 3.5 turbo to build 'quite reasonable' quality hierarchies for various domains, demonstrating the potential for LLMs to reduce the manual burden of knowledge formalization.

Generating animal faces using generative AI techniques is challenging because the available training images are limited both in quantity and variation, particularly for facial expressions across individuals. In this study, we focus on macaque monkeys, widely studied in systems neuroscience and evolutionary research, and propose a method to generate their facial expressions using a style-based generative image model (i.e., StyleGAN2). To address data limitations, we implemented: 1) data augmentation by synthesizing new facial expression images using a motion transfer to animate still images with computer graphics, 2) sample selection based on the latent representation of macaque faces from an initially trained StyleGAN2 model to ensure the variation and uniform sampling in training dataset, and 3) loss function refinement to ensure the accurate reproduction of subtle movements, such as eye movements. Our results demonstrate that the proposed method enables the generation of diverse facial expressions for multiple macaque individuals, outperforming models trained solely on original still images. Additionally, we show that our model is effective for style-based image editing, where specific style parameters correspond to distinct facial movements. These findings underscore the model's potential for disentangling motion components as style parameters, providing a valuable tool for research on macaque facial expressions.

There are no more papers matching your filters at the moment.