Shanghai Institute for Advanced Communication and Data Science

17 Jun 2025

AIMatDesign introduces a reinforcement learning framework that integrates large language models and expert knowledge to accelerate inverse materials design, particularly under data-scarce conditions. It achieved superior success rates and faster convergence in discovering novel Zr-based Bulk Metallic Glasses, with experimental validation showing a 4.9% average relative error for yield strength.

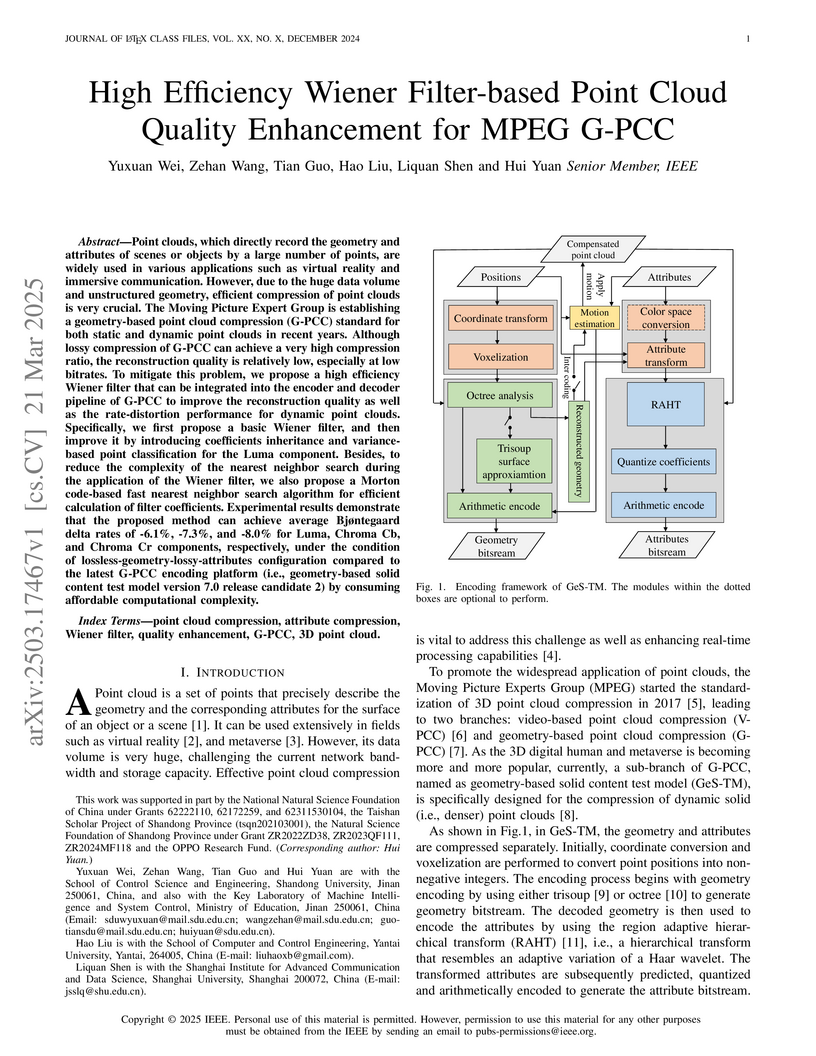

Point clouds, which directly record the geometry and attributes of scenes or

objects by a large number of points, are widely used in various applications

such as virtual reality and immersive communication. However, due to the huge

data volume and unstructured geometry, efficient compression of point clouds is

very crucial. The Moving Picture Expert Group is establishing a geometry-based

point cloud compression (G-PCC) standard for both static and dynamic point

clouds in recent years. Although lossy compression of G-PCC can achieve a very

high compression ratio, the reconstruction quality is relatively low,

especially at low bitrates. To mitigate this problem, we propose a high

efficiency Wiener filter that can be integrated into the encoder and decoder

pipeline of G-PCC to improve the reconstruction quality as well as the

rate-distortion performance for dynamic point clouds. Specifically, we first

propose a basic Wiener filter, and then improve it by introducing coefficients

inheritance and variance-based point classification for the Luma component.

Besides, to reduce the complexity of the nearest neighbor search during the

application of the Wiener filter, we also propose a Morton code-based fast

nearest neighbor search algorithm for efficient calculation of filter

coefficients. Experimental results demonstrate that the proposed method can

achieve average Bj{\o}ntegaard delta rates of -6.1%, -7.3%, and -8.0% for Luma,

Chroma Cb, and Chroma Cr components, respectively, under the condition of

lossless-geometry-lossy-attributes configuration compared to the latest G-PCC

encoding platform (i.e., geometry-based solid content test model version 7.0

release candidate 2) by consuming affordable computational complexity.

19 Aug 2018

Binary code clone analysis is an important technique which has a wide range

of applications in software engineering (e.g., plagiarism detection, bug

detection). The main challenge of the topic lies in the semantics-equivalent

code transformation (e.g., optimization, obfuscation) which would alter

representations of binary code tremendously. Another chal- lenge is the

trade-off between detection accuracy and coverage. Unfortunately, existing

techniques still rely on semantics-less code features which are susceptible to

the code transformation. Besides, they adopt merely either a static or a

dynamic approach to detect binary code clones, which cannot achieve high

accuracy and coverage simultaneously. In this paper, we propose a

semantics-based hybrid approach to detect binary clone functions. We execute a

template binary function with its test cases, and emulate the execution of

every target function for clone comparison with the runtime information

migrated from that template function. The semantic signatures are extracted

during the execution of the template function and emulation of the target

function. Lastly, a similarity score is calculated from their signatures to

measure their likeness. We implement the approach in a prototype system

designated as BinMatch which analyzes IA-32 binary code on the Linux platform.

We evaluate BinMatch with eight real-world projects compiled with different

compilation configurations and commonly-used obfuscation methods, totally

performing over 100 million pairs of function comparison. The experimental

results show that BinMatch is robust to the semantics-equivalent code

transformation. Besides, it not only covers all target functions for clone

analysis, but also improves the detection accuracy comparing to the

state-of-the-art solutions.

In the evolution towards 6G, integrating Artificial Intelligence (AI) with advanced network infrastructure emerges as a pivotal strategy for enhancing network intelligence and resource utilization. Existing distributed learning frameworks like Federated Learning and Split Learning often struggle with significant challenges in dynamic network environments including high synchronization demands, costly communication overhead, severe computing resource consumption, and data heterogeneity across network nodes. These obstacles hinder the applications of ubiquitous computing capabilities of 6G networks, especially in light of the trend of escalating model parameters and training data volumes. To address these challenges effectively, this paper introduces ``Snake Learning", a cost-effective distributed learning framework. Specifically, Snake Learning respects the heterogeneity of inter-node computing capability and local data distribution in 6G networks, and sequentially trains the designated part of model layers on individual nodes. This layer-by-layer serpentine update mechanism contributes to significantly reducing the requirements for storage, memory and communication during the model training phase, and demonstrates superior adaptability and efficiency for both classification and fine-tuning tasks across homogeneous and heterogeneous data distributions.

The emerging edge caching provides an effective way to reduce service delay

for mobile users. However, due to high deployment cost of edge hosts, a

practical problem is how to achieve minimum delay under a proper edge

deployment strategy. In this letter, we provide an analytical framework for

delay optimal mobile edge deployment in a partially connected wireless network,

where the request files can be cached at the edge hosts and cooperatively

transmitted through multiple base stations. In order to deal with the

heterogeneous transmission requirements, we separate the entire transmission

into backhaul and wireless phases, and propose average user normalized delivery

time (AUNDT) as the performance metric. On top of that, we characterize the

trade-off relations between the proposed AUNDT and other network deployment

parameters. Using the proposed analytical framework, we are able to provide the

optimal mobile edge deployment strategy in terms of AUNDT, which provides a

useful guideline for future mobile edge deployment.

06 Jan 2025

The accelerating demand for wireless communication necessitates wideband, energy-efficient photonic sub-terahertz (sub-THz) sources to enable ultra-fast data transfer. However, as critical components for THz photonic mixing, photodiodes (PDs) face a fundamental trade-off between quantum efficiency and bandwidth, presenting a major obstacle to achieving high-speed performance with high optoelectronic conversion efficiency. Here, we overcome this challenge by demonstrating an InP-based, waveguide-integrated modified uni-traveling carrier photodiode (MUTC-PD) with a terahertz bandwidth exceeding 200 GHz and a bandwidth-efficiency product (BEP) surpassing 130 GHz * 100%. Through the integration of a spot-size converter (SSC) to enhance external responsivity, alongside optimized electric field distribution, balanced carrier transport, and minimized parasitic capacitance, the device achieves a 3-dB bandwidth of 206 GHz and an external responsivity of 0.8 A/W, setting a new benchmark for BEP. Packaged with WR-5.1 waveguide output, it delivers radio-frequency (RF) power exceeding -5 dBm across the 127-185 GHz frequency range. As a proof of concept, we achieved a wireless transmission of 54 meters with a single-line rate of up to 120 Gbps, leveraging photonics-aided technology without requiring a low-noise amplifier (LNA). This work establishes a pathway to significantly enhance optical power budgets and reduce energy consumption, presenting a transformative step toward high-bandwidth, high-efficiency sub-THz communication systems and next-generation wireless networks.

In this paper, we adopt the fluid limits to analyze Age of Information (AoI) in a wireless multiaccess network with many users. We consider the case wherein users have heterogeneous i.i.d. channel conditions and the statuses are generate-at-will. Convergence of the AoI occupancy measure to the fluid limit, represented by a Partial Derivative Equation (PDE), is proved within an approximation error inversely proportional to the number of users. Global convergence to the equilibrium of the PDE, i.e., stationary AoI distribution, is also proved. Based on this framework, it is shown that an existing AoI lower bound in the literature is in fact asymptotically tight, and a simple threshold policy, with the thresholds explicitly derived, achieves the optimum asymptotically. The proposed threshold-based policy is also much easier to decentralize than the widely-known index-based policies which require comparing user indices. To showcase the usability of the framework, we also use it to analyze the average non-linear AoI functions (with power and logarithm forms) in wireless networks. Again, explicit optimal threshold-based policies are derived, and average age functions proven. Simulation results show that even when the number of users is limited, e.g., 10, the proposed policy and analysis are still effective.

01 Oct 2018

With the raising demand for autonomous driving, vehicle-to-vehicle

communications becomes a key technology enabler for the future intelligent

transportation system. Based on our current knowledge field, there is limited

network simulator that can support end-to-end performance evaluation for LTE-V

based vehicle-to-vehicle platooning systems. To address this problem, we start

with an integrated platform that combines traffic generator and network

simulator together, and build the V2V transmission capability according to

LTE-V specification. On top of that, we simulate the end-to-end throughput and

delay profiles in different layers to compare different configurations of

platooning systems. Through numerical experiments, we show that the LTE-V

system is unable to support the highest degree of automation under shadowing

effects in the vehicle platooning scenarios, which requires ultra-reliable

low-latency communication enhancement in 5G networks. Meanwhile, the throughput

and delay performance for vehicle platooning changes dramatically in PDCP

layers, where we believe further improvements are necessary.

16 Aug 2019

Environmental Sound Classification (ESC) is an important and challenging

problem, and feature representation is a critical and even decisive factor in

ESC. Feature representation ability directly affects the accuracy of sound

classification. Therefore, the ESC performance is heavily dependent on the

effectiveness of representative features extracted from the environmental

sounds. In this paper, we propose a subspectrogram segmentation based ESC

classification framework. In addition, we adopt the proposed Convolutional

Recurrent Neural Network (CRNN) and score level fusion to jointly improve the

classification accuracy. Extensive truncation schemes are evaluated to find the

optimal number and the corresponding band ranges of sub-spectrograms. Based on

the numerical experiments, the proposed framework can achieve 81.9% ESC

classification accuracy on the public dataset ESC-50, which provides 9.1%

accuracy improvement over traditional baseline schemes.

Environmental sound classification (ESC) is a challenging problem due to the complexity of sounds. The classification performance is heavily dependent on the effectiveness of representative features extracted from the environmental sounds. However, ESC often suffers from the semantically irrelevant frames and silent frames. In order to deal with this, we employ a frame-level attention model to focus on the semantically relevant frames and salient frames. Specifically, we first propose a convolutional recurrent neural network to learn spectro-temporal features and temporal correlations. Then, we extend our convolutional RNN model with a frame-level attention mechanism to learn discriminative feature representations for ESC. We investigated the classification performance when using different attention scaling function and applying different layers. Experiments were conducted on ESC-50 and ESC-10 datasets. Experimental results demonstrated the effectiveness of the proposed method and our method achieved the state-of-the-art or competitive classification accuracy with lower computational complexity. We also visualized our attention results and observed that the proposed attention mechanism was able to lead the network tofocus on the semantically relevant parts of environmental sounds.

Many learning tasks require us to deal with graph data which contains rich relational information among elements, leading increasing graph neural network (GNN) models to be deployed in industrial products for improving the quality of service. However, they also raise challenges to model authentication. It is necessary to protect the ownership of the GNN models, which motivates us to present a watermarking method to GNN models in this paper. In the proposed method, an Erdos-Renyi (ER) random graph with random node feature vectors and labels is randomly generated as a trigger to train the GNN to be protected together with the normal samples. During model training, the secret watermark is embedded into the label predictions of the ER graph nodes. During model verification, by activating a marked GNN with the trigger ER graph, the watermark can be reconstructed from the output to verify the ownership. Since the ER graph was randomly generated, by feeding it to a non-marked GNN, the label predictions of the graph nodes are random, resulting in a low false alarm rate (of the proposed work). Experimental results have also shown that, the performance of a marked GNN on its original task will not be impaired. Moreover, it is robust against model compression and fine-tuning, which has shown the superiority and applicability.

Text detection/localization, as an important task in computer vision, has

witnessed substantialadvancements in methodology and performance with

convolutional neural networks. However, the vastmajority of popular methods use

rectangles or quadrangles to describe text regions. These representationshave

inherent drawbacks, especially relating to dense adjacent text and loose

regional text boundaries,which usually cause difficulty detecting arbitrarily

shaped text. In this paper, we propose a novel text regionrepresentation

method, with a robust pipeline, which can precisely detect dense adjacent text

instances witharbitrary shapes. We consider a text instance to be composed of

an adaptive central text region mask anda corresponding expanding ratio between

the central text region and the full text region. More specifically,our

pipeline generates adaptive central text regions and corresponding expanding

ratios with a proposedtraining strategy, followed by a new proposed

post-processing algorithm which expands central text regionsto the complete

text instance with the corresponding expanding ratios. We demonstrated that our

new textregion representation is effective, and that the pipeline can precisely

detect closely adjacent text instances ofarbitrary shapes. Experimental results

on common datasets demonstrate superior performance o

Transmission control protocol (TCP) congestion control is one of the key techniques to improve network performance. TCP congestion control algorithm identification (TCP identification) can be used to significantly improve network efficiency. Existing TCP identification methods can only be applied to limited number of TCP congestion control algorithms and focus on wired networks. In this paper, we proposed a machine learning based passive TCP identification method for wired and wireless networks. After comparing among three typical machine learning models, we concluded that the 4-layers Long Short Term Memory (LSTM) model achieves the best identification accuracy. Our approach achieves better than 98% accuracy in wired and wireless networks and works for newly proposed TCP congestion control algorithms.

17 Feb 2019

Indoor localization becomes a raising demand in our daily lives. Due to the

massive deployment in the indoor environment nowadays, WiFi systems have been

applied to high accurate localization recently. Although the traditional model

based localization scheme can achieve sub-meter level accuracy by fusing

multiple channel state information (CSI) observations, the corresponding

computational overhead is significant. To address this issue, the model-free

localization approach using deep learning framework has been proposed and the

classification based technique is applied. In this paper, instead of using

classification based mechanism, we propose to use a logistic regression based

scheme under the deep learning framework, which is able to achieve sub-meter

level accuracy (97.2cm medium distance error) in the standard laboratory

environment and maintain reasonable online prediction overhead under the single

WiFi AP settings. We hope the proposed logistic regression based scheme can

shed some light on the model-free localization technique and pave the way for

the practical deployment of deep learning based WiFi localization systems.

Recently, video scene text detection has received increasing attention due to its comprehensive applications. However, the lack of annotated scene text video datasets has become one of the most important problems, which hinders the development of video scene text detection. The existing scene text video datasets are not large-scale due to the expensive cost caused by manual labeling. In addition, the text instances in these datasets are too clear to be a challenge. To address the above issues, we propose a tracking based semi-automatic labeling strategy for scene text videos in this paper. We get semi-automatic scene text annotation by labeling manually for the first frame and tracking automatically for the subsequent frames, which avoid the huge cost of manual labeling. Moreover, a paired low-quality scene text video dataset named Text-RBL is proposed, consisting of raw videos, blurry videos, and low-resolution videos, labeled by the proposed convenient semi-automatic labeling strategy. Through an averaging operation and bicubic down-sampling operation over the raw videos, we can efficiently obtain blurry videos and low-resolution videos paired with raw videos separately. To verify the effectiveness of Text-RBL, we propose a baseline model combined with the text detector and tracker for video scene text detection. Moreover, a failure detection scheme is designed to alleviate the baseline model drift issue caused by complex scenes. Extensive experiments demonstrate that Text-RBL with paired low-quality videos labeled by the semi-automatic method can significantly improve the performance of the text detector in low-quality scenes.

Segmenting anatomical structures and lesions from ultrasound images contributes to disease assessment. Weakly supervised learning (WSL) based on sparse annotation has achieved encouraging performance and demonstrated the potential to reduce annotation costs. This study attempts to introduce scribble-based WSL into ultrasound image segmentation tasks. However, ultrasound images often suffer from poor contrast and unclear edges, coupled with insufficient supervison signals for edges, posing challenges to edge prediction. Uncertainty modeling has been proven to facilitate models in dealing with these issues. Nevertheless, existing uncertainty estimation paradigms are not robust enough and often filter out predictions near decision boundaries, resulting in unstable edge predictions. Therefore, we propose leveraging predictions near decision boundaries effectively. Specifically, we introduce Dempster-Shafer Theory (DST) of evidence to design an Evidence-Guided Consistency strategy. This strategy utilizes high-evidence predictions, which are more likely to occur near high-density regions, to guide the optimization of low-evidence predictions that may appear near decision boundaries. Furthermore, the diverse sizes and locations of lesions in ultrasound images pose a challenge for CNNs with local receptive fields, as they struggle to model global information. Therefore, we introduce Visual Mamba based on structured state space sequence models, which achieves long-range dependency with linear computational complexity, and we construct a novel hybrid CNN-Mamba framework. During training, the collaboration between the CNN branch and the Mamba branch in the proposed framework draws inspiration from each other based on the EGC strategy. Experiments demonstrate the competitiveness of the proposed method. Dataset and code will be available on this https URL.

There are no more papers matching your filters at the moment.