University of Illinois at Urbana–Champaign

Jailbreaks on large language models (LLMs) have recently received increasing attention. For a comprehensive assessment of LLM safety, it is essential to consider jailbreaks with diverse attributes, such as contextual coherence and sentiment/stylistic variations, and hence it is beneficial to study controllable jailbreaking, i.e. how to enforce control on LLM attacks. In this paper, we formally formulate the controllable attack generation problem, and build a novel connection between this problem and controllable text generation, a well-explored topic of natural language processing. Based on this connection, we adapt the Energy-based Constrained Decoding with Langevin Dynamics (COLD), a state-of-the-art, highly efficient algorithm in controllable text generation, and introduce the COLD-Attack framework which unifies and automates the search of adversarial LLM attacks under a variety of control requirements such as fluency, stealthiness, sentiment, and left-right-coherence. The controllability enabled by COLD-Attack leads to diverse new jailbreak scenarios which not only cover the standard setting of generating fluent (suffix) attack with continuation constraint, but also allow us to address new controllable attack settings such as revising a user query adversarially with paraphrasing constraint, and inserting stealthy attacks in context with position constraint. Our extensive experiments on various LLMs (Llama-2, Mistral, Vicuna, Guanaco, GPT-3.5, and GPT-4) show COLD-Attack's broad applicability, strong controllability, high success rate, and attack transferability. Our code is available at this https URL.

Simulated Policy Learning (SimPLe) is an iterative model-based reinforcement learning algorithm that achieves superior sample efficiency on Atari games by training a policy within a learned stochastic world model. The approach consistently outperforms state-of-the-art model-free baselines like Rainbow and PPO, sometimes by over 10 times, under a strict budget of 100,000 real-environment interactions, addressing a long-standing challenge in model-based RL on high-dimensional visual inputs.

PromptGuard introduces a soft prompt-guided moderation technique for text-to-image (T2I) models, effectively reducing the generation of Not-Safe-For-Work (NSFW) content by steering models towards safe and realistic outputs without altering core model parameters. The method achieves an average unsafe ratio of 5.84% across diverse NSFW categories while maintaining benign image generation quality and significantly improving inference efficiency over prior techniques.

Prompt quality plays a critical role in the performance of large language models (LLMs), motivating a growing body of work on prompt optimization. Most existing methods optimize prompts over a fixed dataset, assuming static input distributions and offering limited support for iterative improvement. We introduce SIPDO (Self-Improving Prompts through Data-Augmented Optimization), a closed-loop framework for prompt learning that integrates synthetic data generation into the optimization process. SIPDO couples a synthetic data generator with a prompt optimizer, where the generator produces new examples that reveal current prompt weaknesses and the optimizer incrementally refines the prompt in response. This feedback-driven loop enables systematic improvement of prompt performance without assuming access to external supervision or new tasks. Experiments across question answering and reasoning benchmarks show that SIPDO outperforms standard prompt tuning methods, highlighting the value of integrating data synthesis into prompt learning workflows.

In the context of Byzantine consensus problems such as Byzantine broadcast (BB) and Byzantine agreement (BA), the good-case setting aims to study the minimal possible latency of a BB or BA protocol under certain favorable conditions, namely the designated leader being correct (for BB), or all parties having the same input value (for BA). We provide a full characterization of the feasibility and impossibility of good-case latency, for both BA and BB, in the synchronous sleepy model. Surprisingly to us, we find irrational resilience thresholds emerging: 2-round good-case BB is possible if and only if at all times, at least φ1≈0.618 fraction of the active parties are correct, where φ=21+5≈1.618 is the golden ratio; 1-round good-case BA is possible if and only if at least 21≈0.707 fraction of the active parties are correct.

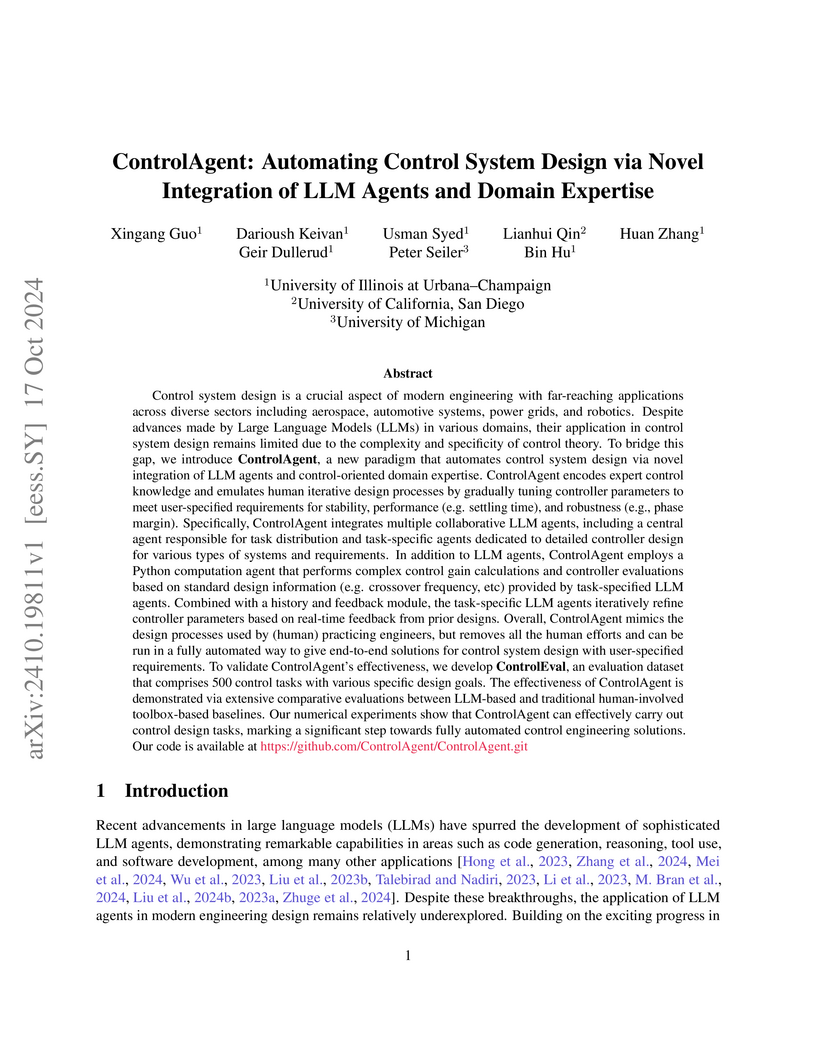

Control system design is a crucial aspect of modern engineering with far-reaching applications across diverse sectors including aerospace, automotive systems, power grids, and robotics. Despite advances made by Large Language Models (LLMs) in various domains, their application in control system design remains limited due to the complexity and specificity of control theory. To bridge this gap, we introduce ControlAgent, a new paradigm that automates control system design via novel integration of LLM agents and control-oriented domain expertise. ControlAgent encodes expert control knowledge and emulates human iterative design processes by gradually tuning controller parameters to meet user-specified requirements for stability, performance, and robustness. ControlAgent integrates multiple collaborative LLM agents, including a central agent responsible for task distribution and task-specific agents dedicated to detailed controller design for various types of systems and requirements. ControlAgent also employs a Python computation agent that performs complex calculations and controller evaluations based on standard design information provided by task-specified LLM agents. Combined with a history and feedback module, the task-specific LLM agents iteratively refine controller parameters based on real-time feedback from prior designs. Overall, ControlAgent mimics the design processes used by (human) practicing engineers, but removes all the human efforts and can be run in a fully automated way to give end-to-end solutions for control system design with user-specified requirements. To validate ControlAgent's effectiveness, we develop ControlEval, an evaluation dataset that comprises 500 control tasks with various specific design goals. The effectiveness of ControlAgent is demonstrated via extensive comparative evaluations between LLM-based and traditional human-involved toolbox-based baselines.

Backdoor attack has emerged as a major security threat to deep neural networks (DNNs). While existing defense methods have demonstrated promising results on detecting or erasing backdoors, it is still not clear whether robust training methods can be devised to prevent the backdoor triggers being injected into the trained model in the first place. In this paper, we introduce the concept of \emph{anti-backdoor learning}, aiming to train \emph{clean} models given backdoor-poisoned data. We frame the overall learning process as a dual-task of learning the \emph{clean} and the \emph{backdoor} portions of data. From this view, we identify two inherent characteristics of backdoor attacks as their weaknesses: 1) the models learn backdoored data much faster than learning with clean data, and the stronger the attack the faster the model converges on backdoored data; 2) the backdoor task is tied to a specific class (the backdoor target class). Based on these two weaknesses, we propose a general learning scheme, Anti-Backdoor Learning (ABL), to automatically prevent backdoor attacks during training. ABL introduces a two-stage \emph{gradient ascent} mechanism for standard training to 1) help isolate backdoor examples at an early training stage, and 2) break the correlation between backdoor examples and the target class at a later training stage. Through extensive experiments on multiple benchmark datasets against 10 state-of-the-art attacks, we empirically show that ABL-trained models on backdoor-poisoned data achieve the same performance as they were trained on purely clean data. Code is available at \url{this https URL}.

This paper presents DIMES, a differentiable meta solver designed to tackle large-scale combinatorial optimization problems by learning a compact, continuous parameterization of solution distributions. The framework, which combines a GNN with a MAML-inspired meta-learning approach and REINFORCE, achieves superior scalability and solution quality on problems like TSP-10000 and large MIS instances, outperforming previous deep learning methods without requiring ground-truth optimal solutions.

10 Jun 2025

University of Cincinnati University of CambridgeCharles University

University of CambridgeCharles University University of Southern California

University of Southern California New York University

New York University University of California, Irvine

University of California, Irvine Cornell UniversityCSIC

Cornell UniversityCSIC Argonne National Laboratory

Argonne National Laboratory University of PennsylvaniaPacific Northwest National Laboratory

University of PennsylvaniaPacific Northwest National Laboratory The Pennsylvania State University

The Pennsylvania State University Lawrence Berkeley National LaboratoryUniversity of Duisburg-EssenOak Ridge National LaboratoryUniversity of GenevaNational Renewable Energy LaboratoryUniversity of Colorado BoulderTechnical University of DenmarkUniversidad de ChileColorado School of MinesUniversity of Tennessee, KnoxvilleUniversity of Illinois at Urbana–ChampaignJustus Liebig University GiessenUniversity of TuebingenSolid Power Operating IncUniversity of Missouri/Columbia

Lawrence Berkeley National LaboratoryUniversity of Duisburg-EssenOak Ridge National LaboratoryUniversity of GenevaNational Renewable Energy LaboratoryUniversity of Colorado BoulderTechnical University of DenmarkUniversidad de ChileColorado School of MinesUniversity of Tennessee, KnoxvilleUniversity of Illinois at Urbana–ChampaignJustus Liebig University GiessenUniversity of TuebingenSolid Power Operating IncUniversity of Missouri/Columbia

University of CambridgeCharles University

University of CambridgeCharles University University of Southern California

University of Southern California New York University

New York University University of California, Irvine

University of California, Irvine Cornell UniversityCSIC

Cornell UniversityCSIC Argonne National Laboratory

Argonne National Laboratory University of PennsylvaniaPacific Northwest National Laboratory

University of PennsylvaniaPacific Northwest National Laboratory The Pennsylvania State University

The Pennsylvania State University Lawrence Berkeley National LaboratoryUniversity of Duisburg-EssenOak Ridge National LaboratoryUniversity of GenevaNational Renewable Energy LaboratoryUniversity of Colorado BoulderTechnical University of DenmarkUniversidad de ChileColorado School of MinesUniversity of Tennessee, KnoxvilleUniversity of Illinois at Urbana–ChampaignJustus Liebig University GiessenUniversity of TuebingenSolid Power Operating IncUniversity of Missouri/Columbia

Lawrence Berkeley National LaboratoryUniversity of Duisburg-EssenOak Ridge National LaboratoryUniversity of GenevaNational Renewable Energy LaboratoryUniversity of Colorado BoulderTechnical University of DenmarkUniversidad de ChileColorado School of MinesUniversity of Tennessee, KnoxvilleUniversity of Illinois at Urbana–ChampaignJustus Liebig University GiessenUniversity of TuebingenSolid Power Operating IncUniversity of Missouri/ColumbiaMicroscopy is a primary source of information on materials structure and

functionality at nanometer and atomic scales. The data generated is often

well-structured, enriched with metadata and sample histories, though not always

consistent in detail or format. The adoption of Data Management Plans (DMPs) by

major funding agencies promotes preservation and access. However, deriving

insights remains difficult due to the lack of standardized code ecosystems,

benchmarks, and integration strategies. As a result, data usage is inefficient

and analysis time is extensive. In addition to post-acquisition analysis, new

APIs from major microscope manufacturers enable real-time, ML-based analytics

for automated decision-making and ML-agent-controlled microscope operation.

Yet, a gap remains between the ML and microscopy communities, limiting the

impact of these methods on physics, materials discovery, and optimization.

Hackathons help bridge this divide by fostering collaboration between ML

researchers and microscopy experts. They encourage the development of novel

solutions that apply ML to microscopy, while preparing a future workforce for

instrumentation, materials science, and applied ML. This hackathon produced

benchmark datasets and digital twins of microscopes to support community growth

and standardized workflows. All related code is available at GitHub:

this https URL

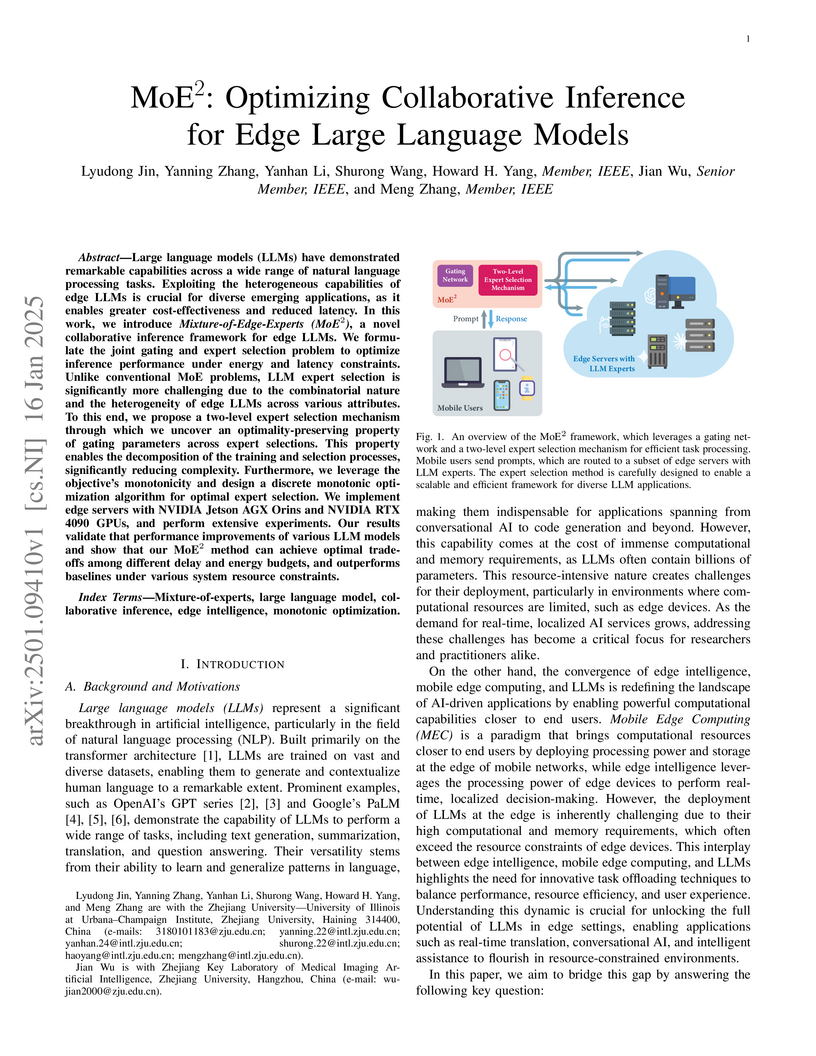

Researchers at Zhejiang University developed MoE2, a collaborative inference framework designed to optimize the deployment of large language models (LLMs) at the network edge. This framework efficiently balances performance, latency, and energy consumption by intelligently selecting heterogeneous edge LLM experts for diverse task requests, demonstrating superior accuracy and resource management on a real-world edge testbed.

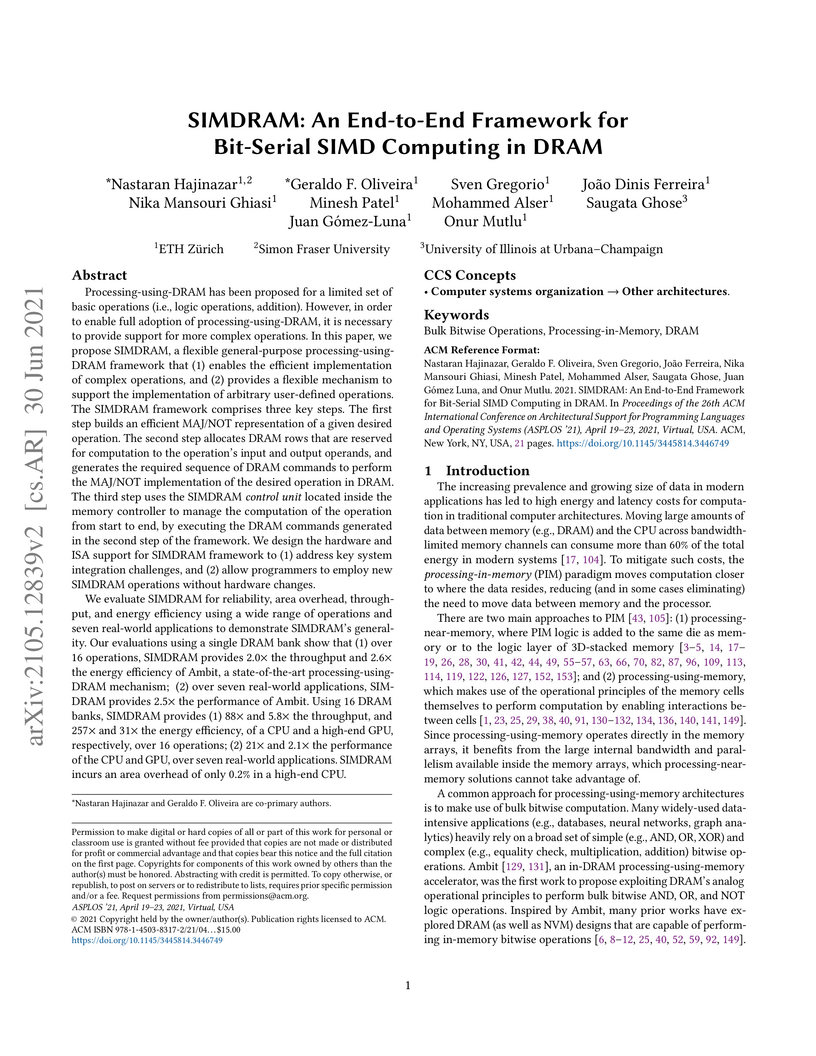

Processing-using-DRAM has been proposed for a limited set of basic operations (i.e., logic operations, addition). However, in order to enable full adoption of processing-using-DRAM, it is necessary to provide support for more complex operations. In this paper, we propose SIMDRAM, a flexible general-purpose processing-using-DRAM framework that (1) enables the efficient implementation of complex operations, and (2) provides a flexible mechanism to support the implementation of arbitrary user-defined operations. The SIMDRAM framework comprises three key steps. The first step builds an efficient MAJ/NOT representation of a given desired operation. The second step allocates DRAM rows that are reserved for computation to the operation's input and output operands, and generates the required sequence of DRAM commands to perform the MAJ/NOT implementation of the desired operation in DRAM. The third step uses the SIMDRAM control unit located inside the memory controller to manage the computation of the operation from start to end, by executing the DRAM commands generated in the second step of the framework. We design the hardware and ISA support for SIMDRAM framework to (1) address key system integration challenges, and (2) allow programmers to employ new SIMDRAM operations without hardware changes.

We evaluate SIMDRAM for reliability, area overhead, throughput, and energy efficiency using a wide range of operations and seven real-world applications to demonstrate SIMDRAM's generality. Using 16 DRAM banks, SIMDRAM provides (1) 88x and 5.8x the throughput, and 257x and 31x the energy efficiency, of a CPU and a high-end GPU, respectively, over 16 operations; (2) 21x and 2.1x the performance of the CPU and GPU, over seven real-world applications. SIMDRAM incurs an area overhead of only 0.2% in a high-end CPU.

PREMISE introduces a prompt-only framework for efficient mathematical reasoning in large language models by using trace-level diagnostics and multi-objective textual optimization. This approach reduces token usage by up to 87.5% and monetary costs by 69-82% for black-box models like Claude and Gemini, while maintaining or improving answer accuracy.

In 1975, Erdős and Sauer asked to estimate, for any constant r, the maximum number of edges an n-vertex graph can have without containing an r-regular subgraph. In a recent breakthrough, Janzer and Sudakov proved that any n-vertex graph with no r-regular subgraph has at most Crnloglogn edges, matching an earlier lower bound by Pyber, Rödl and Szemerédi and thereby resolving the Erdős-Sauer problem up to a constant depending on r. We prove that every n-vertex graph without an r-regular subgraph has at most Cr2nloglogn edges. This bound is tight up to the value of C for n≥n0(r) and hence resolves the Erdős-Sauer problem up to an absolute constant.

Moreover, we obtain similarly tight results for the whole range of possible values of r (i.e., not just when r is a constant), apart from a small error term at a transition point near r≈logn, where, perhaps surprisingly, the answer changes. More specifically, we show that every n-vertex graph with average degree at least min(Crlog(n/r),Cr2loglogn) contains an r-regular subgraph. The bound Crlog(n/r) is tight for r≥logn, while the bound Cr2loglogn is tight for r<(\log n)^{1-\Omega(1)}. These results resolve a problem of Rödl and Wysocka from 1997 for almost all values of r.

Among other tools, we develop a novel random process that efficiently finds a very nearly regular subgraph in any almost-regular graph. A key step in our proof uses this novel random process to show that every K-almost-regular graph with average degree d contains an r-regular subgraph for some r=ΩK(d), which is of independent interest.

DRAM is the dominant main memory technology used in modern computing systems. Computing systems implement a memory controller that interfaces with DRAM via DRAM commands. DRAM executes the given commands using internal components (e.g., access transistors, sense amplifiers) that are orchestrated by DRAM internal timings, which are fixed foreach DRAM command. Unfortunately, the use of fixed internal timings limits the types of operations that DRAM can perform and hinders the implementation of new functionalities and custom mechanisms that improve DRAM reliability, performance and energy. To overcome these limitations, we propose enabling programmable DRAM internal timings for controlling in-DRAM components. To this end, we design CODIC, a new low-cost DRAM substrate that enables fine-grained control over four previously fixed internal DRAM timings that are key to many DRAM operations. We implement CODIC with only minimal changes to the DRAM chip and the DDRx interface. To demonstrate the potential of CODIC, we propose two new CODIC-based security mechanisms that outperform state-of-the-art mechanisms in several ways: (1) a new DRAM Physical Unclonable Function (PUF) that is more robust and has significantly higher throughput than state-of-the-art DRAM PUFs, and (2) the first cold boot attack prevention mechanism that does not introduce any performance or energy overheads at runtime.

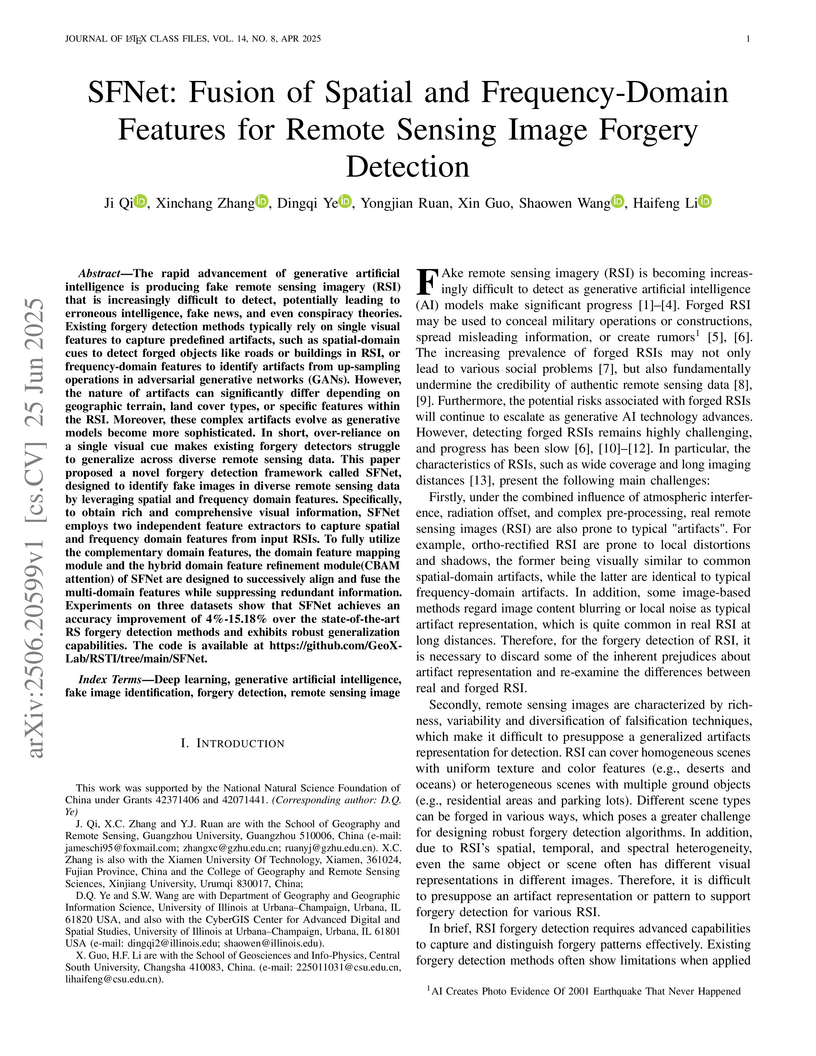

The rapid advancement of generative artificial intelligence is producing fake remote sensing imagery (RSI) that is increasingly difficult to detect, potentially leading to erroneous intelligence, fake news, and even conspiracy theories. Existing forgery detection methods typically rely on single visual features to capture predefined artifacts, such as spatial-domain cues to detect forged objects like roads or buildings in RSI, or frequency-domain features to identify artifacts from up-sampling operations in adversarial generative networks (GANs). However, the nature of artifacts can significantly differ depending on geographic terrain, land cover types, or specific features within the RSI. Moreover, these complex artifacts evolve as generative models become more sophisticated. In short, over-reliance on a single visual cue makes existing forgery detectors struggle to generalize across diverse remote sensing data. This paper proposed a novel forgery detection framework called SFNet, designed to identify fake images in diverse remote sensing data by leveraging spatial and frequency domain features. Specifically, to obtain rich and comprehensive visual information, SFNet employs two independent feature extractors to capture spatial and frequency domain features from input RSIs. To fully utilize the complementary domain features, the domain feature mapping module and the hybrid domain feature refinement module(CBAM attention) of SFNet are designed to successively align and fuse the multi-domain features while suppressing redundant information. Experiments on three datasets show that SFNet achieves an accuracy improvement of 4%-15.18% over the state-of-the-art RS forgery detection methods and exhibits robust generalization capabilities. The code is available at this https URL.

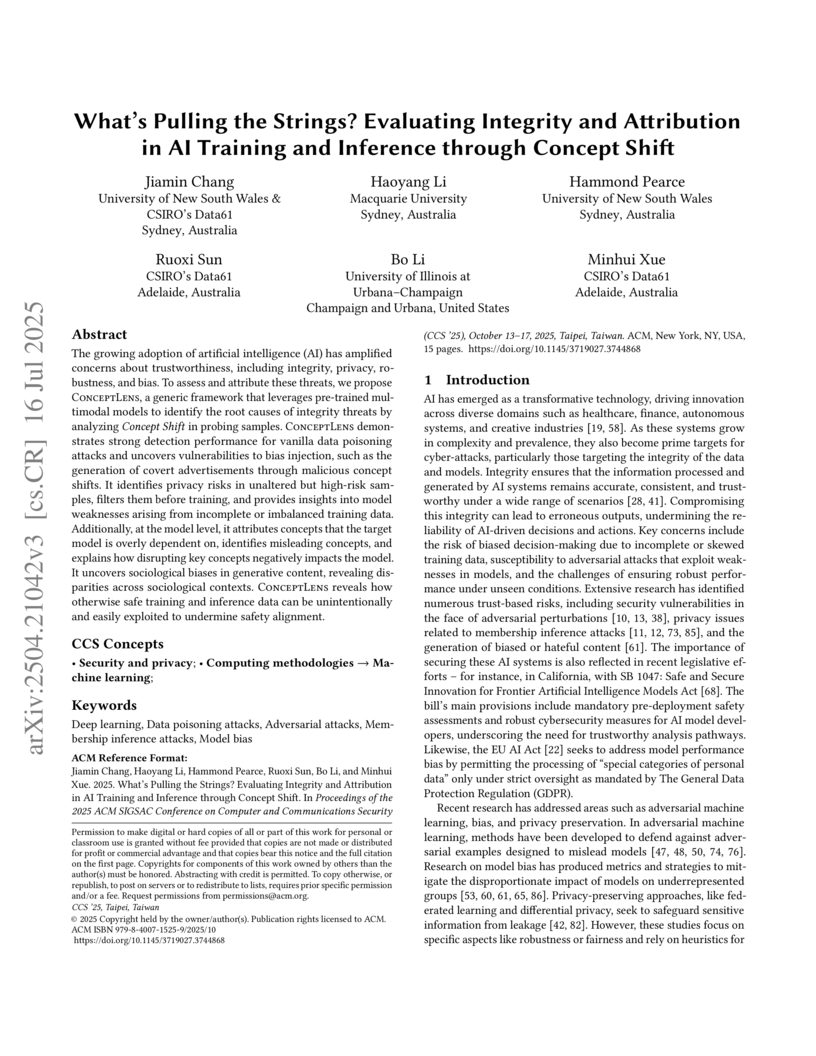

The growing adoption of artificial intelligence (AI) has amplified concerns about trustworthiness, including integrity, privacy, robustness, and bias. To assess and attribute these threats, we propose ConceptLens, a generic framework that leverages pre-trained multimodal models to identify the root causes of integrity threats by analyzing Concept Shift in probing samples. ConceptLens demonstrates strong detection performance for vanilla data poisoning attacks and uncovers vulnerabilities to bias injection, such as the generation of covert advertisements through malicious concept shifts. It identifies privacy risks in unaltered but high-risk samples, filters them before training, and provides insights into model weaknesses arising from incomplete or imbalanced training data. Additionally, at the model level, it attributes concepts that the target model is overly dependent on, identifies misleading concepts, and explains how disrupting key concepts negatively impacts the model. Furthermore, it uncovers sociological biases in generative content, revealing disparities across sociological contexts. Strikingly, ConceptLens reveals how safe training and inference data can be unintentionally and easily exploited, potentially undermining safety alignment. Our study informs actionable insights to breed trust in AI systems, thereby speeding adoption and driving greater innovation.

This paper studies social system inference from a single trajectory of public

evolving opinions, wherein observation noise leads to the statistical

dependence of samples on time and coordinates. We first propose a cyber-social

system that comprises individuals in a social network and a set of information

sources in a cyber layer, whose opinion dynamics explicitly takes confirmation

bias, novelty bias and process noise into account. Based on the proposed social

model, we then study the sample complexity of least-square auto-regressive

model estimation, which governs the number of observations that are sufficient

for the identified model to achieve the prescribed levels of accuracy and

confidence. Building on the identified social model, we then investigate social

inference, with particular focus on the weighted network topology, the

subconscious bias and the model parameters of confirmation bias and novelty

bias. Finally, the theoretical results and the effectiveness of the proposed

social model and inference algorithm are validated by the US Senate Member

Ideology data.

Recent studies have highlighted adversarial examples as a ubiquitous threat to different neural network models and many downstream applications. Nonetheless, as unique data properties have inspired distinct and powerful learning principles, this paper aims to explore their potentials towards mitigating adversarial inputs. In particular, our results reveal the importance of using the temporal dependency in audio data to gain discriminate power against adversarial examples. Tested on the automatic speech recognition (ASR) tasks and three recent audio adversarial attacks, we find that (i) input transformation developed from image adversarial defense provides limited robustness improvement and is subtle to advanced attacks; (ii) temporal dependency can be exploited to gain discriminative power against audio adversarial examples and is resistant to adaptive attacks considered in our experiments. Our results not only show promising means of improving the robustness of ASR systems, but also offer novel insights in exploiting domain-specific data properties to mitigate negative effects of adversarial examples.

Deep neural networks (DNNs) have been found to be vulnerable to backdoor attacks, raising security concerns about their deployment in mission-critical applications. While existing defense methods have demonstrated promising results, it is still not clear how to effectively remove backdoor-associated neurons in backdoored DNNs. In this paper, we propose a novel defense called \emph{Reconstructive Neuron Pruning} (RNP) to expose and prune backdoor neurons via an unlearning and then recovering process. Specifically, RNP first unlearns the neurons by maximizing the model's error on a small subset of clean samples and then recovers the neurons by minimizing the model's error on the same data. In RNP, unlearning is operated at the neuron level while recovering is operated at the filter level, forming an asymmetric reconstructive learning procedure. We show that such an asymmetric process on only a few clean samples can effectively expose and prune the backdoor neurons implanted by a wide range of attacks, achieving a new state-of-the-art defense performance. Moreover, the unlearned model at the intermediate step of our RNP can be directly used to improve other backdoor defense tasks including backdoor removal, trigger recovery, backdoor label detection, and backdoor sample detection. Code is available at \url{this https URL}.

Many problems of interest in engineering, medicine, and the fundamental

sciences rely on high-fidelity flow simulation, making performant computational

fluid dynamics solvers a mainstay of the open-source software community. A

previous work (Bryngelson et al., Comp. Phys. Comm. (2021)) published MFC 3.0

with numerous physical features, numerics, and scalability. MFC 5.0 is a marked

update to MFC 3.0, including a broad set of well-established and novel physical

models and numerical methods, and the introduction of XPU acceleration. We

exhibit state-of-the-art performance and ideal scaling on the first two

exascale supercomputers, OLCF Frontier and LLNL El Capitan. Combined with MFC's

single-accelerator performance, MFC achieves exascale computation in practice.

New physical features include the immersed boundary method, N-fluid phase

change, Euler--Euler and Euler--Lagrange sub-grid bubble models,

fluid-structure interaction, hypo- and hyper-elastic materials, chemically

reacting flow, two-material surface tension, magnetohydrodynamics (MHD), and

more. Numerical techniques now represent the current state-of-the-art,

including general relaxation characteristic boundary conditions, WENO variants,

Strang splitting for stiff sub-grid flow features, and low Mach number

treatments. Weak scaling to tens of thousands of GPUs on OLCF Summit and

Frontier and LLNL El Capitan sees efficiencies within 5% of ideal to their full

system sizes. Strong scaling results for a 16-times increase in device count

show parallel efficiencies over 90% on OLCF Frontier. MFC's software stack has

improved, including continuous integration, ensuring code resilience and

correctness through over 300 regression tests; metaprogramming, reducing code

length and maintaining performance portability; and code generation for

computing chemical reactions.

There are no more papers matching your filters at the moment.