Cornell

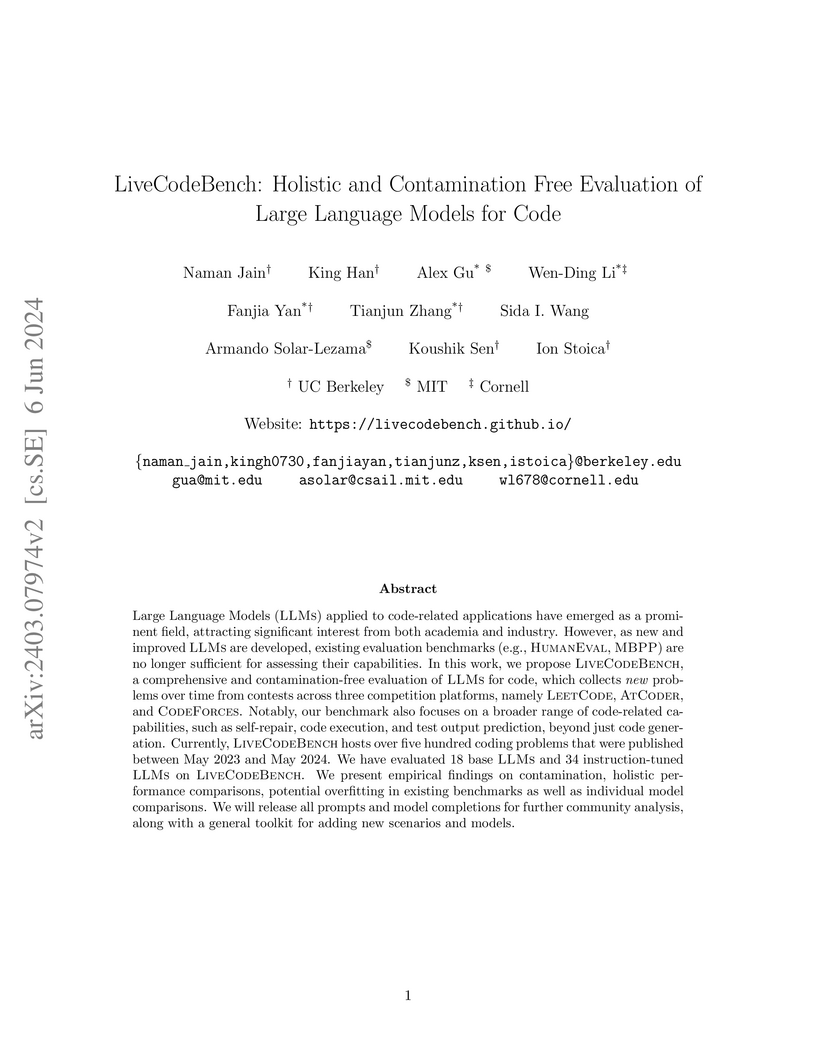

LiveCodeBench introduces a dynamic, contamination-free, and holistic benchmark for evaluating Large Language Models for code, utilizing problems from recent competitive programming contests. Its evaluation of 52 models demonstrates evidence of data contamination in existing benchmarks and highlights a persistent performance gap between state-of-the-art closed models and open-access models across diverse coding tasks.

DataComp-LM introduces a standardized, large-scale benchmark for evaluating language model training data curation strategies, complete with an openly released corpus, framework, and models. Its DCLM-BASELINE 7B model, trained on carefully filtered Common Crawl data, achieves 64% MMLU 5-shot accuracy, outperforming previous open-data state-of-the-art models while requiring substantially less compute.

This research explores combining inductive and transductive reasoning for few-shot abstract reasoning on the Abstraction and Reasoning Corpus (ARC), demonstrating a strong, stable complementarity between these paradigms. The approach, which uses fine-tuned Llama3.1-8B-instruct models and a novel LLM-driven synthetic data generation pipeline, achieved 56.75% accuracy on ARC validation tasks, approaching average human performance.

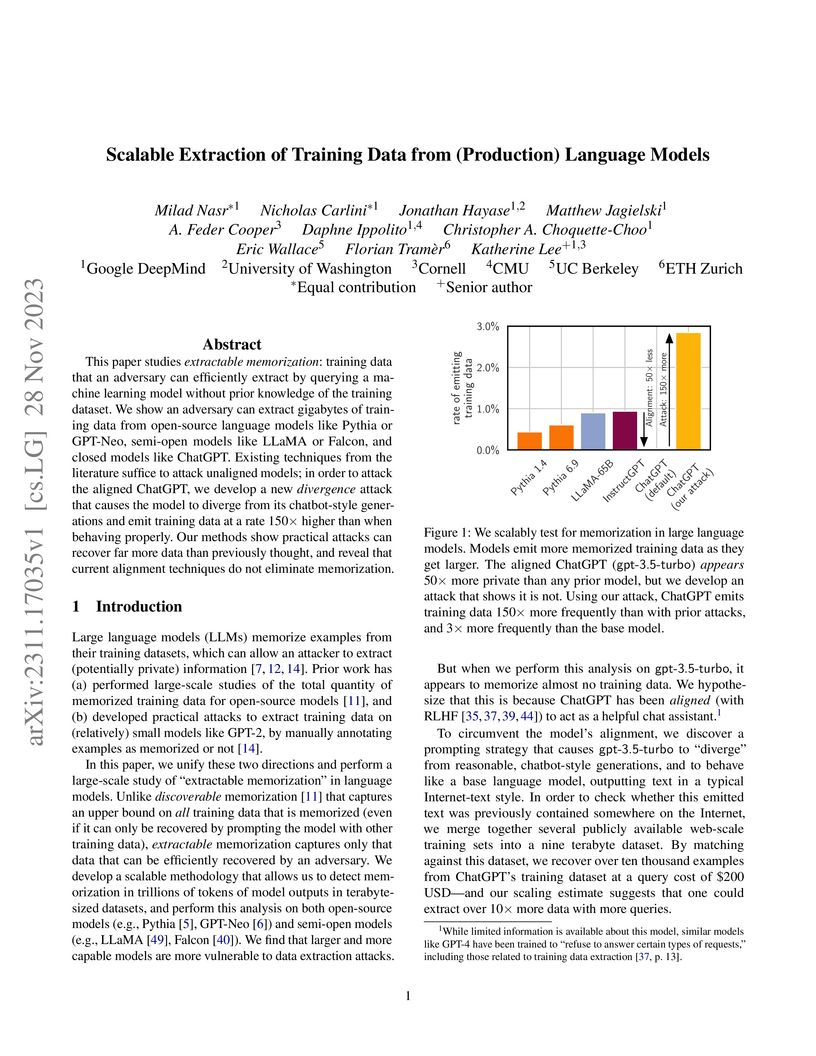

This research from Google DeepMind and academic institutions developed scalable methods to extract and quantify memorized training data from various Large Language Models, including aligned production models like ChatGPT. The study demonstrated that current alignment techniques offer limited protection against extraction, enabling the retrieval of millions of unique memorized sequences, including sensitive personal information and copyrighted content.

CURIE introduces a multidisciplinary benchmark for evaluating Large Language Models on complex, long-context scientific understanding and reasoning tasks derived from full-length research papers. Evaluations across eight state-of-the-art models reveal that even the best-performing model, Claude-3 Opus, achieves only 32% overall accuracy, indicating substantial room for improvement in scientific problem-solving capabilities.

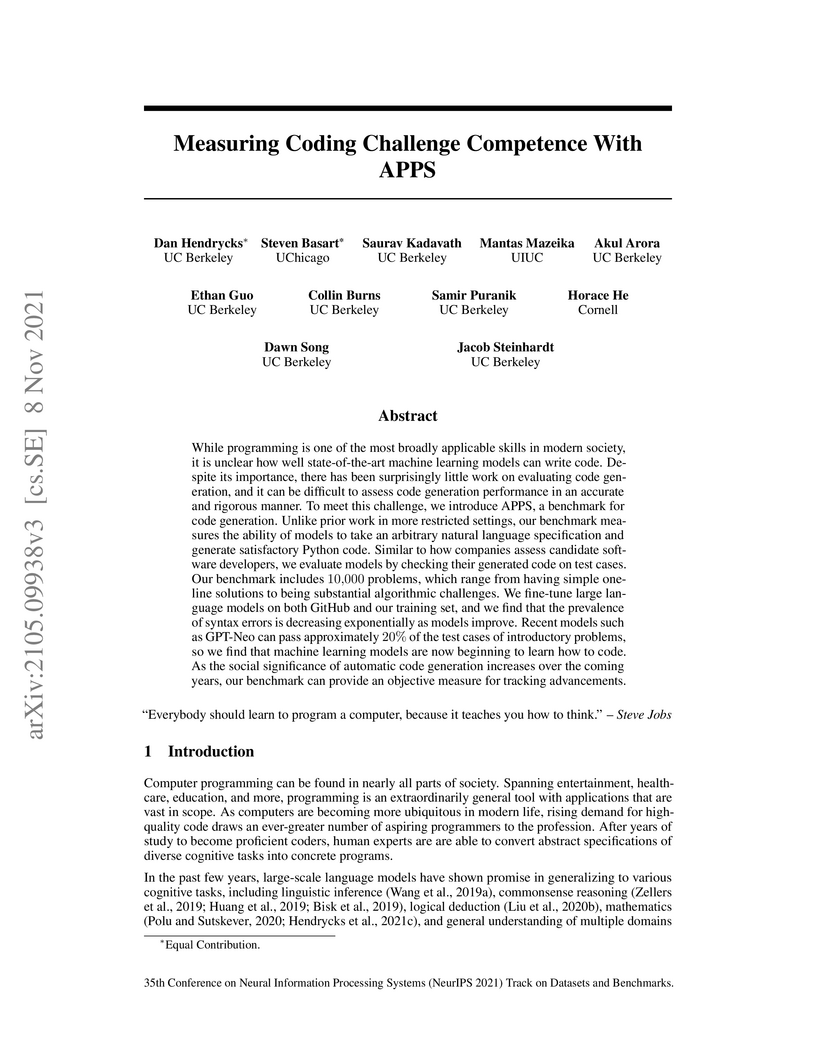

APPS introduces a comprehensive benchmark for evaluating large language models' ability to generate functional Python code from natural language specifications, utilizing a vast set of verifiable test cases. The benchmark demonstrates that current models can achieve some functional correctness on simpler tasks but struggle significantly with complex algorithmic challenges, highlighting the importance of fine-tuning on code and the inadequacy of traditional metrics like BLEU.

Researchers from Apple, Cornell, and Weill Cornell Medicine developed PRISM-Bench, a new benchmark using puzzle-based visual tasks to diagnose the step-by-step reasoning fidelity of Multimodal Large Language Models (MLLMs). It revealed that state-of-the-art MLLMs achieve around 50-60% accuracy in identifying a single logical error in a multi-step solution, and that general puzzle-solving ability is only moderately correlated with error detection.

02 Dec 2024

The application of language models (LMs) to molecular structure generation using line notations such as SMILES and SELFIES has been well-established in the field of cheminformatics. However, extending these models to generate 3D molecular structures presents significant challenges. Two primary obstacles emerge: (1) the difficulty in designing a 3D line notation that ensures SE(3)-invariant atomic coordinates, and (2) the non-trivial task of tokenizing continuous coordinates for use in LMs, which inherently require discrete inputs. To address these challenges, we propose Mol-StrucTok, a novel method for tokenizing 3D molecular structures. Our approach comprises two key innovations: (1) We design a line notation for 3D molecules by extracting local atomic coordinates in a spherical coordinate system. This notation builds upon existing 2D line notations and remains agnostic to their specific forms, ensuring compatibility with various molecular representation schemes. (2) We employ a Vector Quantized Variational Autoencoder (VQ-VAE) to tokenize these coordinates, treating them as generation descriptors. To further enhance the representation, we incorporate neighborhood bond lengths and bond angles as understanding descriptors. Leveraging this tokenization framework, we train a GPT-2 style model for 3D molecular generation tasks. Results demonstrate strong performance with significantly faster generation speeds and competitive chemical stability compared to previous methods. Further, by integrating our learned discrete representations into Graphormer model for property prediction on QM9 dataset, Mol-StrucTok reveals consistent improvements across various molecular properties, underscoring the versatility and robustness of our approach.

Revisiting VerilogEval: A Year of Improvements in Large-Language Models for Hardware Code Generation

Revisiting VerilogEval: A Year of Improvements in Large-Language Models for Hardware Code Generation

This research provides a contemporary evaluation of large-language models (LLMs) for Verilog code generation, demonstrating significant performance gains with GPT-4o reaching a 63% pass rate and the open-source Llama3.1 405B achieving 58%. It introduces VerilogEval v2, an enhanced benchmark supporting specification-to-RTL tasks, in-context learning, and automatic failure classification for more robust evaluation.

Researchers at Cornell University and DeepMind developed Bidirectional Gated SSM (BiGS), an architecture that achieves performance comparable to BERT on standard NLP benchmarks without self-attention, utilizing State-Space Models combined with multiplicative gating. The model demonstrated effective processing of sequences up to 4096 tokens and exhibited distinct inductive biases for syntactic understanding.

Estimating the geographical range of a species from sparse observations is a challenging and important geospatial prediction problem. Given a set of locations where a species has been observed, the goal is to build a model to predict whether the species is present or absent at any location. This problem has a long history in ecology, but traditional methods struggle to take advantage of emerging large-scale crowdsourced datasets which can include tens of millions of records for hundreds of thousands of species. In this work, we use Spatial Implicit Neural Representations (SINRs) to jointly estimate the geographical range of 47k species simultaneously. We find that our approach scales gracefully, making increasingly better predictions as we increase the number of species and the amount of data per species when training. To make this problem accessible to machine learning researchers, we provide four new benchmarks that measure different aspects of species range estimation and spatial representation learning. Using these benchmarks, we demonstrate that noisy and biased crowdsourced data can be combined with implicit neural representations to approximate expert-developed range maps for many species.

Collaborative machine learning and related techniques such as federated learning allow multiple participants, each with his own training dataset, to build a joint model by training locally and periodically exchanging model updates. We demonstrate that these updates leak unintended information about participants' training data and develop passive and active inference attacks to exploit this leakage. First, we show that an adversarial participant can infer the presence of exact data points -- for example, specific locations -- in others' training data (i.e., membership inference). Then, we show how this adversary can infer properties that hold only for a subset of the training data and are independent of the properties that the joint model aims to capture. For example, he can infer when a specific person first appears in the photos used to train a binary gender classifier. We evaluate our attacks on a variety of tasks, datasets, and learning configurations, analyze their limitations, and discuss possible defenses.

Researchers from Microsoft Research and collaborators introduce "Loopy," a toolchain that leverages large language models (LLMs) to generate inductive loop invariants for C programs. Integrating LLM-generated candidates with symbolic verification (Houdini) and an LLM-based repair mechanism, Loopy successfully verified 398 out of 469 benchmarks and solved 31 unique instances where a state-of-the-art symbolic verifier failed.

Simulating transition dynamics between metastable states is a fundamental challenge in dynamical systems and stochastic processes with wide real-world applications in understanding protein folding, chemical reactions and neural activities. However, the computational challenge often lies on sampling exponentially many paths in which only a small fraction ends in the target metastable state due to existence of high energy barriers. To amortize the cost, we propose a data-driven approach to warm-up the simulation by learning nonlinear interpolations from local dynamics. Specifically, we infer a potential energy function from local dynamics data. To find plausible paths between two metastable states, we formulate a generalized flow matching framework that learns a vector field to sample propable paths between the two marginal densities under the learned energy function. Furthermore, we iteratively refine the model by assigning importance weights to the sampled paths and buffering more likely paths for training. We validate the effectiveness of the proposed method to sample probable paths on both synthetic and real-world molecular systems.

We consider the problem of learning a realization of a partially observed bilinear dynamical system (BLDS) from noisy input-output data. Given a single trajectory of input-output samples, we provide a finite time analysis for learning the system's Markov-like parameters, from which a balanced realization of the bilinear system can be obtained. Our bilinear system identification algorithm learns the system's Markov-like parameters by regressing the outputs to highly correlated, nonlinear, and heavy-tailed covariates. Moreover, the stability of BLDS depends on the sequence of inputs used to excite the system. These properties, unique to partially observed bilinear dynamical systems, pose significant challenges to the analysis of our algorithm for learning the unknown dynamics. We address these challenges and provide high probability error bounds on our identification algorithm under a uniform stability assumption. Our analysis provides insights into system theoretic quantities that affect learning accuracy and sample complexity. Lastly, we perform numerical experiments with synthetic data to reinforce these insights.

We present Hookpad Aria, a generative AI system designed to assist musicians

in writing Western pop songs. Our system is seamlessly integrated into Hookpad,

a web-based editor designed for the composition of lead sheets: symbolic music

scores that describe melody and harmony. Hookpad Aria has numerous generation

capabilities designed to assist users in non-sequential composition workflows,

including: (1) generating left-to-right continuations of existing material, (2)

filling in missing spans in the middle of existing material, and (3) generating

harmony from melody and vice versa. Hookpad Aria is also a scalable data

flywheel for music co-creation -- since its release in March 2024, Aria has

generated 318k suggestions for 3k users who have accepted 74k into their songs.

More information about Hookpad Aria is available at

this https URL

Random projections reduce the dimension of a set of vectors while preserving structural information, such as distances between vectors in the set. This paper proposes a novel use of row-product random matrices in random projection, where we call it Tensor Random Projection (TRP). It requires substantially less memory than existing dimension reduction maps. The TRP map is formed as the Khatri-Rao product of several smaller random projections, and is compatible with any base random projection including sparse maps, which enable dimension reduction with very low query cost and no floating point operations. We also develop a reduced variance extension. We provide a theoretical analysis of the bias and variance of the TRP, and a non-asymptotic error analysis for a TRP composed of two smaller maps. Experiments on both synthetic and MNIST data show that our method performs as well as conventional methods with substantially less storage.

We devise an online learning algorithm -- titled Switching via Monotone Adapted Regret Traces (SMART) -- that adapts to the data and achieves regret that is instance optimal, i.e., simultaneously competitive on every input sequence compared to the performance of the follow-the-leader (FTL) policy and the worst case guarantee of any other input policy. We show that the regret of the SMART policy on any input sequence is within a multiplicative factor e/(e−1)≈1.58 of the smaller of: 1) the regret obtained by FTL on the sequence, and 2) the upper bound on regret guaranteed by the given worst-case policy. This implies a strictly stronger guarantee than typical `best-of-both-worlds' bounds as the guarantee holds for every input sequence regardless of how it is generated. SMART is simple to implement as it begins by playing FTL and switches at most once during the time horizon to the worst-case algorithm. Our approach and results follow from an operational reduction of instance optimal online learning to competitive analysis for the ski-rental problem. We complement our competitive ratio upper bounds with a fundamental lower bound showing that over all input sequences, no algorithm can get better than a 1.43-fraction of the minimum regret achieved by FTL and the minimax-optimal policy. We also present a modification of SMART that combines FTL with a ``small-loss" algorithm to achieve instance optimality between the regret of FTL and the small loss regret bound.

We study a nonstationary bandit problem where rewards depend on both actions and latent states, the latter governed by unknown linear dynamics. Crucially, the state dynamics also depend on the actions, resulting in tension between short-term and long-term rewards. We propose an explore-then-commit algorithm for a finite horizon T. During the exploration phase, random Rademacher actions enable estimation of the Markov parameters of the linear dynamics, which characterize the action-reward relationship. In the commit phase, the algorithm uses the estimated parameters to design an optimized action sequence for long-term reward. Our proposed algorithm achieves O~(T2/3) regret. Our analysis handles two key challenges: learning from temporally correlated rewards, and designing action sequences with optimal long-term reward. We address the first challenge by providing near-optimal sample complexity and error bounds for system identification using bilinear rewards. We address the second challenge by proving an equivalence with indefinite quadratic optimization over a hypercube, a known NP-hard problem. We provide a sub-optimality guarantee for this problem, enabling our regret upper bound. Lastly, we propose a semidefinite relaxation with Goemans-Williamson rounding as a practical approach.

Speculative attacks such as Spectre can leak secret information without being

discovered by the operating system. Speculative execution vulnerabilities are

finicky and deep in the sense that to exploit them, it requires intensive

manual labor and intimate knowledge of the hardware. In this paper, we

introduce SpecRL, a framework that utilizes reinforcement learning to find

speculative execution leaks in post-silicon (black box) microprocessors.

There are no more papers matching your filters at the moment.