Hochschule Bonn-Rhein-Sieg

When performing manipulation-based activities such as picking objects, a

mobile robot needs to position its base at a location that supports successful

execution. To address this problem, prominent approaches typically rely on

costly grasp planners to provide grasp poses for a target object, which are

then are then analysed to identify the best robot placements for achieving each

grasp pose. In this paper, we propose instead to first find robot placements

that would not result in collision with the environment and from where picking

up the object is feasible, then evaluate them to find the best placement

candidate. Our approach takes into account the robot's reachability, as well as

RGB-D images and occupancy grid maps of the environment for identifying

suitable robot poses. The proposed algorithm is embedded in a service robotic

workflow, in which a person points to select the target object for grasping. We

evaluate our approach with a series of grasping experiments, against an

existing baseline implementation that sends the robot to a fixed navigation

goal. The experimental results show how the approach allows the robot to grasp

the target object from locations that are very challenging to the baseline

implementation.

Traffic sign recognition is an important component of many advanced driving assistance systems, and it is required for full autonomous driving. Computational performance is usually the bottleneck in using large scale neural networks for this purpose. SqueezeNet is a good candidate for efficient image classification of traffic signs, but in our experiments it does not reach high accuracy, and we believe this is due to lack of data, requiring data augmentation. Generative adversarial networks can learn the high dimensional distribution of empirical data, allowing the generation of new data points. In this paper we apply pix2pix GANs architecture to generate new traffic sign images and evaluate the use of these images in data augmentation. We were motivated to use pix2pix to translate symbolic sign images to real ones due to the mode collapse in Conditional GANs. Through our experiments we found that data augmentation using GAN can increase classification accuracy for circular traffic signs from 92.1% to 94.0%, and for triangular traffic signs from 93.8% to 95.3%, producing an overall improvement of 2%. However some traditional augmentation techniques can outperform GAN data augmentation, for example contrast variation in circular traffic signs (95.5%) and displacement on triangular traffic signs (96.7 %). Our negative results shows that while GANs can be naively used for data augmentation, they are not always the best choice, depending on the problem and variability in the data.

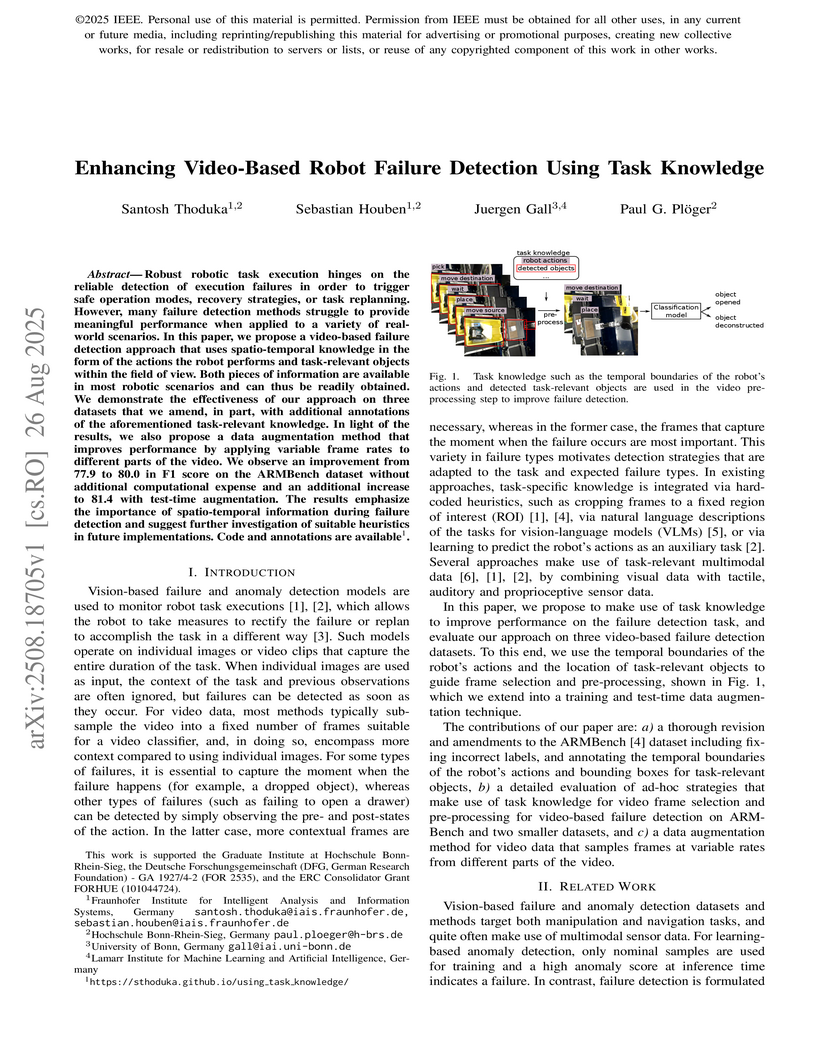

Robust robotic task execution hinges on the reliable detection of execution failures in order to trigger safe operation modes, recovery strategies, or task replanning. However, many failure detection methods struggle to provide meaningful performance when applied to a variety of real-world scenarios. In this paper, we propose a video-based failure detection approach that uses spatio-temporal knowledge in the form of the actions the robot performs and task-relevant objects within the field of view. Both pieces of information are available in most robotic scenarios and can thus be readily obtained. We demonstrate the effectiveness of our approach on three datasets that we amend, in part, with additional annotations of the aforementioned task-relevant knowledge. In light of the results, we also propose a data augmentation method that improves performance by applying variable frame rates to different parts of the video. We observe an improvement from 77.9 to 80.0 in F1 score on the ARMBench dataset without additional computational expense and an additional increase to 81.4 with test-time augmentation. The results emphasize the importance of spatio-temporal information during failure detection and suggest further investigation of suitable heuristics in future implementations. Code and annotations are available.

An object handover between a robot and a human is a coordinated action which is prone to failure for reasons such as miscommunication, incorrect actions and unexpected object properties. Existing works on handover failure detection and prevention focus on preventing failures due to object slip or external disturbances. However, there is a lack of datasets and evaluation methods that consider unpreventable failures caused by the human participant. To address this deficit, we present the multimodal Handover Failure Detection dataset, which consists of failures induced by the human participant, such as ignoring the robot or not releasing the object. We also present two baseline methods for handover failure detection: (i) a video classification method using 3D CNNs and (ii) a temporal action segmentation approach which jointly classifies the human action, robot action and overall outcome of the action. The results show that video is an important modality, but using force-torque data and gripper position help improve failure detection and action segmentation accuracy.

Contrastive learning (CL) approaches have gained great recognition as a very

successful subset of self-supervised learning (SSL) methods. SSL enables

learning from unlabeled data, a crucial step in the advancement of deep

learning, particularly in computer vision (CV), given the plethora of unlabeled

image data. CL works by comparing different random augmentations (e.g.,

different crops) of the same image, thus achieving self-labeling. Nevertheless,

randomly augmenting images and especially random cropping can result in an

image that is semantically very distant from the original and therefore leads

to false labeling, hence undermining the efficacy of the methods. In this

research, two novel parameterized cropping methods are introduced that increase

the robustness of self-labeling and consequently increase the efficacy. The

results show that the use of these methods significantly improves the accuracy

of the model by between 2.7\% and 12.4\% on the downstream task of classifying

CIFAR-10, depending on the crop size compared to that of the non-parameterized

random cropping method.

27 Oct 2025

Learned robot policies have consistently been shown to be versatile, but they typically have no built-in mechanism for handling the complexity of open environments, making them prone to execution failures; this implies that deploying policies without the ability to recognise and react to failures may lead to unreliable and unsafe robot behaviour. In this paper, we present a framework that couples a learned policy with a method to detect visual anomalies during policy deployment and to perform recovery behaviours when necessary, thereby aiming to prevent failures. Specifically, we train an anomaly detection model using data collected during nominal executions of a trained policy. This model is then integrated into the online policy execution process, so that deviations from the nominal execution can trigger a three-level sequential recovery process that consists of (i) pausing the execution temporarily, (ii) performing a local perturbation of the robot's state, and (iii) resetting the robot to a safe state by sampling from a learned execution success model. We verify our proposed method in two different scenarios: (i) a door handle reaching task with a Kinova Gen3 arm using a policy trained in simulation and transferred to the real robot, and (ii) an object placing task with a UFactory xArm 6 using a general-purpose policy model. Our results show that integrating policy execution with anomaly detection and recovery increases the execution success rate in environments with various anomalies, such as trajectory deviations and adversarial human interventions.

Air pollution is an emerging problem that needs to be solved especially in

developed and developing countries. In Vietnam, air pollution is also a

concerning issue in big cities such as Hanoi and Ho Chi Minh cities where air

pollution comes mostly from vehicles such as cars and motorbikes. In order to

tackle the problem, the paper focuses on developing a solution that can

estimate the emitted PM2.5 pollutants by counting the number of vehicles in the

traffic. We first investigated among the recent object detection models and

developed our own traffic surveillance system. The observed traffic density

showed a similar trend to the measured PM2.5 with a certain lagging in time,

suggesting a relation between traffic density and PM2.5. We further express

this relationship with a mathematical model which can estimate the PM2.5 value

based on the observed traffic density. The estimated result showed a great

correlation with the measured PM2.5 plots in the urban area context.

In mobile network research, the integration of real-world components such as

User Equipment (UE) with open-source network infrastructure is essential yet

challenging. To address these issues, we introduce open5Gcube, a modular

framework designed to integrate popular open-source mobile network projects

into a unified management environment. Our publicly available framework allows

researchers to flexibly combine different open-source implementations,

including different versions, and simplifies experimental setups through

containerization and lightweight orchestration. We demonstrate the practical

usability of open5Gcube by evaluating its compatibility with various commercial

off-the-shelf (COTS) smartphones and modems across multiple mobile generations

(2G, 4G, and 5G). The results underline the versatility and reproducibility of

our approach, significantly advancing the accessibility of rigorous

experimentation in mobile network laboratories.

08 Dec 2022

Vietnam requires a sustainable urbanization, for which city sensing is used

in planning and de-cision-making. Large cities need portable, scalable, and

inexpensive digital technology for this purpose. End-to-end air quality

monitoring companies such as AirVisual and Plume Air have shown their

reliability with portable devices outfitted with superior air sensors. They are

pricey, yet homeowners use them to get local air data without evaluating the

causal effect. Our air quality inspection system is scalable, reasonably

priced, and flexible. Minicomputer of the sys-tem remotely monitors PMS7003 and

BME280 sensor data through a microcontroller processor. The 5-megapixel camera

module enables researchers to infer the causal relationship between traffic

intensity and dust concentration. The design enables inexpensive,

commercial-grade hardware, with Azure Blob storing air pollution data and

surrounding-area imagery and pre-venting the system from physically expanding.

In addition, by including an air channel that re-plenishes and distributes

temperature, the design improves ventilation and safeguards electrical

components. The gadget allows for the analysis of the correlation between

traffic and air quali-ty data, which might aid in the establishment of

sustainable urban development plans and poli-cies.

Automatic Short Answer Grading (ASAG) is the process of grading the student

answers by computational approaches given a question and the desired answer.

Previous works implemented the methods of concept mapping, facet mapping, and

some used the conventional word embeddings for extracting semantic features.

They extracted multiple features manually to train on the corresponding

datasets. We use pretrained embeddings of the transfer learning models, ELMo,

BERT, GPT, and GPT-2 to assess their efficiency on this task. We train with a

single feature, cosine similarity, extracted from the embeddings of these

models. We compare the RMSE scores and correlation measurements of the four

models with previous works on Mohler dataset. Our work demonstrates that ELMo

outperformed the other three models. We also, briefly describe the four

transfer learning models and conclude with the possible causes of poor results

of transfer learning models.

12 Oct 2016

We present a practical and highly secure method for the authentication of chips based on a new concept for implementing strong Physical Unclonable Function (PUF) on field programmable gate arrays (FPGA). Its qualitatively novel feature is a remote reconfiguration in which the delay stages of the PUF are arranged to a random pattern within a subset of the FPGA's gates. Before the reconfiguration is performed during authentication the PUF simply does not exist. Hence even if an attacker has the chip under control previously she can gain no useful information about the PUF. This feature, together with a strict renunciation of any error correction and challenge selection criteria that depend on individual properties of the PUF that goes into the field make our strong PUF construction immune to all machine learning attacks presented in the literature. More sophisticated attacks on our strong-PUF construction will be difficult, because they require the attacker to learn or directly measure the properties of the complete FPGA. A fully functional reference implementation for a secure "chip biometrics" is presented. We remotely configure ten 64-stage arbiter PUFs out of 1428 lookup tables within a time of 25 seconds and then receive one "fingerprint" from each PUF within 1 msec.

27 Oct 2020

Reinforcement learning (RL) algorithms should learn as much as possible about

the environment but not the properties of the physics engines that generate the

environment. There are multiple algorithms that solve the task in a physics

engine based environment but there is no work done so far to understand if the

RL algorithms can generalize across physics engines. In this work, we compare

the generalization performance of various deep reinforcement learning

algorithms on a variety of control tasks. Our results show that MuJoCo is the

best engine to transfer the learning to other engines. On the other hand, none

of the algorithms generalize when trained on PyBullet. We also found out that

various algorithms have a promising generalizability if the effect of random

seeds can be minimized on their performance.

Application of underwater robots are on the rise, most of them are dependent

on sonar for underwater vision, but the lack of strong perception capabilities

limits them in this task. An important issue in sonar perception is matching

image patches, which can enable other techniques like localization, change

detection, and mapping. There is a rich literature for this problem in color

images, but for acoustic images, it is lacking, due to the physics that produce

these images. In this paper we improve on our previous results for this problem

(Valdenegro-Toro et al, 2017), instead of modeling features manually, a

Convolutional Neural Network (CNN) learns a similarity function and predicts if

two input sonar images are similar or not. With the objective of improving the

sonar image matching problem further, three state of the art CNN architectures

are evaluated on the Marine Debris dataset, namely DenseNet, and VGG, with a

siamese or two-channel architecture, and contrastive loss. To ensure a fair

evaluation of each network, thorough hyper-parameter optimization is executed.

We find that the best performing models are DenseNet Two-Channel network with

0.955 AUC, VGG-Siamese with contrastive loss at 0.949 AUC and DenseNet Siamese

with 0.921 AUC. By ensembling the top performing DenseNet two-channel and

DenseNet-Siamese models overall highest prediction accuracy obtained is 0.978

AUC, showing a large improvement over the 0.91 AUC in the state of the art.

This study presents findings from long-term biometric evaluations conducted at the Biometric Evaluation Center (bez). Over the course of two and a half years, our ongoing research with over 400 participants representing diverse ethnicities, genders, and age groups were regularly assessed using a variety of biometric tools and techniques at the controlled testing facilities. Our findings are based on the General Data Protection Regulation-compliant local bez database with more than 238.000 biometric data sets categorized into multiple biometric modalities such as face and finger. We used state-of-the-art face recognition algorithms to analyze long-term comparison scores. Our results show that these scores fluctuate more significantly between individual days than over the entire measurement period. These findings highlight the importance of testing biometric characteristics of the same individuals over a longer period of time in a controlled measurement environment and lays the groundwork for future advancements in biometric data analysis.

Execution monitoring is essential for robots to detect and respond to failures. Since it is impossible to enumerate all failures for a given task, we learn from successful executions of the task to detect visual anomalies during runtime. Our method learns to predict the motions that occur during the nominal execution of a task, including camera and robot body motion. A probabilistic U-Net architecture is used to learn to predict optical flow, and the robot's kinematics and 3D model are used to model camera and body motion. The errors between the observed and predicted motion are used to calculate an anomaly score. We evaluate our method on a dataset of a robot placing a book on a shelf, which includes anomalies such as falling books, camera occlusions, and robot disturbances. We find that modeling camera and body motion, in addition to the learning-based optical flow prediction, results in an improvement of the area under the receiver operating characteristic curve from 0.752 to 0.804, and the area under the precision-recall curve from 0.467 to 0.549.

This work reports on a measurement study to estimate the attenuation of 450 MHz LTE networks. The LTE band 72 is currently deployed in Germany, in particular for smart grid applications. Due to this use-case, we assume that a significant amount of future devices will be deployed stationary and indoor which motivated our campaign. We designed a custom measurement device which uses commercial off-the-shelf hardware to assess the downlink RSRP of a public mobile network. In addition, a software has been developed to provide non-experts the possibility to conduct these measurements in the future. This software provides the possibility to determine the indoor position based on ground plans. We conducted measurements at three different buildings. Our results reveal, that the building attenuation of 450 MHz LTE networks is highly heterogeneous and mainly depends on the type of the building, the indoor position and in particular the height of the floor where the device is located.

State-of-the-art object detectors are treated as black boxes due to their highly non-linear internal computations. Even with unprecedented advancements in detector performance, the inability to explain how their outputs are generated limits their use in safety-critical applications. Previous work fails to produce explanations for both bounding box and classification decisions, and generally make individual explanations for various detectors. In this paper, we propose an open-source Detector Explanation Toolkit (DExT) which implements the proposed approach to generate a holistic explanation for all detector decisions using certain gradient-based explanation methods. We suggests various multi-object visualization methods to merge the explanations of multiple objects detected in an image as well as the corresponding detections in a single image. The quantitative evaluation show that the Single Shot MultiBox Detector (SSD) is more faithfully explained compared to other detectors regardless of the explanation methods. Both quantitative and human-centric evaluations identify that SmoothGrad with Guided Backpropagation (GBP) provides more trustworthy explanations among selected methods across all detectors. We expect that DExT will motivate practitioners to evaluate object detectors from the interpretability perspective by explaining both bounding box and classification decisions.

21 Mar 2024

We present a historical outline of the research and developments of Virtual

Reality at the Fraunhofer Institute for Computer Graphics (IGD) in Darmstadt,

Germany, from 1990 through 2000.

Deep learning has become a one-size-fits-all solution for technical and business domains thanks to its flexibility and adaptability. It is implemented using opaque models, which unfortunately undermines the outcome trustworthiness. In order to have a better understanding of the behavior of a system, particularly one driven by time series, a look inside a deep learning model so-called posthoc eXplainable Artificial Intelligence (XAI) approaches, is important. There are two major types of XAI for time series data, namely model-agnostic and model-specific. Model-specific approach is considered in this work. While other approaches employ either Class Activation Mapping (CAM) or Attention Mechanism, we merge the two strategies into a single system, simply called the Temporally Weighted Spatiotemporal Explainable Neural Network for Multivariate Time Series (TSEM). TSEM combines the capabilities of RNN and CNN models in such a way that RNN hidden units are employed as attention weights for the CNN feature maps temporal axis. The result shows that TSEM outperforms XCM. It is similar to STAM in terms of accuracy, while also satisfying a number of interpretability criteria, including causality, fidelity, and spatiotemporality.

Urban LoRa networks promise to provide a cost-efficient and scalable communication backbone for smart cities. One core challenge in rolling out and operating these networks is radio network planning, i.e., precise predictions about possible new locations and their impact on network coverage. Path loss models aid in this task, but evaluating and comparing different models requires a sufficiently large set of high-quality received packet power samples. In this paper, we report on a corresponding large-scale measurement study covering an urban area of 200km2 over a period of 230 days using sensors deployed on garbage trucks, resulting in more than 112 thousand high-quality samples for received packet power. Using this data, we compare eleven previously proposed path loss models and additionally provide new coefficients for the Log-distance model. Our results reveal that the Log-distance model and other well-known empirical models such as Okumura or Winner+ provide reasonable estimations in an urban environment, and terrain based models such as ITM or ITWOM have no advantages. In addition, we derive estimations for the needed sample size in similar measurement campaigns. To stimulate further research in this direction, we make all our data publicly available.

There are no more papers matching your filters at the moment.