Saarland University

This research formally demonstrates that the standard Group Relative Policy Optimization (GRPO) algorithm inherently induces a Monte-Carlo-based Process Reward Model for large language models. It introduces λ-GRPO, a minor modification that corrects a scaling flaw in GRPO's objective, leading to an approximately 2x training speedup and improved performance on downstream reasoning tasks compared to standard GRPO.
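For orientation, the group-relative advantage at the core of standard GRPO can be sketched as follows. This is a minimal illustration of the commonly described formulation, not the paper's code: the function name, the `eps` stabilizer, and the use of the population standard deviation are assumptions, and the λ-GRPO correction is not shown because the summary above does not specify it.

```python
from statistics import fmean, pstdev


def group_relative_advantages(rewards, eps=1e-8):
    """Normalize each reward against the mean and spread of its own group.

    `rewards` holds one scalar reward per sampled completion for the same
    prompt. `eps` (an assumed stabilizer) avoids division by zero when all
    rewards in the group are identical.
    """
    mean = fmean(rewards)
    std = pstdev(rewards)  # population std; some implementations use sample std
    return [(r - mean) / (std + eps) for r in rewards]


# Four completions for one prompt, two judged correct (hypothetical rewards).
rewards = [1.0, 0.0, 0.0, 1.0]
advantages = group_relative_advantages(rewards)
```

In this sketch every token of a completion would share that completion's advantage, which is the setting in which the summary's claim about an implicit process reward model applies.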

ETH Zurich; KAIST; University of Washington; Rensselaer Polytechnic Institute; Google DeepMind; University of Amsterdam; University of Illinois at Urbana-Champaign; University of Cambridge; Heidelberg University; University of Waterloo; Facebook; Carnegie Mellon University; University of Southern California; Google; New York University; University of Stuttgart; UC Berkeley; National University of Singapore; University College London; University of Oxford; LMU Munich; Shanghai Jiao Tong University; University of California, Irvine; Tsinghua University; Stanford University; University of Michigan; University of Copenhagen; The Chinese University of Hong Kong; University of Melbourne; Meta; University of Edinburgh; OpenAI; The University of Texas at Austin; Cornell University; University of California, San Diego; Yonsei University; McGill University; Boston University; University of Bamberg; Nanyang Technological University; Microsoft; KU Leuven; Columbia University; UC Santa Barbara; Allen Institute for AI; German Research Center for Artificial Intelligence (DFKI); University of Pennsylvania; Johns Hopkins University; Arizona State University; University of Maryland; University of Tokyo; University of North Carolina at Chapel Hill; Hebrew University of Jerusalem; Amazon; Tilburg University; University of Massachusetts Amherst; University of Rochester; University of Duisburg-Essen; Sapienza University of Rome; University of Sheffield; Princeton University; HKUST; University of Tübingen; TU Berlin; Saarland University; Technical University of Darmstadt; University of Haifa; University of Trento; University of Montreal; Bilkent University; University of Cape Town; Bar Ilan University; IBM; University of Mannheim; ServiceNow; Potsdam University; Polish-Japanese Academy of Information Technology; Salesforce; ASAPP; AI21 Labs; Valencia Polytechnic University; University of Trento, Italy

A large-scale and diverse benchmark, BIG-bench, was introduced to rigorously evaluate the capabilities and limitations of large language models across 204 tasks. The evaluation revealed that even state-of-the-art models currently achieve aggregate scores below 20 (on a 0-100 normalized scale), indicating significantly lower performance compared to human experts.

AppWorld is a comprehensive framework introducing a high-fidelity simulation of nine everyday applications and a benchmark of 750 complex tasks to evaluate large language model agents. It reveals that state-of-the-art models like GPT-4 complete less than half of normal tasks and around 30% of challenge tasks, indicating significant room for improvement in real-world digital environments.

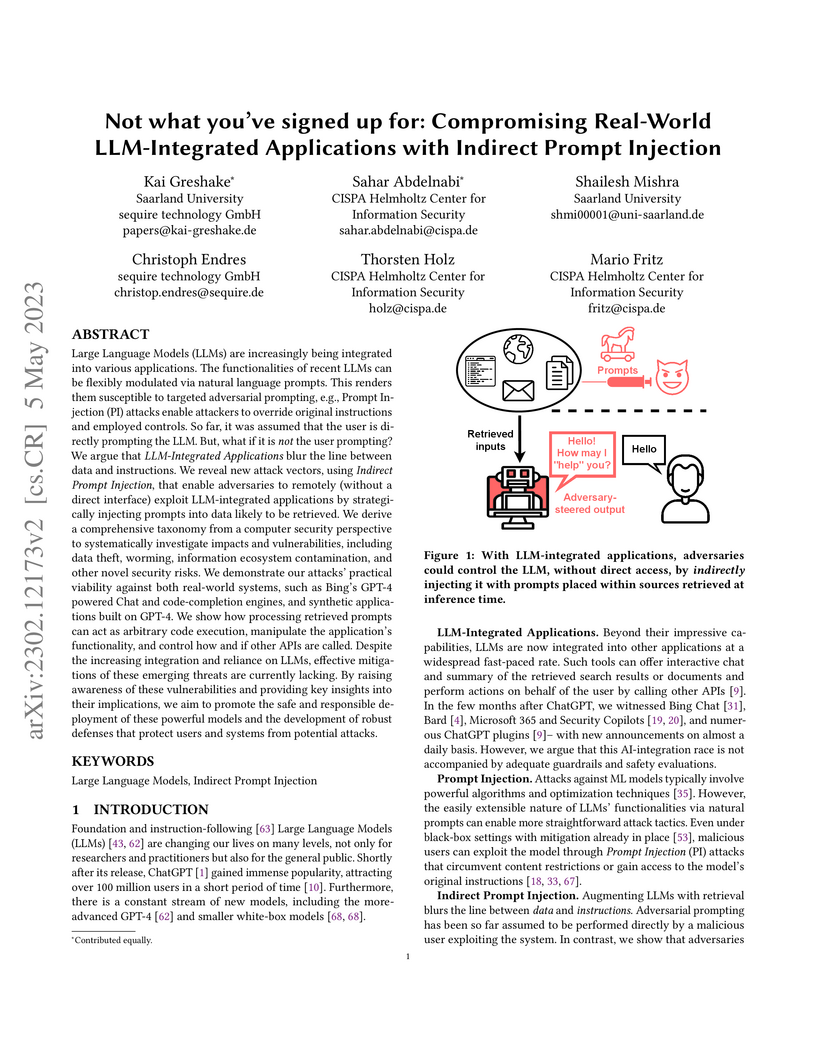

This paper identifies and categorizes a novel threat called Indirect Prompt Injection (IPI), demonstrating how malicious prompts embedded in external data can compromise LLM-integrated applications like Bing Chat and GitHub Copilot. The research illustrates that LLMs can be manipulated to exfiltrate data, spread malware, or generate misleading content, often bypassing existing security filters by treating retrieved data as executable instructions.

ADAPT, a framework from UNC Chapel Hill, AI2, and Saarland University, enables Large Language Models to act as robust agents by dynamically decomposing complex tasks into simpler sub-tasks only when needed. This approach significantly increased success rates by up to 33% across diverse interactive environments like ALFWorld, WebShop, and a new TextCraft dataset, outperforming existing plan-and-execute methods and other adaptive baselines.

CNRS; Freie Universität Berlin; University of Oxford; TU Dortmund University; German Research Center for Artificial Intelligence (DFKI); University of Innsbruck; Collège de France; Max Planck Institute for the Science of Light; Friedrich-Alexander-Universität Erlangen-Nürnberg; Institut Polytechnique de Paris; University of Latvia; University of Turku; Saarland University; Fondazione Bruno Kessler; TU Wien; Chalmers University of Technology; Forschungszentrum Jülich; University of Regensburg; University of Florence; University of Augsburg; University of Gothenburg; Leiden Institute of Physics; Donostia International Physics Center; Johannes Kepler University Linz; Fraunhofer Heinrich-Hertz-Institute; SAP SE; Friedrich-Schiller-University Jena; European Centre for Theoretical Studies in Nuclear Physics and Related Areas (ECT*); EPITA Research Lab; Leiden Institute of Advanced Computer Science; ÖAW; Vienna Center for Quantum Science and Technology; Atominstitut; University of Applied Sciences Zittau/Görlitz; IQOQI Vienna; Fraunhofer IOSB-AST; Université PSL; Inria Paris–Saclay; Université Paris Diderot; École Polytechnique; University of Naples “Federico II”; INFN Sezione di Firenze

A collaborative white paper coordinated by the Quantum Community Network comprehensively analyzes the current status and future perspectives of Quantum Artificial Intelligence, categorizing its potential into "Quantum for AI" and "AI for Quantum" applications. It proposes a strategic research and development agenda to bolster Europe's competitive position in this rapidly converging technological domain.

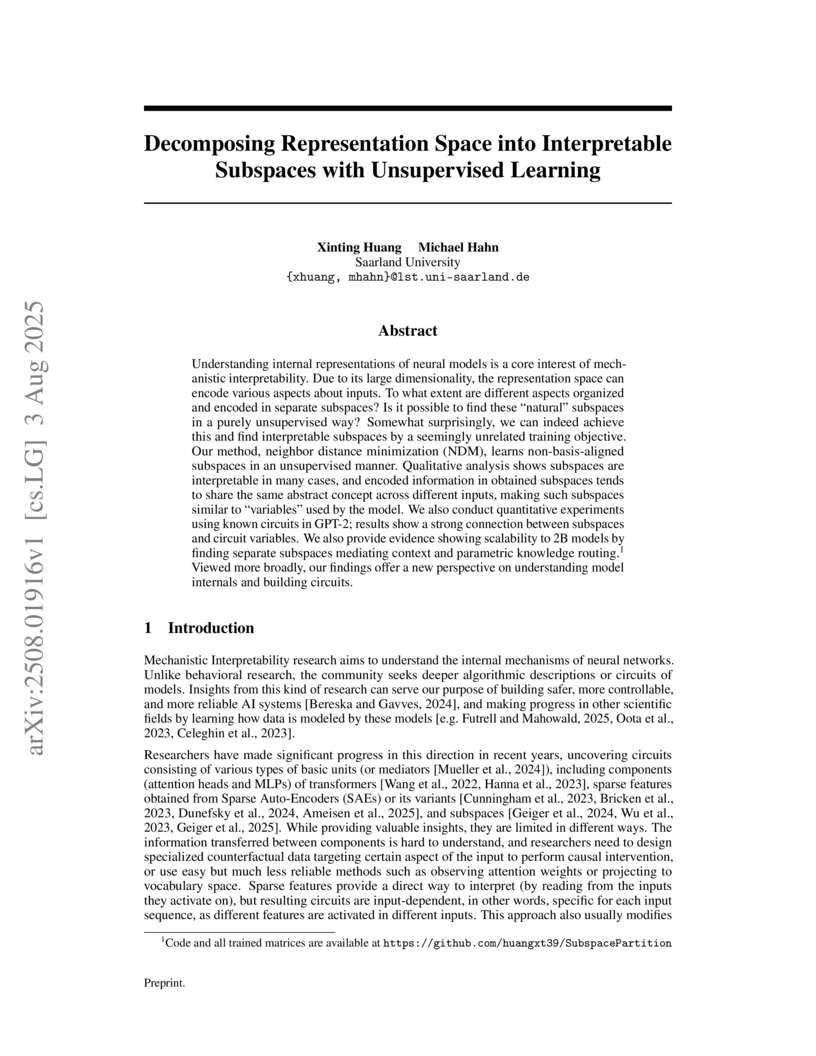

Researchers from Saarland University introduced Neighbor Distance Minimization (NDM), an unsupervised learning method that decomposes neural network representation space into interpretable, non-basis-aligned subspaces. The approach quantitatively demonstrated superior concentration of task-relevant information within identified subspaces and yielded qualitatively distinct feature encodings in GPT-2 Small and larger 2B-parameter models.

A comprehensive empirical study assesses the reliability of Large Language Models (LLMs) as automated evaluators across 20 diverse Natural Language Processing tasks. The research evaluates 11 different LLMs, including both proprietary and open-weight models, against human judgments, revealing that LLM performance varies substantially by task and property evaluated and is generally below human inter-annotator agreement.

LawBench introduces the first comprehensive evaluation benchmark for assessing Large Language Models' legal knowledge and capabilities within the Chinese civil law system. The benchmark, featuring 20 diverse tasks categorized into memorization, understanding, and application, reveals GPT-4's leading performance while highlighting significant limitations and areas for improvement across other models in this specialized domain.

27 May 2025

Researchers systematically investigated factors influencing the distillation of Chain-of-Thought (CoT) reasoning into Small Language Models (SLMs), identifying that optimal CoT granularity is non-monotonic and student-dependent, format impact is minimal, and teacher choice effectiveness varies by task. The study revealed a 'Matthew Effect,' where stronger SLMs gained more from CoT distillation, challenging assumptions about knowledge transfer.

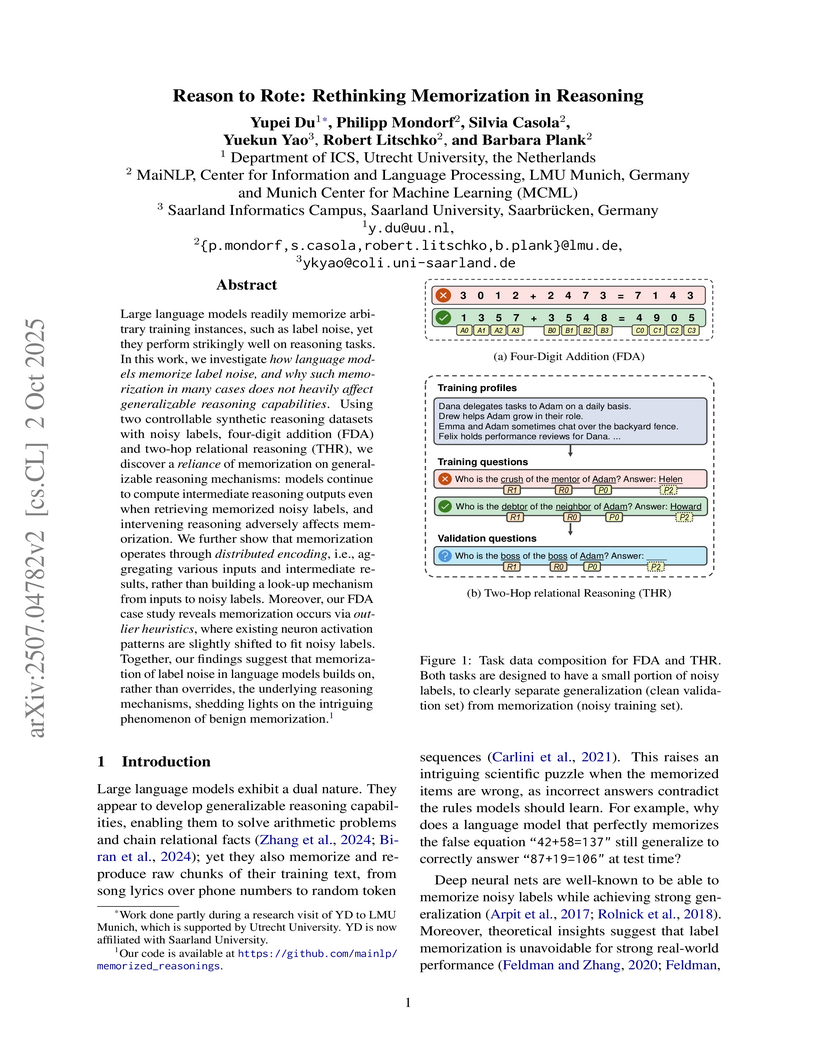

A study explores how large language models reconcile memorizing incorrect labels with applying generalizable reasoning. It reveals that models retain correct intermediate computations even for noisy instances, employing "outlier heuristics" in specific neurons to override these results for memorized outputs.

latentSplat introduces a novel framework that integrates the efficiency of 3D Gaussian Splatting with the generative capabilities of variational autoencoders and GANs, enabling fast and generalizable 3D reconstruction and high-quality novel view synthesis from just two input images. The method achieves superior perceptual and generative quality while maintaining near real-time rendering speeds and can be trained purely on real video data.

Machine unlearning is concerned with the task of removing knowledge learned from particular data points from a trained model. In the context of large language models (LLMs), unlearning has recently received increased attention, particularly for removing knowledge about named entities from models for privacy purposes. While various approaches have been proposed to address the unlearning problem, most existing approaches treat all data points to be unlearned equally, i.e., unlearning that Montreal is a city in Canada is treated exactly the same as unlearning the phone number of the first author of this paper. In this work, we show that this "all data is equal" assumption does not hold for LLM unlearning. We study how the success of unlearning depends on the frequency of the knowledge we want to unlearn in the pre-training data of a model and find that frequency strongly affects unlearning, i.e., more frequent knowledge is harder to unlearn. Additionally, we uncover a misalignment between probability-based and generation-based evaluations of unlearning and show that this problem worsens as models become larger. Overall, our experiments highlight the need for better evaluation practices and novel methods for LLM unlearning that take the training data of models into account.

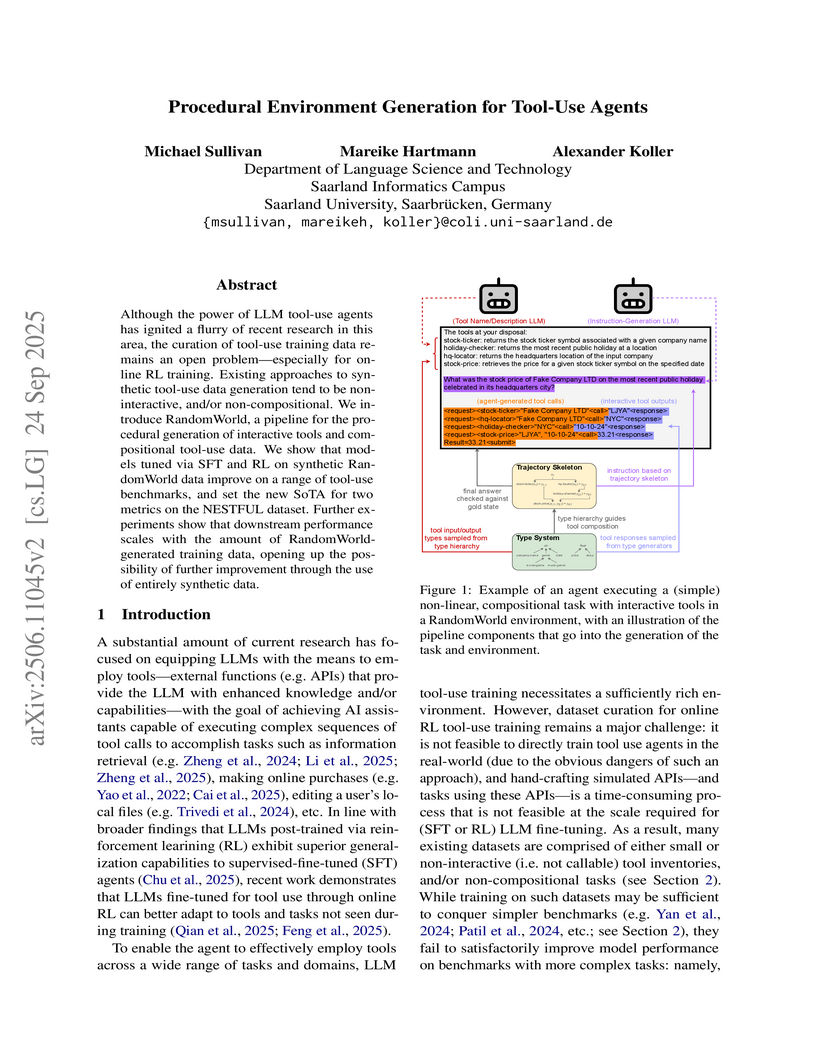

24 Sep 2025

RandomWorld introduces a pipeline for procedurally generating interactive tools and compositional tool-use data for LLM agents, enabling large-scale training for online reinforcement learning. This method leads to a new State-of-the-Art on NESTFUL F1-Function (0.96) and F1-Parameter (0.71), significantly enhancing agent performance and generalization.

30 Sep 2025

Recently, a new optimization method based on the linear minimization oracle (LMO), called Muon, has been attracting increasing attention since it can train neural networks faster than existing adaptive optimization methods, such as Adam. In this paper, we study how Muon can be utilized in federated learning. We first show that straightforwardly using Muon as the local optimizer of FedAvg does not converge to a stationary point since the LMO is a biased operator. We then propose FedMuon, which can mitigate this issue. We also analyze how solving the LMO approximately affects the convergence rate and find that, surprisingly, FedMuon can converge for any number of Newton-Schulz iterations, while it converges faster as the LMO is solved more accurately. Through experiments, we demonstrate that FedMuon can outperform state-of-the-art federated learning methods.
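To make the role of the Newton-Schulz iterations concrete, the sketch below approximates the orthogonal polar factor of a gradient matrix, which is the quantity such iterations are used to estimate when solving the LMO approximately. It is an illustration under stated assumptions: it uses the classic cubic Newton-Schulz update rather than whatever polynomial Muon or FedMuon actually use, the helper names and the example matrix are hypothetical, and no federated logic is shown.

```python
import math


def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def transpose(a):
    return [list(col) for col in zip(*a)]


def newton_schulz_orthogonalize(g, steps=30):
    """Approximate the orthogonal polar factor of `g`.

    Uses the cubic update X <- 1.5 X - 0.5 X (X^T X). Dividing by the
    Frobenius norm first keeps all singular values in (0, 1], inside the
    region where this update converges for a full-column-rank matrix.
    Fewer `steps` give a cruder approximation.
    """
    fro = math.sqrt(sum(v * v for row in g for v in row))
    x = [[v / fro for v in row] for row in g]
    for _ in range(steps):
        xtx = matmul(transpose(x), x)
        xxtx = matmul(x, xtx)
        x = [[1.5 * a - 0.5 * b for a, b in zip(rx, rc)] for rx, rc in zip(x, xxtx)]
    return x


# Hypothetical 4x3 "gradient" with full column rank.
g = [[3.0, 1.0, 0.0], [1.0, 2.0, 1.0], [0.0, 1.0, 4.0], [1.0, 0.0, 1.0]]
q = newton_schulz_orthogonalize(g)
gram = matmul(transpose(q), q)  # close to the 3x3 identity once converged
```

The `steps` argument corresponds to the number of Newton-Schulz iterations discussed in the abstract: a small value leaves the columns of `q` only roughly orthonormal.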

Fine-tuning pre-trained transformer-based language models such as BERT has become a common practice dominating leaderboards across various NLP benchmarks. Despite the strong empirical performance of fine-tuned models, fine-tuning is an unstable process: training the same model with multiple random seeds can result in a large variance of the task performance. Previous literature (Devlin et al., 2019; Lee et al., 2020; Dodge et al., 2020) identified two potential reasons for the observed instability: catastrophic forgetting and small size of the fine-tuning datasets. In this paper, we show that both hypotheses fail to explain the fine-tuning instability. We analyze BERT, RoBERTa, and ALBERT, fine-tuned on commonly used datasets from the GLUE benchmark, and show that the observed instability is caused by optimization difficulties that lead to vanishing gradients. Additionally, we show that the remaining variance of the downstream task performance can be attributed to differences in generalization where fine-tuned models with the same training loss exhibit noticeably different test performance. Based on our analysis, we present a simple but strong baseline that makes fine-tuning BERT-based models significantly more stable than the previously proposed approaches. Code to reproduce our results is available online: this https URL.

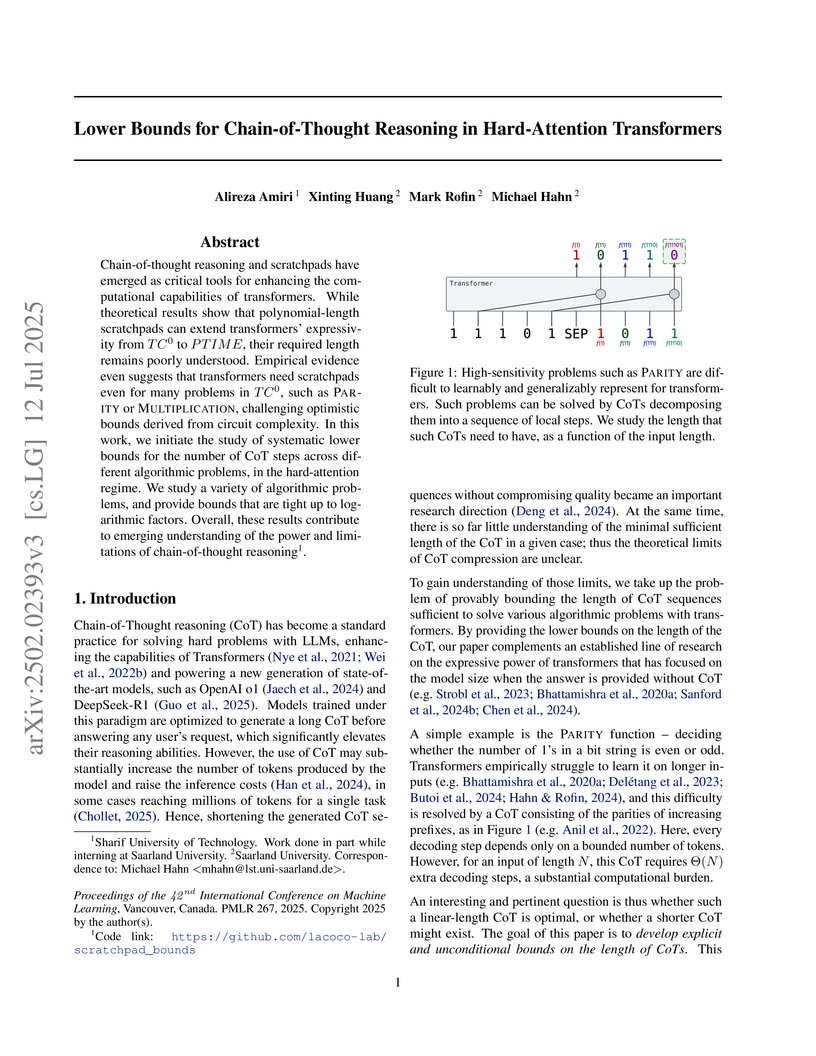

Chain-of-thought reasoning and scratchpads have emerged as critical tools for enhancing the computational capabilities of transformers. While theoretical results show that polynomial-length scratchpads can extend transformers' expressivity from TC0 to PTIME, their required length remains poorly understood. Empirical evidence suggests that transformers need scratchpads even for many problems in TC0, such as Parity or Multiplication, challenging optimistic bounds derived from circuit complexity. In this work, we initiate the study of systematic lower bounds for the number of chain-of-thought steps across different algorithmic problems, in the hard-attention regime. We study a variety of algorithmic problems, and provide bounds that are tight up to logarithmic factors. Overall, these results contribute to an emerging understanding of the power and limitations of chain-of-thought reasoning.

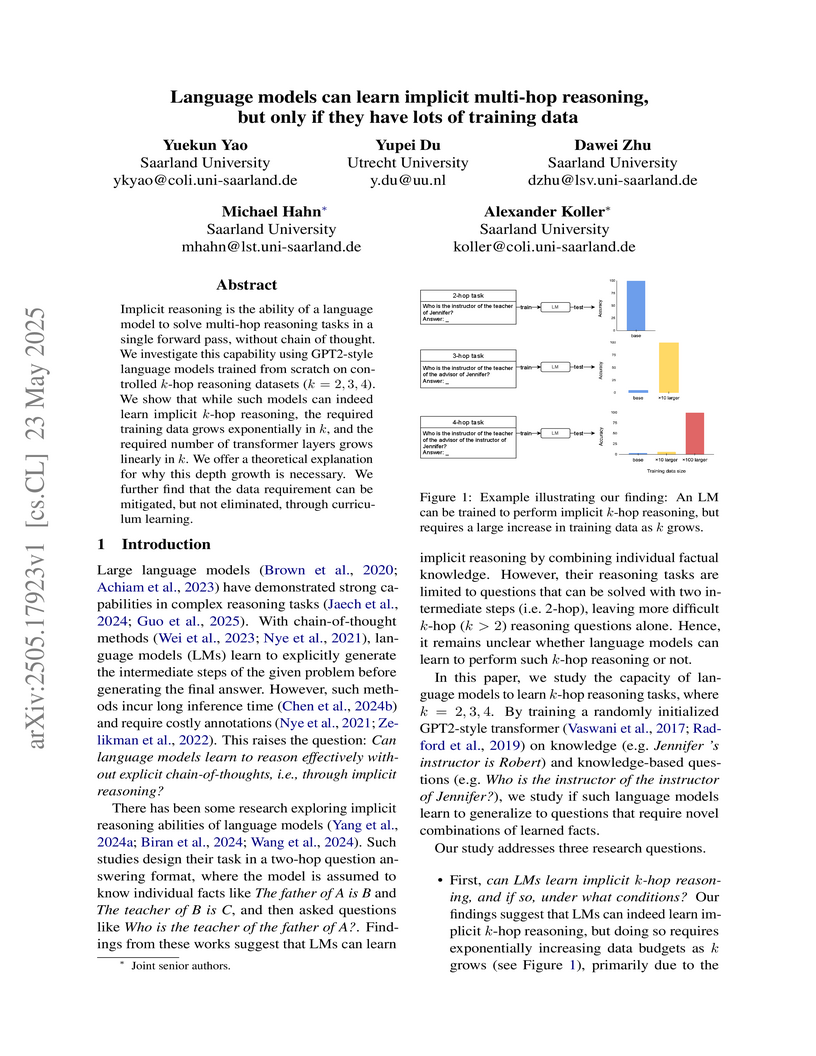

Language models can perform implicit multi-hop reasoning up to 4 hops, achieving high accuracy when provided with sufficient training data. This capability, however, incurs an exponential increase in data requirements which curriculum learning can substantially reduce.

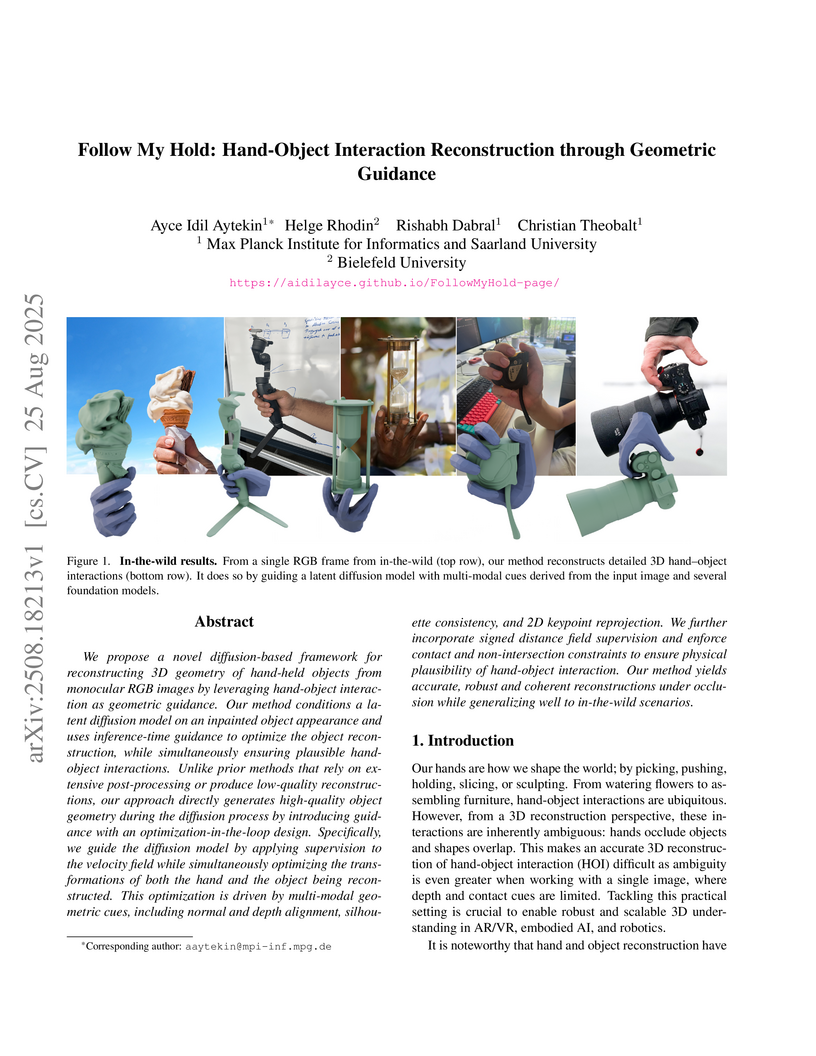

We propose a novel diffusion-based framework for reconstructing 3D geometry of hand-held objects from monocular RGB images by leveraging hand-object interaction as geometric guidance. Our method conditions a latent diffusion model on an inpainted object appearance and uses inference-time guidance to optimize the object reconstruction, while simultaneously ensuring plausible hand-object interactions. Unlike prior methods that rely on extensive post-processing or produce low-quality reconstructions, our approach directly generates high-quality object geometry during the diffusion process by introducing guidance with an optimization-in-the-loop design. Specifically, we guide the diffusion model by applying supervision to the velocity field while simultaneously optimizing the transformations of both the hand and the object being reconstructed. This optimization is driven by multi-modal geometric cues, including normal and depth alignment, silhouette consistency, and 2D keypoint reprojection. We further incorporate signed distance field supervision and enforce contact and non-intersection constraints to ensure physical plausibility of hand-object interaction. Our method yields accurate, robust and coherent reconstructions under occlusion while generalizing well to in-the-wild scenarios.

Ensuring the moral reasoning capabilities of Large Language Models (LLMs) is a growing concern as these systems are used in socially sensitive tasks. Nevertheless, current evaluation benchmarks present two major shortcomings: a lack of annotations that justify moral classifications, which limits transparency and interpretability; and a predominant focus on English, which constrains the assessment of moral reasoning across diverse cultural settings. In this paper, we introduce MFTCXplain, a multilingual benchmark dataset for evaluating the moral reasoning of LLMs via multi-hop hate speech explanation using the Moral Foundations Theory. MFTCXplain comprises 3,000 tweets across Portuguese, Italian, Persian, and English, annotated with binary hate speech labels, moral categories, and text span-level rationales. Our results show a misalignment between LLM outputs and human annotations in moral reasoning tasks. While LLMs perform well in hate speech detection (F1 up to 0.836), their ability to predict moral sentiments is notably weak (F1 < 0.35). Furthermore, rationale alignment remains limited, mainly in underrepresented languages. Our findings show the limited capacity of current LLMs to internalize and reflect human moral reasoning.
