University of Houston

Verma et al. introduce a new computational definition and a comprehensive taxonomy for deception, offering a foundational framework for domain-independent detection. Their work provides empirical evidence through linguistic analysis and deep learning experiments that generalizable linguistic cues for deception exist and can be transferred across diverse domains, challenging previous skepticism in the field.

08 Jul 2025

Materials with high thermal conductivity are needed to conduct heat away from hot spots in high power electronics and optoelectronic devices. Cubic boron arsenide (c-BAs) has a high thermal conductivity due to its special phonon dispersion relation. Previous experimental studies of c-BAs report a room-temperature thermal conductivity between 1000 and 1300 W m-1 K-1. We synthesized high purity isotopically enriched c-BAs single crystals with room-temperature thermal conductivity of around 1500 W m-1 K-1. Using time-domain thermoreflectance (TDTR), we measured thermal conductivity and found a 1/T2 temperature dependence between 300 K and 600 K - slightly stronger than predictions from state-of-the-art theoretical models. Brillouin and Raman scattering revealed minimal changes in phonon frequencies over the same temperature range, suggesting that the observed 1/T2 dependence is not caused by temperature dependent changes in phonon dispersion. To probe defect densities in the BAs crystals we studied, we conducted transient reflectivity microscopy (TRM) measurements of absorption at sub-bandgap photon energies. We observe a correlation between TRM signal intensity and thermal conductivity. Notably, samples with thermal conductivity near 1500 W m-1 K-1 still exhibited nonzero TRM signals, suggesting the presence of defects despite the high thermal conductivity.

This work provides an exhaustive, up-to-date survey of Mixture-of-Experts (MoE) models, covering their fundamental designs, algorithmic integrations across various machine learning paradigms, theoretical foundations, and diverse applications in computer vision and natural language processing. It consolidates recent advancements to serve as a comprehensive reference for the rapidly evolving field.

17 Mar 2025

A comprehensive survey and tutorial from researchers at UConn, University of Houston, Morgan Stanley, and NEC Labs introduces a unified framework for multi-modal time series analysis, categorizing methods based on fusion, alignment, and transference while providing a systematic review of datasets, techniques, and applications across different domains.

MAIN-RAG is a training-free, multi-agent LLM framework designed to filter noisy documents in Retrieval-Augmented Generation (RAG) systems. It consistently outperforms training-free baselines and achieves competitive performance with training-based RAG models by using an adaptive filtering mechanism that quantifies document relevance based on LLM judgments.

This research develops a CLIP-conditioned Diffusion Transformer (DiT) for paired image-to-image translation, leveraging CLIP image embeddings for semantic guidance. The framework generates images with superior quality, sharpness, and detail preservation compared to GAN-based methods, particularly on larger datasets.

This paper provides the first explicit theoretical analysis of Mixture-of-Experts (MoE) models in continual learning (CL), demonstrating how MoE mitigates catastrophic forgetting through expert specialization and identifying that early termination of gating network updates is crucial for stable performance.

The DMMV framework transforms numerical time series into multi-modal views (numerical and visual) and integrates them via an adaptive decomposition strategy to improve long-term forecasting. This method specifically accounts for the inductive biases of Large Vision Models (LVMs) and sets a new state-of-the-art by achieving the best Mean Squared Error on 6 of 8 benchmark datasets.

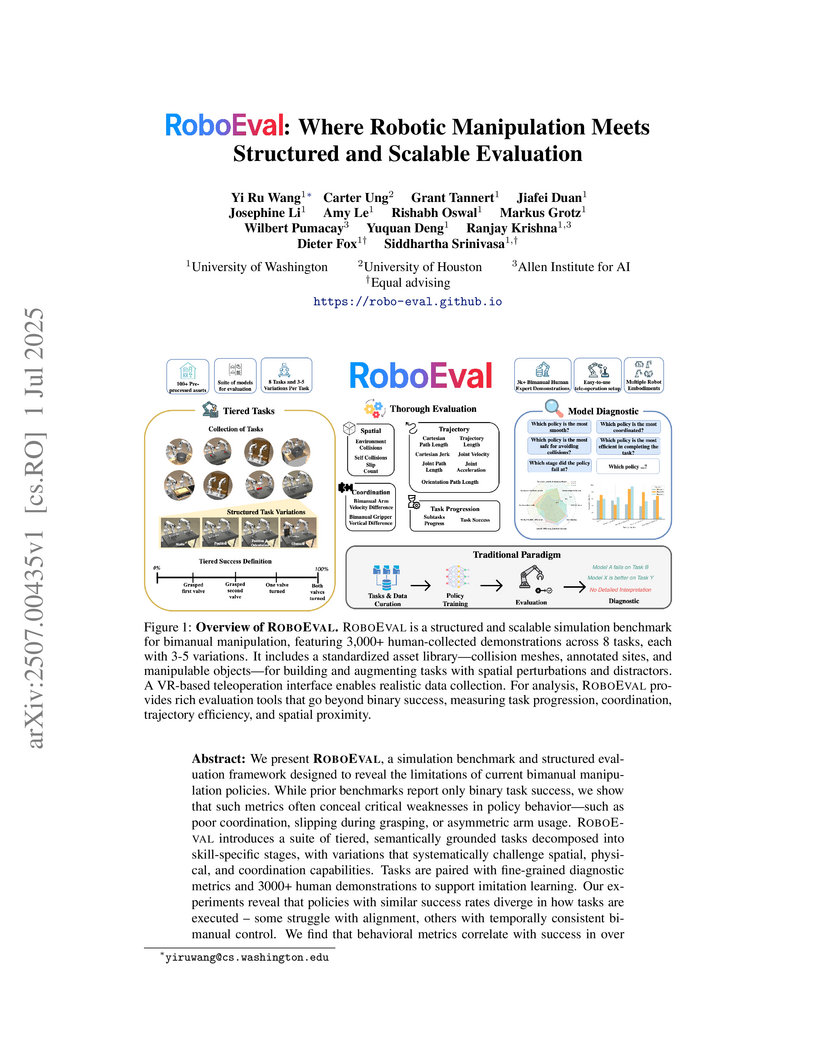

ROBOEVAL presents a diagnostic benchmark for bimanual robotic manipulation, featuring 8 tiered tasks with over 3,000 human demonstrations and a multi-dimensional evaluation framework. This approach reveals that fine-grained behavioral and outcome metrics provide a substantially deeper understanding of policy limitations and failure modes than traditional binary success rates.

As an important component of the sixth generation communication technologies,

the space-air-ground integrated network (SAGIN) attracts increasing attentions

in recent years. However, due to the mobility and heterogeneity of the

components such as satellites and unmanned aerial vehicles in multi-layer

SAGIN, the challenges of inefficient resource allocation and management

complexity are aggregated. To this end, the network function virtualization

technology is introduced and can be implemented via service function chains

(SFCs) deployment. However, urgent unexpected tasks may bring conflicts and

resource competition during SFC deployment, and how to schedule the SFCs of

multiple tasks in SAGIN is a key issue. In this paper, we address the dynamic

and complexity of SAGIN by presenting a reconfigurable time extension graph and

further propose the dynamic SFC scheduling model. Then, we formulate the SFC

scheduling problem to maximize the number of successful deployed SFCs within

limited resources and time horizons. Since the problem is in the form of

integer linear programming and intractable to solve, we propose the algorithm

by incorporating deep reinforcement learning. Finally, simulation results show

that the proposed algorithm has better convergence and performance compared to

other benchmark algorithms.

Time series analysis has witnessed the inspiring development from traditional autoregressive models, deep learning models, to recent Transformers and Large Language Models (LLMs). Efforts in leveraging vision models for time series analysis have also been made along the way but are less visible to the community due to the predominant research on sequence modeling in this domain. However, the discrepancy between continuous time series and the discrete token space of LLMs, and the challenges in explicitly modeling the correlations of variates in multivariate time series have shifted some research attentions to the equally successful Large Vision Models (LVMs) and Vision Language Models (VLMs). To fill the blank in the existing literature, this survey discusses the advantages of vision models over LLMs in time series analysis. It provides a comprehensive and in-depth overview of the existing methods, with dual views of detailed taxonomy that answer the key research questions including how to encode time series as images and how to model the imaged time series for various tasks. Additionally, we address the challenges in the pre- and post-processing steps involved in this framework and outline future directions to further advance time series analysis with vision models.

We introduce a generalized framework for Scene Change Detection (SCD) that addresses the core ambiguity of distinguishing "relevant" from "nuisance" changes, enabling effective joint training of a single model across diverse domains and applications. Existing methods struggle to generalize due to differences in dataset labeling, where changes such as vegetation growth or lane marking alterations may be labeled as relevant in one dataset and irrelevant in another. To resolve this ambiguity, we propose ViewDelta, a text conditioned change detection framework that uses natural language prompts to define relevant changes precisely, such as a single attribute, a specific set of classes, or all observable differences. To facilitate training in this paradigm, we release the Conditional Change Segmentation dataset (CSeg), the first large-scale synthetic dataset for text conditioned SCD, consisting of over 500,000 image pairs with more than 300,000 unique textual prompts describing relevant changes. Experiments demonstrate that a single ViewDelta model trained jointly on CSeg, SYSU-CD, PSCD, VL-CMU-CD, and their unaligned variants achieves performance competitive with or superior to dataset specific models, highlighting text conditioning as a powerful approach for generalizable SCD. Our code and dataset are available at this https URL.

30 May 2025

Retrieval-Augmented Generation (RAG) systems are increasingly diverse, yet

many suffer from monolithic designs that tightly couple core functions like

query reformulation, retrieval, reasoning, and verification. This limits their

interpretability, systematic evaluation, and targeted improvement, especially

for complex multi-hop question answering. We introduce ComposeRAG, a novel

modular abstraction that decomposes RAG pipelines into atomic, composable

modules. Each module, such as Question Decomposition, Query Rewriting,

Retrieval Decision, and Answer Verification, acts as a parameterized

transformation on structured inputs/outputs, allowing independent

implementation, upgrade, and analysis. To enhance robustness against errors in

multi-step reasoning, ComposeRAG incorporates a self-reflection mechanism that

iteratively revisits and refines earlier steps upon verification failure.

Evaluated on four challenging multi-hop QA benchmarks, ComposeRAG consistently

outperforms strong baselines in both accuracy and grounding fidelity.

Specifically, it achieves up to a 15% accuracy improvement over

fine-tuning-based methods and up to a 5% gain over reasoning-specialized

pipelines under identical retrieval conditions. Crucially, ComposeRAG

significantly enhances grounding: its verification-first design reduces

ungrounded answers by over 10% in low-quality retrieval settings, and by

approximately 3% even with strong corpora. Comprehensive ablation studies

validate the modular architecture, demonstrating distinct and additive

contributions from each component. These findings underscore ComposeRAG's

capacity to deliver flexible, transparent, scalable, and high-performing

multi-hop reasoning with improved grounding and interpretability.

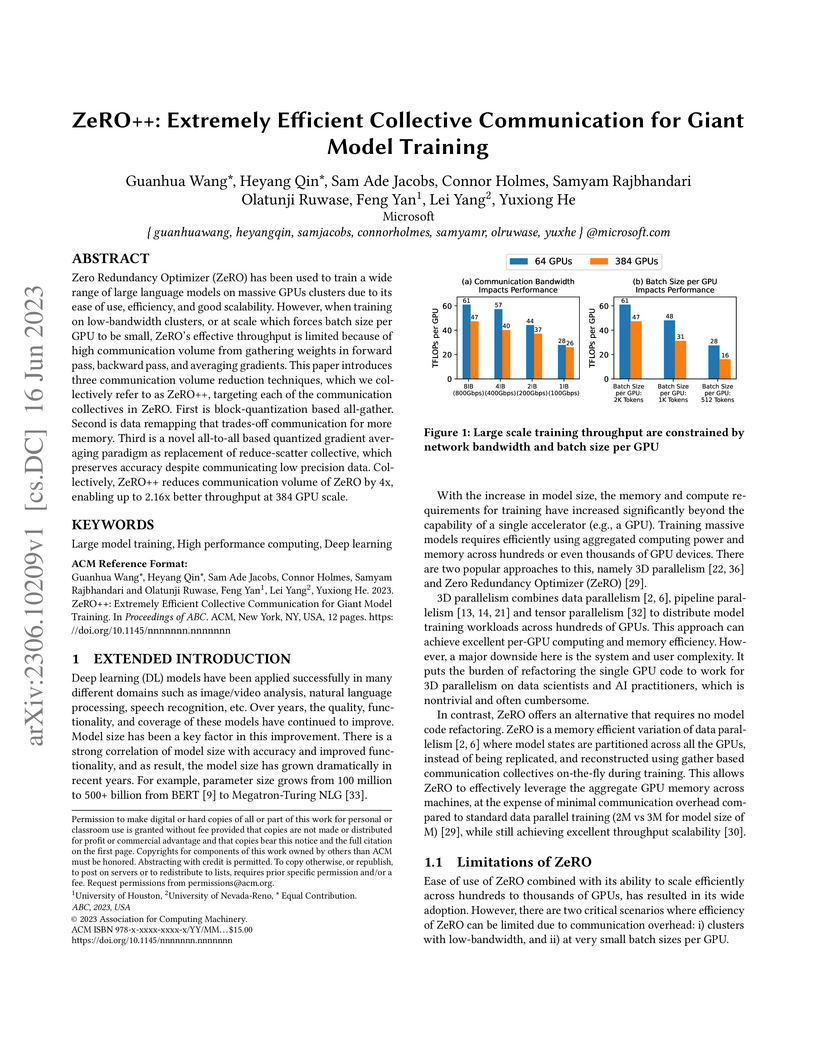

Zero Redundancy Optimizer (ZeRO) has been used to train a wide range of large language models on massive GPUs clusters due to its ease of use, efficiency, and good scalability. However, when training on low-bandwidth clusters, or at scale which forces batch size per GPU to be small, ZeRO's effective throughput is limited because of high communication volume from gathering weights in forward pass, backward pass, and averaging gradients. This paper introduces three communication volume reduction techniques, which we collectively refer to as ZeRO++, targeting each of the communication collectives in ZeRO. First is block-quantization based all-gather. Second is data remapping that trades-off communication for more memory. Third is a novel all-to-all based quantized gradient averaging paradigm as replacement of reduce-scatter collective, which preserves accuracy despite communicating low precision data. Collectively, ZeRO++ reduces communication volume of ZeRO by 4x, enabling up to 2.16x better throughput at 384 GPU scale.

With the rapid adoption of large language models (LLMs) in recommendation systems, the computational and communication bottlenecks caused by their massive parameter sizes and large data volumes have become increasingly prominent. This paper systematically investigates two classes of optimization methods-model parallelism and data parallelism-for distributed training of LLMs in recommendation scenarios. For model parallelism, we implement both tensor parallelism and pipeline parallelism, and introduce an adaptive load-balancing mechanism to reduce cross-device communication overhead. For data parallelism, we compare synchronous and asynchronous modes, combining gradient compression and sparsification techniques with an efficient aggregation communication framework to significantly improve bandwidth utilization. Experiments conducted on a real-world recommendation dataset in a simulated service environment demonstrate that our proposed hybrid parallelism scheme increases training throughput by over 30% and improves resource utilization by approximately 20% compared to traditional single-mode parallelism, while maintaining strong scalability and robustness. Finally, we discuss trade-offs among different parallel strategies in online deployment and outline future directions involving heterogeneous hardware integration and automated scheduling technologies.

13 Aug 2025

Edge General Intelligence (EGI) represents a transformative evolution of edge computing, where distributed agents possess the capability to perceive, reason, and act autonomously across diverse, dynamic environments. Central to this vision are world models, which act as proactive internal simulators that not only predict but also actively imagine future trajectories, reason under uncertainty, and plan multi-step actions with foresight. This proactive nature allows agents to anticipate potential outcomes and optimize decisions ahead of real-world interactions. While prior works in robotics and gaming have showcased the potential of world models, their integration into the wireless edge for EGI remains underexplored. This survey bridges this gap by offering a comprehensive analysis of how world models can empower agentic artificial intelligence (AI) systems at the edge. We first examine the architectural foundations of world models, including latent representation learning, dynamics modeling, and imagination-based planning. Building on these core capabilities, we illustrate their proactive applications across EGI scenarios such as vehicular networks, unmanned aerial vehicle (UAV) networks, the Internet of Things (IoT) systems, and network functions virtualization, thereby highlighting how they can enhance optimization under latency, energy, and privacy constraints. We then explore their synergy with foundation models and digital twins, positioning world models as the cognitive backbone of EGI. Finally, we highlight open challenges, such as safety guarantees, efficient training, and constrained deployment, and outline future research directions. This survey provides both a conceptual foundation and a practical roadmap for realizing the next generation of intelligent, autonomous edge systems.

Researchers from Universidad Nacional de Colombia, University of Houston, and INAOE developed Gated Multimodal Units (GMUs) for integrating heterogeneous data, achieving a macro f-score of 0.541 on a new MM-IMDb dataset for movie genre classification, outperforming baseline fusion methods. The GMU demonstrated an ability to adaptively weigh modality contributions based on input, improving predictions for 16 out of 23 genres.

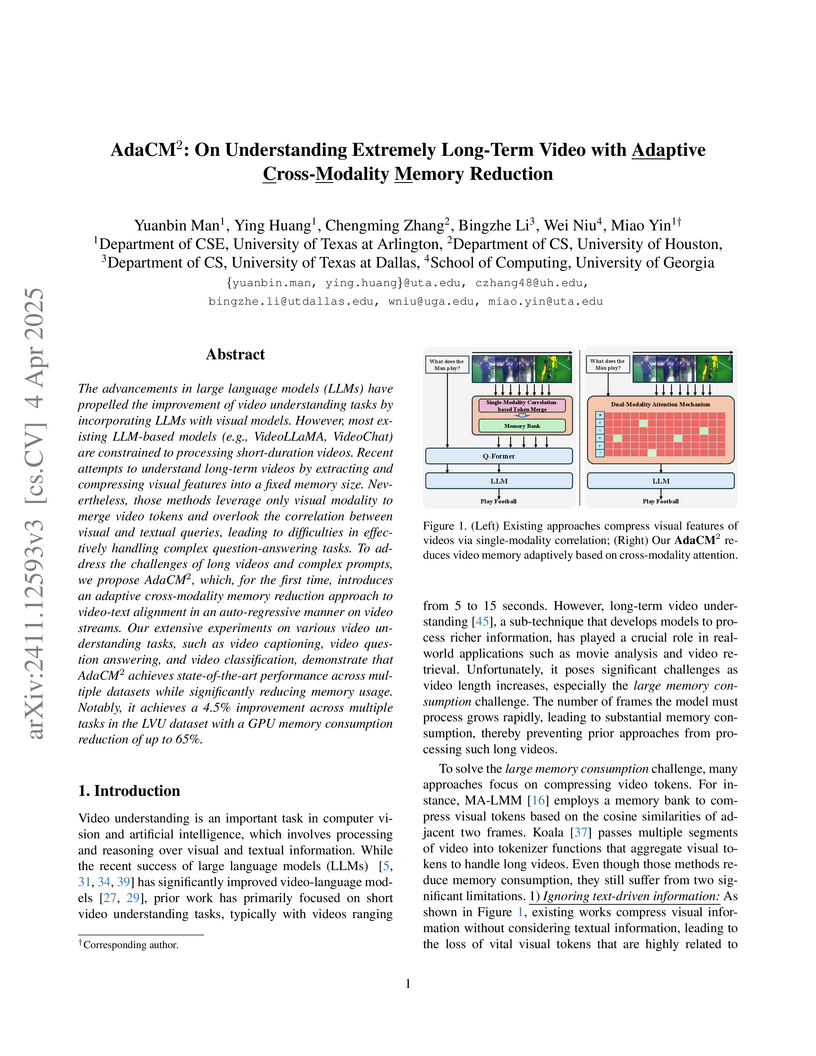

AdaCM^2 introduces an adaptive cross-modality memory reduction framework for understanding extremely long-term videos. It processes unbounded video lengths with a bounded memory footprint by dynamically pruning visual tokens based on their relevance to textual queries, reducing GPU memory consumption by up to 65% and achieving improved accuracy on long-term video understanding and captioning benchmarks.

An ideal detection system for machine generated content is supposed to work well on any generator as many more advanced LLMs come into existence day by day. Existing systems often struggle with accurately identifying AI-generated content over shorter texts. Further, not all texts might be entirely authored by a human or LLM, hence we focused more over partial cases i.e human-LLM co-authored texts. Our paper introduces a set of models built for the task of token classification which are trained on an extensive collection of human-machine co-authored texts, which performed well over texts of unseen domains, unseen generators, texts by non-native speakers and those with adversarial inputs. We also introduce a new dataset of over 2.4M such texts mostly co-authored by several popular proprietary LLMs over 23 languages. We also present findings of our models' performance over each texts of each domain and generator. Additional findings include comparison of performance against each adversarial method, length of input texts and characteristics of generated texts compared to the original human authored texts.

Timely identification and accurate risk stratification of cardiovascular disease (CVD) remain essential for reducing global mortality. While existing prediction models primarily leverage structured data, unstructured clinical notes contain valuable early indicators. This study introduces a novel LLM-augmented clinical NLP pipeline that employs domain-adapted large language models for symptom extraction, contextual reasoning, and correlation from free-text reports. Our approach integrates cardiovascular-specific fine-tuning, prompt-based inference, and entity-aware reasoning. Evaluations on MIMIC-III and CARDIO-NLP datasets demonstrate improved performance in precision, recall, F1-score, and AUROC, with high clinical relevance (kappa = 0.82) assessed by cardiologists. Challenges such as contextual hallucination, which occurs when plausible information contracts with provided source, and temporal ambiguity, which is related with models struggling with chronological ordering of events are addressed using prompt engineering and hybrid rule-based verification. This work underscores the potential of LLMs in clinical decision support systems (CDSS), advancing early warning systems and enhancing the translation of patient narratives into actionable risk assessments.

There are no more papers matching your filters at the moment.