Yandex Research

Researchers from ISTA, Red Hat AI, and ETH Zürich present Micro-Rotated-GPTQ (MR-GPTQ), an algorithm tailored for 4-bit floating-point (FP4) quantization of large language models. This approach, supported by optimized QuTLASS GPU kernels, significantly enhances MXFP4 accuracy and achieves up to 2.2x end-to-end inference speedup on NVIDIA B200 and 4x on RTX5090 GPUs.

08 Apr 2025

Optimization with matrix gradient orthogonalization has recently demonstrated

impressive results in the training of deep neural networks (Jordan et al.,

2024; Liu et al., 2025). In this paper, we provide a theoretical analysis of

this approach. In particular, we show that the orthogonalized gradient method

can be seen as a first-order trust-region optimization method, where the

trust-region is defined in terms of the matrix spectral norm. Motivated by this

observation, we develop the stochastic non-Euclidean trust-region gradient

method with momentum, which recovers the Muon optimizer (Jordan et al., 2024)

as a special case, along with normalized SGD and signSGD with momentum

(Cutkosky and Mehta, 2020; Sun et al., 2023). In addition, we prove

state-of-the-art convergence results for the proposed algorithm in a range of

scenarios, which involve arbitrary non-Euclidean norms, constrained and

composite problems, and non-convex, star-convex, first- and second-order smooth

functions. Finally, our theoretical findings provide an explanation for several

practical observations, including the practical superiority of Muon compared to

the Orthogonal-SGDM algorithm of Tuddenham et al. (2022) and the importance of

weight decay in the training of large-scale language models.

While foundation models have revolutionized such fields as natural language processing and computer vision, their potential in graph machine learning remains largely unexplored. One of the key challenges in designing graph foundation models (GFMs) is handling diverse node features that can vary across different graph datasets. While many works on GFMs have focused exclusively on text-attributed graphs, the problem of handling arbitrary features of other types in GFMs has not been fully addressed. However, this problem is not unique to the graph domain, as it also arises in the field of machine learning for tabular data. In this work, motivated by the recent success of tabular foundation models (TFMs) like TabPFNv2 or LimiX, we propose G2T-FM, a simple framework for turning tabular foundation models into graph foundation models. Specifically, G2T-FM augments the original node features with neighborhood feature aggregation, adds structural embeddings, and then applies a TFM to the constructed node representations. Even in a fully in-context regime, our model achieves strong results, significantly outperforming publicly available GFMs and performing competitively with, and often better than, well-tuned GNNs trained from scratch. Moreover, after finetuning, G2T-FM surpasses well-tuned GNN baselines. In particular, when combined with LimiX, G2T-FM often outperforms the best GNN by a significant margin. In summary, our paper reveals the potential of a previously overlooked direction of utilizing tabular foundation models for graph machine learning tasks.

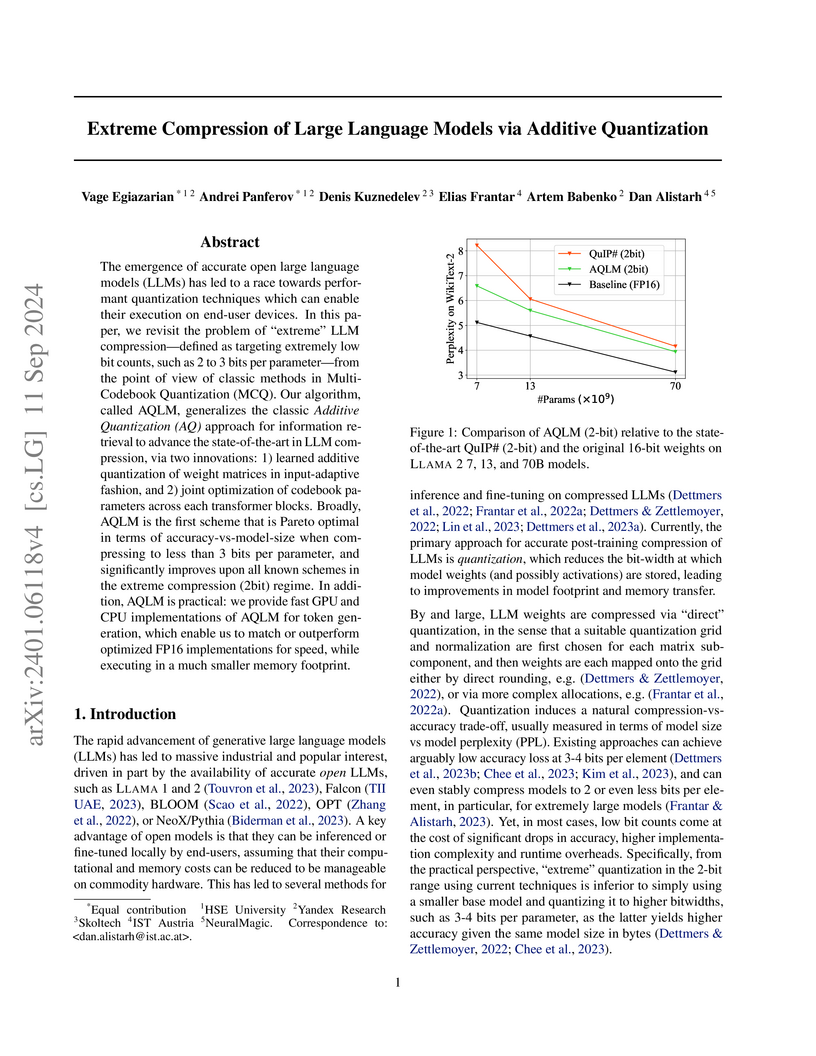

Researchers introduce AQLM, a method that adapts Additive Quantization for extreme compression of Large Language Models to 2-3 bits per parameter. This approach achieves state-of-the-art perplexity and zero-shot accuracy across various LLM architectures, becoming the first to reach Pareto optimality at these low bit-widths.

Node classification is a classical graph machine learning task on which Graph Neural Networks (GNNs) have recently achieved strong results. However, it is often believed that standard GNNs only work well for homophilous graphs, i.e., graphs where edges tend to connect nodes of the same class. Graphs without this property are called heterophilous, and it is typically assumed that specialized methods are required to achieve strong performance on such graphs. In this work, we challenge this assumption. First, we show that the standard datasets used for evaluating heterophily-specific models have serious drawbacks, making results obtained by using them unreliable. The most significant of these drawbacks is the presence of a large number of duplicate nodes in the datasets Squirrel and Chameleon, which leads to train-test data leakage. We show that removing duplicate nodes strongly affects GNN performance on these datasets. Then, we propose a set of heterophilous graphs of varying properties that we believe can serve as a better benchmark for evaluating the performance of GNNs under heterophily. We show that standard GNNs achieve strong results on these heterophilous graphs, almost always outperforming specialized models. Our datasets and the code for reproducing our experiments are available at this https URL

A unified, principled framework is presented for uncertainty estimation in autoregressive structured prediction, employing information-theoretic measures and Monte-Carlo approximations. The work demonstrates robust error and out-of-domain input detection capabilities across neural machine translation and automatic speech recognition models, with token-level Bayesian model averaging consistently improving both predictive performance and uncertainty estimates.

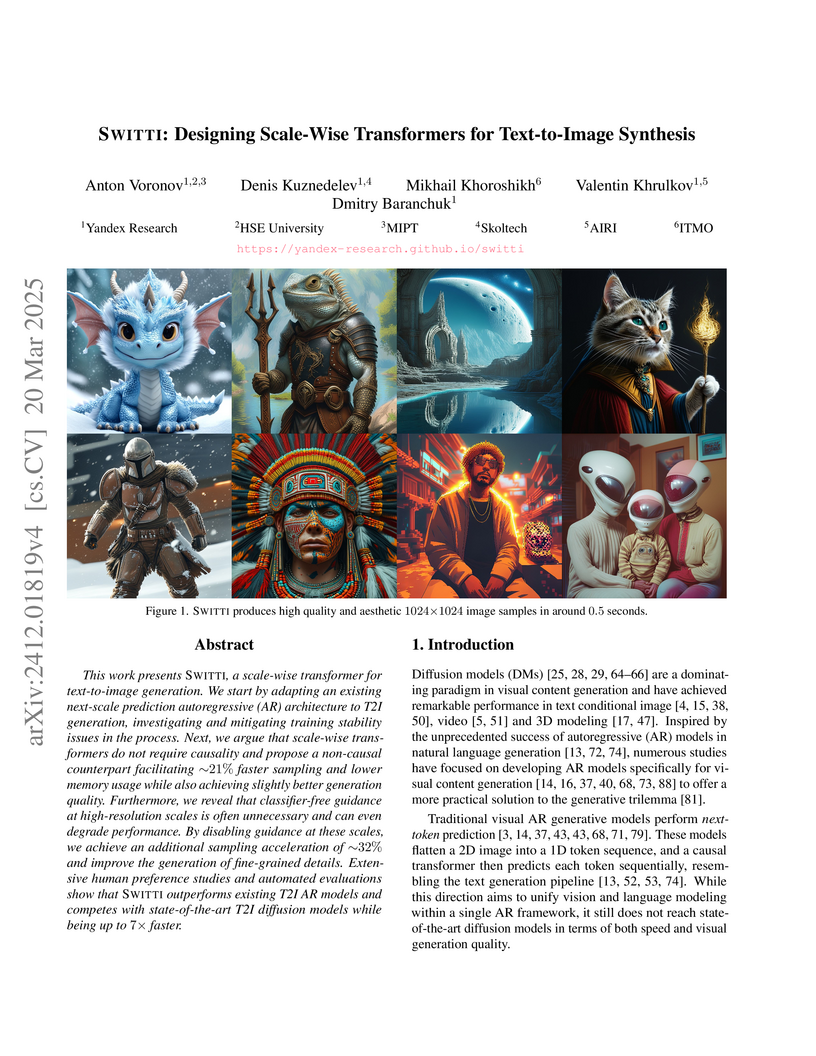

This work presents Switti, a scale-wise transformer for text-to-image

generation. We start by adapting an existing next-scale prediction

autoregressive (AR) architecture to T2I generation, investigating and

mitigating training stability issues in the process. Next, we argue that

scale-wise transformers do not require causality and propose a non-causal

counterpart facilitating ~21% faster sampling and lower memory usage while also

achieving slightly better generation quality. Furthermore, we reveal that

classifier-free guidance at high-resolution scales is often unnecessary and can

even degrade performance. By disabling guidance at these scales, we achieve an

additional sampling acceleration of ~32% and improve the generation of

fine-grained details. Extensive human preference studies and automated

evaluations show that Switti outperforms existing T2I AR models and competes

with state-of-the-art T2I diffusion models while being up to 7x faster.

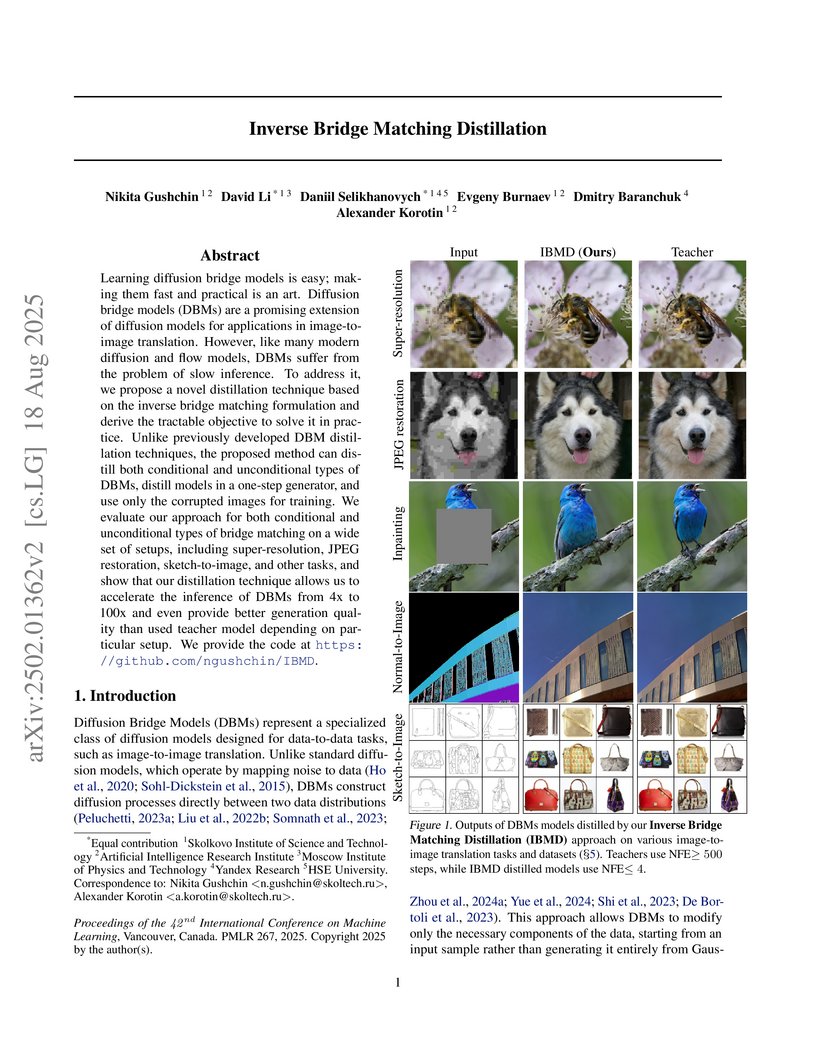

Inverse Bridge Matching Distillation (IBMD) presents a universal framework for accelerating Diffusion Bridge Models (DBMs), achieving up to 100x faster inference for both conditional and unconditional DBMs. The method often maintains or even improves generation quality, as demonstrated by superior FID and Classifier Accuracy in 4x super-resolution tasks.

Although data that can be naturally represented as graphs is widespread in real-world applications across diverse industries, popular graph ML benchmarks for node property prediction only cover a surprisingly narrow set of data domains, and graph neural networks (GNNs) are often evaluated on just a few academic citation networks. This issue is particularly pressing in light of the recent growing interest in designing graph foundation models. These models are supposed to be able to transfer to diverse graph datasets from different domains, and yet the proposed graph foundation models are often evaluated on a very limited set of datasets from narrow applications. To alleviate this issue, we introduce GraphLand: a benchmark of 14 diverse graph datasets for node property prediction from a range of different industrial applications. GraphLand allows evaluating graph ML models on a wide range of graphs with diverse sizes, structural characteristics, and feature sets, all in a unified setting. Further, GraphLand allows investigating such previously underexplored research questions as how realistic temporal distributional shifts under transductive and inductive settings influence graph ML model performance. To mimic realistic industrial settings, we use GraphLand to compare GNNs with gradient-boosted decision trees (GBDT) models that are popular in industrial applications and show that GBDTs provided with additional graph-based input features can sometimes be very strong baselines. Further, we evaluate currently available general-purpose graph foundation models and find that they fail to produce competitive results on our proposed datasets.

Diffusion models for super-resolution (SR) produce high-quality visual results but require expensive computational costs. Despite the development of several methods to accelerate diffusion-based SR models, some (e.g., SinSR) fail to produce realistic perceptual details, while others (e.g., OSEDiff) may hallucinate non-existent structures. To overcome these issues, we present RSD, a new distillation method for ResShift, one of the top diffusion-based SR models. Our method is based on training the student network to produce such images that a new fake ResShift model trained on them will coincide with the teacher model. RSD achieves single-step restoration and outperforms the teacher by a large margin. We show that our distillation method can surpass the other distillation-based method for ResShift - SinSR - making it on par with state-of-the-art diffusion-based SR distillation methods. Compared to SR methods based on pre-trained text-to-image models, RSD produces competitive perceptual quality, provides images with better alignment to degraded input images, and requires fewer parameters and GPU memory. We provide experimental results on various real-world and synthetic datasets, including RealSR, RealSet65, DRealSR, ImageNet, and DIV2K.

While traditional Deep Learning (DL) optimization methods treat all training samples equally, Distributionally Robust Optimization (DRO) adaptively assigns importance weights to different samples. However, a significant gap exists between DRO and current DL practices. Modern DL optimizers require adaptivity and the ability to handle stochastic gradients, as these methods demonstrate superior performance. Additionally, for practical applications, a method should allow weight assignment not only to individual samples, but also to groups of objects (for example, all samples of the same class). This paper aims to bridge this gap by introducing ALSO \unicodex2013 Adaptive Loss Scaling Optimizer \unicodex2013 an adaptive algorithm for a modified DRO objective that can handle weight assignment to sample groups. We prove the convergence of our proposed algorithm for non-convex objectives, which is the typical case for DL models. Empirical evaluation across diverse Deep Learning tasks, from Tabular DL to Split Learning tasks, demonstrates that ALSO outperforms both traditional optimizers and existing DRO methods.

The paper proposes a novel machine learning-based approach to the pathfinding

problem on extremely large graphs. This method leverages diffusion distance

estimation via a neural network and uses beam search for pathfinding. We

demonstrate its efficiency by finding solutions for 4x4x4 and 5x5x5 Rubik's

cubes with unprecedentedly short solution lengths, outperforming all available

solvers and introducing the first machine learning solver beyond the 3x3x3

case. In particular, it surpasses every single case of the combined best

results in the Kaggle Santa 2023 challenge, which involved over 1,000 teams.

For the 3x3x3 Rubik's cube, our approach achieves an optimality rate exceeding

98%, matching the performance of task-specific solvers and significantly

outperforming prior solutions such as DeepCubeA (60.3%) and EfficientCube

(69.6%). Additionally, our solution is more than 26 times faster in solving

3x3x3 Rubik's cubes while requiring up to 18.5 times less model training time

than the most efficient state-of-the-art competitor.

Traffic forecasting on road networks is a complex task of significant practical importance that has recently attracted considerable attention from the machine learning community, with spatiotemporal graph neural networks (GNNs) becoming the most popular approach. The proper evaluation of traffic forecasting methods requires realistic datasets, but current publicly available benchmarks have significant drawbacks, including the absence of information about road connectivity for road graph construction, limited information about road properties, and a relatively small number of road segments that falls short of real-world applications. Further, current datasets mostly contain information about intercity highways with sparsely located sensors, while city road networks arguably present a more challenging forecasting task due to much denser roads and more complex urban traffic patterns. In this work, we provide a more complete, realistic, and challenging benchmark for traffic forecasting by releasing datasets representing the road networks of two major cities, with the largest containing almost 100,000 road segments (more than a 10-fold increase relative to existing datasets). Our datasets contain rich road features and provide fine-grained data about both traffic volume and traffic speed, allowing for building more holistic traffic forecasting systems. We show that most current implementations of neural spatiotemporal models for traffic forecasting have problems scaling to datasets of our size. To overcome this issue, we propose an alternative approach to neural traffic forecasting that uses a GNN without a dedicated module for temporal sequence processing, thus achieving much better scalability, while also demonstrating stronger forecasting performance. We hope our datasets and modeling insights will serve as a valuable resource for research in traffic forecasting.

A scale-wise distillation framework reduces diffusion model computation by progressively increasing spatial resolution during sampling, achieving 2.5-10x faster inference while matching or exceeding image generation quality of full-resolution models through patch distribution matching and dynamic resolution scheduling.

GraphPFN introduces a Graph Foundation Model that integrates trainable graph-aware message-passing adapters into a tabular foundation model, pretrained on a sophisticated synthetic graph prior to enable in-context learning. This approach achieves state-of-the-art results on diverse medium-scale real-world graph datasets for node-level tasks after fine-tuning, outperforming existing GNNs and prior GFM iterations.

Yandex researchers develop Alchemist, a supervised fine-tuning dataset created through a novel multi-stage filtering pipeline that uses a pre-trained diffusion model's cross-attention activations to assess image quality from large-scale web data, achieving up to 20% win rate improvements in aesthetic quality and image complexity when fine-tuning five publicly available text-to-image models (SD1.5, SD2.1, SDXL1.0, SD3.5 Medium, and SD3.5 Large) while demonstrating that exceptional sample quality matters more than dataset volume for effective supervised fine-tuning.

In this paper, we revisit stochastic gradient descent (SGD) with AdaGrad-type preconditioning. Our contributions are twofold. First, we develop a unified convergence analysis of SGD with adaptive preconditioning under anisotropic or matrix smoothness and noise assumptions. This allows us to recover state-of-the-art convergence results for several popular adaptive gradient methods, including AdaGrad-Norm, AdaGrad, and ASGO/One-sided Shampoo. In addition, we establish the fundamental connection between two recently proposed algorithms, Scion and DASGO, and provide the first theoretical guarantees for the latter. Second, we show that the convergence of methods like AdaGrad and DASGO can be provably accelerated beyond the best-known rates using Nesterov momentum. Consequently, we obtain the first theoretical justification that AdaGrad-type algorithms can simultaneously benefit from both diagonal preconditioning and momentum, which may provide an ultimate explanation for the practical efficiency of Adam.

This work investigates text-to-texture synthesis using diffusion models to

generate physically-based texture maps. We aim to achieve realistic model

appearances under varying lighting conditions. A prominent solution for the

task is score distillation sampling. It allows recovering a complex texture

using gradient guidance given a differentiable rasterization and shading

pipeline. However, in practice, the aforementioned solution in conjunction with

the widespread latent diffusion models produces severe visual artifacts and

requires additional regularization such as implicit texture parameterization.

As a more direct alternative, we propose an approach using cascaded diffusion

models for texture synthesis (CasTex). In our setup, score distillation

sampling yields high-quality textures out-of-the box. In particular, we were

able to omit implicit texture parameterization in favor of an explicit

parameterization to improve the procedure. In the experiments, we show that our

approach significantly outperforms state-of-the-art optimization-based

solutions on public texture synthesis benchmarks.

MADrive introduces a memory-augmented framework for driving scene modeling, which overcomes limitations in novel-view synthesis of dynamic objects by replacing sparsely observed vehicles with photorealistic 3D models from a large external database. This approach enables the generation of significantly altered driving scenarios and quantitatively improves tracking and segmentation performance on synthesized data for autonomous driving applications.

Learning conditional distributions π∗(⋅∣x) is a central problem in machine learning, which is typically approached via supervised methods with paired data (x,y)∼π∗. However, acquiring paired data samples is often challenging, especially in problems such as domain translation. This necessitates the development of semi-supervised models that utilize both limited paired data and additional unpaired i.i.d. samples x∼πx∗ and y∼πy∗ from the marginal distributions. The usage of such combined data is complex and often relies on heuristic approaches. To tackle this issue, we propose a new learning paradigm that integrates both paired and unpaired data seamlessly using the data likelihood maximization techniques. We demonstrate that our approach also connects intriguingly with inverse entropic optimal transport (OT). This finding allows us to apply recent advances in computational OT to establish an end-to-end learning algorithm to get π∗(⋅∣x). In addition, we derive the universal approximation property, demonstrating that our approach can theoretically recover true conditional distributions with arbitrarily small error. Furthermore, we demonstrate through empirical tests that our method effectively learns conditional distributions using paired and unpaired data simultaneously.

There are no more papers matching your filters at the moment.