Brno University of Technology

In few-shot learning, such as meta-learning, few-shot fine-tuning or in-context learning, the limited number of samples used to train a model have a significant impact on the overall success. Although a large number of sample selection strategies exist, their impact on the performance of few-shot learning is not extensively known, as most of them have been so far evaluated in typical supervised settings only. In this paper, we thoroughly investigate the impact of 20 sample selection strategies on the performance of 5 few-shot learning approaches over 8 image and 6 text datasets. In addition, we propose a new method for automatic combination of sample selection strategies (ACSESS) that leverages the strengths and complementary information of the individual strategies. The experimental results show that our method consistently outperforms the individual selection strategies, as well as the recently proposed method for selecting support examples for in-context learning. We also show a strong modality, dataset and approach dependence for the majority of strategies as well as their dependence on the number of shots - demonstrating that the sample selection strategies play a significant role for lower number of shots, but regresses to random selection at higher number of shots.

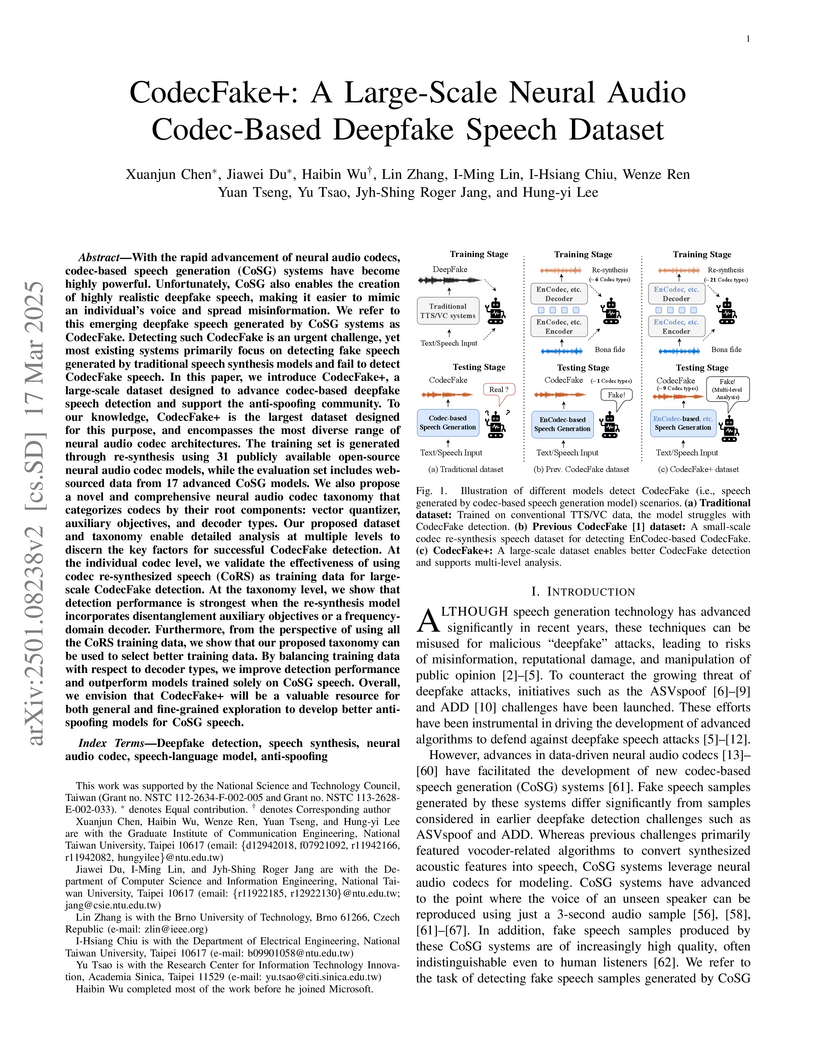

Researchers at National Taiwan University introduced CodecFake+, a large-scale dataset, and a novel taxonomy for neural audio codecs, enabling improved deepfake speech detection. A taxonomy-guided data balancing strategy achieved an 11.91% Equal Error Rate on a diverse set of unseen CodecFake samples.

KAISTShanghai Artificial Intelligence Laboratory

KAISTShanghai Artificial Intelligence Laboratory Carnegie Mellon UniversityBrno University of Technology

Carnegie Mellon UniversityBrno University of Technology Tsinghua University

Tsinghua University University of Maryland, College Park

University of Maryland, College Park Microsoft

Microsoft Johns Hopkins UniversityUniversidad Autónoma de MadridIndian Institute of Technology, BombayTufts UniversityUniversidad de Buenos AiresUniversiti Sains MalaysiaMiddlebury CollegeAthens University of Economics and BusinessPhonexiaTelefónicaUniversity of Texas, Austin

Johns Hopkins UniversityUniversidad Autónoma de MadridIndian Institute of Technology, BombayTufts UniversityUniversidad de Buenos AiresUniversiti Sains MalaysiaMiddlebury CollegeAthens University of Economics and BusinessPhonexiaTelefónicaUniversity of Texas, AustinMMAU-Pro introduces a comprehensive benchmark of 5,305 expert-annotated instances designed to holistically evaluate audio general intelligence in AI models across complex, real-world scenarios. The benchmark reveals that even state-of-the-art models exhibit substantial limitations in multi-audio reasoning, spatial understanding, and long-form audio comprehension, indicating significant room for improvement.

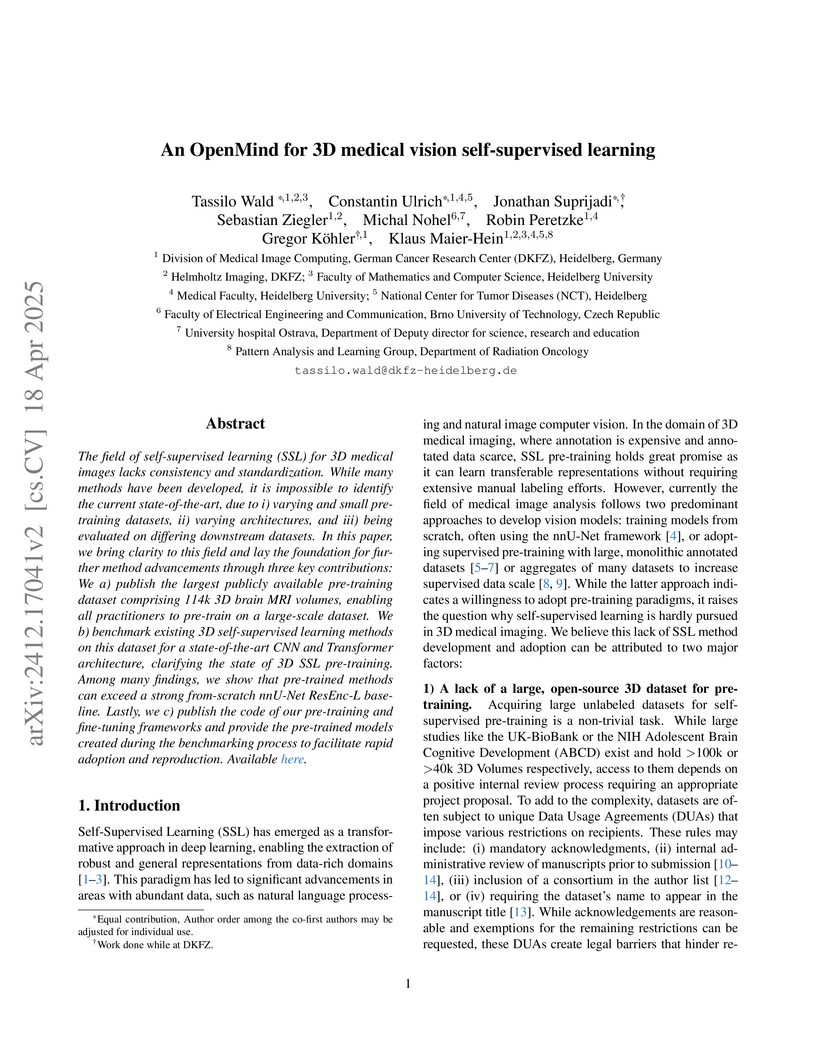

The field of self-supervised learning (SSL) for 3D medical images lacks

consistency and standardization. While many methods have been developed, it is

impossible to identify the current state-of-the-art, due to i) varying and

small pretraining datasets, ii) varying architectures, and iii) being evaluated

on differing downstream datasets. In this paper, we bring clarity to this field

and lay the foundation for further method advancements through three key

contributions: We a) publish the largest publicly available pre-training

dataset comprising 114k 3D brain MRI volumes, enabling all practitioners to

pre-train on a large-scale dataset. We b) benchmark existing 3D self-supervised

learning methods on this dataset for a state-of-the-art CNN and Transformer

architecture, clarifying the state of 3D SSL pre-training. Among many findings,

we show that pre-trained methods can exceed a strong from-scratch nnU-Net

ResEnc-L baseline. Lastly, we c) publish the code of our pre-training and

fine-tuning frameworks and provide the pre-trained models created during the

benchmarking process to facilitate rapid adoption and reproduction.

The dissemination of false information on online platforms presents a serious societal challenge. While manual fact-checking remains crucial, Large Language Models (LLMs) offer promising opportunities to support fact-checkers with their vast knowledge and advanced reasoning capabilities. This survey explores the application of generative LLMs in fact-checking, highlighting various approaches and techniques for prompting or fine-tuning these models. By providing an overview of existing methods and their limitations, the survey aims to enhance the understanding of how LLMs can be used in fact-checking and to facilitate further progress in their integration into the fact-checking process.

We present ESPnet-SpeechLM, an open toolkit designed to democratize the

development of speech language models (SpeechLMs) and voice-driven agentic

applications. The toolkit standardizes speech processing tasks by framing them

as universal sequential modeling problems, encompassing a cohesive workflow of

data preprocessing, pre-training, inference, and task evaluation. With

ESPnet-SpeechLM, users can easily define task templates and configure key

settings, enabling seamless and streamlined SpeechLM development. The toolkit

ensures flexibility, efficiency, and scalability by offering highly

configurable modules for every stage of the workflow. To illustrate its

capabilities, we provide multiple use cases demonstrating how competitive

SpeechLMs can be constructed with ESPnet-SpeechLM, including a 1.7B-parameter

model pre-trained on both text and speech tasks, across diverse benchmarks. The

toolkit and its recipes are fully transparent and reproducible at:

this https URL

15 Oct 2025

Chat assistants increasingly integrate web search functionality, enabling them to retrieve and cite external sources. While this promises more reliable answers, it also raises the risk of amplifying misinformation from low-credibility sources. In this paper, we introduce a novel methodology for evaluating assistants' web search behavior, focusing on source credibility and the groundedness of responses with respect to cited sources. Using 100 claims across five misinformation-prone topics, we assess GPT-4o, GPT-5, Perplexity, and Qwen Chat. Our findings reveal differences between the assistants, with Perplexity achieving the highest source credibility, whereas GPT-4o exhibits elevated citation of non-credibility sources on sensitive topics. This work provides the first systematic comparison of commonly used chat assistants for fact-checking behavior, offering a foundation for evaluating AI systems in high-stakes information environments.

Researchers from Brno University of Technology and TU Delft developed a comprehensive pipeline for automatically generating 3D building models from 2D raster-wise floor plans, achieving up to 16 percentage points higher mean IoU compared to state-of-the-art methods on the CubiCasa benchmark.

Self-supervised models such as WavLM have demonstrated strong performance for neural speaker diarization. However, these models are typically pre-trained on single-channel recordings, limiting their effectiveness in multi-channel scenarios. Existing diarization systems built on these models often rely on DOVER-Lap to combine outputs from individual channels. Although effective, this approach incurs substantial computational overhead and fails to fully exploit spatial information. In this work, building on DiariZen, a pipeline that combines WavLM-based local endto-end neural diarization with speaker embedding clustering, we introduce a lightweight approach to make pre-trained WavLM spatially aware by inserting channel communication modules into the early layers. Our method is agnostic to both the number of microphone channels and array topologies, ensuring broad applicability. We further propose to fuse multi-channel speaker embeddings by leveraging spatial attention weights. Evaluations on five public datasets show consistent improvements over single-channel baselines and demonstrate superior performance and efficiency compared with DOVER-Lap. Our source code is publicly available at this https URL.

Brno University of Technology's Speech@FIT demonstrates that leveraging the self-supervised WavLM model substantially reduces the labeled data required for neural speaker diarization training, achieving new state-of-the-art performance on far-field datasets like AMI and AISHELL-4. The approach, which integrates WavLM with a Conformer architecture, shows that large amounts of simulated data are often unnecessary and can even be detrimental.

We propose a speaker-attributed (SA) Whisper-based model for multi-talker speech recognition that combines target-speaker modeling with serialized output training (SOT). Our approach leverages a Diarization-Conditioned Whisper (DiCoW) encoder to extract target-speaker embeddings, which are concatenated into a single representation and passed to a shared decoder. This enables the model to transcribe overlapping speech as a serialized output stream with speaker tags and timestamps. In contrast to target-speaker ASR systems such as DiCoW, which decode each speaker separately, our approach performs joint decoding, allowing the decoder to condition on the context of all speakers simultaneously. Experiments show that the model outperforms existing SOT-based approaches and surpasses DiCoW on multi-talker mixtures (e.g., LibriMix).

In our era of widespread false information, human fact-checkers often face the challenge of duplicating efforts when verifying claims that may have already been addressed in other countries or languages. As false information transcends linguistic boundaries, the ability to automatically detect previously fact-checked claims across languages has become an increasingly important task. This paper presents the first comprehensive evaluation of large language models (LLMs) for multilingual previously fact-checked claim detection. We assess seven LLMs across 20 languages in both monolingual and cross-lingual settings. Our results show that while LLMs perform well for high-resource languages, they struggle with low-resource languages. Moreover, translating original texts into English proved to be beneficial for low-resource languages. These findings highlight the potential of LLMs for multilingual previously fact-checked claim detection and provide a foundation for further research on this promising application of LLMs.

We present CS-FLEURS, a new dataset for developing and evaluating code-switched speech recognition and translation systems beyond high-resourced languages. CS-FLEURS consists of 4 test sets which cover in total 113 unique code-switched language pairs across 52 languages: 1) a 14 X-English language pair set with real voices reading synthetically generated code-switched sentences, 2) a 16 X-English language pair set with generative text-to-speech 3) a 60 {Arabic, Mandarin, Hindi, Spanish}-X language pair set with the generative text-to-speech, and 4) a 45 X-English lower-resourced language pair test set with concatenative text-to-speech. Besides the four test sets, CS-FLEURS also provides a training set with 128 hours of generative text-to-speech data across 16 X-English language pairs. Our hope is that CS-FLEURS helps to broaden the scope of future code-switched speech research. Dataset link: this https URL.

Researchers from Johns Hopkins University and Brno University of Technology introduce Polynomial Chaos Expansion (PCE) as a framework for operator learning, offering intrinsic uncertainty quantification and both data-driven and physics-informed variants. The physics-constrained PCE (PC^2) approach demonstrates high accuracy and computational efficiency for learning PDE solution operators, particularly excelling in high-dimensional and data-scarce scenarios.

06 Sep 2025

Target Speech Extraction (TSE) aims to isolate a target speaker's voice from a mixture of multiple speakers by leveraging speaker-specific cues, typically provided as auxiliary audio (a.k.a. cue audio). Although recent advancements in TSE have primarily employed discriminative models that offer high perceptual quality, these models often introduce unwanted artifacts, reduce naturalness, and are sensitive to discrepancies between training and testing environments. On the other hand, generative models for TSE lag in perceptual quality and intelligibility. To address these challenges, we present SoloSpeech, a novel cascaded generative pipeline that integrates compression, extraction, reconstruction, and correction processes. SoloSpeech features a speaker-embedding-free target extractor that utilizes conditional information from the cue audio's latent space, aligning it with the mixture audio's latent space to prevent mismatches. Evaluated on the widely-used Libri2Mix dataset, SoloSpeech achieves the new state-of-the-art intelligibility and quality in target speech extraction while demonstrating exceptional generalization on out-of-domain data and real-world scenarios.

Multilingual Large Language Models (LLMs) offer powerful capabilities for cross-lingual fact-checking. However, these models often exhibit language bias, performing disproportionately better on high-resource languages such as English than on low-resource counterparts. We also present and inspect a novel concept - retrieval bias, when information retrieval systems tend to favor certain information over others, leaving the retrieval process skewed. In this paper, we study language and retrieval bias in the context of Previously Fact-Checked Claim Detection (PFCD). We evaluate six open-source multilingual LLMs across 20 languages using a fully multilingual prompting strategy, leveraging the AMC-16K dataset. By translating task prompts into each language, we uncover disparities in monolingual and cross-lingual performance and identify key trends based on model family, size, and prompting strategy. Our findings highlight persistent bias in LLM behavior and offer recommendations for improving equity in multilingual fact-checking. To investigate retrieval bias, we employed multilingual embedding models and look into the frequency of retrieved claims. Our analysis reveals that certain claims are retrieved disproportionately across different posts, leading to inflated retrieval performance for popular claims while under-representing less common ones.

FLEXIO presents a versatile speech separation and enhancement system capable of processing variable numbers of microphones and speakers, providing user control through prompt vectors. The system achieves strong performance and robust generalization across diverse input/output conditions, including unseen microphone counts and challenging real-world data, often outperforming dedicated baselines and maintaining low Word Error Rates.

Low-resource languages (LRLs) face significant challenges in natural language

processing (NLP) due to limited data. While current state-of-the-art large

language models (LLMs) still struggle with LRLs, smaller multilingual models

(mLMs) such as mBERT and XLM-R offer greater promise due to a better fit of

their capacity to low training data sizes. This study systematically

investigates parameter-efficient adapter-based methods for adapting mLMs to

LRLs, evaluating three architectures: Sequential Bottleneck, Invertible

Bottleneck, and Low-Rank Adaptation. Using unstructured text from GlotCC and

structured knowledge from ConceptNet, we show that small adaptation datasets

(e.g., up to 1 GB of free-text or a few MB of knowledge graph data) yield gains

in intrinsic (masked language modeling) and extrinsic tasks (topic

classification, sentiment analysis, and named entity recognition). We find that

Sequential Bottleneck adapters excel in language modeling, while Invertible

Bottleneck adapters slightly outperform other methods on downstream tasks due

to better embedding alignment and larger parameter counts. Adapter-based

methods match or outperform full fine-tuning while using far fewer parameters,

and smaller mLMs prove more effective for LRLs than massive LLMs like LLaMA-3,

GPT-4, and DeepSeek-R1-based distilled models. While adaptation improves

performance, pre-training data size remains the dominant factor, especially for

languages with extensive pre-training coverage.

Micro-gesture recognition (MGR) targets the identification of subtle and fine-grained human motions and requires accurate modeling of both long-range and local spatiotemporal dependencies. While CNNs are effective at capturing local patterns, they struggle with long-range dependencies due to their limited receptive fields. Transformer-based models address this limitation through self-attention mechanisms but suffer from high computational costs. Recently, Mamba has shown promise as an efficient model, leveraging state space models (SSMs) to enable linear-time processing However, directly applying the vanilla Mamba to MGR may not be optimal. This is because Mamba processes inputs as 1D sequences, with state updates relying solely on the previous state, and thus lacks the ability to model local spatiotemporal dependencies. In addition, previous methods lack a design of motion-awareness, which is crucial in MGR. To overcome these limitations, we propose motion-aware state fusion mamba (MSF-Mamba), which enhances Mamba with local spatiotemporal modeling by fusing local contextual neighboring states. Our design introduces a motion-aware state fusion module based on central frame difference (CFD). Furthermore, a multiscale version named MSF-Mamba+ has been proposed. Specifically, MSF-Mamba supports multiscale motion-aware state fusion, as well as an adaptive scale weighting module that dynamically weighs the fused states across different scales. These enhancements explicitly address the limitations of vanilla Mamba by enabling motion-aware local spatiotemporal modeling, allowing MSF-Mamba and MSF-Mamba to effectively capture subtle motion cues for MGR. Experiments on two public MGR datasets demonstrate that even the lightweight version, namely, MSF-Mamba, achieves SoTA performance, outperforming existing CNN-, Transformer-, and SSM-based models while maintaining high efficiency.

01 Jul 2020

A transimpedance amplifier has been designed for scanning tunneling

microscopy (STM). The amplifier features low noise (limited by the Johnson

noise of the 1 G{\Omega} feedback resistor at low input current and low

frequencies), sufficient bandwidth for most STM applications (50 kHz at 35 pF

input capacitance), a large dynamic range (0.1 pA--50 nA without range

switching), and a low input voltage offset. The amplifier is also suited for

placing its first stage into the cryostat of a low-temperature STM, minimizing

the input capacitance and reducing the Johnson noise of the feedback resistor.

The amplifier may also find applications for specimen current imaging and

electron-beam-induced current measurements in scanning electron microscopy and

as a photodiode amplifier with a large dynamic range. This paper also discusses

the sources of noise including the often neglected effect of non-balanced input

impedance of operational amplifiers and describes how to accurately measure and

adjust the frequency response of low-current transimpedance amplifiers.

There are no more papers matching your filters at the moment.