Rensselaer Polytechnic Institute

05 Dec 2024

Advancements in sensor technology offer significant insights into vehicle conditions, unlocking new venues to enhance fleet operations. While current vehicle health management models provide accurate predictions of vehicle failures, they often fail to integrate these forecasts into operational decision-making, limiting their practical impact. This paper addresses this gap by incorporating sensor-driven failure predictions into a single-vehicle routing problem with time windows. A maintenance cost function is introduced to balance two critical trade-offs: premature maintenance, which leads to underutilization of remaining useful life, and delayed maintenance, which increases the likelihood of breakdowns. Routing problems with time windows are inherently challenging, and integrating maintenance considerations adds significantly to its computational complexity. To address this, we develop a new solution method, called the Iterative Alignment Method (IAM), building on the structural properties of the problem. IAM generates high-quality solutions even in large-size instances where Gurobi cannot find any solutions. Moreover, compared to the traditional periodic maintenance strategy, our sensor-driven approach to maintenance decisions shows improvements in operational and maintenance costs as well as in overall vehicle reliability.

Researchers from Rensselaer Polytechnic Institute and Northeastern University leveraged Large Language Models, specifically GPT-4, to automatically identify milestone achievements in transcripts of group discussions. GPT-4 significantly enhanced detection accuracy for specific milestones, such as increasing "dual" from 25% to 90% and "quadruple" from 65% to 93.5% compared to BERT-based semantic similarity, yet faced challenges with non-deterministic outputs and formatting inconsistencies.

ETH Zurich

ETH Zurich KAIST

KAIST University of WashingtonRensselaer Polytechnic Institute

University of WashingtonRensselaer Polytechnic Institute Google DeepMind

Google DeepMind University of Amsterdam

University of Amsterdam University of Illinois at Urbana-Champaign

University of Illinois at Urbana-Champaign University of CambridgeHeidelberg University

University of CambridgeHeidelberg University University of WaterlooFacebook

University of WaterlooFacebook Carnegie Mellon University

Carnegie Mellon University University of Southern California

University of Southern California Google

Google New York UniversityUniversity of Stuttgart

New York UniversityUniversity of Stuttgart UC Berkeley

UC Berkeley National University of Singapore

National University of Singapore University College London

University College London University of OxfordLMU Munich

University of OxfordLMU Munich Shanghai Jiao Tong University

Shanghai Jiao Tong University University of California, Irvine

University of California, Irvine Tsinghua University

Tsinghua University Stanford University

Stanford University University of Michigan

University of Michigan University of Copenhagen

University of Copenhagen The Chinese University of Hong KongUniversity of Melbourne

The Chinese University of Hong KongUniversity of Melbourne MetaUniversity of Edinburgh

MetaUniversity of Edinburgh OpenAI

OpenAI The University of Texas at Austin

The University of Texas at Austin Cornell University

Cornell University University of California, San DiegoYonsei University

University of California, San DiegoYonsei University McGill University

McGill University Boston UniversityUniversity of Bamberg

Boston UniversityUniversity of Bamberg Nanyang Technological University

Nanyang Technological University Microsoft

Microsoft KU Leuven

KU Leuven Columbia UniversityUC Santa Barbara

Columbia UniversityUC Santa Barbara Allen Institute for AIGerman Research Center for Artificial Intelligence (DFKI)

Allen Institute for AIGerman Research Center for Artificial Intelligence (DFKI) University of Pennsylvania

University of Pennsylvania Johns Hopkins University

Johns Hopkins University Arizona State University

Arizona State University University of Maryland

University of Maryland University of TokyoUniversity of North Carolina at Chapel HillHebrew University of JerusalemAmazonTilburg UniversityUniversity of Massachusetts AmherstUniversity of RochesterUniversity of Duisburg-EssenSapienza University of RomeUniversity of Sheffield

University of TokyoUniversity of North Carolina at Chapel HillHebrew University of JerusalemAmazonTilburg UniversityUniversity of Massachusetts AmherstUniversity of RochesterUniversity of Duisburg-EssenSapienza University of RomeUniversity of Sheffield Princeton University

Princeton University HKUSTUniversity of TübingenTU BerlinSaarland UniversityTechnical University of DarmstadtUniversity of HaifaUniversity of TrentoUniversity of MontrealBilkent UniversityUniversity of Cape TownBar Ilan UniversityIBMUniversity of Mannheim

HKUSTUniversity of TübingenTU BerlinSaarland UniversityTechnical University of DarmstadtUniversity of HaifaUniversity of TrentoUniversity of MontrealBilkent UniversityUniversity of Cape TownBar Ilan UniversityIBMUniversity of Mannheim ServiceNowPotsdam UniversityPolish-Japanese Academy of Information TechnologySalesforceASAPPAI21 LabsValencia Polytechnic UniversityUniversity of Trento, Italy

ServiceNowPotsdam UniversityPolish-Japanese Academy of Information TechnologySalesforceASAPPAI21 LabsValencia Polytechnic UniversityUniversity of Trento, ItalyA large-scale and diverse benchmark, BIG-bench, was introduced to rigorously evaluate the capabilities and limitations of large language models across 204 tasks. The evaluation revealed that even state-of-the-art models currently achieve aggregate scores below 20 (on a 0-100 normalized scale), indicating significantly lower performance compared to human experts.

A comprehensive survey outlines the landscape of Small Language Models (SLMs) in the context of Large Language Models (LLMs), defining SLMs based on task capability and resource constraints. It details their acquisition, enhancement techniques, diverse applications, synergistic collaboration with LLMs, and critical trustworthiness considerations to enable efficient and privacy-preserving AI.

Code2MCP, an agent-based framework, automatically converts GitHub repositories into standardized Model Context Protocol (MCP) services through a multi-agent workflow and a self-correction loop. This system significantly accelerates the creation of agent-ready tools, enabling AI agents to access complex functionalities from existing codebases for tasks like scientific computing.

SentenceKV introduces a method for efficient Large Language Model inference by using sentence-level semantic KV caching, significantly reducing GPU memory usage and maintaining stable, low latency while preserving accuracy for contexts up to 256K tokens. It demonstrated 97.5% retrieval accuracy on the Needle In A Haystack benchmark with a 128-token budget.

Rensselaer Polytechnic InstituteWuhan UniversitySichuan University New York University

New York University Nanjing UniversityThe Chinese University of Hong Kong, Shenzhen

Nanjing UniversityThe Chinese University of Hong Kong, Shenzhen Yale University

Yale University NVIDIA

NVIDIA Columbia University

Columbia University University of Florida

University of Florida Stony Brook UniversityThe University of ManchesterStevens Institute of TechnologyUniversity of Montreal

Stony Brook UniversityThe University of ManchesterStevens Institute of TechnologyUniversity of Montreal

New York University

New York University Nanjing UniversityThe Chinese University of Hong Kong, Shenzhen

Nanjing UniversityThe Chinese University of Hong Kong, Shenzhen Yale University

Yale University NVIDIA

NVIDIA Columbia University

Columbia University University of Florida

University of Florida Stony Brook UniversityThe University of ManchesterStevens Institute of TechnologyUniversity of Montreal

Stony Brook UniversityThe University of ManchesterStevens Institute of TechnologyUniversity of MontrealFinancial LLMs hold promise for advancing financial tasks and domain-specific

applications. However, they are limited by scarce corpora, weak multimodal

capabilities, and narrow evaluations, making them less suited for real-world

application. To address this, we introduce \textit{Open-FinLLMs}, the first

open-source multimodal financial LLMs designed to handle diverse tasks across

text, tabular, time-series, and chart data, excelling in zero-shot, few-shot,

and fine-tuning settings. The suite includes FinLLaMA, pre-trained on a

comprehensive 52-billion-token corpus; FinLLaMA-Instruct, fine-tuned with 573K

financial instructions; and FinLLaVA, enhanced with 1.43M multimodal tuning

pairs for strong cross-modal reasoning. We comprehensively evaluate

Open-FinLLMs across 14 financial tasks, 30 datasets, and 4 multimodal tasks in

zero-shot, few-shot, and supervised fine-tuning settings, introducing two new

multimodal evaluation datasets. Our results show that Open-FinLLMs outperforms

afvanced financial and general LLMs such as GPT-4, across financial NLP,

decision-making, and multi-modal tasks, highlighting their potential to tackle

real-world challenges. To foster innovation and collaboration across academia

and industry, we release all codes

(this https URL) and models under

OSI-approved licenses.

Researchers from Rensselaer Polytechnic Institute and collaborators audit existing Knowledge Graph Question Answering (KGQA) datasets, revealing an average factual correctness of only 57%. They introduce KGQAGen, an LLM-guided framework for creating high-quality, verifiable benchmarks, and use it to construct KGQAGen-10k, which achieves 96.3% factual accuracy and highlights retrieval as a primary bottleneck for state-of-the-art KG-RAG models.

Rensselaer Polytechnic InstituteUniversity of Bonn University of SouthamptonUniversität Stuttgart

University of SouthamptonUniversität Stuttgart Rutgers UniversitySapienza University of RomeUniversidad de ChileLinköping UniversityVrije UniversiteitUniversidad de OviedoUniversity of BariUniversität PaderbornWU ViennaUniversität Koblenz–Landaudata.worldÉcole des mines de Saint-ÉtienneUniversity of Milano

Bicocca

Rutgers UniversitySapienza University of RomeUniversidad de ChileLinköping UniversityVrije UniversiteitUniversidad de OviedoUniversity of BariUniversität PaderbornWU ViennaUniversität Koblenz–Landaudata.worldÉcole des mines de Saint-ÉtienneUniversity of Milano

Bicocca

University of SouthamptonUniversität Stuttgart

University of SouthamptonUniversität Stuttgart Rutgers UniversitySapienza University of RomeUniversidad de ChileLinköping UniversityVrije UniversiteitUniversidad de OviedoUniversity of BariUniversität PaderbornWU ViennaUniversität Koblenz–Landaudata.worldÉcole des mines de Saint-ÉtienneUniversity of Milano

Bicocca

Rutgers UniversitySapienza University of RomeUniversidad de ChileLinköping UniversityVrije UniversiteitUniversidad de OviedoUniversity of BariUniversität PaderbornWU ViennaUniversität Koblenz–Landaudata.worldÉcole des mines de Saint-ÉtienneUniversity of Milano

BicoccaThis collaborative tutorial from 18 leading researchers provides a comprehensive, unifying summary of knowledge graphs, consolidating fragmented knowledge from diverse fields. It defines core concepts, surveys techniques across data models, knowledge representation, and AI, and outlines lifecycle management and governance for knowledge graphs.

BIOCLIP is a vision foundation model that significantly improves fine-grained biological image classification and generalization to unseen taxa by leveraging a novel 10-million image dataset (TREEOFLIFE-10M) and adapting CLIP's contrastive learning to incorporate the hierarchical structure of biological taxonomy. It achieves an average absolute improvement of 17.5% over original CLIP in zero-shot classification across 10 diverse biology tasks and 38.0% zero-shot accuracy on a novel RARE SPECIES dataset of unseen taxa.

A multi-agent framework called Foam-Agent automates OpenFOAM-based CFD simulations through natural language inputs, achieving an 83.6% success rate with Claude 3.5 Sonnet through specialized agents handling architecture planning, configuration generation, simulation execution, and error correction while maintaining consistency across interdependent files.

05 Mar 2025

DrugAgent is a multi-agent LLM framework that automates machine learning programming for drug discovery tasks, integrating deep domain knowledge and intelligent planning. The system consistently outperforms general-purpose LLM agents and often matches human expert baselines across ADMET, HTS, and DTI prediction tasks, exhibiting zero domain-specific errors due to its specialized architecture.

Researchers from Rensselaer Polytechnic Institute, Michigan State University, New Jersey Institute of Technology, and IBM Research present the first theoretical generalization analysis of task arithmetic on nonlinear Transformer models. The work explains why task vectors are effective for model editing across multi-task learning, unlearning, and out-of-domain generalization, while also proving their inherent low-rank and sparse properties.

02 Oct 2025

Researchers at Rensselaer Polytechnic Institute and IBM Research introduce "Contrastive Retrieval heads" (CoRe heads) for attention-based re-ranking, a method that selectively aggregates attention signals from a small, highly discriminative subset of transformer heads to enhance the accuracy and efficiency of Large Language Model (LLM) re-rankers. This approach consistently achieved state-of-the-art zero-shot performance across diverse benchmarks, including multilingual datasets, while reducing GPU memory usage by 40% and inference latency by 20% through strategic layer pruning.

30 Sep 2025

Computational Fluid Dynamics (CFD) is an essential simulation tool in engineering, yet its steep learning curve and complex manual setup create significant barriers. To address these challenges, we introduce Foam-Agent, a multi-agent framework that automates the entire end-to-end OpenFOAM workflow from a single natural language prompt. Our key innovations address critical gaps in existing systems: 1. An Comprehensive End-to-End Simulation Automation: Foam-Agent is the first system to manage the full simulation pipeline, including advanced pre-processing with a versatile Meshing Agent capable of handling external mesh files and generating new geometries via Gmsh, automatic generation of HPC submission scripts, and post-simulation visualization via ParaView. 2. Composable Service Architecture: Going beyond a monolithic agent, the framework uses Model Context Protocol (MCP) to expose its core functions as discrete, callable tools. This allows for flexible integration and use by other agentic systems, such as Claude-code, for more exploratory workflows. 3. High-Fidelity Configuration Generation: We achieve superior accuracy through a Hierarchical Multi-Index RAG for precise context retrieval and a dependency-aware generation process that ensures configuration consistency. Evaluated on a benchmark of 110 simulation tasks, Foam-Agent achieves an 88.2% success rate with Claude 3.5 Sonnet, significantly outperforming existing frameworks (55.5% for MetaOpenFOAM). Foam-Agent dramatically lowers the expertise barrier for CFD, demonstrating how specialized multi-agent systems can democratize complex scientific computing. The code is public at this https URL.

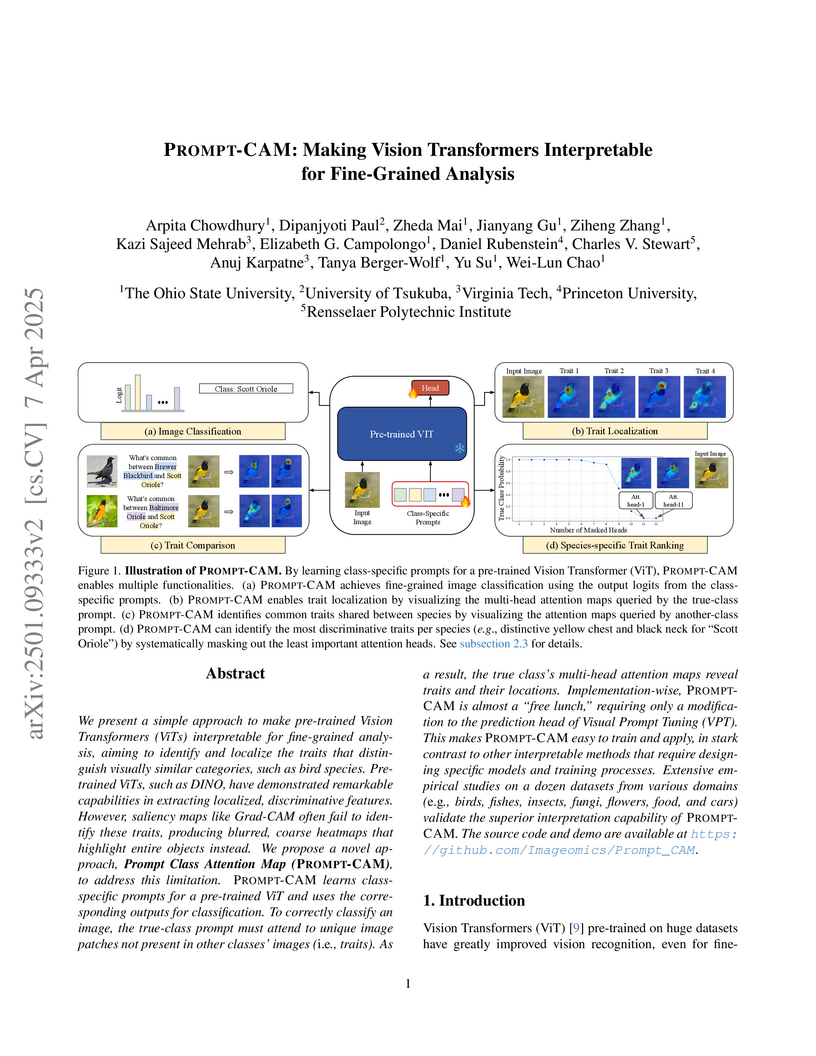

PROMPT-CAM introduces class-specific prompts into frozen Vision Transformers to accurately localize fine-grained visual traits that differentiate visually similar categories. The method demonstrates superior interpretability, achieving higher faithfulness scores and enabling human experts to recognize 60.49% of identified traits, even with a slight trade-off in classification accuracy.

Advancements in large language models (LLMs) allow them to address diverse

questions using human-like interfaces. Still, limitations in their training

prevent them from answering accurately in scenarios that could benefit from

multiple perspectives. Multi-agent systems allow the resolution of questions to

enhance result consistency and reliability. While drug-target interaction (DTI)

prediction is important for drug discovery, existing approaches face challenges

due to complex biological systems and the lack of interpretability needed for

clinical applications. DrugAgent is a multi-agent LLM system for DTI prediction

that combines multiple specialized perspectives with transparent reasoning. Our

system adapts and extends existing multi-agent frameworks by (1) applying

coordinator-based architecture to the DTI domain, (2) integrating

domain-specific data sources, including ML predictions, knowledge graphs, and

literature evidence, and (3) incorporating Chain-of-Thought (CoT) and ReAct

(Reason+Act) frameworks for transparent DTI reasoning. We conducted

comprehensive experiments using a kinase inhibitor dataset, where our

multi-agent LLM method outperformed the non-reasoning multi-agent model (GPT-4o

mini) by 45% in F1 score (0.514 vs 0.355). Through ablation studies, we

demonstrated the contributions of each agent, with the AI agent being the most

impactful, followed by the KG agent and search agent. Most importantly, our

approach provides detailed, human-interpretable reasoning for each prediction

by combining evidence from multiple sources - a critical feature for biomedical

applications where understanding the rationale behind predictions is essential

for clinical decision-making and regulatory compliance. Code is available at

this https URL

19 Feb 2025

FLAG-TRADER introduces a framework integrating Large Language Models with gradient-based Reinforcement Learning for financial trading, where the LLM acts as the policy network. The framework enables small-scale, open-source LLMs to achieve superior performance in single-asset trading tasks compared to larger, proprietary models by effectively leveraging RL-based fine-tuning for sequential decision-making.

Large Language Models (LLMs) have demonstrated strong performance across general NLP tasks, but their utility in automating numerical experiments of complex physical system -- a critical and labor-intensive component -- remains underexplored. As the major workhorse of computational science over the past decades, Computational Fluid Dynamics (CFD) offers a uniquely challenging testbed for evaluating the scientific capabilities of LLMs. We introduce CFDLLMBench, a benchmark suite comprising three complementary components -- CFDQuery, CFDCodeBench, and FoamBench -- designed to holistically evaluate LLM performance across three key competencies: graduate-level CFD knowledge, numerical and physical reasoning of CFD, and context-dependent implementation of CFD workflows. Grounded in real-world CFD practices, our benchmark combines a detailed task taxonomy with a rigorous evaluation framework to deliver reproducible results and quantify LLM performance across code executability, solution accuracy, and numerical convergence behavior. CFDLLMBench establishes a solid foundation for the development and evaluation of LLM-driven automation of numerical experiments for complex physical systems. Code and data are available at this https URL.

Fine-tuning on task-specific data to boost downstream performance is a crucial step for leveraging Large Language Models (LLMs). However, previous studies have demonstrated that fine-tuning the models on several adversarial samples or even benign data can greatly comprise the model's pre-equipped alignment and safety capabilities. In this work, we propose SEAL, a novel framework to enhance safety in LLM fine-tuning. SEAL learns a data ranker based on the bilevel optimization to up rank the safe and high-quality fine-tuning data and down rank the unsafe or low-quality ones. Models trained with SEAL demonstrate superior quality over multiple baselines, with 8.5% and 9.7% win rate increase compared to random selection respectively on Llama-3-8b-Instruct and Merlinite-7b models. Our code is available on github this https URL.

There are no more papers matching your filters at the moment.