Tulane University

This survey establishes a unified framework for Prompt-based Adaptation (PA) in large-scale vision models, meticulously distinguishing between Visual Prompting (VP) and Visual Prompt Tuning (VPT) based on injection granularity and generation mechanisms. It provides a comprehensive overview of methodologies, practical efficiencies, diverse applications, and theoretical underpinnings, aiming to standardize terminology and guide future research.

Paucity of medical data severely limits the generalizability of diagnostic ML models, as the full spectrum of disease variability can not be represented by a small clinical dataset. To address this, diffusion models (DMs) have been considered as a promising avenue for synthetic image generation and augmentation. However, they frequently produce medically inaccurate images, deteriorating the model performance. Expert domain knowledge is critical for synthesizing images that correctly encode clinical information, especially when data is scarce and quality outweighs quantity. Existing approaches for incorporating human feedback, such as reinforcement learning (RL) and Direct Preference Optimization (DPO), rely on robust reward functions or demand labor-intensive expert evaluations. Recent progress in Multimodal Large Language Models (MLLMs) reveals their strong visual reasoning capabilities, making them adept candidates as evaluators. In this work, we propose a novel framework, coined MAGIC (Medically Accurate Generation of Images through AI-Expert Collaboration), that synthesizes clinically accurate skin disease images for data augmentation. Our method creatively translates expert-defined criteria into actionable feedback for image synthesis of DMs, significantly improving clinical accuracy while reducing the direct human workload. Experiments demonstrate that our method greatly improves the clinical quality of synthesized skin disease images, with outputs aligning with dermatologist assessments. Additionally, augmenting training data with these synthesized images improves diagnostic accuracy by +9.02% on a challenging 20-condition skin disease classification task, and by +13.89% in the few-shot setting.

Skin Neglected Tropical Diseases (NTDs) impose severe health and socioeconomic burdens in impoverished tropical communities. Yet, advancements in AI-driven diagnostic support are hindered by data scarcity, particularly for underrepresented populations and rare manifestations of NTDs. Existing dermatological datasets often lack the demographic and disease spectrum crucial for developing reliable recognition models of NTDs. To address this, we introduce eSkinHealth, a novel dermatological dataset collected on-site in Côte d'Ivoire and Ghana. Specifically, eSkinHealth contains 5,623 images from 1,639 cases and encompasses 47 skin diseases, focusing uniquely on skin NTDs and rare conditions among West African populations. We further propose an AI-expert collaboration paradigm to implement foundation language and segmentation models for efficient generation of multimodal annotations, under dermatologists' guidance. In addition to patient metadata and diagnosis labels, eSkinHealth also includes semantic lesion masks, instance-specific visual captions, and clinical concepts. Overall, our work provides a valuable new resource and a scalable annotation framework, aiming to catalyze the development of more equitable, accurate, and interpretable AI tools for global dermatology.

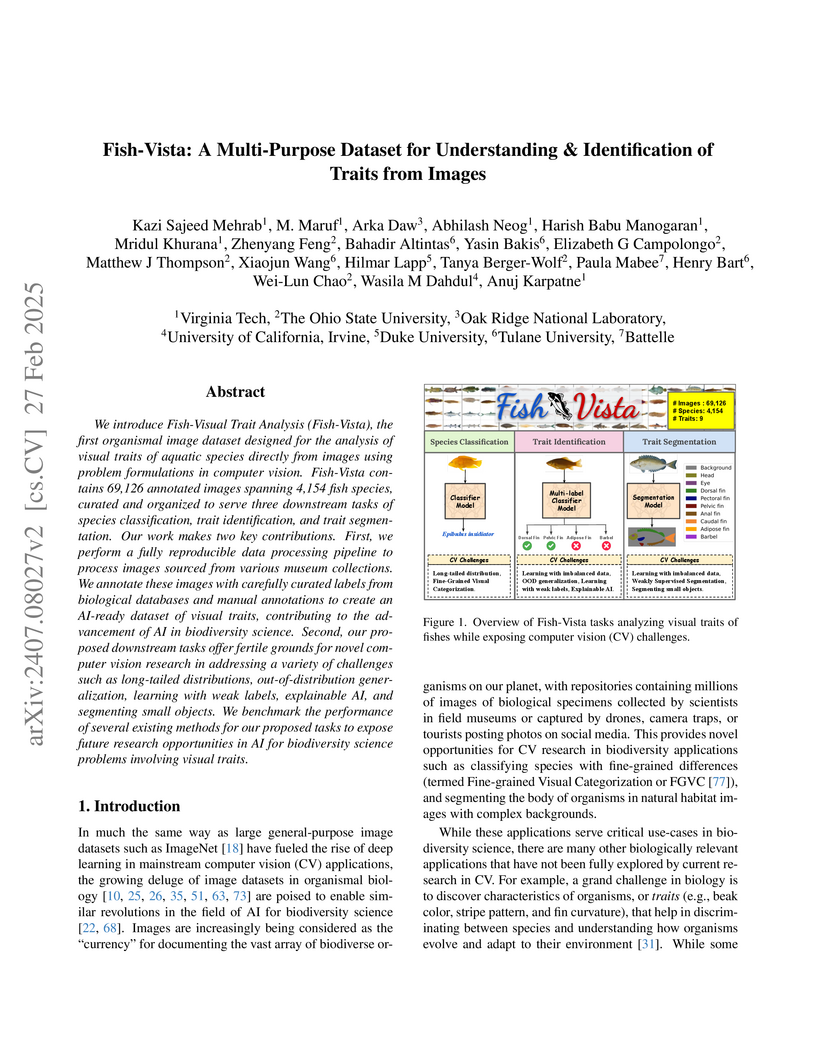

We introduce Fish-Visual Trait Analysis (Fish-Vista), the first organismal

image dataset designed for the analysis of visual traits of aquatic species

directly from images using problem formulations in computer vision. Fish-Vista

contains 69,126 annotated images spanning 4,154 fish species, curated and

organized to serve three downstream tasks of species classification, trait

identification, and trait segmentation. Our work makes two key contributions.

First, we perform a fully reproducible data processing pipeline to process

images sourced from various museum collections. We annotate these images with

carefully curated labels from biological databases and manual annotations to

create an AI-ready dataset of visual traits, contributing to the advancement of

AI in biodiversity science. Second, our proposed downstream tasks offer fertile

grounds for novel computer vision research in addressing a variety of

challenges such as long-tailed distributions, out-of-distribution

generalization, learning with weak labels, explainable AI, and segmenting small

objects. We benchmark the performance of several existing methods for our

proposed tasks to expose future research opportunities in AI for biodiversity

science problems involving visual traits.

Physics-informed neural networks have proven to be a powerful tool for

solving differential equations, leveraging the principles of physics to inform

the learning process. However, traditional deep neural networks often face

challenges in achieving high accuracy without incurring significant

computational costs. In this work, we implement the Physics-Informed

Kolmogorov-Arnold Neural Networks (PIKAN) through efficient-KAN and WAV-KAN,

which utilize the Kolmogorov-Arnold representation theorem. PIKAN demonstrates

superior performance compared to conventional deep neural networks, achieving

the same level of accuracy with fewer layers and reduced computational

overhead. We explore both B-spline and wavelet-based implementations of PIKAN

and benchmark their performance across various ordinary and partial

differential equations using unsupervised (data-free) and supervised

(data-driven) techniques. For certain differential equations, the data-free

approach suffices to find accurate solutions, while in more complex scenarios,

the data-driven method enhances the PIKAN's ability to converge to the correct

solution. We validate our results against numerical solutions and achieve $99

\%$ accuracy in most scenarios.

15 Sep 2025

Role-playing Large language models (LLMs) are increasingly deployed in high-stakes domains such as healthcare, education, and governance, where failures can directly impact user trust and well-being. A cost effective paradigm for LLM role-playing is few-shot learning, but existing approaches often cause models to break character in unexpected and potentially harmful ways, especially when interacting with hostile users. Inspired by Retrieval-Augmented Generation (RAG), we reformulate LLM role-playing into a text retrieval problem and propose a new prompting framework called RAGs-to-Riches, which leverages curated reference demonstrations to condition LLM responses. We evaluate our framework with LLM-as-a-judge preference voting and introduce two novel token-level ROUGE metrics: Intersection over Output (IOO) to quantity how much an LLM improvises and Intersection over References (IOR) to measure few-shot demonstrations utilization rate during the evaluation tasks. When simulating interactions with a hostile user, our prompting strategy incorporates in its responses during inference an average of 35% more tokens from the reference demonstrations. As a result, across 453 role-playing interactions, our models are consistently judged as being more authentic, and remain in-character more often than zero-shot and in-context Learning (ICL) methods. Our method presents a scalable strategy for building robust, human-aligned LLM role-playing frameworks.

In density functional theory, the SCAN (Strongly Constrained and Appropriately Normed) and r2SCAN functionals significantly improve over generalized gradient approximation functionals such as PBE (Perdew-Burke-Ernzerhof) in predicting electronic, magnetic, and structural properties across various materials, including transition-metal compounds. However, there remain puzzling cases where SCAN and r2SCAN underperform, such as in calculating the band structure of graphene, the magnetic moment of Fe, the potential energy curve of the Cr2 molecule, and the bond length of VO2. This research identifies a common characteristic among these challenging materials: non-compact covalent bonding through s-s, p-p, or d-d electron hybridization. While SCAN and r2SCAN excel at capturing electron localization at local atomic sites, they struggle to accurately describe electron localization in non-compact covalent bonds, resulting in a biased improvement. To address this issue, we propose the r2SCAN+V approach as a practical modification that improves accuracy across all the tested materials. The parameter V is 4 eV for metallic Fe, but substantially lower for the other cases. Our findings provide valuable insights for the future development of advanced functionals.

19 Sep 2025

Detecting packed executables is a critical component of large-scale malware analysis and antivirus engine workflows, as it identifies samples that warrant computationally intensive dynamic unpacking to reveal concealed malicious behavior. Traditionally, packer detection techniques have relied on empirical features, such as high entropy or specific binary patterns. However, these empirical, feature-based methods are increasingly vulnerable to evasion by adversarial samples or unknown packers (e.g., low-entropy packers). Furthermore, the dependence on expert-crafted features poses challenges in sustaining and evolving these methods over time.

In this paper, we examine the limitations of existing packer detection methods and propose Pack-ALM, a novel deep-learning-based approach for detecting packed executables. Inspired by the linguistic concept of distinguishing between real and pseudo words, we reformulate packer detection as a task of differentiating between legitimate and "pseudo" instructions. To achieve this, we preprocess native data and packed data into "pseudo" instructions and design a pre-trained assembly language model that recognizes features indicative of packed data. We evaluate Pack-ALM against leading industrial packer detection tools and state-of-the-art assembly language models. Extensive experiments on over 37,000 samples demonstrate that Pack-ALM effectively identifies packed binaries, including samples created with adversarial or previously unseen packing techniques. Moreover, Pack-ALM outperforms traditional entropy-based methods and advanced assembly language models in both detection accuracy and adversarial robustness.

The rapidly increasing capabilities of large language models (LLMs) raise an urgent need to align AI systems with diverse human preferences to simultaneously enhance their usefulness and safety, despite the often conflicting nature of these goals. To address this important problem, a promising approach is to enforce a safety constraint at the fine-tuning stage through a constrained Reinforcement Learning from Human Feedback (RLHF) framework. This approach, however, is computationally expensive and often unstable. In this work, we introduce Constrained DPO (C-DPO), a novel extension of the recently proposed Direct Preference Optimization (DPO) approach for fine-tuning LLMs that is both efficient and lightweight. By integrating dual gradient descent and DPO, our method identifies a nearly optimal trade-off between helpfulness and harmlessness without using reinforcement learning. Empirically, our approach provides a safety guarantee to LLMs that is missing in DPO while achieving significantly higher rewards under the same safety constraint compared to a recently proposed safe RLHF approach.

Warning: This paper contains example data that may be offensive or harmful.

While experimental evidence for spacetime supersymmetry (SUSY) in particle physics remains elusive, condensed matter systems offer a promising arena for its emergence at quantum critical points (QCPs). Although there have been a variety of proposals for emergent SUSY at symmetry-breaking QCPs, the emergence of SUSY at fractionalized QCPs remains largely unexplored. Here, we demonstrate emergent space-time SUSY at a fractionalized QCP in the Kitaev honeycomb model with Su-Schrieffer-Heeger (SSH) spin-phonon coupling. Specifically, through numerical computations and analytical analysis, we show that the anisotropic SSH-Kitaev model hosts a fractionalized QCP between a Dirac spin liquid and an incommensurate/commensurate valence-bond-solid phase coexisting with Z2 topological order. A low-energy field theory incorporating phonon quantum fluctuations reveals that this fractionalized QCP features an emergent N=2 spacetime SUSY. We further discuss their universal experimental signatures in thermal transport and viscosity, highlighting the concrete lattice realization of emergent SUSY at a fractionalized QCP in 2D.

Discrepancies between theory and recent qBounce data have prompted renewed scrutiny of how boundary conditions are implemented for ultracold neutrons bouncing above a mirror in Earth's gravity. We apply the theory of self-adjoint extensions to the linear gravitational potential on the half-line and derive the most general boundary condition that renders the Hamiltonian self-adjoint. This introduces a single real self-adjoint parameter λ that continuously interpolates between the Dirichlet case and more general (Robin-type) reflecting surfaces.

Building on this framework, we provide analytical expressions for the energy spectrum, eigenfunctions, relevant matrix elements, and a set of sum rules valid for arbitrary λ. We show how nontrivial boundary conditions can bias measurements of g and can mimic or mask putative short-range ''fifth-force''. Our results emphasize that enforcing self-adjointness-and modeling the correct boundary physics-is essential for quantitative predictions in gravitational quantum states. Beyond neutron quantum bounces, the approach is broadly applicable to systems where boundaries and self-adjointness govern the observable spectra and dynamics.

Localized image captioning has made significant progress with models like the

Describe Anything Model (DAM), which can generate detailed region-specific

descriptions without explicit region-text supervision. However, such

capabilities have yet to be widely applied to specialized domains like medical

imaging, where diagnostic interpretation relies on subtle regional findings

rather than global understanding. To mitigate this gap, we propose MedDAM, the

first comprehensive framework leveraging large vision-language models for

region-specific captioning in medical images. MedDAM employs medical

expert-designed prompts tailored to specific imaging modalities and establishes

a robust evaluation benchmark comprising a customized assessment protocol, data

pre-processing pipeline, and specialized QA template library. This benchmark

evaluates both MedDAM and other adaptable large vision-language models,

focusing on clinical factuality through attribute-level verification tasks,

thereby circumventing the absence of ground-truth region-caption pairs in

medical datasets. Extensive experiments on the VinDr-CXR, LIDC-IDRI, and

SkinCon datasets demonstrate MedDAM's superiority over leading peers (including

GPT-4o, Claude 3.7 Sonnet, LLaMA-3.2 Vision, Qwen2.5-VL, GPT-4Rol, and

OMG-LLaVA) in the task, revealing the importance of region-level semantic

alignment in medical image understanding and establishing MedDAM as a promising

foundation for clinical vision-language integration.

Accurate road damage detection is crucial for timely infrastructure maintenance and public safety, but existing vision-only datasets and models lack the rich contextual understanding that textual information can provide. To address this limitation, we introduce RoadBench, the first multimodal benchmark for comprehensive road damage understanding. This dataset pairs high resolution images of road damages with detailed textual descriptions, providing a richer context for model training. We also present RoadCLIP, a novel vision language model that builds upon CLIP by integrating domain specific enhancements. It includes a disease aware positional encoding that captures spatial patterns of road defects and a mechanism for injecting road-condition priors to refine the model's understanding of road damages. We further employ a GPT driven data generation pipeline to expand the image to text pairs in RoadBench, greatly increasing data diversity without exhaustive manual annotation. Experiments demonstrate that RoadCLIP achieves state of the art performance on road damage recognition tasks, significantly outperforming existing vision-only models by 19.2%. These results highlight the advantages of integrating visual and textual information for enhanced road condition analysis, setting new benchmarks for the field and paving the way for more effective infrastructure monitoring through multimodal learning.

CD8+ "killer" T cells and CD4+ "helper" T cells play a central role in the adaptive immune system by recognizing antigens presented by Major Histocompatibility Complex (pMHC) molecules via T Cell Receptors (TCRs). Modeling binding between T cells and the pMHC complex is fundamental to understanding basic mechanisms of human immune response as well as in developing therapies. While transformer-based models such as TULIP have achieved impressive performance in this domain, their black-box nature precludes interpretability and thus limits a deeper mechanistic understanding of T cell response. Most existing post-hoc explainable AI (XAI) methods are confined to encoder-only, co-attention, or model-specific architectures and cannot handle encoder-decoder transformers used in TCR-pMHC modeling. To address this gap, we propose Quantifying Cross-Attention Interaction (QCAI), a new post-hoc method designed to interpret the cross-attention mechanisms in transformer decoders. Quantitative evaluation is a challenge for XAI methods; we have compiled TCR-XAI, a benchmark consisting of 274 experimentally determined TCR-pMHC structures to serve as ground truth for binding. Using these structures we compute physical distances between relevant amino acid residues in the TCR-pMHC interaction region and evaluate how well our method and others estimate the importance of residues in this region across the dataset. We show that QCAI achieves state-of-the-art performance on both interpretability and prediction accuracy under the TCR-XAI benchmark.

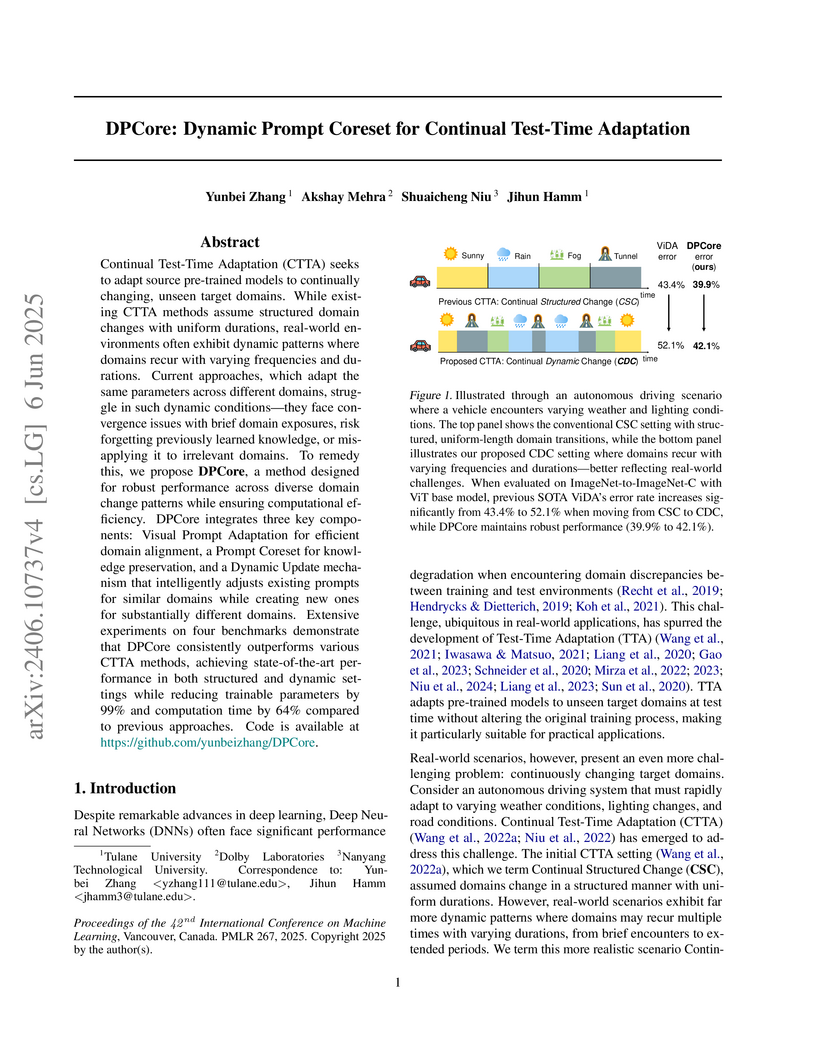

Continual Test-Time Adaptation (CTTA) seeks to adapt source pre-trained

models to continually changing, unseen target domains. While existing CTTA

methods assume structured domain changes with uniform durations, real-world

environments often exhibit dynamic patterns where domains recur with varying

frequencies and durations. Current approaches, which adapt the same parameters

across different domains, struggle in such dynamic conditions-they face

convergence issues with brief domain exposures, risk forgetting previously

learned knowledge, or misapplying it to irrelevant domains. To remedy this, we

propose DPCore, a method designed for robust performance across diverse domain

change patterns while ensuring computational efficiency. DPCore integrates

three key components: Visual Prompt Adaptation for efficient domain alignment,

a Prompt Coreset for knowledge preservation, and a Dynamic Update mechanism

that intelligently adjusts existing prompts for similar domains while creating

new ones for substantially different domains. Extensive experiments on four

benchmarks demonstrate that DPCore consistently outperforms various CTTA

methods, achieving state-of-the-art performance in both structured and dynamic

settings while reducing trainable parameters by 99% and computation time by 64%

compared to previous approaches.

Accurate flood forecasting remains a challenge for water-resource management, as it demands modeling of local, time-varying runoff drivers (e.g., rainfall-induced peaks, baseflow trends) and complex spatial interactions across a river network. Traditional data-driven approaches, such as convolutional networks and sequence-based models, ignore topological information about the region. Graph Neural Networks (GNNs) propagate information exactly along the river network, which is ideal for learning hydrological routing. However, state-of-the-art GNN-based flood prediction models collapse pixels to coarse catchment polygons as the cost of training explodes with graph size and higher resolution. Furthermore, most existing methods treat spatial and temporal dependencies separately, either applying GNNs solely on spatial graphs or transformers purely on temporal sequences, thus failing to simultaneously capture spatiotemporal interactions critical for accurate flood prediction. We introduce a heterogenous basin graph where every land and river pixel is a node connected by physical hydrological flow directions and inter-catchment relationships. We propose HydroGAT, a spatiotemporal network that adaptively learns local temporal importance and the most influential upstream locations. Evaluated in two Midwestern US basins and across five baseline architectures, our model achieves higher NSE (up to 0.97), improved KGE (up to 0.96), and low bias (PBIAS within ±5%) in hourly discharge prediction, while offering interpretable attention maps that reveal sparse, structured intercatchment influences. To support high-resolution basin-scale training, we develop a distributed data-parallel pipeline that scales efficiently up to 64 NVIDIA A100 GPUs on NERSC Perlmutter supercomputer, demonstrating up to 15x speedup across machines. Our code is available at this https URL.

Recent advances in multi-modal models have demonstrated strong performance in tasks such as image generation and reasoning. However, applying these models to the fire domain remains challenging due to the lack of publicly available datasets with high-quality fire domain annotations. To address this gap, we introduce DetectiumFire, a large-scale, multi-modal dataset comprising of 22.5k high-resolution fire-related images and 2.5k real-world fire-related videos covering a wide range of fire types, environments, and risk levels. The data are annotated with both traditional computer vision labels (e.g., bounding boxes) and detailed textual prompts describing the scene, enabling applications such as synthetic data generation and fire risk reasoning. DetectiumFire offers clear advantages over existing benchmarks in scale, diversity, and data quality, significantly reducing redundancy and enhancing coverage of real-world scenarios. We validate the utility of DetectiumFire across multiple tasks, including object detection, diffusion-based image generation, and vision-language reasoning. Our results highlight the potential of this dataset to advance fire-related research and support the development of intelligent safety systems. We release DetectiumFire to promote broader exploration of fire understanding in the AI community. The dataset is available at this https URL

T cell receptor (TCR) recognition of peptide-MHC (pMHC) complexes is a central component of adaptive immunity, with implications for vaccine design, cancer immunotherapy, and autoimmune disease. While recent advances in machine learning have improved prediction of TCR-pMHC binding, the most effective approaches are black-box transformer models that cannot provide a rationale for predictions. Post-hoc explanation methods can provide insight with respect to the input but do not explicitly model biochemical mechanisms (e.g. known binding regions), as in TCR-pMHC binding. ``Explain-by-design'' models (i.e., with architectural components that can be examined directly after training) have been explored in other domains, but have not been used for TCR-pMHC binding. We propose explainable model layers (TCR-EML) that can be incorporated into protein-language model backbones for TCR-pMHC modeling. Our approach uses prototype layers for amino acid residue contacts drawn from known TCR-pMHC binding mechanisms, enabling high-quality explanations for predicted TCR-pMHC binding. Experiments of our proposed method on large-scale datasets demonstrate competitive predictive accuracy and generalization, and evaluation on the TCR-XAI benchmark demonstrates improved explainability compared with existing approaches.

Researchers developed a Graph Neural Network (GNN) based recommendation algorithm incorporating initial residual connections and identity mapping to mitigate over-smoothing and enhance information flow. This approach achieved superior Recall@20 and NDCG@20 scores compared to other GNN and traditional baselines across Gowalla, Yelp-2018, and Amazon-Book datasets.

01 Oct 2025

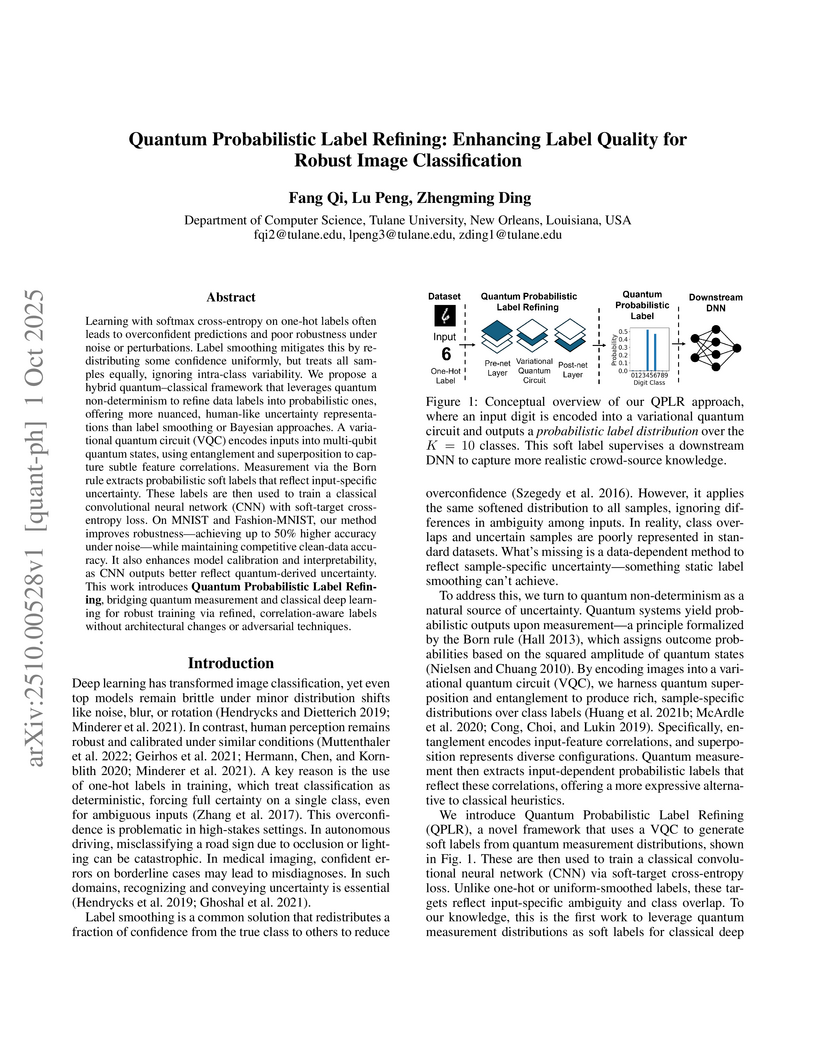

Learning with softmax cross-entropy on one-hot labels often leads to overconfident predictions and poor robustness under noise or perturbations. Label smoothing mitigates this by redistributing some confidence uniformly, but treats all samples equally, ignoring intra-class variability. We propose a hybrid quantum-classical framework that leverages quantum non-determinism to refine data labels into probabilistic ones, offering more nuanced, human-like uncertainty representations than label smoothing or Bayesian approaches. A variational quantum circuit (VQC) encodes inputs into multi-qubit quantum states, using entanglement and superposition to capture subtle feature correlations. Measurement via the Born rule extracts probabilistic soft labels that reflect input-specific uncertainty. These labels are then used to train a classical convolutional neural network (CNN) with soft-target cross-entropy loss. On MNIST and Fashion-MNIST, our method improves robustness, achieving up to 50% higher accuracy under noise while maintaining competitive accuracy on clean data. It also enhances model calibration and interpretability, as CNN outputs better reflect quantum-derived uncertainty. This work introduces Quantum Probabilistic Label Refining, bridging quantum measurement and classical deep learning for robust training via refined, correlation-aware labels without architectural changes or adversarial techniques.

There are no more papers matching your filters at the moment.