University of Siegen

Epoch AI, in collaboration with over 60 mathematicians, introduced FrontierMath, a benchmark of new, unpublished, research-level mathematical problems designed with automated verification to assess advanced AI reasoning. Leading AI models achieved less than a 2% success rate on this benchmark, highlighting a substantial gap between current AI capabilities and human mathematical expertise.

This survey comprehensively introduces and reviews Quantum-enhanced Computer Vision (QeCV), an emerging interdisciplinary field that applies quantum computational paradigms to address computational challenges in classical computer vision. It categorizes existing methods based on quantum annealing and gate-based quantum computing, detailing their application to a wide array of vision tasks.

Data poisoning attacks modify training data to maliciously control a model trained on such data. In this work, we focus on targeted poisoning attacks which cause a reclassification of an unmodified test image and as such breach model integrity. We consider a particularly malicious poisoning attack that is both "from scratch" and "clean label", meaning we analyze an attack that successfully works against new, randomly initialized models, and is nearly imperceptible to humans, all while perturbing only a small fraction of the training data. Previous poisoning attacks against deep neural networks in this setting have been limited in scope and success, working only in simplified settings or being prohibitively expensive for large datasets. The central mechanism of the new attack is matching the gradient direction of malicious examples. We analyze why this works, supplement it with practical considerations, and show its threat to real-world practitioners, finding that it is the first poisoning method to cause targeted misclassification in modern deep networks trained from scratch on a full-sized, poisoned ImageNet dataset. Finally, we demonstrate the limitations of existing defensive strategies against such an attack, concluding that data poisoning is a credible threat, even for large-scale deep learning systems.
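The abstract above names gradient-direction matching as the central mechanism but does not give a formula. A minimal sketch of one plausible way to score directional agreement is shown here, assuming the objective is one minus the cosine similarity between two flattened gradient vectors. The function names and the example vectors are hypothetical and are not taken from the paper.

```python
import math


def cosine_similarity(u, v):
    """Cosine of the angle between two flattened gradient vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0.0 or norm_v == 0.0:
        raise ValueError("gradient vectors must be non-zero")
    return dot / (norm_u * norm_v)


def gradient_alignment_loss(target_grad, poison_grad):
    """Assumed objective: near 0 when directions agree, near 2 when opposite."""
    return 1.0 - cosine_similarity(target_grad, poison_grad)


# Hypothetical flattened gradients, for illustration only.
target_grad = [0.5, -1.0, 2.0]
aligned = [1.0, -2.0, 4.0]    # same direction, different magnitude
opposed = [-0.5, 1.0, -2.0]   # opposite direction

print(gradient_alignment_loss(target_grad, aligned))
print(gradient_alignment_loss(target_grad, opposed))
```

Because the score depends only on direction, rescaling either gradient leaves it unchanged, which is why the aligned example scores close to zero despite its larger magnitude.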

13 Nov 2024

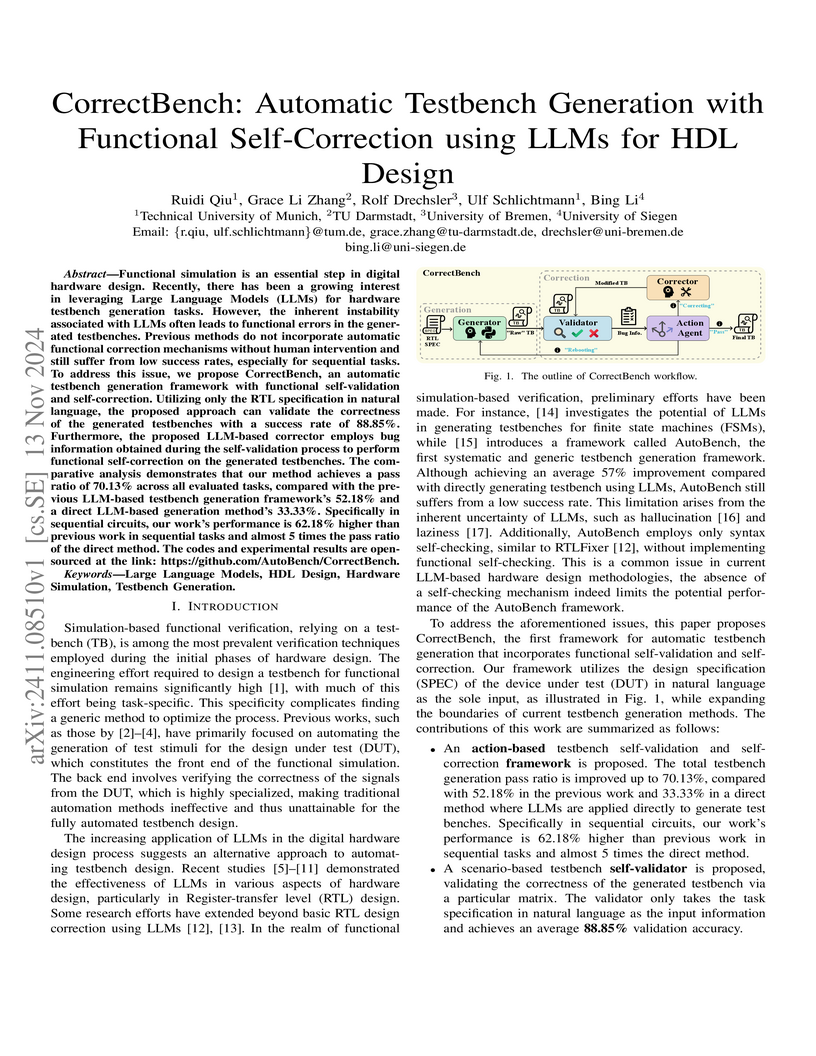

CorrectBench introduces a framework for automatic testbench generation that incorporates functional self-validation and self-correction using Large Language Models (LLMs). The system significantly enhances the reliability of testbenches for hardware description language (HDL) designs, achieving a 70.13% Eval2 pass ratio across 156 tasks and demonstrating particularly strong improvements for complex sequential circuits, enabling fully autonomous verification.

15 Oct 2025

A major step toward Artificial General Intelligence (AGI) and Super Intelligence is AI's ability to autonomously conduct research, which we term Artificial Research Intelligence (ARI). If machines could generate hypotheses, conduct experiments, and write research papers without human intervention, it would transform science. Sakana recently introduced the 'AI Scientist', claiming that it conducts research autonomously, which implies that ARI has been achieved. The AI Scientist gained much attention, but a thorough independent evaluation has yet to be conducted.

Our evaluation of the AI Scientist reveals critical shortcomings. The system's literature reviews produced poor novelty assessments, often misclassifying established concepts (e.g., micro-batching for stochastic gradient descent) as novel. It also struggles with experiment execution: 42% of experiments failed due to coding errors, while others produced flawed or misleading results. Code modifications were minimal, averaging 8% more characters per iteration, suggesting limited adaptability. Generated manuscripts were poorly substantiated, with a median of five citations, most outdated (only five of 34 from 2020 or later). Structural errors were frequent, including missing figures, repeated sections, and placeholder text like 'Conclusions Here'. Some papers contained hallucinated numerical results.

Despite these flaws, the AI Scientist represents a leap forward in research automation. It generates full research manuscripts with minimal human input, challenging expectations of AI-driven science. Many reviewers might struggle to distinguish its work from human researchers. While its quality resembles a rushed undergraduate paper, its speed and cost efficiency are unprecedented, producing a full paper for USD 6 to 15 with 3.5 hours of human involvement, far outpacing traditional researchers.

A novel cross-layer parameter sharing strategy, BasisSharing, is introduced for compressing large language models by enabling weight matrices across different layers to share common basis vectors while retaining unique functionality via layer-specific coefficients. This method outperformed existing compression techniques, achieving up to 25% lower perplexity and 4% higher accuracy on downstream tasks, along with a 1.57x inference throughput improvement at 50% compression.
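To make the parameter accounting behind a shared basis concrete, the sketch below reconstructs two layer matrices as a shared basis times layer-specific coefficients and compares parameter counts with dense storage. The shapes, values, and helper names are hypothetical. How the basis is actually obtained is not stated in the summary above and is not modeled here.

```python
def matmul(a, b):
    """Plain-Python matrix product of a (rows x inner) and b (inner x cols)."""
    inner, cols = len(b), len(b[0])
    return [[sum(row[k] * b[k][j] for k in range(inner)) for j in range(cols)]
            for row in a]


def shared_basis_params(d, n, num_layers, k):
    """One shared d x k basis plus a k x n coefficient matrix per layer."""
    return d * k + num_layers * k * n


def dense_params(d, n, num_layers):
    """Independent d x n weight matrix for every layer."""
    return num_layers * d * n


# Toy example: d = 3, k = 2, n = 2 (values are illustrative only).
basis = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
coeffs_layer_a = [[2.0, 0.0], [0.0, 3.0]]
coeffs_layer_b = [[1.0, 1.0], [1.0, -1.0]]

w_a = matmul(basis, coeffs_layer_a)  # layer A weights rebuilt from the shared basis
w_b = matmul(basis, coeffs_layer_b)  # layer B reuses the same basis

# Assumed sizes for the accounting: 4 layers of 1024 x 1024 with 256 basis vectors.
ratio = shared_basis_params(1024, 1024, 4, 256) / dense_params(1024, 1024, 4)
print(w_a, w_b, ratio)
```

The saving grows with the number of layers that share the basis, since the basis cost is paid once while each additional layer only adds its coefficients.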

Learning editable high-resolution scene representations for dynamic scenes is an open problem with applications across domains from autonomous driving to creative editing. The most successful approaches today make a trade-off between editability and supporting scene complexity: neural atlases represent dynamic scenes as two deforming image layers, foreground and background, which are editable in 2D, but break down when multiple objects occlude and interact. In contrast, scene graph models make use of annotated data such as masks and bounding boxes from autonomous-driving datasets to capture complex 3D spatial relationships, but their implicit volumetric node representations are challenging to edit view-consistently. We propose Neural Atlas Graphs (NAGs), a hybrid high-resolution scene representation, where every graph node is a view-dependent neural atlas, facilitating both 2D appearance editing and 3D ordering and positioning of scene elements. Fit at test-time, NAGs achieve state-of-the-art quantitative results on the Waymo Open Dataset, with a 5 dB PSNR increase compared to existing methods, and make environmental editing possible in high resolution and visual quality, creating counterfactual driving scenarios with new backgrounds and edited vehicle appearance. We find that the method also generalizes beyond driving scenes and compares favorably, by more than 7 dB in PSNR, to recent matting and video editing baselines on the DAVIS video dataset with a diverse set of human and animal-centric scenes.


Gliomas are the most common primary brain malignancies, with different degrees of aggressiveness, variable prognosis and various heterogeneous histologic sub-regions, i.e., peritumoral edematous/invaded tissue, necrotic core, active and non-enhancing core. This intrinsic heterogeneity is also portrayed in their radio-phenotype, as their sub-regions are depicted by varying intensity profiles disseminated across multi-parametric magnetic resonance imaging (mpMRI) scans, reflecting varying biological properties. Their heterogeneous shape, extent, and location are some of the factors that make these tumors difficult to resect, and in some cases inoperable. The amount of resected tumor is a factor also considered in longitudinal scans, when evaluating the apparent tumor for potential diagnosis of progression. Furthermore, there is mounting evidence that accurate segmentation of the various tumor sub-regions can offer the basis for quantitative image analysis towards prediction of patient overall survival. This study assesses the state-of-the-art machine learning (ML) methods used for brain tumor image analysis in mpMRI scans, during the last seven instances of the International Brain Tumor Segmentation (BraTS) challenge, i.e., 2012-2018. Specifically, we focus on i) evaluating segmentations of the various glioma sub-regions in pre-operative mpMRI scans, ii) assessing potential tumor progression by virtue of longitudinal growth of tumor sub-regions, beyond use of the RECIST/RANO criteria, and iii) predicting the overall survival from pre-operative mpMRI scans of patients that underwent gross total resection. Finally, we investigate the challenge of identifying the best ML algorithms for each of these tasks, considering that apart from being diverse on each instance of the challenge, the multi-institutional mpMRI BraTS dataset has also been a continuously evolving/growing dataset.

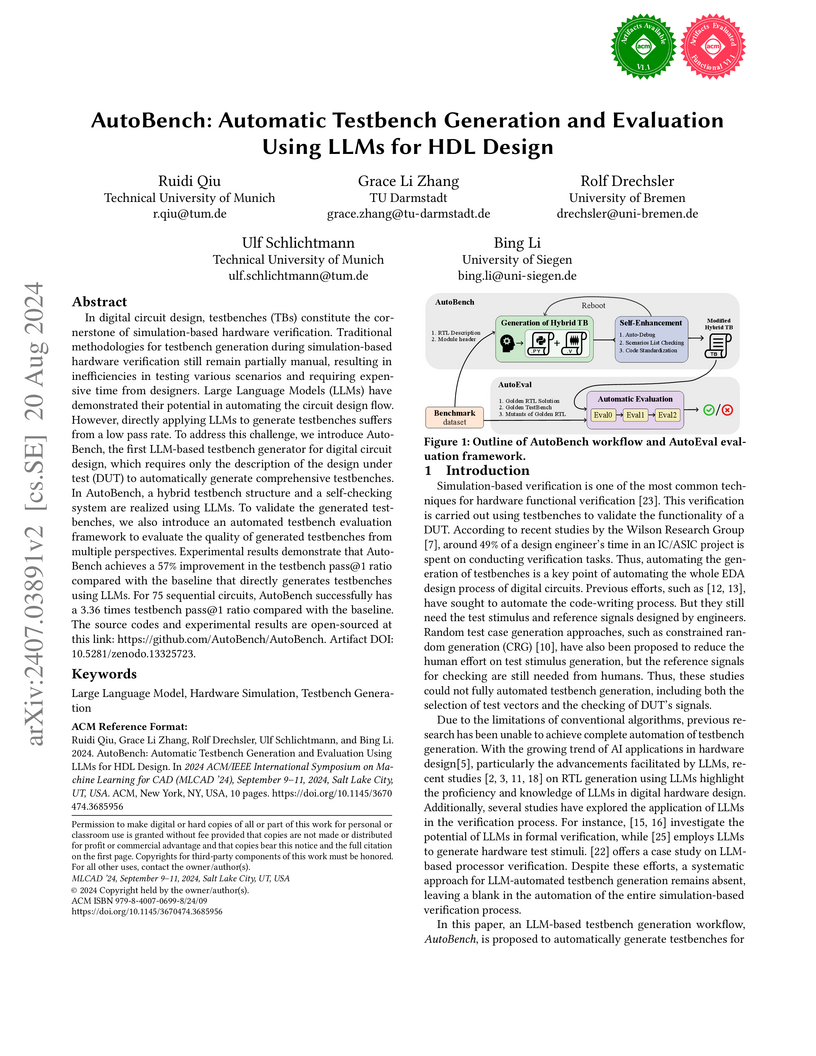

AutoBench presents a Large Language Model (LLM)-based framework for automatically generating and evaluating comprehensive testbenches for digital circuit designs, achieving a 57% improvement in verification coverage (Eval2 pass@1) over direct LLM application. It utilizes a hybrid Verilog/Python architecture and self-enhancement mechanisms to improve testbench quality, especially for complex sequential circuits.

15 Sep 2025

The atmospheric structure of gas giants, especially those of Jupiter and Saturn, has been an object of scientific studies for a long time. The measurement of the gravitational fields by the Juno mission for Jupiter and the Cassini mission for Saturn offered new possibilities to study the interior structure of these planets. Accordingly, the reconstruction of the wind velocities from gravitational data on gas giants has been the subject of many research papers over the years, yet the mathematical foundations of this inverse problem and its numerical resolution have not been studied in detail. This article suggests a rigorous mathematical theory for inferring the wind fields of gas giants. In particular, an orthonormal basis is derived which can be associated to models of the gravitational potential and the interior wind velocity field. Moreover, this approach provides the foundations for existing resolution concepts of the inverse problem.

Emerging large-scale text-to-image generative models, e.g., Stable Diffusion (SD), have exhibited overwhelming results with high fidelity. Despite the magnificent progress, current state-of-the-art models still struggle to generate images fully adhering to the input prompt. Prior work, Attend & Excite, has introduced the concept of Generative Semantic Nursing (GSN), aiming to optimize cross-attention during inference time to better incorporate the semantics. It demonstrates promising results in generating simple prompts, e.g., "a cat and a dog". However, its efficacy declines when dealing with more complex prompts, and it does not explicitly address the problem of improper attribute binding. To address the challenges posed by complex prompts or scenarios involving multiple entities and to achieve improved attribute binding, we propose Divide & Bind. We introduce two novel loss objectives for GSN: a novel attendance loss and a binding loss. Our approach stands out in its ability to faithfully synthesize desired objects with improved attribute alignment from complex prompts and exhibits superior performance across multiple evaluation benchmarks.

This study examines the performance of item-based k-Nearest Neighbors (ItemKNN) algorithms in the RecBole and LensKit recommender system libraries. Using four data sets (Anime, ModCloth, ML-100K, and ML-1M), we assess each library's efficiency, accuracy, and scalability, focusing primarily on normalized discounted cumulative gain (nDCG). Our results show that RecBole outperforms LensKit on two of three metrics on the ML-100K data set: it achieved an 18% higher nDCG, 14% higher precision, and 35% lower recall. To ensure a fair comparison, we adjusted LensKit's nDCG calculation to match RecBole's method. This alignment made the performance more comparable, with LensKit achieving an nDCG of 0.2540 and RecBole 0.2674. Differences in similarity matrix calculations were identified as the main cause of performance deviations. After modifying LensKit to retain only the top K similar items, both libraries showed nearly identical nDCG values across all data sets. For instance, both achieved an nDCG of 0.2586 on the ML-1M data set with the same random seed. Initially, LensKit's original implementation only surpassed RecBole on the ModCloth data set.
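The two ingredients discussed above, truncating each item's similarity row to its top K neighbors and scoring a ranking with nDCG, can be sketched in a few lines. This is a plain-Python illustration under stated assumptions: it does not use the RecBole or LensKit APIs, the function names are hypothetical, and the binary-relevance nDCG with a log2 discount is only one of several variants (the summary itself notes that the libraries' nDCG calculations differed).

```python
import math


def top_k_neighbors(similarities, k):
    """Keep only the k most similar items from an {item_id: similarity} row."""
    ranked = sorted(similarities.items(), key=lambda pair: (-pair[1], pair[0]))
    return dict(ranked[:k])


def ndcg_at_k(ranked_items, relevant_items, k):
    """Binary-relevance nDCG@k with a 1 / log2(rank + 1) discount (assumed variant)."""
    dcg = sum(1.0 / math.log2(position + 2)
              for position, item in enumerate(ranked_items[:k])
              if item in relevant_items)
    ideal_hits = min(len(relevant_items), k)
    idcg = sum(1.0 / math.log2(position + 2) for position in range(ideal_hits))
    return dcg / idcg if idcg > 0 else 0.0


# Hypothetical similarity row for one item and a hypothetical ranking.
row = {"b": 0.9, "c": 0.1, "d": 0.5, "e": 0.7}
pruned = top_k_neighbors(row, 2)

score = ndcg_at_k(["a", "b", "c"], {"a", "c"}, 3)
print(pruned, round(score, 4))
```

Pruning changes which neighbors contribute to a prediction, so two implementations that differ only in this step can produce different rankings from identical data.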

24 Oct 2024

With the rapidly increasing complexity of modern chips, hardware engineers are required to invest more effort in tasks such as circuit design, verification, and physical implementation. These workflows often involve continuous modifications, which are labor-intensive and prone to errors. Therefore, there is an increasing need for more efficient and cost-effective Electronic Design Automation (EDA) solutions to accelerate new hardware development. Recently, large language models (LLMs) have made significant advancements in contextual understanding, logical reasoning, and response generation. Since hardware designs and intermediate scripts can be expressed in text format, it is reasonable to explore whether integrating LLMs into EDA could simplify and fully automate the entire workflow. Accordingly, this paper discusses such possibilities in several aspects, covering hardware description language (HDL) generation, code debugging, design verification, and physical implementation. Two case studies, along with their future outlook, are introduced to highlight the capabilities of LLMs in code repair and testbench generation. Finally, future directions and challenges are highlighted to further explore the potential of LLMs in shaping the next-generation EDA.

Unlike traditional vision-only models, vision language models (VLMs) offer an intuitive way to access visual content through language prompting by combining a large language model (LLM) with a vision encoder. However, both the LLM and the vision encoder come with their own set of biases, cue preferences, and shortcuts, which have been rigorously studied in uni-modal models. A timely question is how such (potentially misaligned) biases and cue preferences behave under multi-modal fusion in VLMs. As a first step towards a better understanding, we investigate a particularly well-studied vision-only bias - the texture vs. shape bias and the dominance of local over global information. As expected, we find that VLMs inherit this bias to some extent from their vision encoders. Surprisingly, the multi-modality alone proves to have important effects on the model behavior, i.e., the joint training and the language querying change the way visual cues are processed. While this direct impact of language-informed training on a model's visual perception is intriguing, it raises further questions on our ability to actively steer a model's output so that its prediction is based on particular visual cues of the user's choice. Interestingly, VLMs have an inherent tendency to recognize objects based on shape information, which is different from what a plain vision encoder would do. Further active steering towards shape-based classifications through language prompts is however limited. In contrast, active VLM steering towards texture-based decisions through simple natural language prompts is often more successful.


01 Sep 2025

Snapshot Multispectral Light-field Imaging (SMLI) is an emerging computational imaging technique that captures high-dimensional data (x, y, z, θ, ϕ, λ) in a single shot using a low-dimensional sensor. The accuracy of high-dimensional data reconstruction depends on representing the spectrum using neural radiance field models, which requires consideration of broadband spectral decoupling during optimization. Currently, some SMLI approaches avoid the challenge of model decoupling by either reducing light-throughput or prolonging imaging time. In this work, we propose a broadband spectral neural radiance field (BSNeRF) for SMLI systems. Experiments show that our model successfully decouples a broadband multiplexed spectrum. Consequently, this approach enhances multispectral light-field image reconstruction and further advances plenoptic imaging.

19 Apr 2022

It is widely believed that the implicit regularization of SGD is fundamental to the impressive generalization behavior we observe in neural networks. In this work, we demonstrate that non-stochastic full-batch training can achieve comparably strong performance to SGD on CIFAR-10 using modern architectures. To this end, we show that the implicit regularization of SGD can be completely replaced with explicit regularization even when comparing against a strong and well-researched baseline. Our observations indicate that the perceived difficulty of full-batch training may be the result of its optimization properties and the disproportionate time and effort spent by the ML community tuning optimizers and hyperparameters for small-batch training.

Data poisoning -- the process by which an attacker takes control of a model by making imperceptible changes to a subset of the training data -- is an emerging threat in the context of neural networks. Existing attacks for data poisoning neural networks have relied on hand-crafted heuristics, because solving the poisoning problem directly via bilevel optimization is generally thought of as intractable for deep models. We propose MetaPoison, a first-order method that approximates the bilevel problem via meta-learning and crafts poisons that fool neural networks. MetaPoison is effective: it outperforms previous clean-label poisoning methods by a large margin. MetaPoison is robust: poisoned data made for one model transfer to a variety of victim models with unknown training settings and architectures. MetaPoison is general-purpose: it works not only in fine-tuning scenarios, but also for end-to-end training from scratch, which until now has not been feasible for clean-label attacks with deep nets. MetaPoison can achieve arbitrary adversary goals -- like using poisons of one class to make a target image don the label of another arbitrarily chosen class. Finally, MetaPoison works in the real world. We demonstrate for the first time successful data poisoning of models trained on the black-box Google Cloud AutoML API. Code and premade poisons are provided at this https URL

While learning personalization offers great potential for learners, modern practices in higher education require a deeper consideration of domain models and learning contexts to develop effective personalization algorithms. This paper introduces an innovative approach to higher education curriculum modelling that utilizes large language models (LLMs) for knowledge graph (KG) completion, with the goal of creating personalized learning-path recommendations. Our research focuses on modelling university subjects and linking their topics to corresponding domain models, enabling the integration of learning modules from different faculties and institutions in the student's learning path. Central to our approach is a collaborative process, where LLMs assist human experts in extracting high-quality, fine-grained topics from lecture materials. We develop domain, curriculum, and user models for university modules and stakeholders. We implement these models to create the KG from two study modules: Embedded Systems and Development of Embedded Systems Using FPGA. The resulting KG structures the curriculum and links it to the domain models. We evaluate our approach through qualitative expert feedback and quantitative graph quality metrics. Domain experts validated the relevance and accuracy of the model, while the graph quality metrics measured the structural properties of our KG. Our results show that the LLM-assisted graph completion approach enhances the ability to connect related courses across disciplines to personalize the learning experience. Expert feedback also showed high acceptance of the proposed collaborative approach for concept extraction and classification.

Recent work in neural networks for image classification has seen a strong tendency towards increasing the spatial context. Whether achieved through large convolution kernels or self-attention, models scale poorly with the increased spatial context, such that the improved model accuracy often comes at significant costs. In this paper, we propose a module for studying the effective filter size of convolutional neural networks. To facilitate such a study, several challenges need to be addressed: 1) we need an effective means to train models with large filters (potentially as large as the input data) without increasing the number of learnable parameters; 2) the employed convolution operation should be a plug-and-play module that can replace conventional convolutions in a CNN and allow for an efficient implementation in current frameworks; 3) the study of filter sizes has to be decoupled from other aspects such as the network width or the number of learnable parameters; 4) the cost of the convolution operation itself has to remain manageable, i.e., we cannot naively increase the size of the convolution kernel. To address these challenges, we propose to learn the frequency representations of filter weights as neural implicit functions, such that the better scalability of the convolution in the frequency domain can be leveraged. Additionally, due to the implementation of the proposed neural implicit function, even large and expressive spatial filters can be parameterized by only a few learnable weights. Our analysis shows that, although the proposed networks could learn very large convolution kernels, the learned filters are well localized and relatively small in practice when transformed from the frequency to the spatial domain. We anticipate that our analysis of individually optimized filter sizes will allow for more efficient, yet effective, models in the future.
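The scalability argument above rests on the convolution theorem: a circular convolution in the spatial domain equals an element-wise product in the frequency domain. The sketch below checks this on a toy 1D signal with a kernel as large as the input. It uses a naive DFT written out for clarity, an assumption made for readability rather than a practical choice, and it does not reproduce the paper's neural implicit parameterization of the filters.

```python
import cmath


def dft(signal, inverse=False):
    """Naive O(n^2) discrete Fourier transform (inverse includes the 1/n factor)."""
    n = len(signal)
    sign = 1 if inverse else -1
    out = []
    for k in range(n):
        total = sum(signal[t] * cmath.exp(sign * 2j * cmath.pi * k * t / n)
                    for t in range(n))
        out.append(total / n if inverse else total)
    return out


def circular_conv_direct(x, h):
    """Circular convolution computed directly in the spatial domain."""
    n = len(x)
    return [sum(x[m] * h[(i - m) % n] for m in range(n)) for i in range(n)]


def circular_conv_frequency(x, h):
    """Same operation as an element-wise product of the two spectra."""
    product = [a * b for a, b in zip(dft(x), dft(h))]
    return [value.real for value in dft(product, inverse=True)]


# Toy signal and a kernel of the same length as the input.
signal = [1.0, 2.0, 3.0, 4.0]
kernel = [1.0, 0.0, -1.0, 0.0]

direct = circular_conv_direct(signal, kernel)
via_frequency = circular_conv_frequency(signal, kernel)
print(direct, [round(v, 6) for v in via_frequency])
```

With a fast Fourier transform in place of the naive DFT, the frequency-domain route costs the same regardless of how large the kernel is, which is the property the abstract appeals to.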


While being very successful in solving many downstream tasks, the application of deep neural networks is limited in real-life scenarios because of their susceptibility to domain shifts such as common corruptions, and adversarial attacks. The existence of adversarial examples and data corruption significantly reduces the performance of deep classification models. Researchers have made strides in developing robust neural architectures to bolster decisions of deep classifiers. However, most of these works rely on effective adversarial training methods, and predominantly focus on overall model robustness, disregarding class-wise differences in robustness, which are critical. Exploiting weakly robust classes is a potential avenue for attackers to fool the image recognition models. Therefore, this study investigates class-to-class biases across adversarially trained robust classification models to understand their latent space structures and analyze their strong and weak class-wise properties. We further assess the robustness of classes against common corruptions and adversarial attacks, recognizing that class vulnerability extends beyond the number of correct classifications for a specific class. We find that the number of false positives of classes as specific target classes significantly impacts their vulnerability to attacks. Through our analysis of the Class False Positive Score, we provide a fair evaluation of how susceptible each class is to misclassification.
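The abstract does not define the Class False Positive Score, so the sketch below only illustrates the underlying quantity it refers to: how often each class is predicted for samples that belong to another class. The confusion matrix is hypothetical, and the helper names are introduced here for illustration only.

```python
def false_positives_per_class(confusion):
    """confusion[i][j] counts samples of true class i predicted as class j.

    Returns, for each class j, the number of samples from other classes
    that were predicted as j (off-diagonal column sum).
    """
    num_classes = len(confusion)
    return [sum(confusion[i][j] for i in range(num_classes) if i != j)
            for j in range(num_classes)]


def per_class_accuracy(confusion):
    """Fraction of each true class that was classified correctly."""
    return [row[i] / sum(row) for i, row in enumerate(confusion)]


# Hypothetical 3-class confusion matrix, for illustration only.
confusion = [
    [8, 1, 1],
    [0, 9, 1],
    [3, 2, 5],
]

print(false_positives_per_class(confusion))
print(per_class_accuracy(confusion))
```

In this toy matrix, the class with the highest accuracy also attracts as many false positives as any other, which shows why correct-classification counts alone do not describe how a class behaves as a misclassification target.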
