Silo.AI

State-Dependent Causal Inference (SDCI) introduces a framework for uncovering causal relationships in conditionally stationary time series by modeling how discrete latent states modulate causal effects. The method provides partial identifiability guarantees for these state-dependent structures, even with unobserved states and an unknown number of states, and empirically demonstrates improved causal graph recovery and forecasting across various datasets, including gene regulatory networks and NBA player trajectories.

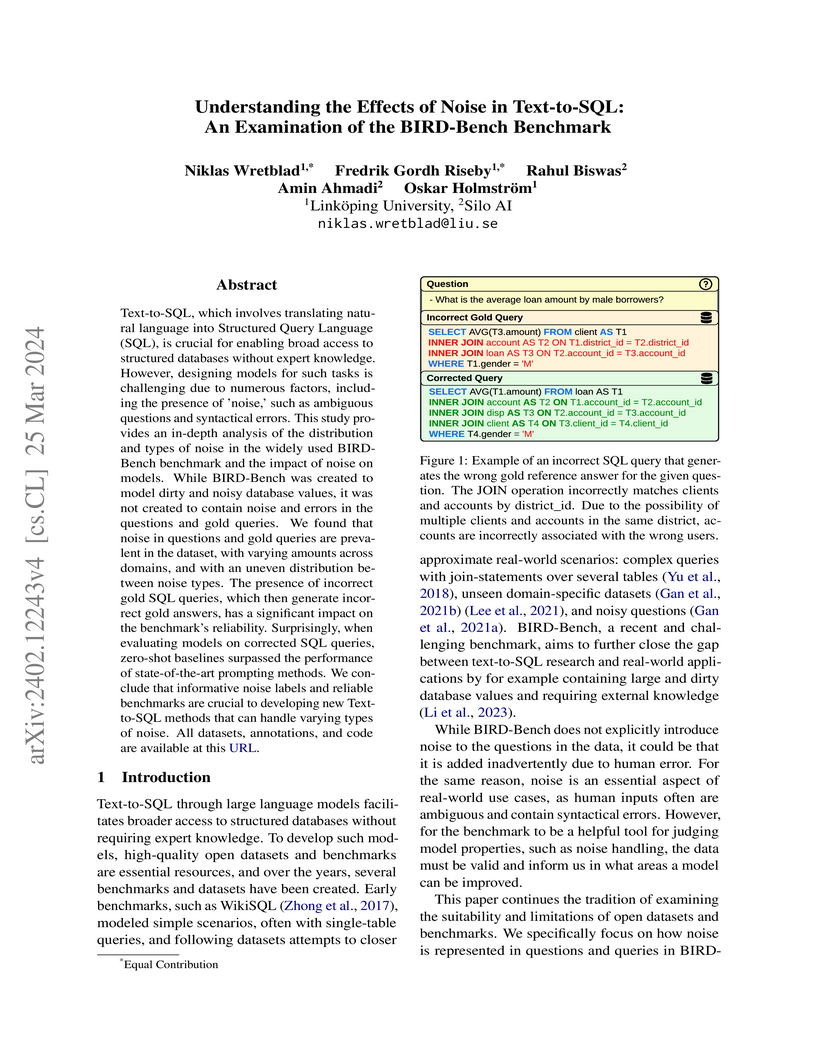

Text-to-SQL, which involves translating natural language into Structured

Query Language (SQL), is crucial for enabling broad access to structured

databases without expert knowledge. However, designing models for such tasks is

challenging due to numerous factors, including the presence of 'noise,' such as

ambiguous questions and syntactical errors. This study provides an in-depth

analysis of the distribution and types of noise in the widely used BIRD-Bench

benchmark and the impact of noise on models. While BIRD-Bench was created to

model dirty and noisy database values, it was not created to contain noise and

errors in the questions and gold queries. We found that noise in questions and

gold queries are prevalent in the dataset, with varying amounts across domains,

and with an uneven distribution between noise types. The presence of incorrect

gold SQL queries, which then generate incorrect gold answers, has a significant

impact on the benchmark's reliability. Surprisingly, when evaluating models on

corrected SQL queries, zero-shot baselines surpassed the performance of

state-of-the-art prompting methods. We conclude that informative noise labels

and reliable benchmarks are crucial to developing new Text-to-SQL methods that

can handle varying types of noise. All datasets, annotations, and code are

available at

https://github.com/niklaswretblad/the-effects-of-noise-in-text-to-SQL.

Emotion understanding is a critical yet challenging task. Most existing

approaches rely heavily on identity-sensitive information, such as facial

expressions and speech, which raises concerns about personal privacy. To

address this, we introduce the De-identity Multimodal Emotion Recognition and

Reasoning (DEEMO), a novel task designed to enable emotion understanding using

de-identified video and audio inputs. The DEEMO dataset consists of two

subsets: DEEMO-NFBL, which includes rich annotations of Non-Facial Body

Language (NFBL), and DEEMO-MER, an instruction dataset for Multimodal Emotion

Recognition and Reasoning using identity-free cues. This design supports

emotion understanding without compromising identity privacy. In addition, we

propose DEEMO-LLaMA, a Multimodal Large Language Model (MLLM) that integrates

de-identified audio, video, and textual information to enhance both emotion

recognition and reasoning. Extensive experiments show that DEEMO-LLaMA achieves

state-of-the-art performance on both tasks, outperforming existing MLLMs by a

significant margin, achieving 74.49% accuracy and 74.45% F1-score in

de-identity emotion recognition, and 6.20 clue overlap and 7.66 label overlap

in de-identity emotion reasoning. Our work contributes to ethical AI by

advancing privacy-preserving emotion understanding and promoting responsible

affective computing.

Replay methods are known to be successful at mitigating catastrophic forgetting in continual learning scenarios despite having limited access to historical data. However, storing historical data is cheap in many real-world settings, yet replaying all historical data is often prohibited due to processing time constraints. In such settings, we propose that continual learning systems should learn the time to learn and schedule which tasks to replay at different time steps. We first demonstrate the benefits of our proposal by using Monte Carlo tree search to find a proper replay schedule, and show that the found replay schedules can outperform fixed scheduling policies when combined with various replay methods in different continual learning settings. Additionally, we propose a framework for learning replay scheduling policies with reinforcement learning. We show that the learned policies can generalize better in new continual learning scenarios compared to equally replaying all seen tasks, without added computational cost. Our study reveals the importance of learning the time to learn in continual learning, which brings current research closer to real-world needs.

Emotion AI is the ability of computers to understand human emotional states.

Existing works have achieved promising progress, but two limitations remain to

be solved: 1) Previous studies have been more focused on short sequential video

emotion analysis while overlooking long sequential video. However, the emotions

in short sequential videos only reflect instantaneous emotions, which may be

deliberately guided or hidden. In contrast, long sequential videos can reveal

authentic emotions; 2) Previous studies commonly utilize various signals such

as facial, speech, and even sensitive biological signals (e.g.,

electrocardiogram). However, due to the increasing demand for privacy,

developing Emotion AI without relying on sensitive signals is becoming

important. To address the aforementioned limitations, in this paper, we

construct a dataset for Emotion Analysis in Long-sequential and De-identity

videos called EALD by collecting and processing the sequences of athletes'

post-match interviews. In addition to providing annotations of the overall

emotional state of each video, we also provide the Non-Facial Body Language

(NFBL) annotations for each player. NFBL is an inner-driven emotional

expression and can serve as an identity-free clue to understanding the

emotional state. Moreover, we provide a simple but effective baseline for

further research. More precisely, we evaluate the Multimodal Large Language

Models (MLLMs) with de-identification signals (e.g., visual, speech, and NFBLs)

to perform emotion analysis. Our experimental results demonstrate that: 1)

MLLMs can achieve comparable, even better performance than the supervised

single-modal models, even in a zero-shot scenario; 2) NFBL is an important cue

in long sequential emotion analysis. EALD will be available on the open-source

platform.

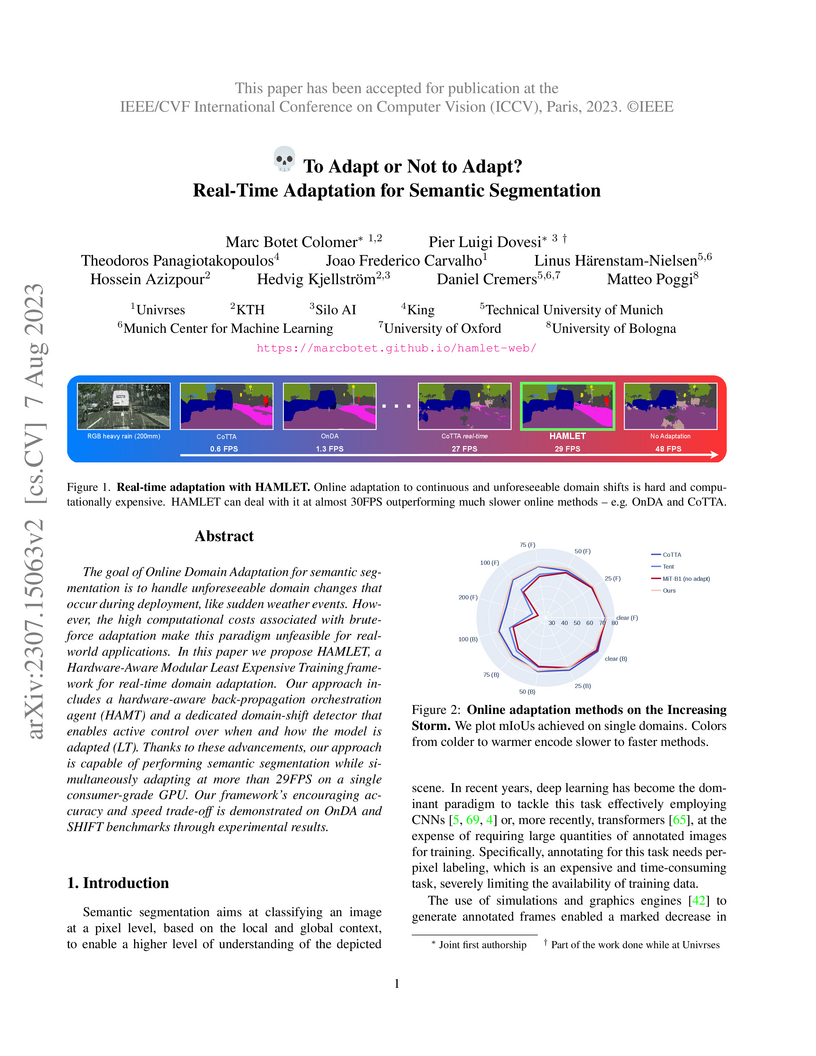

HAMLET is a framework for real-time online domain adaptation in semantic segmentation, enabling models to continuously adapt to unforeseen domain shifts while operating at over 29 FPS on consumer-grade hardware. It achieves this by selectively training only necessary network modules and modulating adaptation based on detected domain shifts, maintaining high accuracy across various challenging conditions.

University of Cambridge

University of Cambridge Université de Montréal

Université de Montréal Mila - Quebec AI InstituteUniversity of Edinburgh

Mila - Quebec AI InstituteUniversity of Edinburgh McGill University

McGill University KU LeuvenConcordia University

KU LeuvenConcordia University EPFL

EPFL Aalto UniversityUniversity of BolognaSaarland UniversityFondazione Bruno KesslerUniversity of TrentoSamsungLaval UniversityTelecom ParisAvignon universityIdiapZaionNational Institute of Informatics - TokyoSilo.AI

Aalto UniversityUniversity of BolognaSaarland UniversityFondazione Bruno KesslerUniversity of TrentoSamsungLaval UniversityTelecom ParisAvignon universityIdiapZaionNational Institute of Informatics - TokyoSilo.AISpeechBrain is an open-source Conversational AI toolkit based on PyTorch, focused particularly on speech processing tasks such as speech recognition, speech enhancement, speaker recognition, text-to-speech, and much more. It promotes transparency and replicability by releasing both the pre-trained models and the complete "recipes" of code and algorithms required for training them. This paper presents SpeechBrain 1.0, a significant milestone in the evolution of the toolkit, which now has over 200 recipes for speech, audio, and language processing tasks, and more than 100 models available on Hugging Face. SpeechBrain 1.0 introduces new technologies to support diverse learning modalities, Large Language Model (LLM) integration, and advanced decoding strategies, along with novel models, tasks, and modalities. It also includes a new benchmark repository, offering researchers a unified platform for evaluating models across diverse tasks.

Achieving state-of-the-art results in face verification systems typically hinges on the availability of labeled face training data, a resource that often proves challenging to acquire in substantial quantities. In this research endeavor, we proposed employing Siamese networks for face recognition, eliminating the need for labeled face images. We achieve this by strategically leveraging negative samples alongside nearest neighbor counterparts, thereby establishing positive and negative pairs through an unsupervised methodology. The architectural framework adopts a VGG encoder, trained as a double branch siamese network. Our primary aim is to circumvent the necessity for labeled face image data, thus proposing the generation of training pairs in an entirely unsupervised manner. Positive training data are selected within a dataset based on their highest cosine similarity scores with a designated anchor, while negative training data are culled in a parallel fashion, though drawn from an alternate dataset. During training, the proposed siamese network conducts binary classification via cross-entropy loss. Subsequently, during the testing phase, we directly extract face verification scores from the network's output layer. Experimental results reveal that the proposed unsupervised system delivers a performance on par with a similar but fully supervised baseline.

This paper presents the OPUS ecosystem with a focus on the development of open machine translation models and tools, and their integration into end-user applications, development platforms and professional workflows. We discuss our on-going mission of increasing language coverage and translation quality, and also describe on-going work on the development of modular translation models and speed-optimized compact solutions for real-time translation on regular desktops and small devices.

12 Mar 2025

As LLMs gain more popularity as chatbots and general assistants, methods have

been developed to enable LLMs to follow instructions and align with human

preferences. These methods have found success in the field, but their

effectiveness has not been demonstrated outside of high-resource languages. In

this work, we discuss our experiences in post-training an LLM for

instruction-following for English and Finnish. We use a multilingual LLM to

translate instruction and preference datasets from English to Finnish. We

perform instruction tuning and preference optimization in English and Finnish

and evaluate the instruction-following capabilities of the model in both

languages. Our results show that with a few hundred Finnish instruction samples

we can obtain competitive performance in Finnish instruction-following. We also

found that although preference optimization in English offers some

cross-lingual benefits, we obtain our best results by using preference data

from both languages. We release our model, datasets, and recipes under open

licenses at this https URL

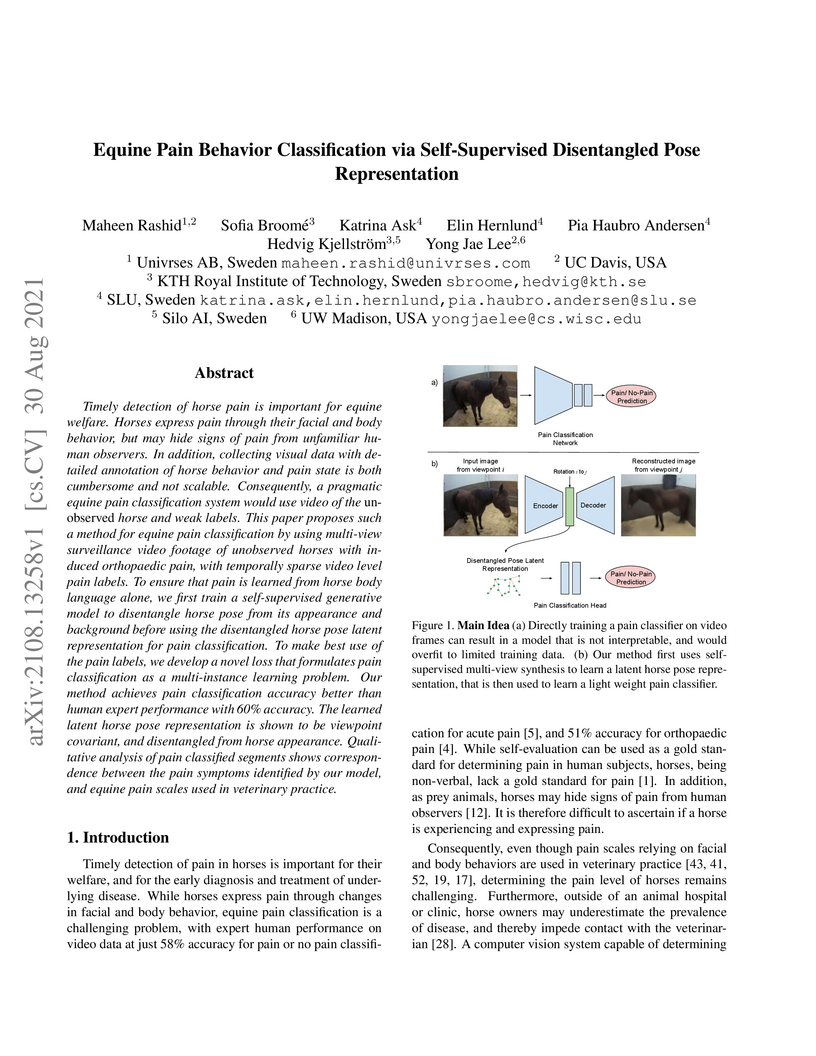

Timely detection of horse pain is important for equine welfare. Horses

express pain through their facial and body behavior, but may hide signs of pain

from unfamiliar human observers. In addition, collecting visual data with

detailed annotation of horse behavior and pain state is both cumbersome and not

scalable. Consequently, a pragmatic equine pain classification system would use

video of the unobserved horse and weak labels. This paper proposes such a

method for equine pain classification by using multi-view surveillance video

footage of unobserved horses with induced orthopaedic pain, with temporally

sparse video level pain labels. To ensure that pain is learned from horse body

language alone, we first train a self-supervised generative model to

disentangle horse pose from its appearance and background before using the

disentangled horse pose latent representation for pain classification. To make

best use of the pain labels, we develop a novel loss that formulates pain

classification as a multi-instance learning problem. Our method achieves pain

classification accuracy better than human expert performance with 60% accuracy.

The learned latent horse pose representation is shown to be viewpoint

covariant, and disentangled from horse appearance. Qualitative analysis of pain

classified segments shows correspondence between the pain symptoms identified

by our model, and equine pain scales used in veterinary practice.

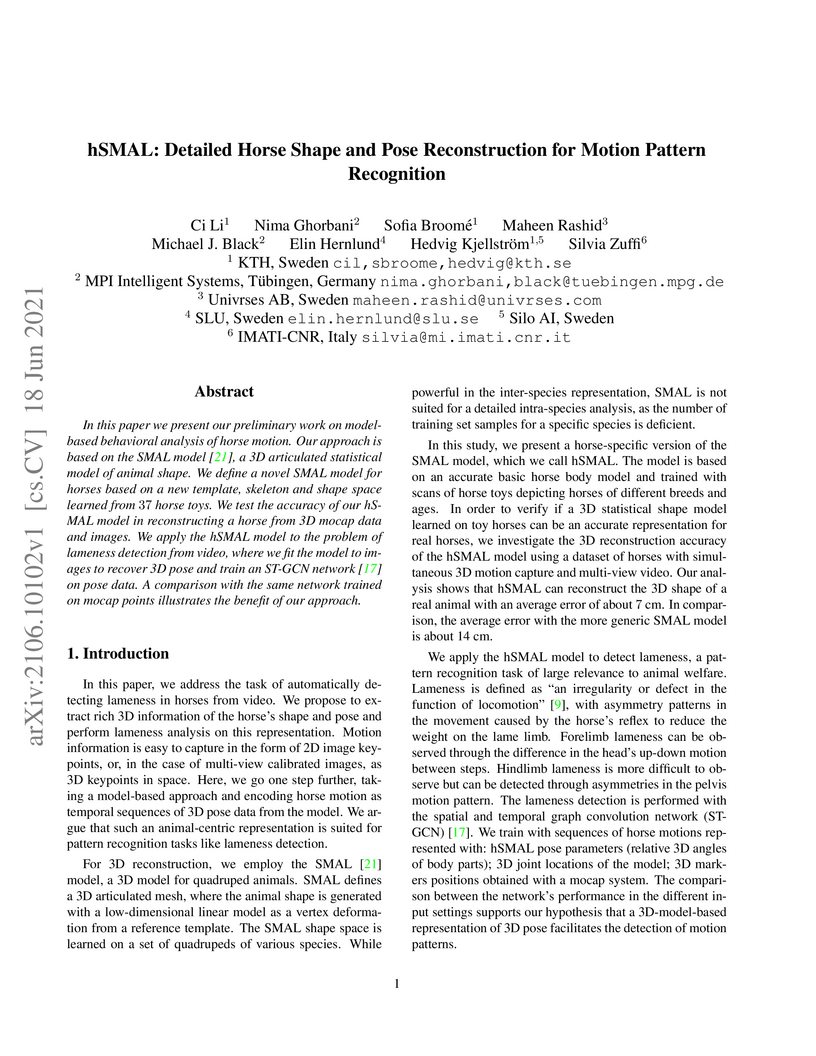

In this paper we present our preliminary work on model-based behavioral

analysis of horse motion. Our approach is based on the SMAL model, a 3D

articulated statistical model of animal shape. We define a novel SMAL model for

horses based on a new template, skeleton and shape space learned from 37

horse toys. We test the accuracy of our hSMAL model in reconstructing a horse

from 3D mocap data and images. We apply the hSMAL model to the problem of

lameness detection from video, where we fit the model to images to recover 3D

pose and train an ST-GCN network on pose data. A comparison with the same

network trained on mocap points illustrates the benefit of our approach.

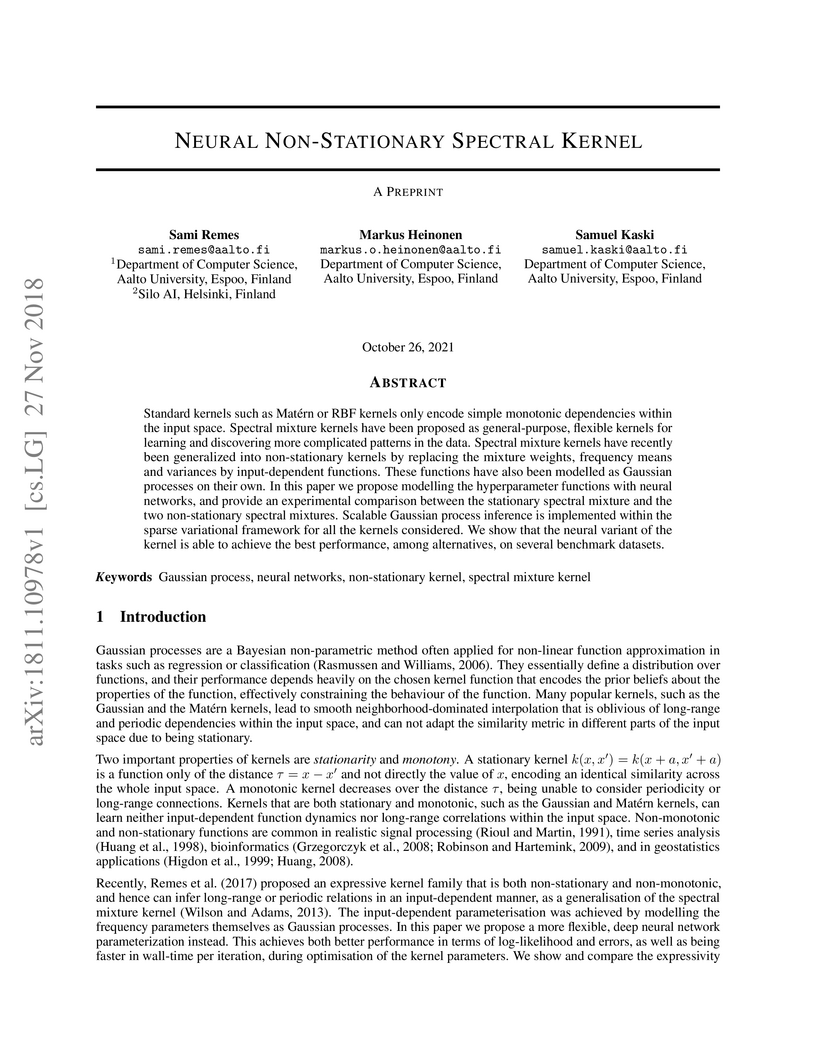

Standard kernels such as Mat\'ern or RBF kernels only encode simple monotonic

dependencies within the input space. Spectral mixture kernels have been

proposed as general-purpose, flexible kernels for learning and discovering more

complicated patterns in the data. Spectral mixture kernels have recently been

generalized into non-stationary kernels by replacing the mixture weights,

frequency means and variances by input-dependent functions. These functions

have also been modelled as Gaussian processes on their own. In this paper we

propose modelling the hyperparameter functions with neural networks, and

provide an experimental comparison between the stationary spectral mixture and

the two non-stationary spectral mixtures. Scalable Gaussian process inference

is implemented within the sparse variational framework for all the kernels

considered. We show that the neural variant of the kernel is able to achieve

the best performance, among alternatives, on several benchmark datasets.

The state-of-the-art face recognition systems are typically trained on a

single computer, utilizing extensive image datasets collected from various

number of users. However, these datasets often contain sensitive personal

information that users may hesitate to disclose. To address potential privacy

concerns, we explore the application of federated learning, both with and

without secure aggregators, in the context of both supervised and unsupervised

face recognition systems. Federated learning facilitates the training of a

shared model without necessitating the sharing of individual private data,

achieving this by training models on decentralized edge devices housing the

data. In our proposed system, each edge device independently trains its own

model, which is subsequently transmitted either to a secure aggregator or

directly to the central server. To introduce diverse data without the need for

data transmission, we employ generative adversarial networks to generate

imposter data at the edge. Following this, the secure aggregator or central

server combines these individual models to construct a global model, which is

then relayed back to the edge devices. Experimental findings based on the

CelebA datasets reveal that employing federated learning in both supervised and

unsupervised face recognition systems offers dual benefits. Firstly, it

safeguards privacy since the original data remains on the edge devices.

Secondly, the experimental results demonstrate that the aggregated model yields

nearly identical performance compared to the individual models, particularly

when the federated model does not utilize a secure aggregator. Hence, our

results shed light on the practical challenges associated with

privacy-preserving face image training, particularly in terms of the balance

between privacy and accuracy.

Most action recognition models today are highly parameterized, and evaluated on datasets with appearance-wise distinct classes. It has also been shown that 2D Convolutional Neural Networks (CNNs) tend to be biased toward texture rather than shape in still image recognition tasks, in contrast to humans. Taken together, this raises suspicion that large video models partly learn spurious spatial texture correlations rather than to track relevant shapes over time to infer generalizable semantics from their movement. A natural way to avoid parameter explosion when learning visual patterns over time is to make use of recurrence. Biological vision consists of abundant recurrent circuitry, and is superior to computer vision in terms of domain shift generalization. In this article, we empirically study whether the choice of low-level temporal modeling has consequences for texture bias and cross-domain robustness. In order to enable a light-weight and systematic assessment of the ability to capture temporal structure, not revealed from single frames, we provide the Temporal Shape (TS) dataset, as well as modified domains of Diving48 allowing for the investigation of spatial texture bias in video models. The combined results of our experiments indicate that sound physical inductive bias such as recurrence in temporal modeling may be advantageous when robustness to domain shift is important for the task.

In various verification systems, Restricted Boltzmann Machines (RBMs) have demonstrated their efficacy in both front-end and back-end processes. In this work, we propose the use of RBMs to the image clustering tasks. RBMs are trained to convert images into image embeddings. We employ the conventional bottom-up Agglomerative Hierarchical Clustering (AHC) technique. To address the challenge of limited test face image data, we introduce Agglomerative Hierarchical Clustering based Method for Image Clustering using Restricted Boltzmann Machine (AHC-RBM) with two major steps. Initially, a universal RBM model is trained using all available training dataset. Subsequently, we train an adapted RBM model using the data from each test image. Finally, RBM vectors which is the embedding vector is generated by concatenating the visible-to-hidden weight matrices of these adapted models, and the bias vectors. These vectors effectively preserve class-specific information and are utilized in image clustering tasks. Our experimental results, conducted on two benchmark image datasets (MS-Celeb-1M and DeepFashion), demonstrate that our proposed approach surpasses well-known clustering algorithms such as k-means, spectral clustering, and approximate Rank-order.

08 Jun 2025

University of Toronto

University of Toronto University College LondonTU DelftHong Kong Baptist UniversityUniversity of TwenteRMIT UniversityUniversity of TsukubaClemson UniversityTU EindhovenUniversity of AntwerpGeorgetown UniversityUniversity of PaduaUniversity of SalzburgBauhaus-Universität WeimarUniversität Duisburg-EssenTH KölnSilo.AI

University College LondonTU DelftHong Kong Baptist UniversityUniversity of TwenteRMIT UniversityUniversity of TsukubaClemson UniversityTU EindhovenUniversity of AntwerpGeorgetown UniversityUniversity of PaduaUniversity of SalzburgBauhaus-Universität WeimarUniversität Duisburg-EssenTH KölnSilo.AIDuring the workshop, we deeply discussed what CONversational Information

ACcess (CONIAC) is and its unique features, proposing a world model abstracting

it, and defined the Conversational Agents Framework for Evaluation (CAFE) for

the evaluation of CONIAC systems, consisting of six major components: 1) goals

of the system's stakeholders, 2) user tasks to be studied in the evaluation, 3)

aspects of the users carrying out the tasks, 4) evaluation criteria to be

considered, 5) evaluation methodology to be applied, and 6) measures for the

quantitative criteria chosen.

NLP in the age of monolithic large language models is approaching its limits

in terms of size and information that can be handled. The trend goes to

modularization, a necessary step into the direction of designing smaller

sub-networks and components with specialized functionality. In this paper, we

present the MAMMOTH toolkit: a framework designed for training massively

multilingual modular machine translation systems at scale, initially derived

from OpenNMT-py and then adapted to ensure efficient training across

computation clusters. We showcase its efficiency across clusters of A100 and

V100 NVIDIA GPUs, and discuss our design philosophy and plans for future

information. The toolkit is publicly available online.

Grounding has been argued to be a crucial component towards the development of more complete and truly semantically competent artificial intelligence systems. Literature has divided into two camps: While some argue that grounding allows for qualitatively different generalizations, others believe it can be compensated by mono-modal data quantity. Limited empirical evidence has emerged for or against either position, which we argue is due to the methodological challenges that come with studying grounding and its effects on NLP systems.

In this paper, we establish a methodological framework for studying what the effects are - if any - of providing models with richer input sources than text-only. The crux of it lies in the construction of comparable samples of populations of models trained on different input modalities, so that we can tease apart the qualitative effects of different input sources from quantifiable model performances. Experiments using this framework reveal qualitative differences in model behavior between cross-modally grounded, cross-lingually grounded, and ungrounded models, which we measure both at a global dataset level as well as for specific word representations, depending on how concrete their semantics is.

The pretraining of state-of-the-art large language models now requires trillions of words of text, which is orders of magnitude more than available for the vast majority of languages. While including text in more than one language is an obvious way to acquire more pretraining data, multilinguality is often seen as a curse, and most model training efforts continue to focus near-exclusively on individual large languages. We believe that multilinguality can be a blessing: when the lack of training data is a constraint for effectively training larger models for a target language, augmenting the dataset with other languages can offer a way to improve over the capabilities of monolingual models for that language. In this study, we introduce Poro 34B, a 34 billion parameter model trained for 1 trillion tokens of Finnish, English, and programming languages, and demonstrate that a multilingual training approach can produce a model that substantially advances over the capabilities of existing models for Finnish and excels in translation, while also achieving competitive performance in its class for English and programming languages. We release the model parameters, scripts, and data under open licenses at this https URL.

There are no more papers matching your filters at the moment.